Hierarchical Patch Diffusion Models for High-Resolution Video Generation (CVPR 2024)

We improve patch diffusion models (PDMs) in two principled ways. First, to enforce consistency between patches, we develop deep context fusion, an architectural technique that propagates context information from low-scale to high-scale patches in a hierarchical manner. In this work, we developed the hierarchical patch diffusion model for high-resolution video synthesis, which trains efficiently end to end, directly in pixel space, and is amenable to swift fine-tuning from a base low-resolution diffusion model.
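
To make the idea of passing context from low-scale to high-scale patches more concrete, here is a minimal sketch of the geometric bookkeeping only. It is not the authors' implementation and contains no network: it samples nested patch boxes and computes which part of a coarser patch lines up with the next, finer patch. The names `Box`, `sample_child`, `sample_hierarchy`, and `context_window`, the scale factor of 2, and the 64-pixel patch size are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in normalized image coordinates (0 to 1)."""
    x0: float
    y0: float
    x1: float
    y1: float

    @property
    def width(self) -> float:
        return self.x1 - self.x0

    @property
    def height(self) -> float:
        return self.y1 - self.y0


def sample_child(parent: Box, scale: float, rng: random.Random) -> Box:
    """Sample a box `scale` times smaller per side, placed inside `parent`."""
    w, h = parent.width / scale, parent.height / scale
    x0 = parent.x0 + rng.random() * (parent.width - w)
    y0 = parent.y0 + rng.random() * (parent.height - h)
    return Box(x0, y0, x0 + w, y0 + h)


def sample_hierarchy(num_levels: int, scale: float, rng: random.Random) -> list[Box]:
    """Level 0 covers the whole frame; each later level nests in the previous one."""
    boxes = [Box(0.0, 0.0, 1.0, 1.0)]
    for _ in range(num_levels - 1):
        boxes.append(sample_child(boxes[-1], scale, rng))
    return boxes


def context_window(parent: Box, child: Box, patch_px: int) -> tuple[float, float, float, float]:
    """Region of the parent's patch_px x patch_px grid that the child covers.

    Returned as (left, top, right, bottom) in parent-patch pixel units.
    """
    sx = patch_px / parent.width
    sy = patch_px / parent.height
    return (
        (child.x0 - parent.x0) * sx,
        (child.y0 - parent.y0) * sy,
        (child.x1 - parent.x0) * sx,
        (child.y1 - parent.y0) * sy,
    )


# Hypothetical settings: 3 pyramid levels, each patch half the side of its parent.
boxes = sample_hierarchy(num_levels=3, scale=2.0, rng=random.Random(0))
for level, (parent, child) in enumerate(zip(boxes, boxes[1:]), start=1):
    print(level, context_window(parent, child, patch_px=64))
```

In a real model, the window returned by `context_window` would be the region of the coarser level's output to crop and upsample so that it aligns with the finer patch. How that context is then fused into the network is not covered by this sketch.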

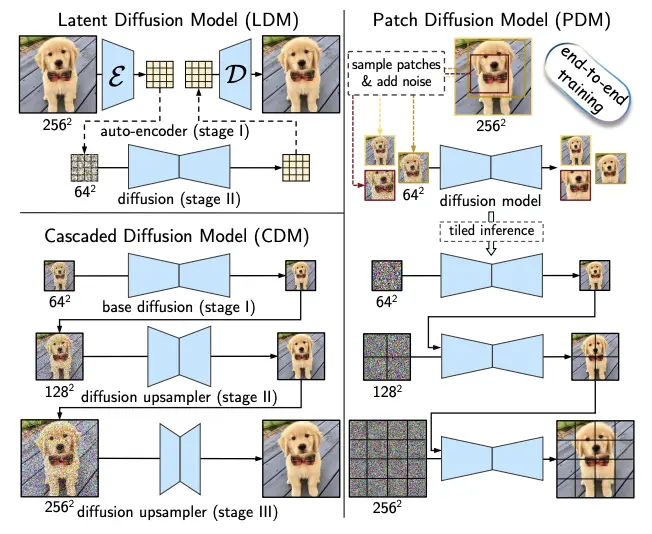

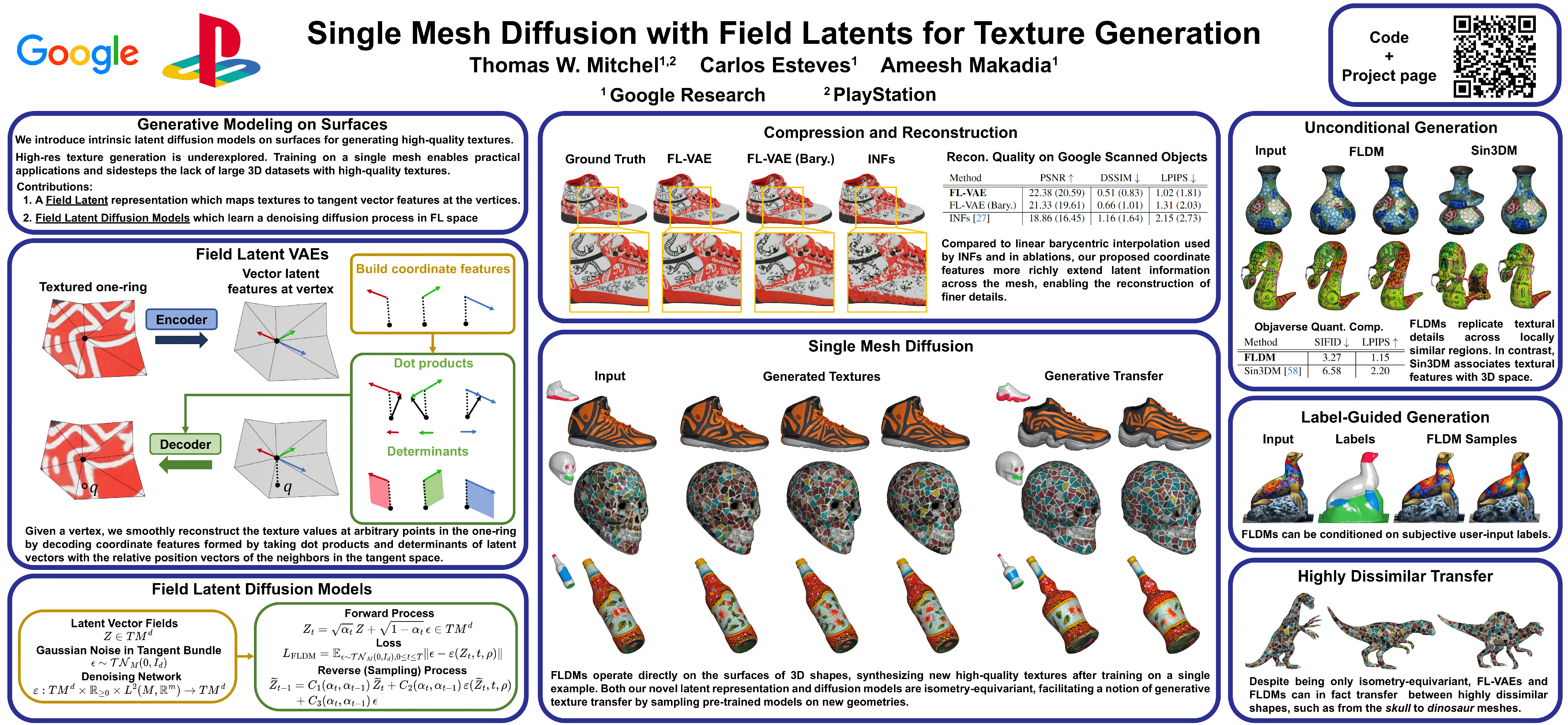

Diffusion models have demonstrated remarkable performance in image and video synthesis. However, scaling them to high-resolution inputs is challenging. In this work, we study patch diffusion models (PDMs), a diffusion paradigm that models the distribution of patches rather than whole inputs, keeping up to ≈ 0.7% of the original pixels. This makes training very efficient and unlocks end-to-end optimization on high-resolution videos. We develop hierarchical patch diffusion, which never operates on full-resolution inputs but instead optimizes the lower stages of the hierarchy to produce spatially aligned context information for the later pyramid levels, enforcing global consistency between patches.
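
The efficiency claim comes down to simple arithmetic: a patch covers only a small fraction of the full input's pixels. The sketch below computes that fraction for arbitrary shapes. The helper name `patch_pixel_fraction` and the patch size used are hypothetical, chosen only to show the calculation; they are not meant to reproduce the ≈ 0.7% figure quoted above.

```python
from math import prod


def patch_pixel_fraction(full_shape, patch_shape):
    """Fraction of the full input's pixels covered by a single patch."""
    if len(full_shape) != len(patch_shape):
        raise ValueError("shapes must have the same number of dimensions")
    if any(p > f for p, f in zip(patch_shape, full_shape)):
        raise ValueError("patch cannot exceed the full input")
    return prod(patch_shape) / prod(full_shape)


# Illustrative sizes only (frames, height, width); the patch size is an assumption.
full_video = (64, 288, 512)
patch = (16, 36, 64)

fraction = patch_pixel_fraction(full_video, patch)
print(f"one patch holds {fraction:.4%} of the pixels")
```

With these example sizes the patch is 4 times shorter in time and 8 times smaller along each spatial side, so it holds 1/256 of the pixels.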

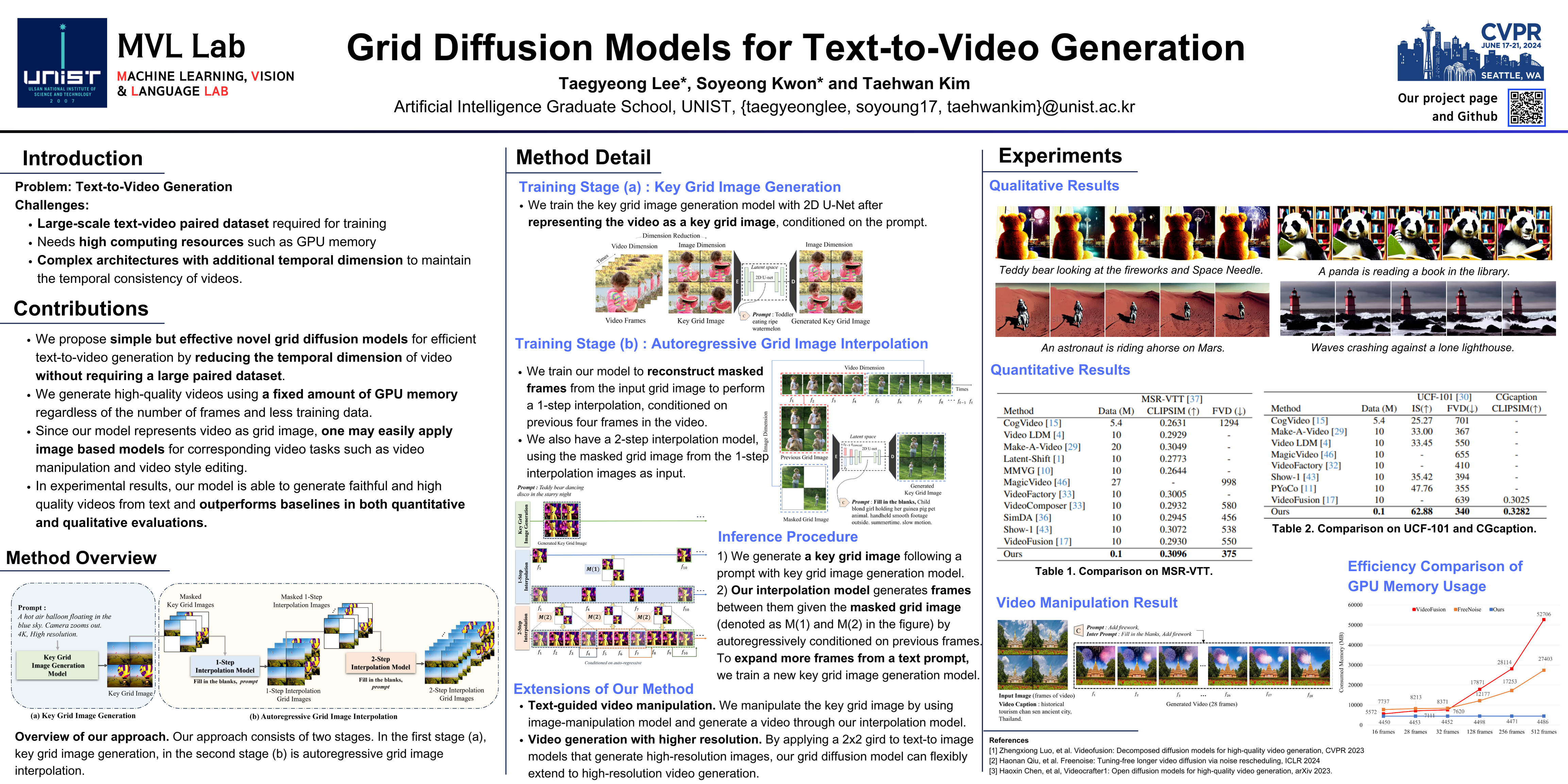

Hierarchical Patch Diffusion Models for High-Resolution Video Synthesis [CVPR 2024] (Snap Research, HPDM).

Figure 5. HPDM-T2V is able to efficiently fine-tune from the standard low-resolution generator to high-resolution 64 × 288 × 512 text-to-video generation when fine-tuned from a low-resolution 36 × 64 diffusion model for just 15,000 training steps.

A separate paper proposes a novel diffusion method, dubbed temporally consistent patch diffusion models (TC-DPM), for infrared-to-visible video translation. It extends the patch diffusion model and proposes semantic-guided denoising, leveraging the strong representations of foundation models.
