Grokking Grokking

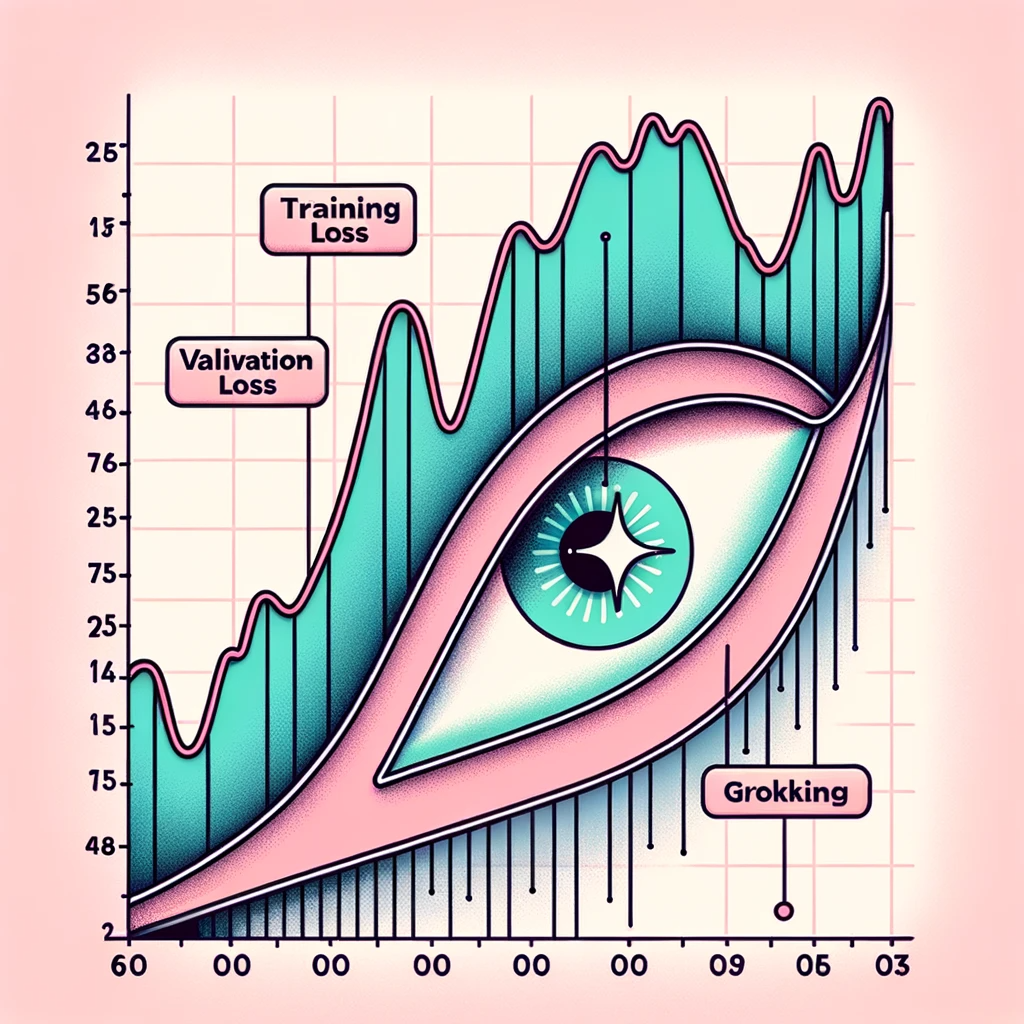

In ML research, "grokking" is not a synonym for "generalization". Rather, it names a sometimes-observed training phenomenon, delayed generalization, in which training and held-out performance do not improve in tandem: held-out performance rises abruptly, and only later. More specifically, test-set loss decreases sharply only after the model's training-set loss has converged. This challenges the conventional understanding of training dynamics in deep neural networks.

Grokking is a sudden phase transition in neural network training where a model shifts from memorizing its training data to genuinely generalizing, that is, capturing the underlying pattern well enough to solve examples it has never seen. The term refers to a surprising form of delayed generalization: a model fits the training data perfectly (near 100% training accuracy) yet remains at chance level on the test set for an extended period. Then, after this period of apparent stagnation, its test performance improves rapidly and significantly. The conversation then shifted to Tian's recent research, focusing on the phenomenon in large AI models known as "grokking." To "grok," a term coined by science fiction writer Robert A. Heinlein, means to understand something so deeply and intuitively that it becomes part of you.
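The definition above (near-100% training accuracy while test accuracy stays at chance for an extended period) can be made concrete by measuring the gap between the step at which each curve first crosses a threshold. The following is a minimal sketch, not a standard metric: the helper names, the 0.99 threshold, and the synthetic accuracy curves are all assumptions chosen for illustration.

```python
from typing import Optional, Sequence


def first_step_at_or_above(values: Sequence[float], threshold: float) -> Optional[int]:
    """Return the index of the first value >= threshold, or None if never reached."""
    for step, value in enumerate(values):
        if value >= threshold:
            return step
    return None


def generalization_delay(
    train_acc: Sequence[float],
    test_acc: Sequence[float],
    threshold: float = 0.99,  # assumed cutoff for "fits the data"
) -> Optional[int]:
    """Steps between train and test accuracy first reaching the threshold.

    Returns None if either curve never reaches the threshold.
    """
    train_step = first_step_at_or_above(train_acc, threshold)
    test_step = first_step_at_or_above(test_acc, threshold)
    if train_step is None or test_step is None:
        return None
    return test_step - train_step


# Synthetic curves (made up for illustration): training accuracy saturates
# at step 2, while test accuracy stays near chance and only jumps at step 7.
train_acc = [0.2, 0.6, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0]
test_acc = [0.1, 0.1, 0.1, 0.1, 0.1, 0.2, 0.9, 1.0]

print(generalization_delay(train_acc, test_acc))  # prints 5
```

A large positive delay is the signature described above. A delay near zero means training and test accuracy improved in tandem, which is ordinary generalization rather than grokking.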

Grokking exposes the nuanced interplay of training variables, encouraging us to look beyond initial impressions. Through a physics-flavored lens, it suggests that a model's abrupt shift from memorization-centric to rule-following behavior is less mystical than it first appears, and more akin to the abrupt transitions seen in natural systems. Grokking has also been described as models initially overfitting their training data but later experiencing a sudden improvement in test performance after prolonged training. Recent work by Liu et al. frames grokking within a broader spectrum of learning dynamics, identifying four distinct phases: confusion, memorization, grokking, and comprehension.
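One way to read the four phases is as labels for the outcome of a single training run, based on whether and when the training and test curves reach a target accuracy. The sketch below follows that reading. It is an illustrative assumption rather than the criteria used by Liu et al.: the function names, the 0.9 threshold, and the `max_delay` cutoff are hypothetical.

```python
from typing import Optional, Sequence


def first_step_at_or_above(values: Sequence[float], threshold: float) -> Optional[int]:
    """Return the index of the first value >= threshold, or None if never reached."""
    for step, value in enumerate(values):
        if value >= threshold:
            return step
    return None


def classify_run(
    train_acc: Sequence[float],
    test_acc: Sequence[float],
    threshold: float = 0.9,  # assumed accuracy target
    max_delay: int = 2,      # assumed cutoff between "prompt" and "delayed"
) -> str:
    """Label a run with one of the four phase names (illustrative criteria)."""
    train_step = first_step_at_or_above(train_acc, threshold)
    test_step = first_step_at_or_above(test_acc, threshold)
    if train_step is None:
        return "confusion"      # never fits even the training data
    if test_step is None:
        return "memorization"   # fits training data, never generalizes
    if test_step - train_step > max_delay:
        return "grokking"       # generalizes, but long after fitting
    return "comprehension"      # generalizes promptly


# Synthetic runs, made up for illustration.
print(classify_run([0.1, 0.2], [0.1, 0.1]))
print(classify_run([0.5, 1.0, 1.0], [0.1, 0.1, 0.1]))
print(classify_run([1.0] * 6, [0.1] * 5 + [1.0]))
print(classify_run([0.5, 1.0], [0.4, 0.95]))
```

Under these assumed thresholds the four runs are labeled confusion, memorization, grokking, and comprehension, in that order. In practice both cutoffs would need to be chosen relative to the length of training.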

