GPU Kernel Performance Bottlenecks: How to Analyze and Optimize With

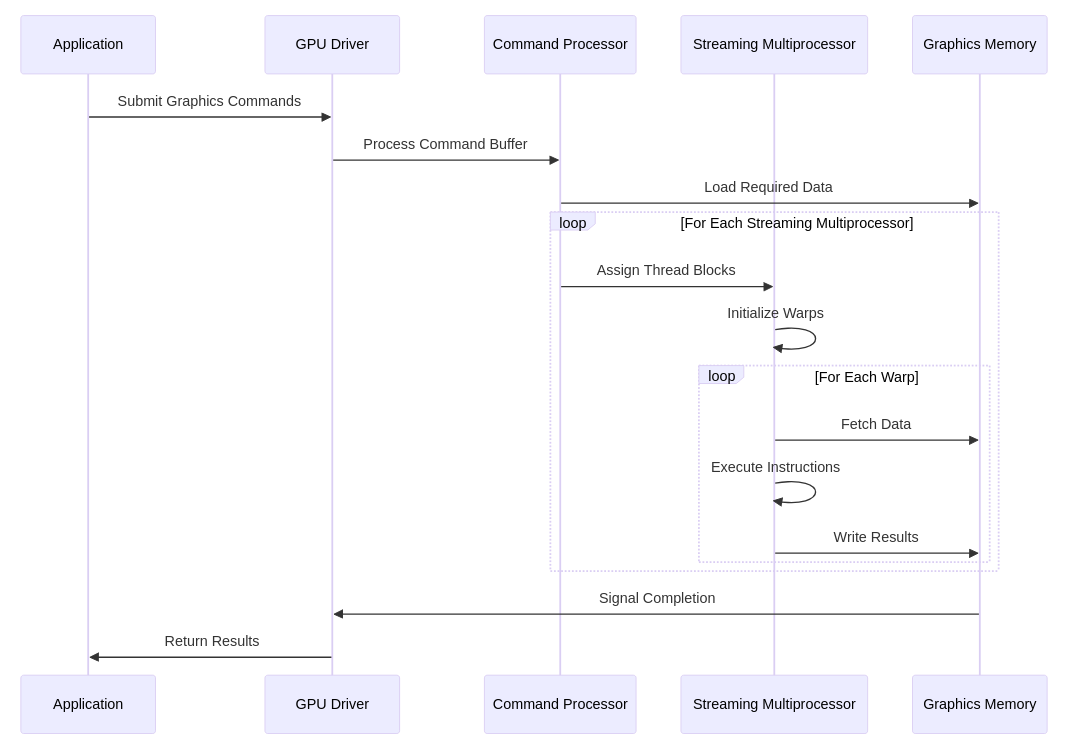

Profiling and optimizing GPU code involves different considerations and relies on specialized tools compared with CPU code profiling. Starting from an input kernel, KernelAgent repeatedly profiles the kernel, diagnoses performance bottlenecks, prescribes architecture-aware optimizations, synthesizes optimization knowledge, explores alternative optimization paths in parallel, and measures each candidate.
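The "measure each candidate" step of such a loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not KernelAgent's implementation or API: `time_candidate` and `select_fastest` are hypothetical helpers, and plain CPU functions stand in for GPU kernels so the sketch runs anywhere. Each candidate is first checked against a reference result, because an "optimization" that changes the output must be rejected, and only then timed.

```python
import time
from typing import Callable, Dict, Tuple


def time_candidate(fn: Callable[[], object], repeats: int = 5) -> float:
    """Best-of-N wall-clock time in seconds (the minimum is usually the least noisy)."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn()
        best = min(best, time.perf_counter() - start)
    return best


def select_fastest(
    candidates: Dict[str, Callable[[], object]],
    reference_output: object,
    repeats: int = 5,
) -> Tuple[str, Dict[str, float]]:
    """Check each candidate against a reference result, time the correct ones,
    and return the name of the fastest together with all measured timings."""
    timings: Dict[str, float] = {}
    for name, fn in candidates.items():
        if fn() != reference_output:
            continue  # a candidate that changes the result is discarded
        timings[name] = time_candidate(fn, repeats)
    if not timings:
        raise ValueError("no candidate reproduced the reference output")
    fastest = min(timings, key=timings.get)
    return fastest, timings


# Hypothetical CPU stand-ins for kernel variants.
data = list(range(100_000))


def naive_sum() -> int:
    total = 0
    for x in data:
        total += x
    return total


def builtin_sum() -> int:
    return sum(data)


def broken_sum() -> int:
    return sum(data[:-1])  # deliberately wrong: drops the last element


if __name__ == "__main__":
    best, timings = select_fastest(
        {"naive": naive_sum, "builtin": builtin_sum, "broken": broken_sum},
        reference_output=naive_sum(),
    )
    print(best, timings)
```

On a real GPU, kernel launches are asynchronous, so a timer like this would need a device synchronization (or GPU-side events) before reading the clock; otherwise it measures only the launch.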

Part 3 of our GPU profiling series guides beginners through practical steps to identify and optimize kernel bottlenecks using ROCm tools.

Here we present Starlight, an open-source, highly flexible tool for enhancing GPU kernel analysis and optimization. Starlight autonomously describes roofline models, examines performance metrics, and correlates these insights with GPU architectural bottlenecks.

GPU profiling provides insight into a GPU's behavior so that performance bottlenecks can be identified and fixed. Profiling and optimization are repeated until the desired performance is achieved.

This guide demonstrates how to use the tools available in the TensorFlow Profiler to track the performance of your TensorFlow models. You will learn how your model performs on the host (CPU), on the device (GPU), or on a combination of the host and one or more devices.
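Starlight's internals are not described here, but the roofline model it reasons about is standard: a kernel's attainable throughput is the smaller of the chip's peak compute rate and its arithmetic intensity (FLOPs per byte of memory traffic) times peak memory bandwidth. The sketch below assumes a hypothetical GPU with 10 TFLOP/s peak throughput and 500 GB/s bandwidth; these figures are illustrative, not a specific device.

```python
from dataclasses import dataclass


@dataclass
class Roofline:
    peak_flops: float      # peak compute throughput, FLOP/s
    peak_bandwidth: float  # peak memory bandwidth, bytes/s

    @property
    def ridge_point(self) -> float:
        """Arithmetic intensity (FLOP/byte) at which the memory and compute roofs meet."""
        return self.peak_flops / self.peak_bandwidth

    def attainable(self, intensity: float) -> float:
        """Roofline bound on throughput: min(peak compute, intensity * bandwidth)."""
        return min(self.peak_flops, intensity * self.peak_bandwidth)

    def classify(self, intensity: float) -> str:
        """Kernels left of the ridge point are limited by memory bandwidth."""
        return "memory-bound" if intensity < self.ridge_point else "compute-bound"


def arithmetic_intensity(flops: float, bytes_moved: float) -> float:
    """FLOPs performed per byte moved between DRAM and the chip."""
    return flops / bytes_moved


if __name__ == "__main__":
    gpu = Roofline(peak_flops=10e12, peak_bandwidth=500e9)  # hypothetical device

    # Vector add c = a + b on float32: 1 FLOP per element and 12 bytes moved
    # (two 4-byte loads and one 4-byte store).
    n = 1 << 20
    vadd_ai = arithmetic_intensity(flops=n, bytes_moved=12 * n)

    # Square float32 matmul: 2*N^3 FLOPs; with ideal reuse each of the three
    # N x N matrices crosses DRAM once (12*N^2 bytes), so intensity is N/6.
    N = 4096
    gemm_ai = arithmetic_intensity(flops=2 * N**3, bytes_moved=12 * N**2)

    for name, ai in [("vector add", vadd_ai), ("matmul", gemm_ai)]:
        print(f"{name}: {ai:.3f} FLOP/byte, {gpu.classify(ai)}, "
              f"bound {gpu.attainable(ai) / 1e9:.1f} GFLOP/s")
```

With these assumed numbers the ridge point is 20 FLOP/byte: the vector add (about 0.083 FLOP/byte) sits far to the left and can only be sped up by moving fewer bytes, while the matmul (about 683 FLOP/byte) is bounded by compute.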

GPU optimization is iterative: fixing one bottleneck often reveals the next. The process continues until the kernel's performance is within an acceptable distance of its theoretical ceiling, or until the dominant bottleneck shifts to a different kernel or to a system-level constraint. Profiling provides detailed insights that guide your optimization efforts, so you can focus on the areas that yield the greatest performance improvements for inference. In an age of constrained compute, learn how to optimize GPU efficiency by understanding the architecture and its bottlenecks, with fixes ranging from simple PyTorch commands to custom kernels.
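That stopping rule can be made concrete with a small decision helper. This is a hypothetical sketch: `KernelProfile`, `next_step`, and the 80% target efficiency are illustrative choices, and the per-kernel numbers below are made up rather than measured. Each round, the kernel with the largest share of runtime is compared with its roofline bound; once it is close enough to that ceiling, further work on it has diminishing returns.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class KernelProfile:
    name: str
    time_ms: float         # total time spent in this kernel per iteration
    achieved_flops: float  # measured throughput, FLOP/s
    roofline_bound: float  # attainable throughput from the roofline model, FLOP/s

    @property
    def efficiency(self) -> float:
        """Fraction of the theoretical ceiling this kernel reaches."""
        return self.achieved_flops / self.roofline_bound


def next_step(profiles: List[KernelProfile],
              target_efficiency: float = 0.8) -> Tuple[str, str]:
    """Return ("optimize", name) for the kernel that dominates runtime, or
    ("done", name) if that kernel is already within the target of its ceiling."""
    dominant = max(profiles, key=lambda p: p.time_ms)
    if dominant.efficiency >= target_efficiency:
        return ("done", dominant.name)
    return ("optimize", dominant.name)


if __name__ == "__main__":
    # Round 1 (hypothetical numbers): the GEMM dominates at 35% of its bound.
    round1 = [KernelProfile("gemm", 6.0, 3.5e12, 10e12),
              KernelProfile("softmax", 2.0, 0.2e12, 0.5e12)]
    print(next_step(round1))  # ('optimize', 'gemm')

    # Round 2: after tuning, the GEMM is faster and near its bound,
    # so softmax becomes the dominant bottleneck.
    round2 = [KernelProfile("gemm", 2.5, 8.5e12, 10e12),
              KernelProfile("softmax", 3.0, 0.2e12, 0.5e12)]
    print(next_step(round2))  # ('optimize', 'softmax')
```

In the second round the dominant bottleneck moves from the GEMM to softmax, which is the "fixing one bottleneck often reveals the next" pattern in miniature.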


