GPU CUDA Calculation Optimization Rokken

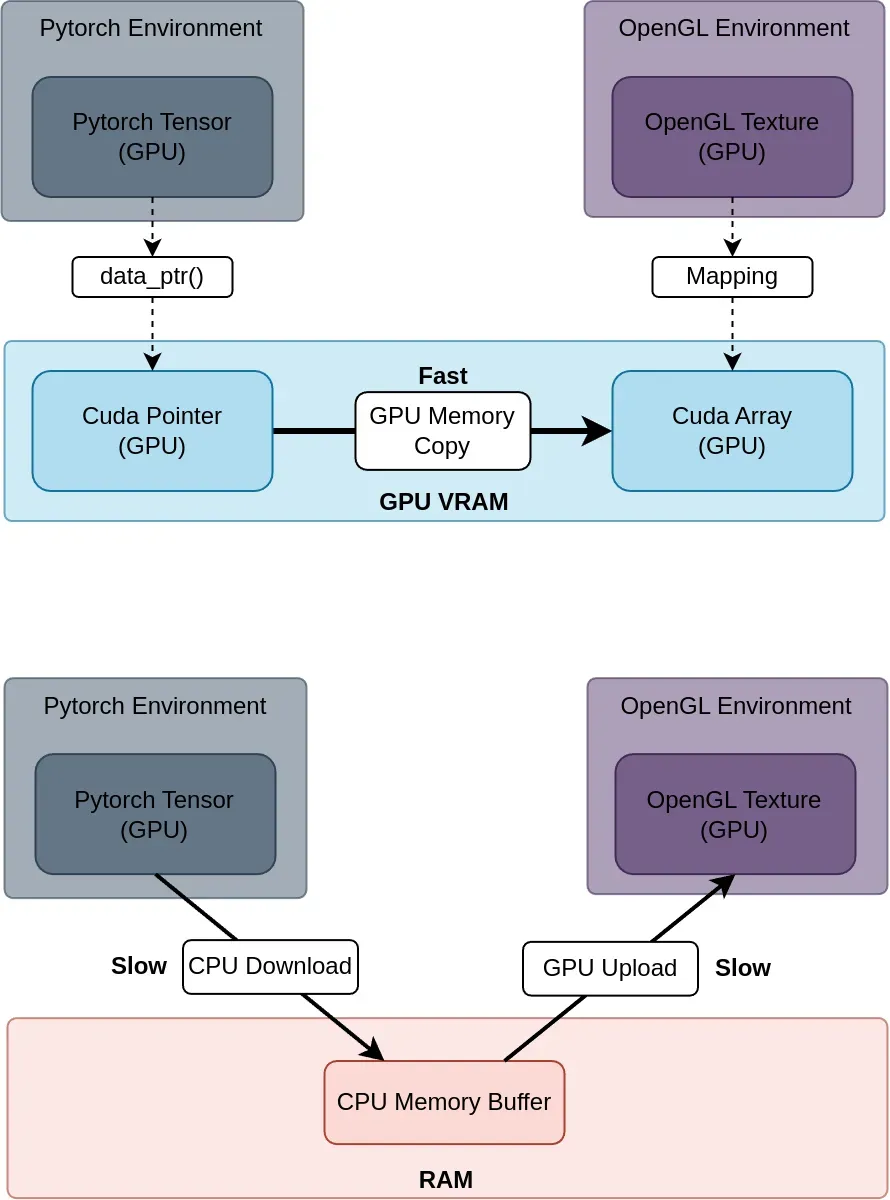

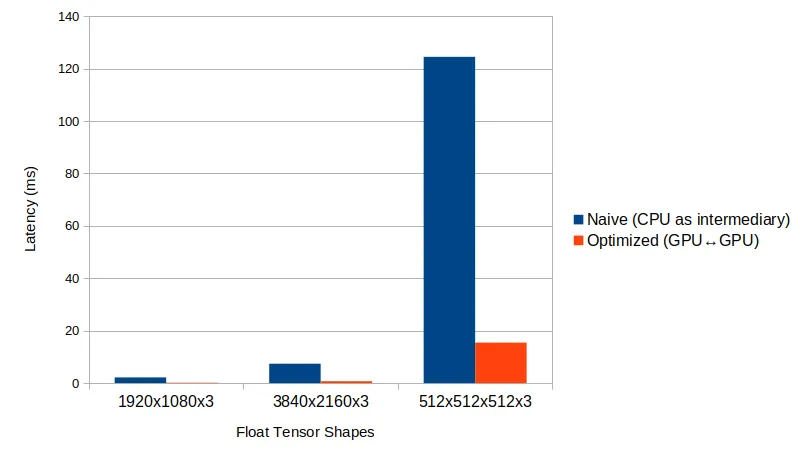

We've developed low-latency transfer methods optimized for leading AI frameworks such as PyTorch and TensorFlow, alongside popular graphics libraries like OpenGL and Vulkan. Our focus is on delivering a fast, responsive user experience, even under heavy computational or I/O loads. This guide covers optimization strategies across memory usage, parallel execution, and instruction-level efficiency. It helps developers identify performance bottlenecks, leverage GPU architecture effectively, and apply profiling tools to fine-tune applications.
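The memory-usage side of this is easiest to see with global-memory coalescing. The sketch below is a simplified host-side model written in Python, not CUDA code. It assumes 32-thread warps, 4-byte elements, and global memory fetched in 32-byte sectors, and it ignores caches. It counts how many sectors one warp touches when thread `t` reads element `base_index + t * stride`. The function names are hypothetical, chosen for this illustration.

```python
# Simplified model of global-memory coalescing.
# Assumptions: 32-thread warps, 32-byte memory sectors, no caching effects.
WARP_SIZE = 32
SECTOR_BYTES = 32


def sectors_per_warp(stride: int, elem_bytes: int = 4, base_index: int = 0) -> int:
    """Count distinct sectors touched when thread t reads element base_index + t * stride."""
    addresses = [(base_index + t * stride) * elem_bytes for t in range(WARP_SIZE)]
    return len({addr // SECTOR_BYTES for addr in addresses})


def efficiency(stride: int, elem_bytes: int = 4) -> float:
    """Fraction of fetched bytes that the warp actually uses."""
    useful = WARP_SIZE * elem_bytes
    fetched = sectors_per_warp(stride, elem_bytes) * SECTOR_BYTES
    return useful / fetched


if __name__ == "__main__":
    for s in (1, 2, 8, 32):
        print(f"stride={s:2d} sectors={sectors_per_warp(s):2d} efficiency={efficiency(s):.3f}")
    # A one-element misalignment spills a unit-stride warp into an extra sector.
    print("misaligned unit stride:", sectors_per_warp(1, base_index=1), "sectors")
```

In this model, unit-stride access uses every fetched byte, while a stride of 8 or more fetches a whole sector per thread and uses only an eighth of it. That gap is why contiguous, aligned access patterns are usually the first thing to check.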

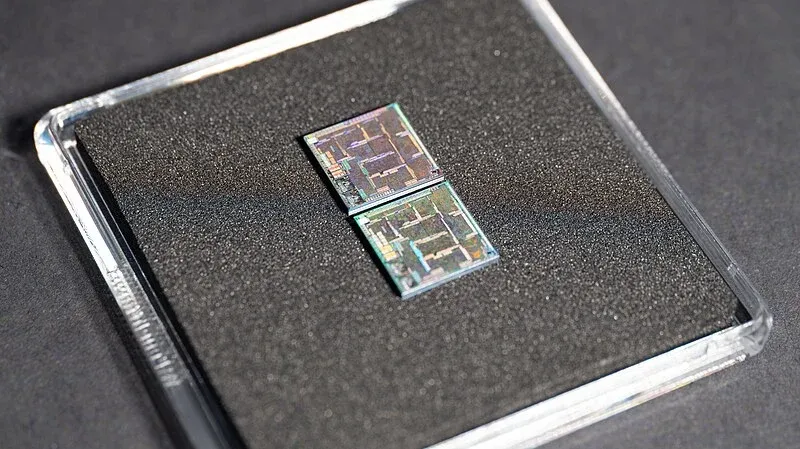

Optimizations on the intermediate representation produced by GPU compilers, and architectural techniques for improving performance, are outside the scope of this work. In this article we use CUDA terminology, but most of the optimizations also apply to OpenCL and to non-NVIDIA hardware.

Mark Saroufim, an engineer on the PyTorch team at Meta, presents a re-recorded talk on a CUDA performance checklist. The talk is a direct sequel to Lecture 1, which focused on why GPU performance matters, and it covers common tricks for improving CUDA and PyTorch performance.

Learn a step-by-step CUDA performance tuning workflow to optimize GPU kernels, improve memory usage, and boost application speed.

CUDA compiler (nvcc) and optimization: nvcc, the CUDA compiler, plays a crucial role in translating CUDA code into machine-executable instructions for NVIDIA GPUs. It incorporates sophisticated optimization techniques to maximize performance, such as instruction scheduling, register allocation, and memory access optimization.
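A common early step in a tuning workflow is deciding whether a kernel is limited by memory bandwidth or by compute. Below is a minimal roofline-style sketch. The peak figures of 20 TFLOP/s and 1.5 TB/s are hypothetical placeholders, not the specs of any particular GPU. The traffic counts are idealized, assuming each array crosses DRAM exactly once. The helper names are invented for this example.

```python
# Roofline-style check: memory-bound or compute-bound?
# Peak numbers are hypothetical placeholders, not specs of a real GPU.
PEAK_FLOPS = 20e12        # FLOP/s (hypothetical)
PEAK_BANDWIDTH = 1.5e12   # bytes/s (hypothetical)


def arithmetic_intensity(flops: float, bytes_moved: float) -> float:
    """FLOPs performed per byte moved to or from DRAM."""
    return flops / bytes_moved


def attainable_flops(intensity: float, peak_flops: float = PEAK_FLOPS,
                     peak_bw: float = PEAK_BANDWIDTH) -> float:
    """Roofline bound: capped by peak compute or by bandwidth * intensity."""
    return min(peak_flops, intensity * peak_bw)


def is_memory_bound(intensity: float, peak_flops: float = PEAK_FLOPS,
                    peak_bw: float = PEAK_BANDWIDTH) -> bool:
    """True when intensity lies left of the ridge point peak_flops / peak_bw."""
    return intensity < peak_flops / peak_bw


# float32 vector add c[i] = a[i] + b[i]: 1 FLOP per element, reads a and b, writes c.
n = 1 << 20
vec_add_ai = arithmetic_intensity(flops=n, bytes_moved=3 * n * 4)

# float32 N x N matmul: 2*N^3 FLOPs; idealized traffic of three N x N matrices.
N = 4096
matmul_ai = arithmetic_intensity(flops=2 * N**3, bytes_moved=3 * N * N * 4)

if __name__ == "__main__":
    for name, ai in (("vector add", vec_add_ai), ("matmul", matmul_ai)):
        bound = "memory" if is_memory_bound(ai) else "compute"
        print(f"{name}: {ai:.3f} FLOP/byte, {bound}-bound, "
              f"<= {attainable_flops(ai) / 1e12:.3f} TFLOP/s")
```

Vector addition does one FLOP per 12 bytes, far below the ridge point of about 13 FLOP/byte. Speeding up its arithmetic will not help. What matters is reducing the bytes it moves, for example by fusing it with neighbouring operations. Under the same assumptions, the large matrix multiply sits well above the ridge and is compute-bound.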

Learn how to use the CUDA Occupancy Calculator to optimize GPU resource allocation and improve kernel performance through precise tuning and workload balancing. We are a team of experts dedicated to providing the best solutions to our clients. Those keen on optimizing GPU performance are advised to learn the features of the latest GPU architectures, understand the GPU programming language landscape, and gain familiarity with performance monitoring tools such as NVIDIA Nsight and nvidia-smi.

CUDA Toolkit Documentation 13.2 Update 1: Develop, Optimize and Deploy GPU-Accelerated Apps. The NVIDIA® CUDA® Toolkit provides a development environment for creating high-performance GPU-accelerated applications. With the CUDA Toolkit, you can develop, optimize, and deploy your applications on GPU-accelerated embedded systems, desktop workstations, enterprise data centers, and cloud-based platforms.
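The arithmetic behind an occupancy calculator can be sketched in a few lines. The Python model below is illustrative only. The per-SM limits are hypothetical example values, and `SmLimits`, `KernelConfig`, and the helper functions are names invented for this sketch. It also ignores the register and shared-memory allocation granularity that the real calculator applies. On real hardware, the CUDA runtime function `cudaOccupancyMaxActiveBlocksPerMultiprocessor` reports the actual figure for a compiled kernel.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SmLimits:
    """Per-SM resource limits. Defaults are hypothetical example values."""
    max_threads: int = 2048
    max_blocks: int = 32
    registers: int = 65536
    shared_mem_bytes: int = 102400
    warp_size: int = 32


@dataclass(frozen=True)
class KernelConfig:
    threads_per_block: int
    registers_per_thread: int
    shared_mem_per_block: int = 0


def active_blocks_per_sm(k: KernelConfig, sm: SmLimits = SmLimits()) -> int:
    """Resident blocks = tightest of the block, thread, register and shared-memory limits.
    Simplification: no allocation granularity is modelled."""
    limits = [sm.max_blocks, sm.max_threads // k.threads_per_block]
    if k.registers_per_thread > 0:
        limits.append(sm.registers // (k.registers_per_thread * k.threads_per_block))
    if k.shared_mem_per_block > 0:
        limits.append(sm.shared_mem_bytes // k.shared_mem_per_block)
    return min(limits)


def occupancy(k: KernelConfig, sm: SmLimits = SmLimits()) -> float:
    """Active warps per SM divided by the maximum warps per SM."""
    warps_per_block = -(-k.threads_per_block // sm.warp_size)  # ceiling division
    max_warps = sm.max_threads // sm.warp_size
    return active_blocks_per_sm(k, sm) * warps_per_block / max_warps


if __name__ == "__main__":
    for cfg in (KernelConfig(256, 32), KernelConfig(256, 64), KernelConfig(256, 32, 49152)):
        print(cfg, "blocks/SM:", active_blocks_per_sm(cfg), "occupancy:", occupancy(cfg))
```

In this model, doubling registers per thread from 32 to 64 halves the resident blocks. Requesting 48 KB of shared memory per block cuts occupancy to a quarter. Register usage is decided by nvcc's register allocation and can be inspected at compile time with `-Xptxas -v`.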


