GPU Cloud: Reserve GPU Instances on Single or Multiple Nodes

This document describes the features and limitations of Compute Engine instances that have attached GPUs. To accelerate specific workloads on Compute Engine, you can either deploy an …

This guide covers best practices for managing NVIDIA GPUs in multi-node Kubernetes clusters, including installation, configuration, optimization, and monitoring strategies validated against official Kubernetes documentation and industry implementations.
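As a small illustration of the Kubernetes side, the sketch below builds a Pod manifest that requests whole GPUs. It assumes the cluster runs the NVIDIA device plugin, which advertises GPUs under the extended resource name `nvidia.com/gpu`. The helper name `gpu_pod_manifest`, the pod name, and the image `example.com/cuda-app:latest` are hypothetical placeholders, not taken from the sources quoted here.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Pod manifest that requests `gpus` whole NVIDIA GPUs.

    Assumption: the NVIDIA device plugin is installed, so nodes expose
    the extended resource `nvidia.com/gpu`.
    """
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,  # hypothetical image name
                    # Extended resources such as GPUs are requested under limits.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    manifest = gpu_pod_manifest("cuda-smoke-test", "example.com/cuda-app:latest", gpus=2)
    print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and applied with `kubectl apply -f`. Whether the pod actually schedules depends on the cluster having nodes with enough free GPUs.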

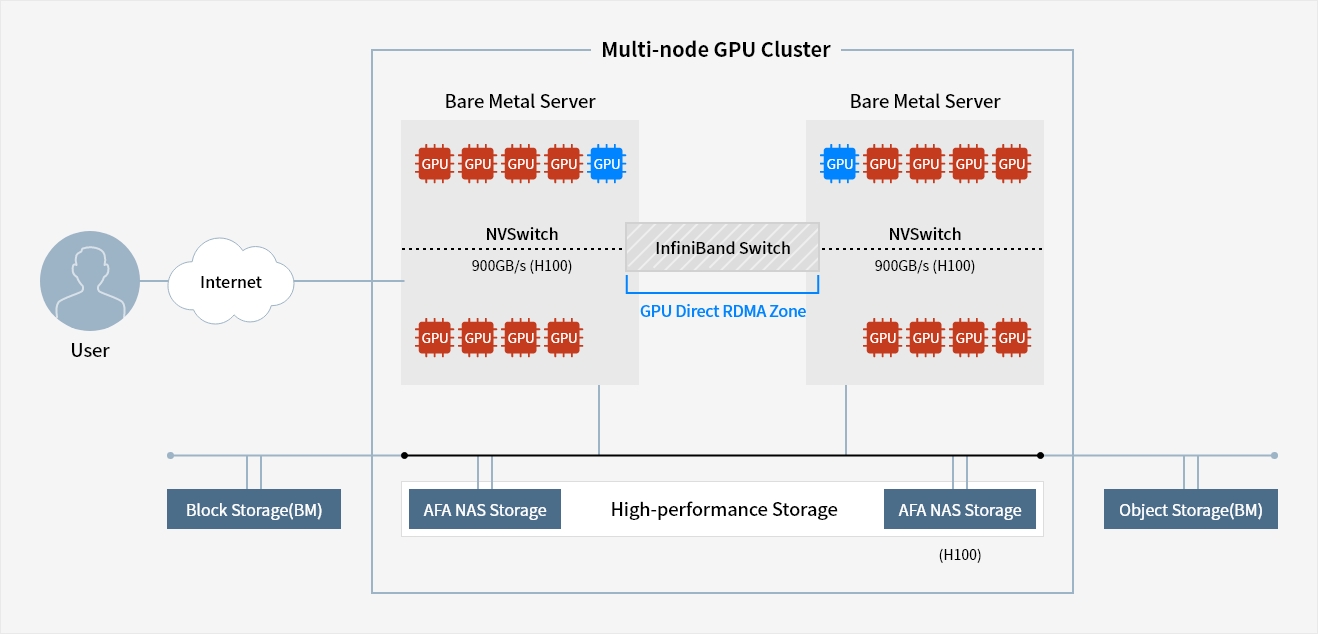

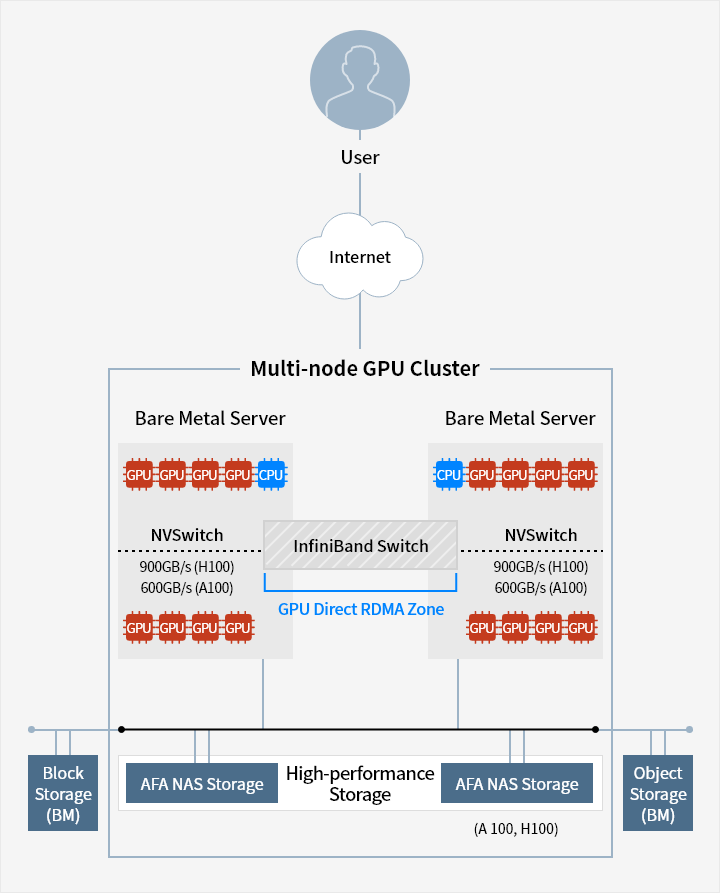

Multi-Node GPU Cluster: Samsung SDS Cloud Product

You can reserve accelerated compute instances for up to six months in cluster sizes of one to 64 instances (512 GPUs or 1,024 Trainium chips), giving you the flexibility to run a broad range of ML workloads.

Explore when to use single-GPU setups and when to scale to multi-GPU clusters, and learn how GPU cloud platforms optimize speed, cost efficiency, and scalability. Instead of relying on a single device with fixed memory and processing limits, a cluster distributes workloads across multiple nodes, allowing you to parallelise computation, expand available memory, and increase throughput.

The Multi-Instance GPU (MIG) feature allows GPUs (starting with the NVIDIA Ampere architecture) to be securely partitioned into up to seven separate GPU instances for CUDA applications, giving multiple users separate GPU resources for optimal GPU utilization.
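To make the "up to seven instances per GPU" figure concrete, here is a minimal sketch of packing workloads onto MIG-partitioned GPUs with a first-fit-decreasing heuristic. It is a simplification under stated assumptions: each workload is described only by the number of slices it needs, and real MIG profiles have placement rules that this sketch ignores. The function name `pack_workloads` and the workload names are hypothetical.

```python
from __future__ import annotations

SLICES_PER_GPU = 7  # MIG allows up to seven GPU instances per physical GPU


def pack_workloads(
    slice_requests: dict[str, int],
    slices_per_gpu: int = SLICES_PER_GPU,
) -> list[dict[str, int]]:
    """Assign workloads to GPUs using first-fit decreasing.

    Returns one dict per physical GPU, mapping workload name to slice count.
    Assumption: slices are interchangeable, which real MIG profiles are not.
    """
    gpus: list[dict[str, int]] = []
    free: list[int] = []  # free[i] = unused slices on gpus[i]

    # Place the largest requests first; break ties by name for determinism.
    ordered = sorted(slice_requests.items(), key=lambda kv: (-kv[1], kv[0]))
    for name, need in ordered:
        if not 1 <= need <= slices_per_gpu:
            raise ValueError(f"{name}: need must be in 1..{slices_per_gpu}")
        for i, remaining in enumerate(free):
            if need <= remaining:
                gpus[i][name] = need
                free[i] -= need
                break
        else:
            # No existing GPU has room, so open a new one.
            gpus.append({name: need})
            free.append(slices_per_gpu - need)
    return gpus


if __name__ == "__main__":
    requests = {"train": 4, "infer-a": 2, "infer-b": 1, "notebook": 3, "batch": 3}
    for index, assignment in enumerate(pack_workloads(requests)):
        print(f"GPU {index}: {assignment}")
```

With these example requests (13 slices in total), the heuristic fits everything onto two physical GPUs instead of giving each of the five workloads a GPU of its own.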

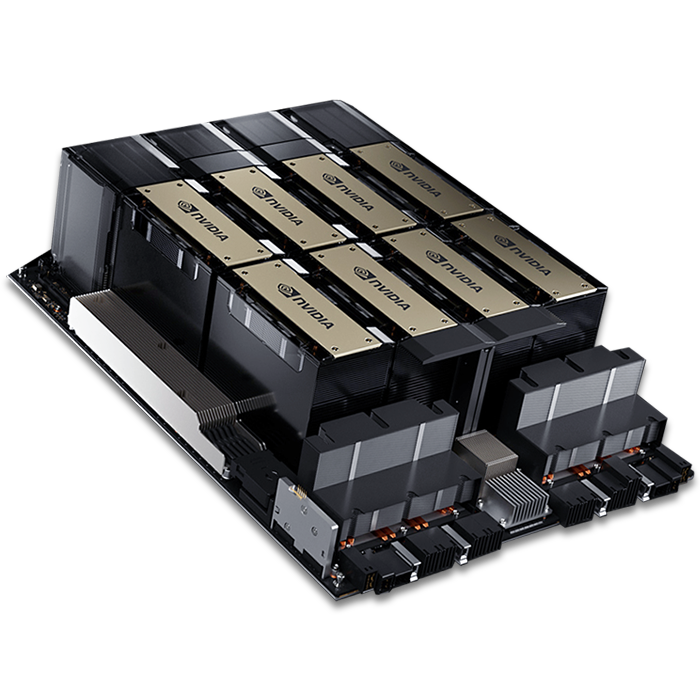

In my last blog post we deployed a single ECS host with access to a GPU. Using a GPU can speed up our computer vision tasks by 10x compared to using a CPU.

GPU fractionalization is a technique that allows multiple workloads to share a single GPU, maximizing hardware utilization and reducing costs. In many scenarios, a single workload does not fully utilize a GPU's compute capacity, leaving expensive resources idle.

Cast AI supports NVIDIA Multi-Instance GPU (MIG) technology, enabling you to partition powerful GPUs into smaller, isolated instances. This feature maximizes GPU utilization and cost efficiency by allowing multiple workloads to share a single physical GPU while maintaining hardware-level isolation.

Reserve the latest and "hard to get" GPU instances in the cloud for only the time that you need. Reserve NVIDIA DGX H100, DGX A100, GH200, and other GPU instances and get started quickly without waiting months. Options include GPU as a service (pay as you go), reservations for weeks, months, or an annual subscription, and single-node or multi-node clusters for LLMs.
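When sizing a reservation, the limits quoted earlier (one to 64 instances, 512 GPUs or 1,024 Trainium chips) imply a per-instance accelerator count if the largest cluster is assumed to use uniform instances. The sketch below works through that arithmetic. The uniform-instance assumption and the helper names are illustrative, not something the quoted sources state.

```python
import math

MAX_INSTANCES = 64         # largest cluster size quoted in the text
MAX_GPUS = 512             # GPUs in a maximum-size cluster
MAX_TRAINIUM_CHIPS = 1024  # Trainium chips in a maximum-size cluster


def accelerators_per_instance(total: int, instances: int = MAX_INSTANCES) -> int:
    """Accelerators per instance, assuming every instance is identical."""
    if instances < 1 or total % instances != 0:
        raise ValueError("total must divide evenly across instances")
    return total // instances


def instances_needed(wanted: int, per_instance: int,
                     max_instances: int = MAX_INSTANCES) -> int:
    """Smallest instance count that provides at least `wanted` accelerators."""
    if wanted < 1 or per_instance < 1:
        raise ValueError("wanted and per_instance must be positive")
    needed = math.ceil(wanted / per_instance)
    if needed > max_instances:
        raise ValueError(f"{needed} instances exceeds the limit of {max_instances}")
    return needed


if __name__ == "__main__":
    gpus_each = accelerators_per_instance(MAX_GPUS)             # 8
    chips_each = accelerators_per_instance(MAX_TRAINIUM_CHIPS)  # 16
    count = instances_needed(100, gpus_each)                    # 13
    print(f"{gpus_each} GPUs or {chips_each} Trainium chips per instance")
    print(f"100 GPUs -> reserve {count} instances ({count * gpus_each} GPUs)")
```

Because whole instances are reserved, a request for 100 GPUs rounds up to 13 instances and 104 GPUs. That rounding is worth checking before committing to a multi-week or multi-month reservation.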
