Github Ubc Tea Improving Fairness In Image Classification Via

In this paper, we propose to use image sketching methods to improve model fairness across several bias types (i.e., color, sex, and age), based on the intuition that a suitable sketching method can filter out bias information while keeping the semantic information needed for classification. First, we convert the input images into sketches and feed them to the downstream classification model for prediction. Second, to improve fairness further, we introduce a fairness loss function that mitigates the remaining bias in the model.
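The first step can be illustrated with a toy example. The snippet below is only a minimal sketch under stated assumptions: it uses a plain thresholded gradient edge map as a stand-in for a sketching method, which is not necessarily the method used in the paper, and the helper names (`to_grayscale`, `edge_sketch`, `make_square`) are hypothetical. It shows the intuition that two images with the same shape but different colors can map to the same sketch.

```python
def to_grayscale(image):
    """Average the RGB channels; `image` is a list of rows of (r, g, b) tuples."""
    return [[sum(px) / 3.0 for px in row] for row in image]


def edge_sketch(image, threshold=30.0):
    """Binary edge map: 1 where the local intensity change exceeds `threshold`.

    Assumption: a simple forward-difference gradient stands in for a real
    image-to-sketch model.
    """
    gray = to_grayscale(image)
    h, w = len(gray), len(gray[0])
    sketch = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Forward differences, clamped at the image border.
            dx = gray[y][min(x + 1, w - 1)] - gray[y][x]
            dy = gray[min(y + 1, h - 1)][x] - gray[y][x]
            if (dx * dx + dy * dy) ** 0.5 > threshold:
                sketch[y][x] = 1
    return sketch


def make_square(fg, bg=(0, 0, 0), size=6):
    """Hypothetical toy image: a 2x2 square of color `fg` on background `bg`."""
    return [
        [fg if 2 <= y < 4 and 2 <= x < 4 else bg for x in range(size)]
        for y in range(size)
    ]


# Same shape, different colors (the color plays the role of the bias attribute).
red_square = make_square((220, 40, 40))
blue_square = make_square((30, 30, 150))

red_sketch = edge_sketch(red_square)
blue_sketch = edge_sketch(blue_square)
print(red_sketch == blue_sketch)
```

In this toy setting the color difference disappears in the sketch while the outline of the square survives, so a classifier trained on such sketches would not see the color cue at all.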

Fairness of Classifiers Across Skin Tones in Dermatology. In Anne L. Martel, Purang Abolmaesumi, Danail Stoyanov, Diana Mateus, Maria A. Zuluaga, S. Kevin Zhou, Daniel Racoceanu, and Leo Joskowicz, editors, Medical Image Computing and Computer Assisted.

The repository is the PyTorch implementation of the NeurIPS workshop paper "Improving Fairness in Image Classification via Sketching" (arxiv.org/pdf/2211.00168.pdf).

Without losing the utility of the data, we explore image-to-sketch methods that maintain the semantic information useful for the target classification while filtering out the useless bias information. In addition, we design a fair loss to further improve model fairness.
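The text does not spell out the form of the fair loss, so the following is only a hedged illustration of one common way to build such a term, not the loss from the paper: the mean cross-entropy plus a penalty on the gap between per-group mean losses. The function names and the weight `lam` are assumptions introduced for this example.

```python
import math


def cross_entropy(prob_true_class):
    """Negative log-likelihood of the true class for one sample."""
    return -math.log(max(prob_true_class, 1e-12))


def group_gap_loss(probs, groups, lam=1.0):
    """Mean cross-entropy plus `lam` times the largest gap between group means.

    probs  : predicted probability of the true class, one per sample
    groups : sensitive-attribute value of each sample (any hashable label)
    lam    : hypothetical weight of the fairness penalty
    """
    losses = [cross_entropy(p) for p in probs]
    by_group = {}
    for loss, group in zip(losses, groups):
        by_group.setdefault(group, []).append(loss)
    group_means = [sum(v) / len(v) for v in by_group.values()]
    gap = max(group_means) - min(group_means)
    return sum(losses) / len(losses) + lam * gap


groups = [0, 0, 1, 1]
balanced = group_gap_loss([0.9, 0.9, 0.9, 0.9], groups)    # both groups fit equally well
unbalanced = group_gap_loss([0.9, 0.9, 0.5, 0.5], groups)  # group 1 is fit worse
print(balanced, unbalanced)
```

When both groups are fit equally well the penalty vanishes and the value reduces to the ordinary cross-entropy; when one group is fit worse, the extra term grows with the disparity.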

The study highlights bias prevalence, dataset-specific disparities, and the ineffectiveness of existing mitigation strategies. FairMedFM provides an open-source and extensible pipeline, aiming to enhance fairness evaluation and promote equitable AI applications in medical imaging.

My current research lies in improving trustworthiness and efficiency in machine learning algorithms and foundation models. I am also interested in novel agentic AI systems. I aim to narrow the gap between AI research and its applications by developing the next generation of trustworthy AI systems.

To the best of our knowledge, this is the first work to mitigate unfairness with respect to multiple sensitive attributes in the field of medical imaging. The code is available at github.com/ubc-tea/FCRO-Fair-Classification-Orthogonal-Representation.
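As a rough illustration of the kind of group-wise fairness evaluation mentioned above, the snippet below computes two widely used quantities: the gap in accuracy between groups and the demographic parity difference. It is a minimal sketch with made-up labels and hypothetical function names, and it is not taken from FairMedFM or any of the repositories named here.

```python
from collections import defaultdict


def group_accuracies(y_true, y_pred, groups):
    """Accuracy computed separately for each sensitive group."""
    correct, total = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}


def accuracy_gap(y_true, y_pred, groups):
    """Difference between the best and worst per-group accuracy."""
    accs = group_accuracies(y_true, y_pred, groups)
    return max(accs.values()) - min(accs.values())


def demographic_parity_difference(y_pred, groups, positive=1):
    """Difference between the highest and lowest per-group positive rate."""
    positives, total = defaultdict(int), defaultdict(int)
    for p, g in zip(y_pred, groups):
        total[g] += 1
        positives[g] += int(p == positive)
    rates = [positives[g] / total[g] for g in total]
    return max(rates) - min(rates)


# Made-up predictions for two groups, "a" and "b".
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap = accuracy_gap(y_true, y_pred, groups)
dp_diff = demographic_parity_difference(y_pred, groups)
print(gap, dp_diff)
```

Both functions take the maximum spread over all groups, so they extend unchanged to sensitive attributes with more than two values.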

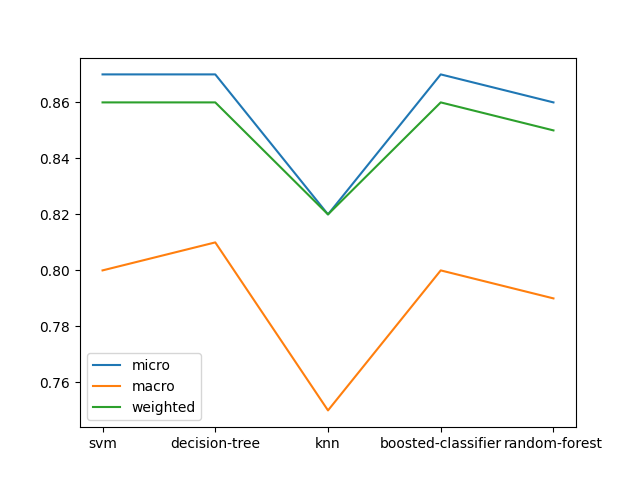

