GitHub Tomscheffers Arrow Lake: Incremental Data Lakes in Apache Arrow

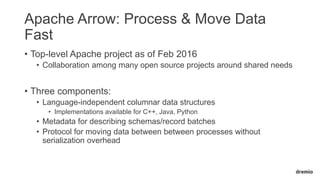

Arrow Lake, hosted under tomscheffers on GitHub, provides incremental data lakes in Apache Arrow. It introduces Apache Arrow as a solution for high-performance data interchange that minimizes serialization overhead and enables efficient cross-system communication.

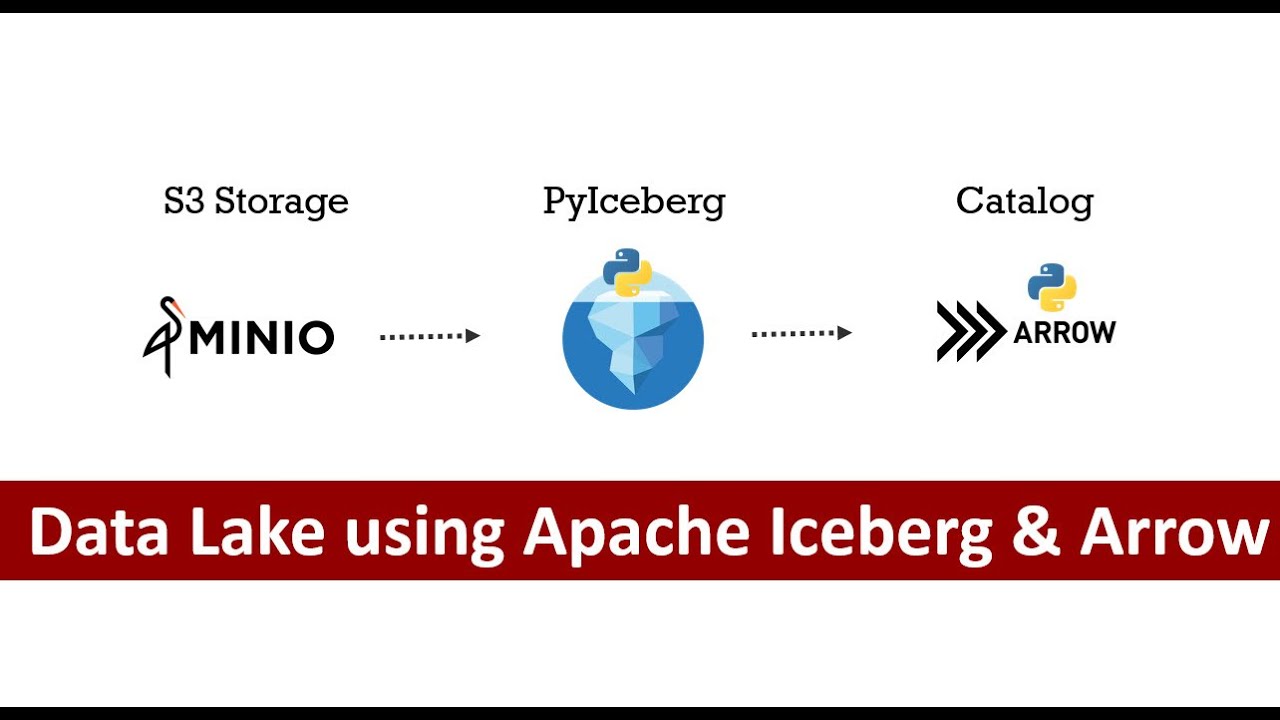

Build a Data Lake: Apache Iceberg and Apache Arrow

Explore a collection of Apache Arrow recipes in C++, Java, Python, R, and Rust. To address these challenges, we created a framework designed to significantly boost developer efficiency and ease the integration of data services, leveraging technologies like Apache Arrow for performance and reliability. We use the Apache Iceberg and Arrow Python APIs and eliminate the JVM from the equation. Tools you'll love: MinIO (high-performance, S3-compatible object storage) and PyIceberg (manages table formats). Together, Apache Iceberg, Arrow, and Polaris create a cohesive environment where data can be stored, processed, and accessed consistently and securely, regardless of the engine being used.

803 Data Exchange With SQL Databases Over Apache Arrow HeteroDB Pg

Not all data lakes are fast, because performance is about more than just where your data lives. When running analytics on a data lake, multiple technical choices can dramatically affect… If you need to write data to a CSV file incrementally as you generate or retrieve it, and you don't want to keep the whole table in memory to write it at once, you can use `pyarrow.csv.CSVWriter` to write the data incrementally. We walked through the core ideas behind Apache Arrow, looked at how it differs from more traditional data formats, how to set it up, and how to work with it in Python. A data engineer can use Arrow to speed up data exchanges between Spark and a data warehouse, using Arrow's columnar format to store intermediate results efficiently.

Building a Virtual Data Lake With Apache Arrow (PPTX)
