GitHub tergelmunkhbat/concise-reasoning: Code for the Paper "Self-Training Elicits Concise Reasoning in Large Language Models"

Official repository for the paper "Self-Training Elicits Concise Reasoning in Large Language Models" by Tergel Munkhbat, Namgyu Ho, Seo Hyun Kim, Yongjin Yang, Yujin Kim, and Se-Young Yun.

The paper by Tergel Munkhbat et al. addresses a prominent inefficiency in large language models (LLMs) that use chain-of-thought (CoT) reasoning: their default reasoning traces contain many redundant tokens, which incur extra inference cost. By exploiting the fundamental stochasticity and in-context learning capabilities of LLMs, the authors' self-training approach robustly elicits concise reasoning across a wide range of models, including those with extensive post-training. Code is available on GitHub at tergelmunkhbat/concise-reasoning.
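
How stochasticity and in-context learning can be turned into training data is easiest to see in a small sketch. This is not code from the tergelmunkhbat/concise-reasoning repository. It assumes a hypothetical `sample_fn` callable that returns one stochastic completion per call, a hypothetical "The answer is ..." answer format parsed by the illustrative `extract_answer` helper, and whitespace word counts as a stand-in for tokenizer length. In-context learning is represented by prepending concise exemplars to the prompt. Stochasticity is represented by drawing several samples and keeping the shortest one whose final answer is correct. The kept pair, with the exemplars dropped (also an assumption), becomes a fine-tuning example.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Example:
    question: str
    answer: str  # gold final answer used to filter samples


def build_few_shot_prompt(exemplars: list[tuple[str, str]], question: str) -> str:
    """Prepend concise (question, solution) exemplars so the model can imitate them in context."""
    parts = [f"Q: {q}\nA: {a}" for q, a in exemplars]
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


def extract_answer(completion: str) -> str:
    """Hypothetical answer format: the text after the last 'The answer is'."""
    marker = "The answer is"
    if marker not in completion:
        return ""
    return completion.rsplit(marker, 1)[1].strip().rstrip(".")


def shortest_correct(
    example: Example, prompt: str, sample_fn: Callable[[str], str], n: int
) -> Optional[str]:
    """Draw n stochastic samples and keep the shortest one with a correct final answer."""
    samples = [sample_fn(prompt) for _ in range(n)]
    correct = [s for s in samples if extract_answer(s) == example.answer]
    if not correct:
        return None  # no usable sample for this question
    # Whitespace word count stands in for model-tokenizer length.
    return min(correct, key=lambda s: len(s.split()))


def build_concise_dataset(
    examples: list[Example],
    exemplars: list[tuple[str, str]],
    sample_fn: Callable[[str], str],
    n: int = 8,
) -> list[dict[str, str]]:
    """Collect (zero-shot prompt, concise completion) pairs for fine-tuning."""
    dataset = []
    for ex in examples:
        prompt = build_few_shot_prompt(exemplars, ex.question)
        best = shortest_correct(ex, prompt, sample_fn, n)
        if best is not None:
            # Assumption: exemplars are dropped so the tuned model is concise without them.
            dataset.append({"prompt": ex.question, "completion": best})
    return dataset


if __name__ == "__main__":
    # Toy stand-in for a sampling LLM: cycles through canned completions.
    canned = itertools.cycle([
        "First, 2 + 3 = 5. Let me double-check: 5 is right. The answer is 5.",
        "2 + 3 = 5. The answer is 5.",
        "2 + 3 = 6. The answer is 6.",
    ])
    data = build_concise_dataset(
        [Example("What is 2 + 3?", "5")],
        [("What is 1 + 1?", "1 + 1 = 2. The answer is 2.")],
        sample_fn=lambda prompt: next(canned),
        n=3,
    )
    print(data)
```

Keeping the shortest correct sample biases the training set toward brevity, while the correctness filter guards accuracy. With a real model, `sample_fn` would wrap a sampling call with a nonzero temperature so that repeated calls differ.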

The paper proposes a fine-tuning method that makes large language models reason more concisely by training them on their own self-generated data. It achieves an average 30% reduction in output token length while maintaining accuracy across complex tasks.
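
A claim such as "an average 30% reduction in output token length while maintaining accuracy" combines two measurements: a relative change in mean output length and an accuracy comparison. The sketch below shows that bookkeeping, assuming per-question output lengths and correctness flags are already available and that the average is taken over per-model reductions. The paper's exact aggregation may differ, and the class and function names (`EvalResult`, `compare`, `average_reduction`) are hypothetical. The toy numbers in the usage are invented for illustration, not results from the paper.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class EvalResult:
    lengths: list[int]   # output length (tokens) for each test question
    correct: list[bool]  # whether each final answer matched the gold answer

    @property
    def mean_length(self) -> float:
        return mean(self.lengths)

    @property
    def accuracy(self) -> float:
        return sum(self.correct) / len(self.correct)


def compare(baseline: EvalResult, tuned: EvalResult) -> tuple[float, float]:
    """Return (relative reduction in mean output length, change in accuracy)."""
    reduction = 1 - tuned.mean_length / baseline.mean_length
    accuracy_delta = tuned.accuracy - baseline.accuracy
    return reduction, accuracy_delta


def average_reduction(pairs: list[tuple[EvalResult, EvalResult]]) -> float:
    """Average the per-model length reductions over (baseline, tuned) pairs."""
    return mean(compare(base, tuned)[0] for base, tuned in pairs)


if __name__ == "__main__":
    # Invented toy numbers: mean length drops from 250 to 175 tokens, accuracy unchanged.
    base = EvalResult(lengths=[200, 300, 250], correct=[True, True, False])
    tuned = EvalResult(lengths=[150, 200, 175], correct=[True, True, False])
    reduction, delta = compare(base, tuned)
    print(f"length reduction: {reduction:.0%}, accuracy change: {delta:+.3f}")
```

Reporting the accuracy change next to the length reduction makes "while maintaining accuracy" checkable: a large reduction paired with a negative accuracy change would not support the claim.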

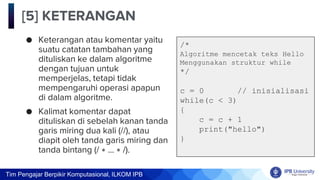
