GitHub Snap Research Video Synthesis Tutorial

Contribute to snap-research/video-synthesis-tutorial development by creating an account on GitHub. In this work, we build Snap Video, a video-first model that systematically addresses these challenges. To do that, we first extend the EDM framework to take into account spatially and temporally redundant pixels and naturally support video generation.
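For context on the EDM framework mentioned above, here is a minimal sketch of the standard EDM denoiser preconditioning (Karras et al., 2022), which wraps a raw network with scalings that depend on the noise level. It shows only the baseline formulation, not Snap Video's extension for redundant pixels. The names `edm_scalings`, `denoise`, and `zero_network` are hypothetical, and `SIGMA_DATA = 0.5` is an assumed value taken from EDM's defaults.

```python
import math

SIGMA_DATA = 0.5  # assumed data standard deviation (EDM's default value)


def edm_scalings(sigma, sigma_data=SIGMA_DATA):
    """Return (c_skip, c_out, c_in, c_noise) for a noise level sigma > 0."""
    total_var = sigma ** 2 + sigma_data ** 2
    c_skip = sigma_data ** 2 / total_var               # weight of the skip connection
    c_out = sigma * sigma_data / math.sqrt(total_var)  # scale of the network output
    c_in = 1.0 / math.sqrt(total_var)                  # scale of the network input
    c_noise = math.log(sigma) / 4.0                    # noise-level conditioning value
    return c_skip, c_out, c_in, c_noise


def denoise(network, x_noisy, sigma, sigma_data=SIGMA_DATA):
    """Preconditioned denoiser: D(x; sigma) = c_skip * x + c_out * F(c_in * x, c_noise)."""
    c_skip, c_out, c_in, c_noise = edm_scalings(sigma, sigma_data)
    scaled_input = [c_in * value for value in x_noisy]
    raw_output = network(scaled_input, c_noise)
    return [c_skip * x + c_out * f for x, f in zip(x_noisy, raw_output)]


def zero_network(scaled_input, c_noise):
    """Hypothetical stand-in for a trained network; always predicts zeros."""
    return [0.0 for _ in scaled_input]


if __name__ == "__main__":
    print(edm_scalings(0.5))
    print(denoise(zero_network, [1.0, -2.0], 0.5))
```

With a network that predicts zeros, the denoiser output reduces to the skip term `c_skip * x`, which makes the role of each scaling easy to inspect in isolation.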

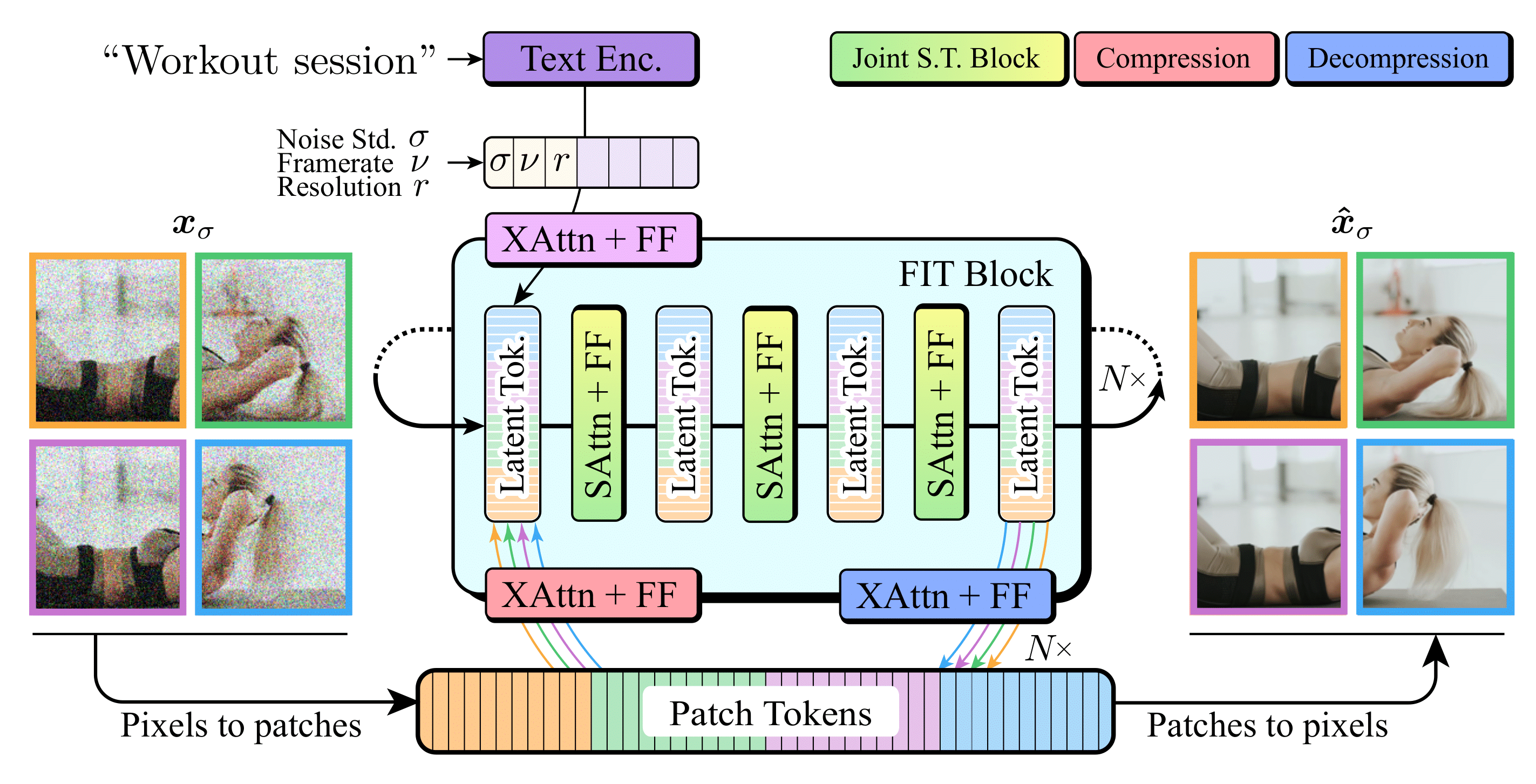

ECCV 22 Video Synthesis: Early Days and New Developments

The Snap Video framework systematically addresses the efficiency and quality limitations of prior video generation approaches. It moves beyond adaptations of image models by introducing architectural and algorithmic innovations that enable scalable, high-fidelity, and temporally consistent video synthesis.

GitHub Snap Research Locomo

Our tutorial will help newcomers get the necessary knowledge, understand challenges and benchmarks, and choose a promising research direction. For practitioners, our tutorial will provide a detailed overview of the domain.

Snap Video

We make use of an LLM to generate a story plot, video prompts for different scenes, and scripts for audio narrations. We generate all video assets using our model, tuning the video prompts to obtain the desired visuals, and synthesize the audio narration.
