Github S19s98 Implementing Delta Lake Architecture By Batch Data

This project implements a Delta Lake architecture using Azure Data Factory. The architecture consists of three layers: a bronze layer (raw data), a silver layer (logical groupings of columns drawn from multiple bronze-layer tables), and a gold layer (cleaned and enriched data ready for analytics and data science applications). The implementation is developed on GitHub under the s19s98 account, in the repository for implementing Delta Lake architecture by batch data ingestion using ADF.
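To make the three layers concrete, here is a minimal sketch in plain Python rather than Azure Data Factory. The table names, columns, and cleaning rules are hypothetical and are not taken from the repository; the sketch only shows the shape of a bronze-to-silver-to-gold flow.

```python
from collections import defaultdict

# Hypothetical raw records, as they might land in the bronze layer.
BRONZE_ORDERS = [
    {"order_id": "1", "customer_id": "c1", "amount": "20.50"},
    {"order_id": "2", "customer_id": "c2", "amount": "bad"},  # malformed amount
    {"order_id": "3", "customer_id": "c1", "amount": "4.50"},
]
BRONZE_CUSTOMERS = [
    {"customer_id": "c1", "name": " Ada "},
    {"customer_id": "c2", "name": "Grace"},
]


def to_silver(orders, customers):
    """Group related columns from several bronze tables into one silver table."""
    names = {c["customer_id"]: c["name"] for c in customers}
    return [
        {
            "order_id": o["order_id"],
            "customer_id": o["customer_id"],
            "customer_name": names.get(o["customer_id"]),
            "amount": o["amount"],
        }
        for o in orders
    ]


def to_gold(silver_rows):
    """Clean silver rows and aggregate them into an analytics-ready table."""
    totals = defaultdict(float)
    for row in silver_rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # drop rows whose amount cannot be parsed
        totals[(row["customer_name"] or "").strip()] += amount
    return dict(totals)


silver = to_silver(BRONZE_ORDERS, BRONZE_CUSTOMERS)
gold = to_gold(silver)
```

In this sketch the bronze data is never modified: each layer is derived from the one before it, so a cleaning rule can be changed and the silver and gold tables rebuilt from the raw records.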

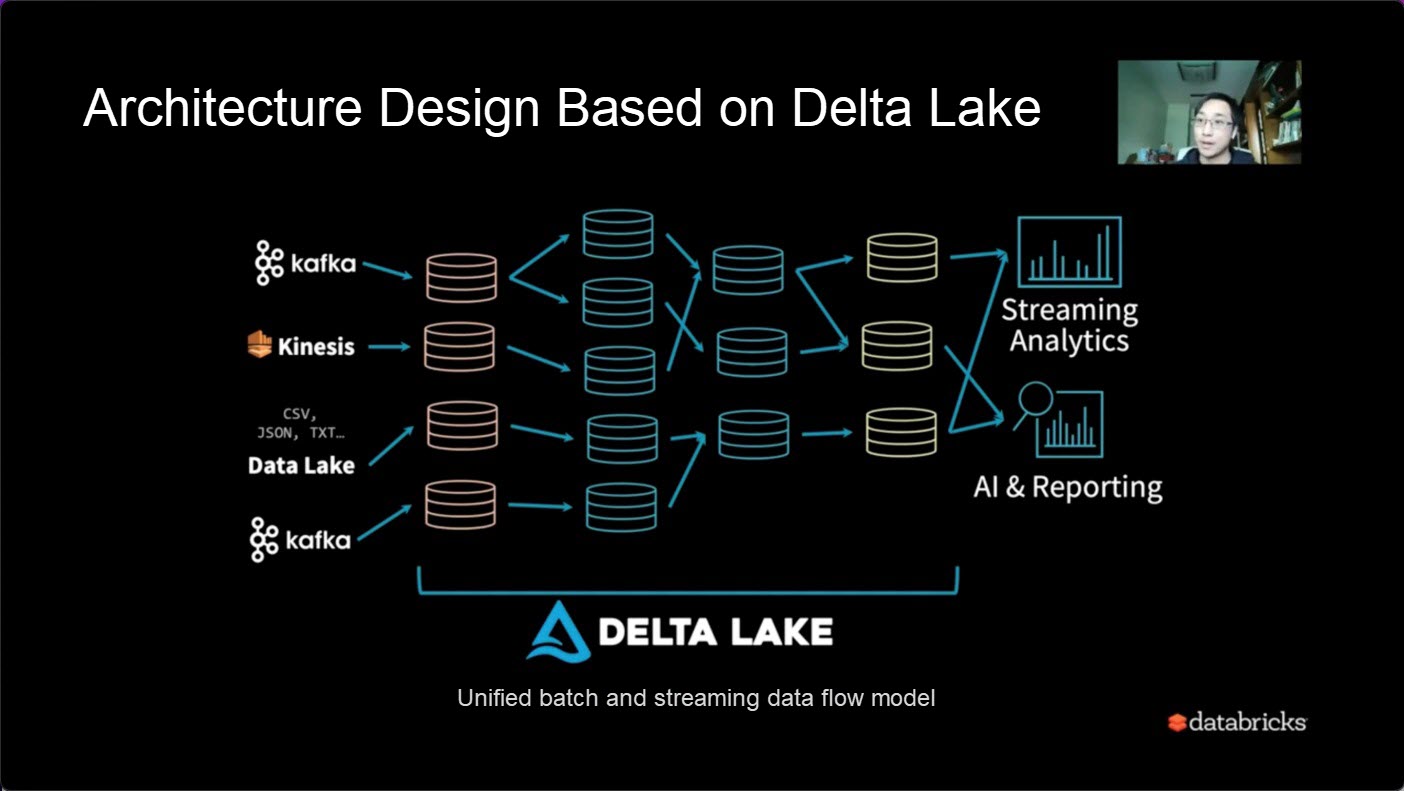

Github Harrydevforlife Delta Architecture Real Time Data Processing

Delta Lake is an open source project that enables building a lakehouse architecture on top of data lakes. It provides ACID transactions and scalable metadata handling, and it unifies streaming and batch data processing on top of existing data lakes such as S3, ADLS, GCS, and HDFS.

Physically removing data from storage can be dangerous, especially if it happens before a transaction is complete. We're now ready to look into Delta Lake ACID transactions in more detail.

Ready to implement Delta Lake in your organization? Start with a pilot project using the examples above, and gradually expand to more complex use cases as your team gains experience with the...

This configuration sets the foundation for our Spark session to efficiently handle data processing, integration with Delta Lake, and implementation of the medallion architecture design in...
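The configuration itself is not reproduced here. As a rough guide, enabling Delta Lake in a Spark session usually comes down to the two settings below, which are the ones given in the Delta Lake documentation. The function name and the application name are assumptions made for this sketch, and it requires the `pyspark` and `delta-spark` packages to actually start a session.

```python
# Settings documented by Delta Lake for enabling it in a Spark session.
DELTA_SPARK_CONF = {
    "spark.sql.extensions": "io.delta.sql.DeltaSparkSessionExtension",
    "spark.sql.catalog.spark_catalog": "org.apache.spark.sql.delta.catalog.DeltaCatalog",
}


def build_delta_session(app_name="medallion-demo"):
    """Create a SparkSession with Delta Lake enabled (hypothetical helper).

    pyspark and delta-spark are imported lazily so that the settings above
    can be inspected on a machine where they are not installed.
    """
    from pyspark.sql import SparkSession
    from delta import configure_spark_with_delta_pip

    builder = SparkSession.builder.appName(app_name)
    for key, value in DELTA_SPARK_CONF.items():
        builder = builder.config(key, value)
    # configure_spark_with_delta_pip adds the matching Delta Lake JARs.
    return configure_spark_with_delta_pip(builder).getOrCreate()
```

With a session built this way, tables can be read and written with `format("delta")`; storage credentials and paths for a specific data lake would need to be added separately.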

Databricks Delta Lake Architecture Build Reliable Data Lakehouse

Create ETL pipelines for batch and streaming data with Azure Databricks to simplify data lake ingestion at any scale.

Delta Lake is an open source data lake storage framework that helps you perform ACID transactions, scale metadata handling, and unify streaming and batch data processing. This topic covers the features available for using your data in AWS Glue when you transport or store your data in a Delta Lake table.

Below, I will explain my process of implementing a simple data lakehouse system using open source software. This implementation can run with cloud data lakes like Amazon S3, or on-premises ones such as Pure Storage® FlashBlade® with S3.

Delta Lake is an open source project that enables building a lakehouse architecture on top of your existing storage systems such as S3, ADLS, GCS, and HDFS.
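Delta Lake layers ACID transactions over plain file storage by keeping an ordered transaction log (the `_delta_log` directory) of JSON commits that add and remove data files. The toy class below is only an illustration of that idea using the local filesystem: the class name, its methods, and the simplified action records are assumptions for this sketch, and the real log carries more metadata and uses storage-specific mechanisms for atomic commits.

```python
import json
import os
import tempfile


class ToyDeltaLog:
    """Toy append-only commit log, loosely modeled on Delta Lake's _delta_log."""

    def __init__(self, table_dir):
        self.log_dir = os.path.join(table_dir, "_delta_log")
        os.makedirs(self.log_dir, exist_ok=True)

    def versions(self):
        """Return the committed version numbers in ascending order."""
        return sorted(
            int(name.split(".")[0])
            for name in os.listdir(self.log_dir)
            if name.endswith(".json")
        )

    def commit(self, actions):
        """Write actions as the next version; fail if that version already exists."""
        existing = self.versions()
        version = existing[-1] + 1 if existing else 0
        path = os.path.join(self.log_dir, f"{version:020d}.json")
        # Mode "x" creates the file exclusively, so two writers cannot both
        # claim the same version number (a simple optimistic-concurrency check).
        with open(path, "x") as f:
            for action in actions:
                f.write(json.dumps(action) + "\n")
        return version

    def live_files(self):
        """Replay the log to find the data files in the current snapshot."""
        files = set()
        for version in self.versions():
            with open(os.path.join(self.log_dir, f"{version:020d}.json")) as f:
                for line in f:
                    action = json.loads(line)
                    if "add" in action:
                        files.add(action["add"]["path"])
                    elif "remove" in action:
                        files.discard(action["remove"]["path"])
        return files


table_dir = tempfile.mkdtemp()
log = ToyDeltaLog(table_dir)
log.commit([{"add": {"path": "part-0.parquet"}}])
log.commit([{"remove": {"path": "part-0.parquet"}}, {"add": {"path": "part-1.parquet"}}])
current_files = log.live_files()
```

Note that the `remove` action only marks a file as no longer part of the table; nothing is deleted from storage at commit time. In Delta Lake, physical deletion is deferred to a separate `VACUUM` operation, which is why readers of an older snapshot are not broken by a concurrent rewrite.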

