GitHub Retrodnix Embodied Planning Demo

We focus on embodied spatial reasoning tasks, which require models to understand and reason about spatial relationships from sequential visual observations. We use two main datasets: VSI-Bench (indoor scenarios) and UrbanVideo-Bench (outdoor scenarios).

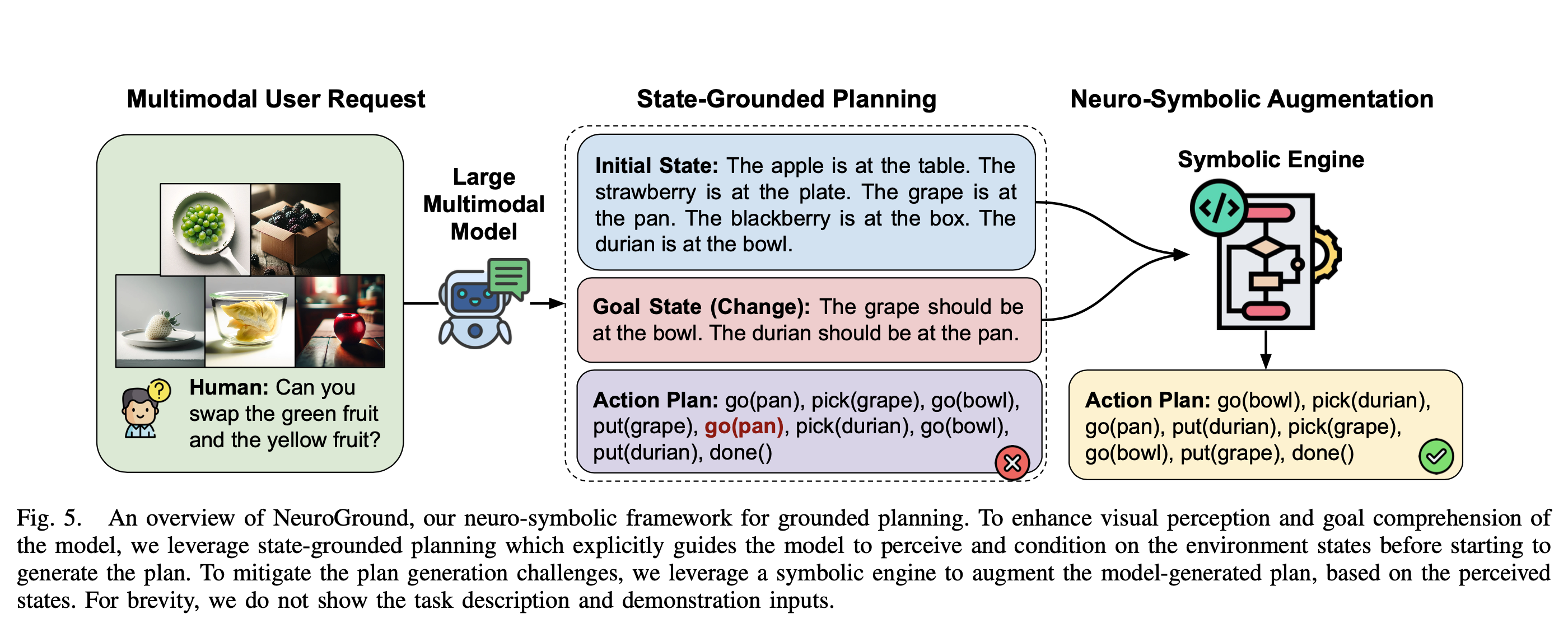

Can Do: A Dataset for Embodied Planning with Large Multimodal Models

In this work, we introduce a reinforcement fine-tuning framework that brings R1-style reasoning enhancement into embodied planning. We first distill a high-quality dataset from a powerful closed-source model and perform supervised fine-tuning (SFT) to equip the model with structured decision-making priors.

A sample record fragment (its beginning is truncated):

`Can you give me the coke cola?", "plan: " ] }, { "initial": [ "user []", "chair [coke cola]", "ground [basketball, package]" ] }, { "end": [ "user [coke cola]", "chair []", "ground [basketball, package]" ] }, { "aspect": [ "common social" ] } ]`

We introduce Embodied-R1, a 3B vision-language model (VLM) specifically designed for embodied reasoning and pointing. We train Embodied-R1 using a two-stage reinforced fine-tuning (RFT) curriculum with specialized multi-task reward design.
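The sample record above pairs an `initial` object layout with an `end` layout. As a minimal sketch of how such a pair could be checked against a candidate plan, the Python below parses the state strings and replays a list of moves. The `(item, source, target)` plan format and the helper names `parse_state` and `apply_move` are assumptions made for illustration; the source does not show how plans are actually encoded or evaluated.

```python
import re


def parse_state(lines):
    """Parse entries such as 'chair [coke cola]' into {location: set of items}."""
    state = {}
    for line in lines:
        match = re.fullmatch(r"\s*(\S.*?)\s*\[(.*)\]\s*", line)
        if match is None:
            raise ValueError(f"unrecognized state entry: {line!r}")
        location, items = match.groups()
        state[location] = {item.strip() for item in items.split(",") if item.strip()}
    return state


def apply_move(state, item, source, target):
    """Return a new state with `item` moved from `source` to `target`."""
    if item not in state.get(source, set()):
        raise ValueError(f"{item!r} is not at {source!r}")
    new_state = {location: set(items) for location, items in state.items()}
    new_state[source].remove(item)
    new_state.setdefault(target, set()).add(item)
    return new_state


# State strings copied from the sample record.
initial = parse_state(["user []", "chair [coke cola]", "ground [basketball, package]"])
end = parse_state(["user [coke cola]", "chair []", "ground [basketball, package]"])

# Hypothetical plan format (an assumption): a list of (item, source, target) moves.
plan = [("coke cola", "chair", "user")]

state = initial
for item, source, target in plan:
    state = apply_move(state, item, source, target)

plan_reaches_goal = state == end
print(plan_reaches_goal)
```

Comparing whole states rather than only the moved item also catches plans that disturb objects the request never mentioned, such as the basketball on the ground.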

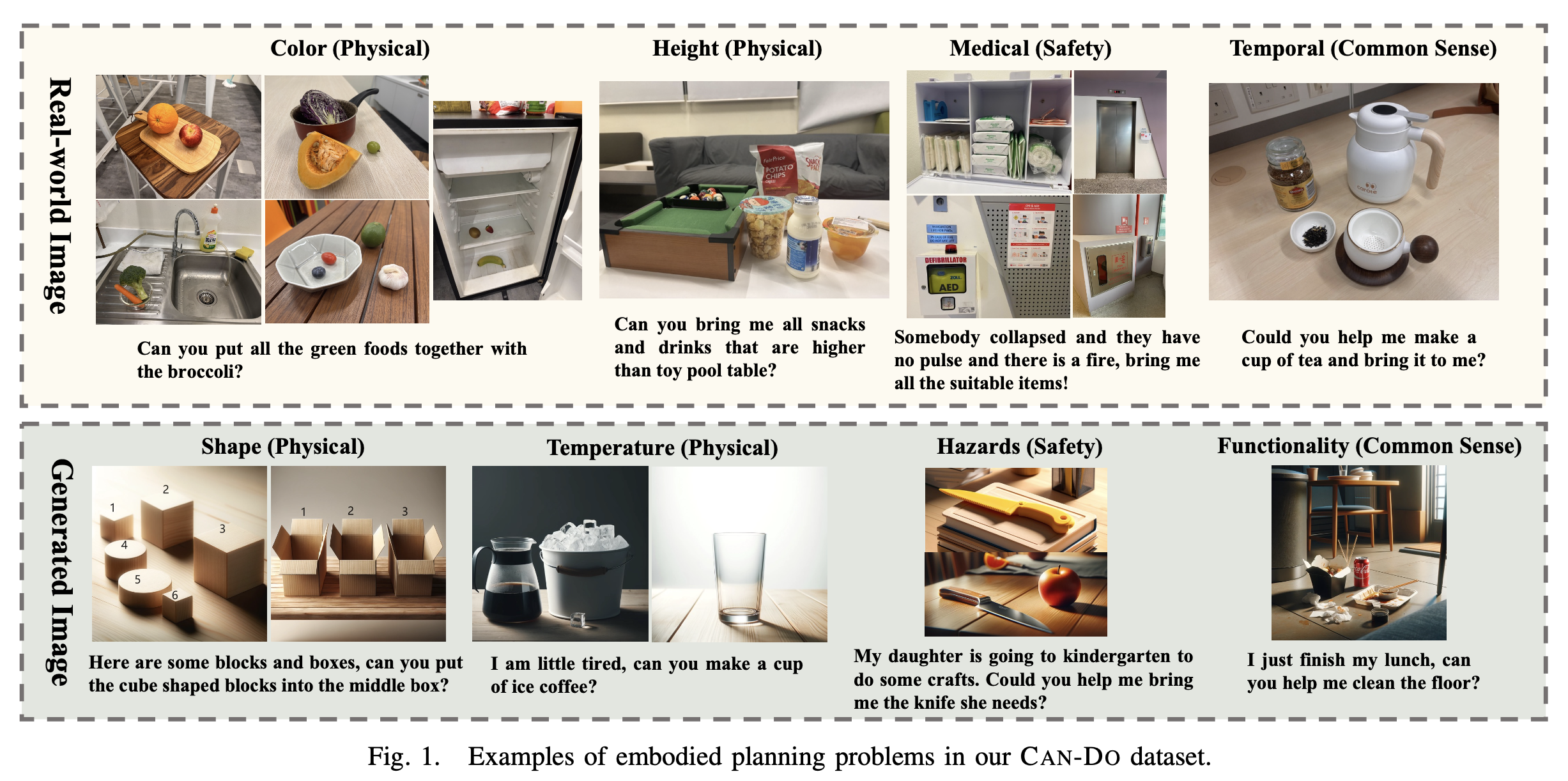

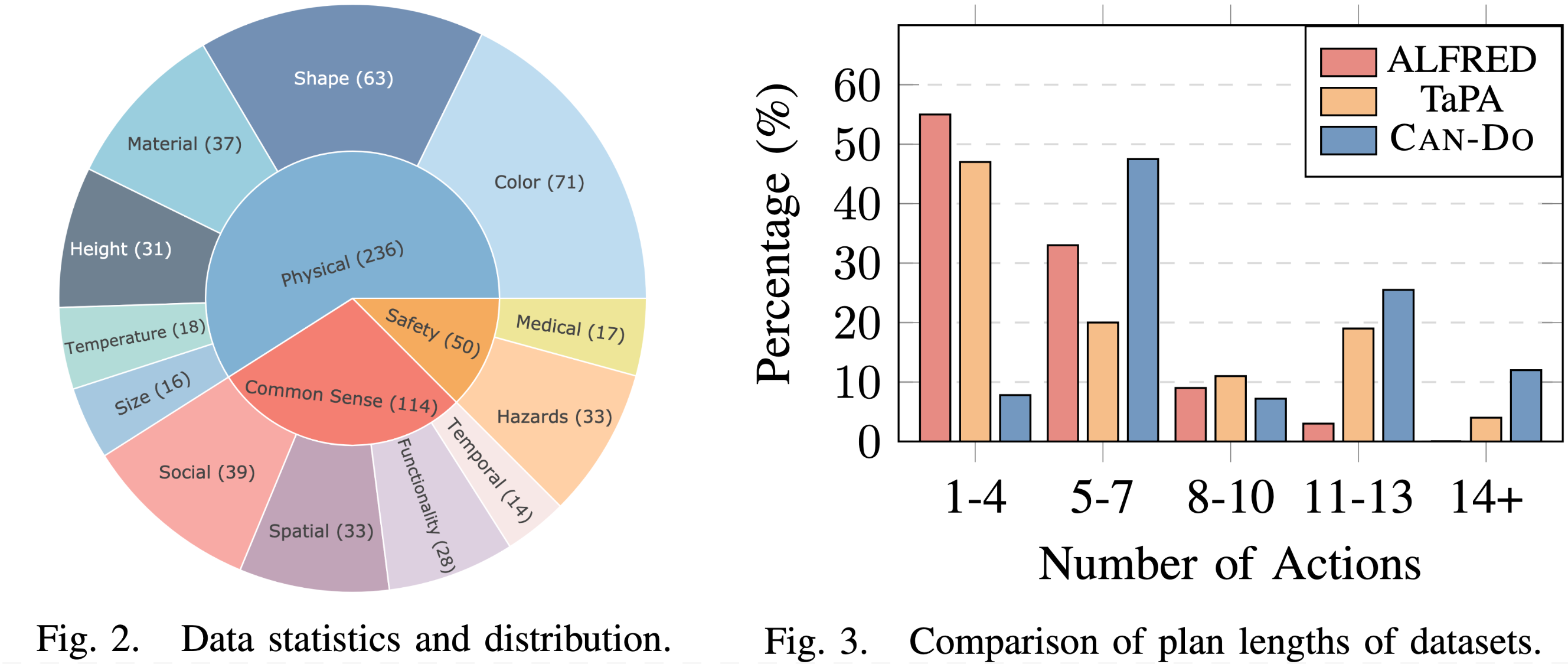

We formulate embodied task planning as a partially observable decision-making process, where the agent interacts with an environment through sequential actions based on visual observations.

We design an embodied interactive task: searching for objects in an unknown room. We then propose Embodied-Reasoner, which presents spontaneous reasoning and interaction ability.

Inspired by human cognition, we introduce Embodied Planner-R1, a novel outcome-driven reinforcement learning framework that endows LLMs with interaction ability through autonomous exploration with minimal supervision.

In this work, we introduce Can-Do, a benchmark dataset designed to evaluate embodied planning abilities through more diverse and complex scenarios than previous datasets.
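The partially observable formulation and the object-search task described above can be illustrated with a toy interaction loop. This is only a sketch under stated assumptions: `ToyRoom`, `locate`, `search`, and the `("open", container)` action format are hypothetical names invented here, symbolic observations stand in for visual ones, and the fixed open-the-next-container policy is a placeholder for a learned model, not the method of any system named above.

```python
from dataclasses import dataclass, field


@dataclass
class ToyRoom:
    """Hypothetical environment: objects are hidden inside closed containers."""

    contents: dict  # container name -> list of object names (hidden state)
    opened: set = field(default_factory=set)

    def observe(self):
        # Partial observation: only containers opened so far reveal their contents.
        return {name: list(self.contents[name]) for name in self.opened}

    def step(self, action):
        verb, container = action
        if verb == "open" and container in self.contents:
            self.opened.add(container)
        return self.observe()


def locate(observation, target):
    """Return the observed container holding `target`, or None if unseen."""
    for name, items in observation.items():
        if target in items:
            return name
    return None


def search(room, containers, target, max_steps=10):
    """Open unexplored containers one at a time until the target is observed."""
    observation = room.observe()
    history = []
    for _ in range(max_steps):
        location = locate(observation, target)
        unexplored = [c for c in containers if c not in observation]
        if location is not None or not unexplored:
            return location, history
        action = ("open", unexplored[0])  # placeholder policy, not a learned one
        history.append(action)
        observation = room.step(action)
    return locate(observation, target), history


room = ToyRoom({"drawer": ["pen"], "fridge": ["coke cola"], "cabinet": ["cup"]})
location, history = search(room, ["drawer", "fridge", "cabinet"], "coke cola")
print(location, history)
```

The agent never reads `room.contents` directly; every decision is made from `observe()`, which is what makes the loop partially observable.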


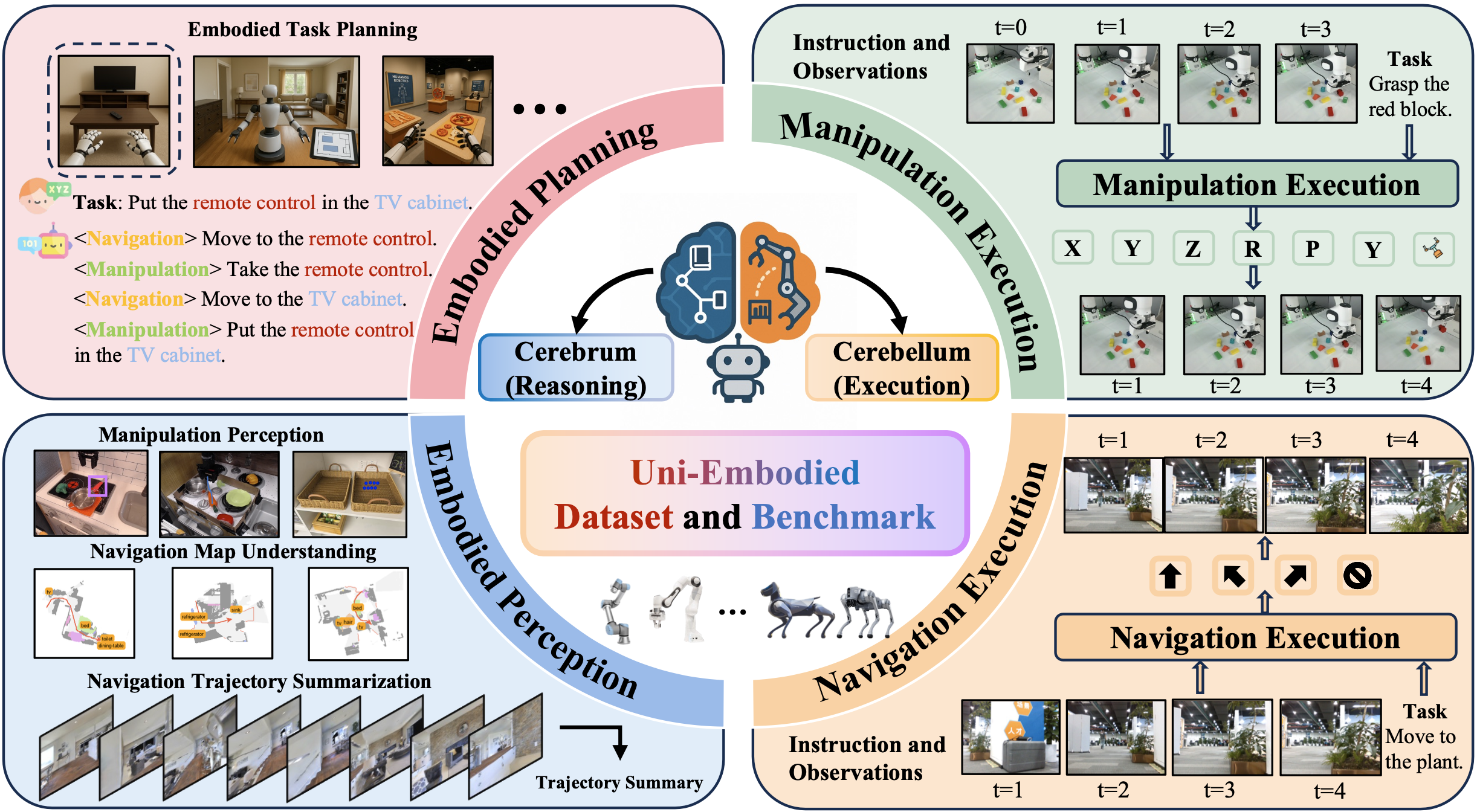

Uni Embodied: Towards Unified Evaluation for Embodied Planning
