GitHub Minjieli6 Supervised Learning Classification Logistic KNN SVM

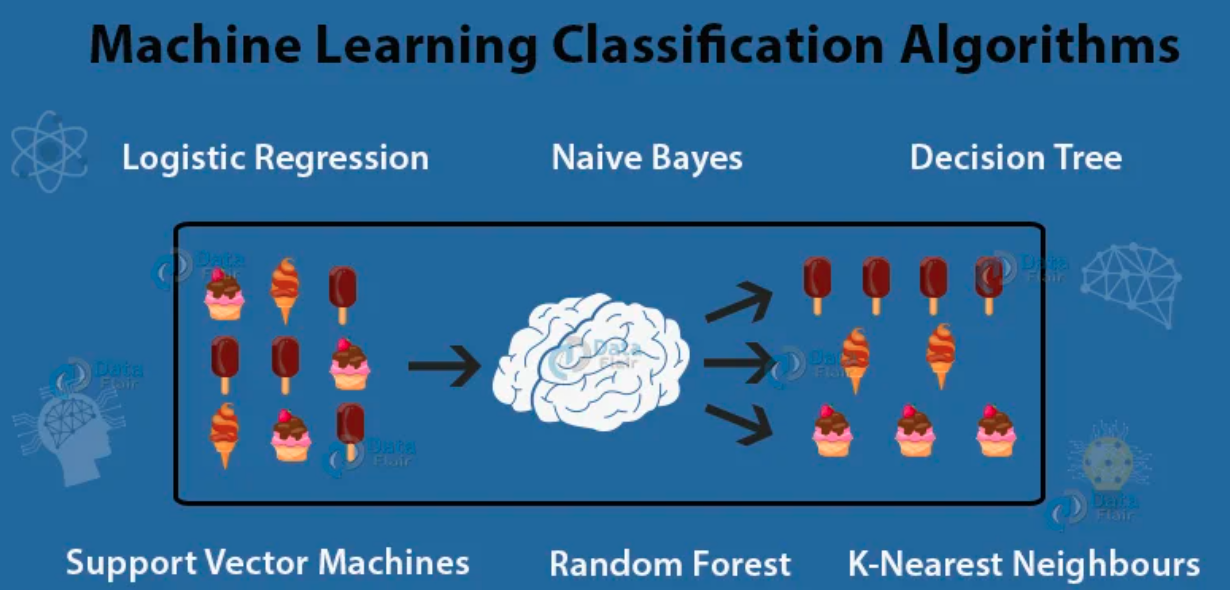

Contribute to minjieli6 supervised learning classification logistic knn svm trees forests bagging boosting random fore development by creating an account on GitHub.

Learn classification in machine learning with an in-depth explanation of logistic regression, KNN, decision trees, and SVM. Includes real-world examples, use cases, Python code samples, FAQs, and beginner-friendly explanations.

GitHub Ngtrdung RF SVM Logistic KNN Model Build Decision Tree

Machine learning classification using KNN, decision tree, SVM, and logistic regression. In this article I will show you how to solve classification problems using machine learning algorithms. Different …

Applying logistic regression and SVM: in this chapter you will learn the basics of applying logistic regression and support vector machines (SVMs) to classification problems. You'll use the scikit-learn library to fit classification models to real data. scikit-learn refresher, KNN classification: in this exercise you'll explore a subset of the Large Movie Review Dataset. The variables x train …

This research study involves transforming the original datasets and a comparative analysis of four models: logistic regression (LR), support vector machine (SVM), k-nearest neighbor (KNN), and random …

A support vector machine (SVM) is a discriminative classifier formally defined by a separating hyperplane. In other words, given labeled training data (supervised learning), the algorithm outputs an optimal hyperplane that categorizes new examples.
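The scikit-learn workflow and the separating-hyperplane view of SVMs described above can be sketched as follows. This is a minimal illustration, not code from any of the repositories or courses mentioned: the synthetic data from `make_classification`, the scaling pipelines, and the hyperparameters (`n_neighbors=5`, `C=1.0`) are all assumptions chosen for the example.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in data (an assumption; the sources use real datasets).
X, y = make_classification(
    n_samples=400, n_features=5, n_informative=3, n_redundant=0,
    class_sep=2.0, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# KNN and SVM are distance/margin based, so features are standardized first.
models = {
    "logistic regression": make_pipeline(StandardScaler(), LogisticRegression()),
    "knn": make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5)),
    "linear svm": make_pipeline(StandardScaler(), SVC(kernel="linear", C=1.0)),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)                 # learn from labeled training data
    scores[name] = model.score(X_test, y_test)  # accuracy on held-out examples
    print(f"{name}: test accuracy = {scores[name]:.3f}")

# For a linear kernel, the fitted SVM exposes the separating hyperplane
# w . x + b = 0 (expressed in the standardized feature space).
svm = models["linear svm"].named_steps["svc"]
w, b = svm.coef_[0], svm.intercept_[0]
print("hyperplane normal w:", w)
print("hyperplane offset b:", b)
```

New examples are classified by which side of the hyperplane they fall on, that is, by the sign of `w . x + b`. With a non-linear kernel such as `rbf`, `coef_` is not available because the hyperplane lives in an implicit feature space.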

GitHub Sajanabhiav Application Of Logistic Regression SVM And KNN

In this post, we dive into the world of supervised learning, comparing the performance of four popular algorithms: k-nearest neighbors (KNN), support vector machines (SVM), neural networks (NN), and decision trees with boosting (specifically, AdaBoost). We'll analyze their effectiveness on two distinct datasets, highlighting their strengths and weaknesses.

scikit-learn user guide contents: Regression; 1.5.3. Online One-Class SVM; 1.5.4. Stochastic Gradient Descent for sparse data; 1.5.5. Complexity; 1.5.6. Stopping criterion; 1.5.7. Tips on practical use; 1.5.8. Mathematical formulation; 1.5.9. Implementation details; 1.6. Nearest Neighbors; 1.6.1. Unsupervised Nearest Neighbors; 1.6.2. Nearest Neighbors Classification; 1.6.3. Nearest …

Topics: neural network, random forest, linear regression, machine learning algorithms, naive Bayes classifier, supervised learning, Gaussian mixture models, logistic regression, k-means, decision trees, KNN, principal component analysis, dynamic time warping, k-means clustering, EM algorithm, k-means algorithm, singular value decomposition, KNN classification, Gaussian …
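A comparison of KNN, SVM, a neural network, and AdaBoost on two datasets, as the post above describes, might look like the sketch below. The two datasets (scikit-learn's bundled breast cancer and wine data), the 5-fold cross-validation, and every hyperparameter are assumptions for illustration; the post's own datasets and settings are not given here.

```python
from sklearn.datasets import load_breast_cancer, load_wine
from sklearn.ensemble import AdaBoostClassifier
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Two small bundled datasets stand in for the post's "two distinct datasets".
datasets = {
    "breast_cancer": load_breast_cancer(return_X_y=True),
    "wine": load_wine(return_X_y=True),
}


def make_models():
    """Return fresh, unfitted models; scaling is part of each pipeline so it
    is re-fit inside every cross-validation fold (no leakage)."""
    return {
        "knn": make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5)),
        "svm": make_pipeline(StandardScaler(), SVC(kernel="rbf", C=1.0)),
        "nn": make_pipeline(
            StandardScaler(),
            MLPClassifier(hidden_layer_sizes=(32,), max_iter=1000, random_state=0),
        ),
        # AdaBoost's default base learner is a depth-1 decision tree (a stump).
        "adaboost": AdaBoostClassifier(n_estimators=100, random_state=0),
    }


results = {}
for ds_name, (X, y) in datasets.items():
    for model_name, model in make_models().items():
        fold_acc = cross_val_score(model, X, y, cv=5, scoring="accuracy")
        results[(ds_name, model_name)] = fold_acc.mean()
        print(f"{ds_name:>13} | {model_name:<8} | mean accuracy = {fold_acc.mean():.3f}")
```

Cross-validated accuracy gives a fairer comparison than a single train/test split, but it is only one metric; training time and sensitivity to hyperparameters are also part of each algorithm's strengths and weaknesses.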

Supervised Learning Classification Haesong Choi

GitHub Vertta Supervised Machine Learning Challenge 19

Machine Learning Supervised Methods SVM And KNN PDF Support
