Github Kwonjunn01 Efficient Caption Refinement Networks For Dense

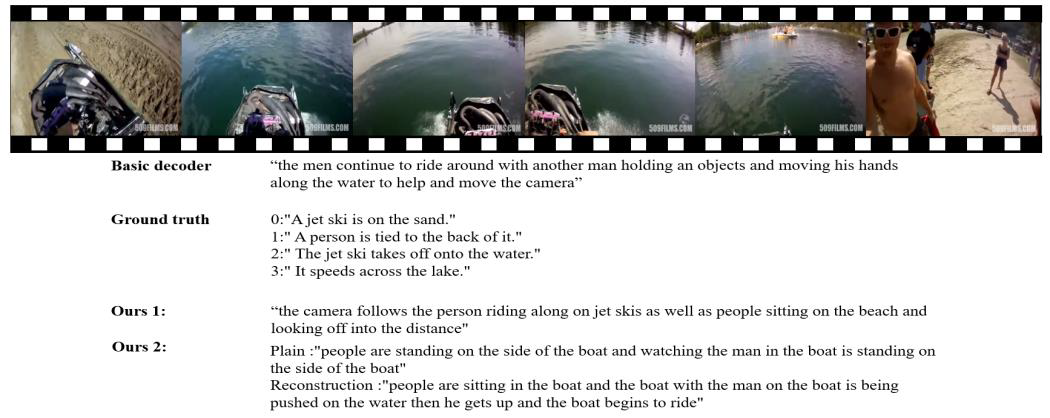

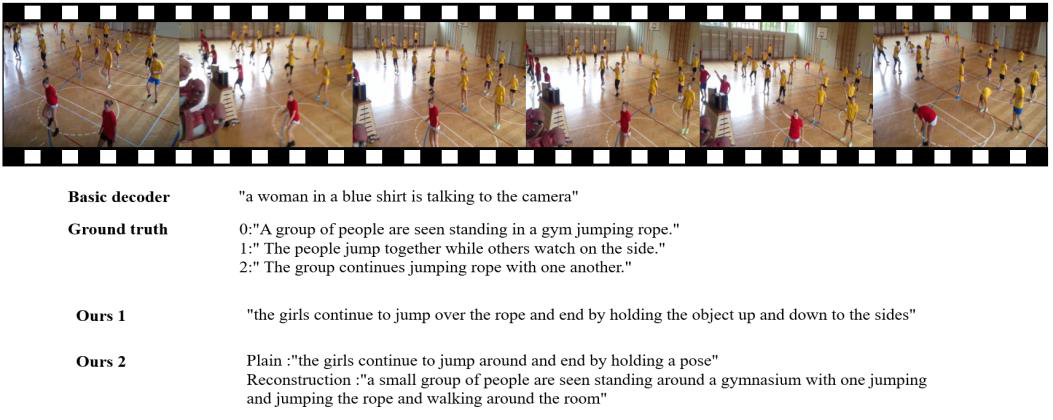

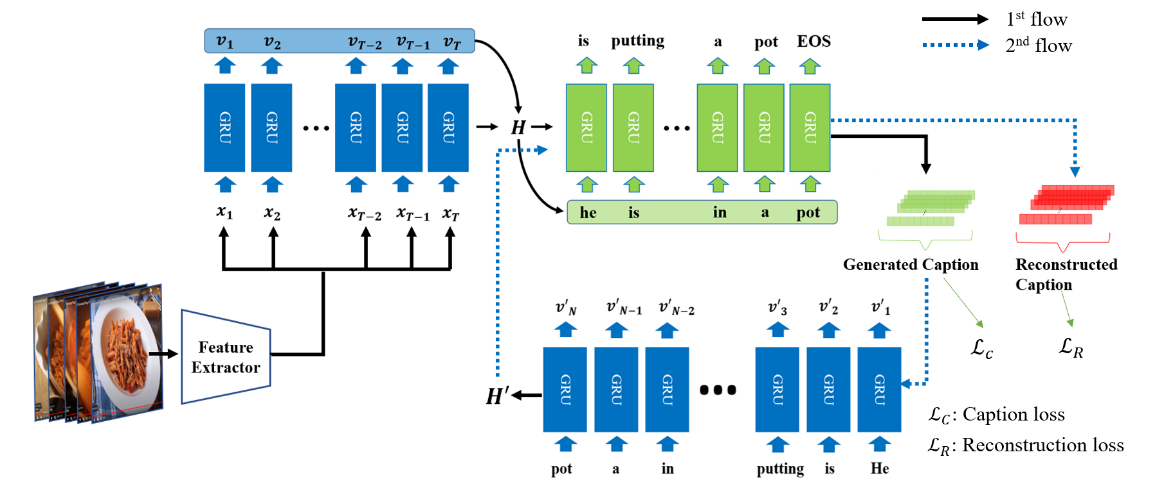

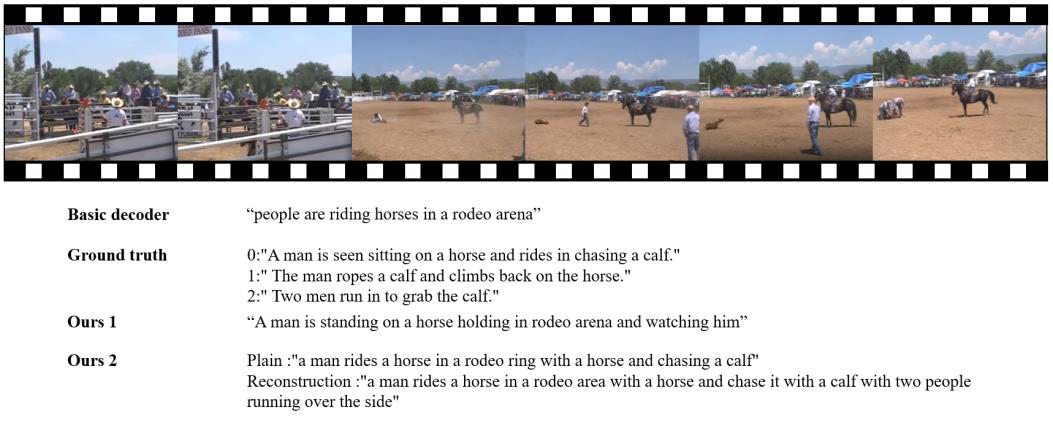

Moreover, recent approaches have low memory efficiency because they use a large number of layers or additional memory networks to create better captions. To this end, we propose a novel deep learning framework for dense video captioning.

The repository is an undergraduate thesis project on efficient caption refinement networks for dense video captioning, hosted on GitHub by kwonjunn01.

In this paper, we propose a top-down dense video captioning framework termed "Sketch, Ground, and Refine" (SGR), which first generates a video-level story and then grounds the story to video segments for further refinement.

This paper focuses on a novel and challenging vision task, dense video captioning, which aims to automatically describe a video clip with multiple informative and diverse caption sentences.

In the first round, the prompt briefly introduces the differences between structured captions and uses the dense video frames as input to generate the short caption, main object caption, background caption, camera caption, and the detailed caption.

The dense video captioning task aims to detect and describe a sequence of events in a video for detailed and coherent storytelling. Previous works mainly adopt ...

We have proposed a caption- and aesthetics-guided framework for cropping images according to the user's intention. Our framework is the first to account for the user's intention directly from the provided image caption.

KnowPC: Knowledge-Driven Programmatic Reinforcement Learning for Zero-Shot Coordination, by Yin Gu, Qi Liu, Zhi Li, Kai Zhang (arXiv). The extractor discovers environmental transition knowledge from multi-agent interaction trajectories, while the reasoner deduces the preconditions of each action primitive based on the transition knowledge.
