Github Jssprz Video Captioning Datasets Summary About Video To Text

In this repository, we organize information about more than 25 datasets of (video, text) pairs that have been used for training and evaluating video captioning models.

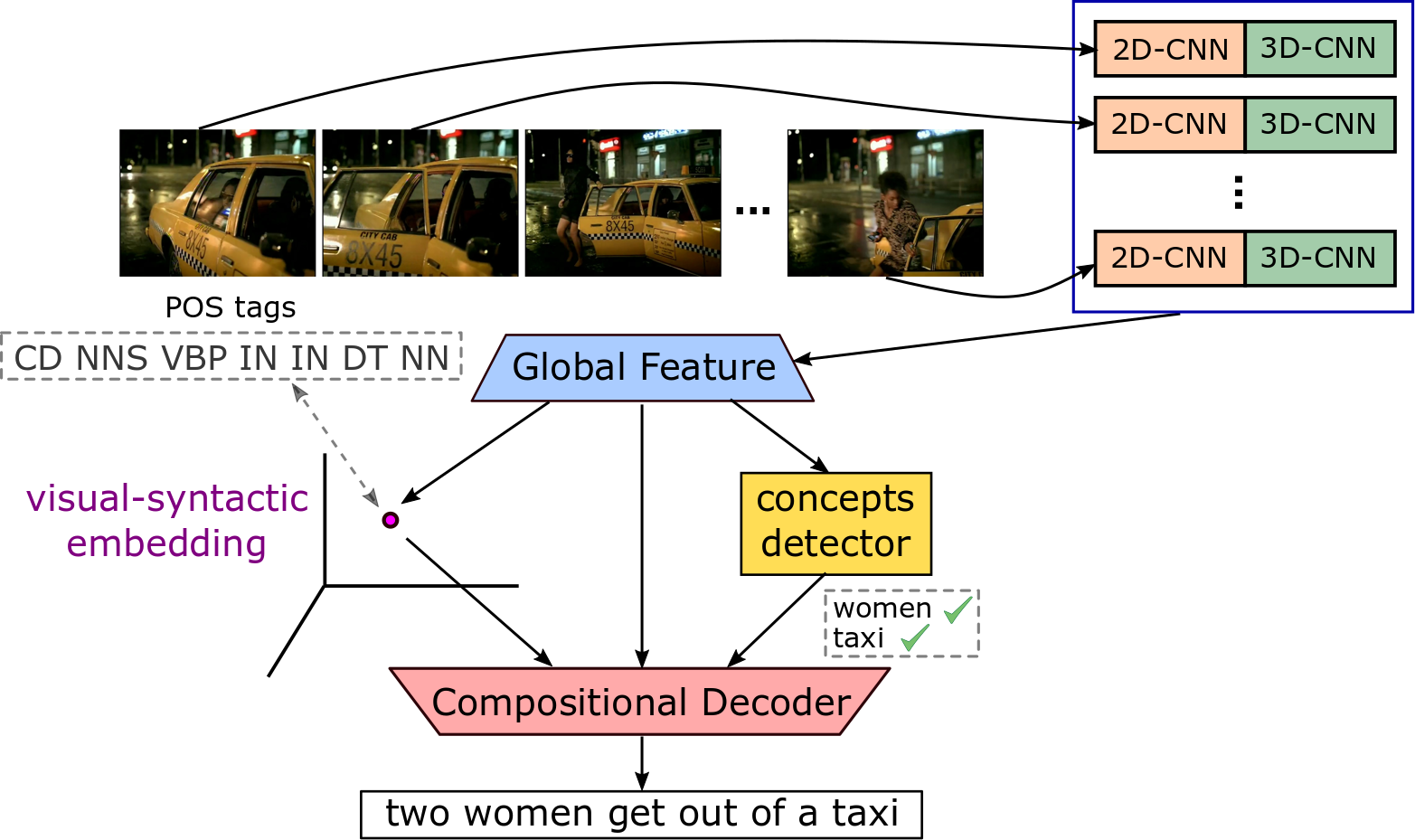

Github Jssprz Visual Syntactic Embedding Video Captioning Source

Abstract: Video captioning (VC) is a fast-moving, cross-disciplinary area of research that bridges work in the fields of computer vision, natural language processing (NLP), linguistics, and human-computer interaction. In essence, VC involves understanding a video and describing it with language.

The dataset can be used for various downstream tasks, including video summarization, video captioning, and recipe generation. Video summarization aims to shorten the original video by selecting and stitching together the most important segments.

This article explains how video captioning datasets are annotated and why precise multimodal labeling is essential for building strong video-language models. It covers segmentation, temporal grounding, object tracking, action identification, descriptive language generation, multimodal alignment, and quality control. It also explores how video captioning datasets support video search.

Dense video captioning (DVC) is divided into three sub-tasks: (1) video feature extraction, (2) temporal event localization, and (3) dense caption generation. In this survey, we discuss all of the studies that claim to perform DVC along with its sub-tasks and summarize their results.
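The three DVC sub-tasks named above can be pictured as a chain of functions. The following is only a minimal sketch under loud assumptions: every name (`Event`, `extract_features`, `localize_events`, `caption_events`, `dense_caption`) is hypothetical, frames are plain lists of pixel intensities in [0, 1], and each stage uses a toy rule in place of a learned model. It is not the method of any surveyed paper.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Event:
    """A temporally localized event (hypothetical structure for this sketch)."""
    start: int          # index of the first frame in the event (inclusive)
    end: int            # index of the last frame in the event (inclusive)
    caption: str = ""   # filled in by the captioning stage


def extract_features(frames: Sequence[Sequence[float]]) -> List[float]:
    """Sub-task 1 (toy stand-in): one scalar per frame, the mean pixel value."""
    return [sum(frame) / len(frame) for frame in frames]


def localize_events(features: Sequence[float], threshold: float = 0.5) -> List[Event]:
    """Sub-task 2 (toy stand-in): cut a new event wherever consecutive
    frame features differ by more than `threshold`."""
    if not features:
        return []
    events: List[Event] = []
    start = 0
    for i in range(1, len(features)):
        if abs(features[i] - features[i - 1]) > threshold:
            events.append(Event(start, i - 1))
            start = i
    events.append(Event(start, len(features) - 1))
    return events


def caption_events(events: List[Event], features: Sequence[float]) -> List[Event]:
    """Sub-task 3 (toy stand-in): a template sentence per event instead of
    a learned language decoder."""
    for ev in events:
        segment = features[ev.start:ev.end + 1]
        level = "bright" if sum(segment) / len(segment) > 0.5 else "dark"
        ev.caption = f"frames {ev.start}-{ev.end}: a {level} segment"
    return events


def dense_caption(frames: Sequence[Sequence[float]]) -> List[Event]:
    """Run the three stages in order."""
    features = extract_features(frames)
    return caption_events(localize_events(features), features)


if __name__ == "__main__":
    toy_video = [[0.0, 0.0], [0.1, 0.1], [0.9, 0.9], [1.0, 1.0]]
    for event in dense_caption(toy_video):
        print(event.caption)
```

In a real system each stage would be replaced by a trained component (for example a visual encoder, a proposal module, and a caption decoder); the sketch only illustrates how the output of one sub-task feeds the next.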

This paper aims to unify these efforts by introducing ViCaS, a new dataset containing thousands of challenging videos, each annotated with detailed, human-written captions and temporally consistent, pixel-accurate masks for multiple objects with phrase grounding.

Generate a detailed, vivid caption for the video, covering all categories, ensuring it's engaging, informative, and rich enough for AI to recreate the video content.

The datasets for video captioning are varied, and the majority of them are publicly available; they mainly consist of cooking or movie clips. This subsection describes each dataset and its current status, including the availability and organization of its annotation files.

Numerous approaches, datasets, and measurement metrics have been introduced in the literature, calling for a systematic survey to guide research efforts in this exciting new direction.

Github Adityarajkishan Videocaptioning This Is A Project To Upload

Github Yelsky S Image Captioning Image Captioning Using Tensorflow

Github Bhj2001 Video Captioning Scene Graph Based Video Captioning
