GitHub: jiasenlu/HieCoAttenVQA

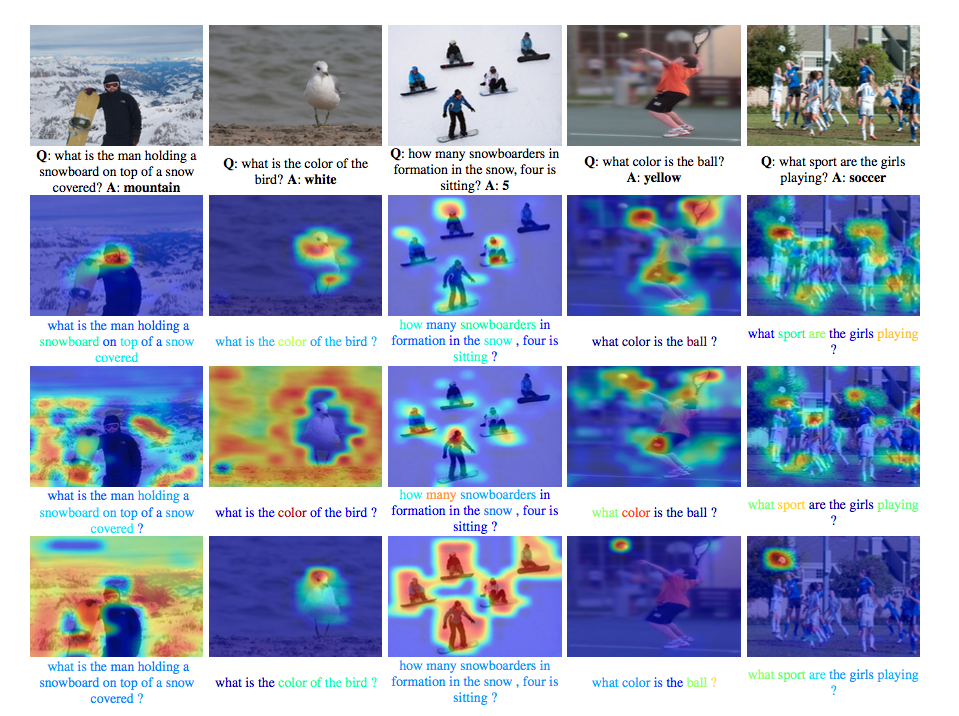

Contribute to jiasenlu/HieCoAttenVQA development by creating an account on GitHub. In this paper, we argue that in addition to modeling “where to look” (visual attention), it is equally important to model “what words to listen to” (question attention). We present a novel co-attention model for VQA that jointly reasons about image and question attention.
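
The joint reasoning about image and question attention described above can be sketched in plain Python. This is a minimal, hypothetical illustration of *parallel* co-attention built around an affinity matrix between question words and image regions. It is not the repository's Torch/Lua code. The function and weight names (`parallel_coattention`, `W_b`, `W_q`, `W_v`, `w_hq`, `w_hv`) and the random toy weights are assumptions made for illustration only.

```python
import math
import random
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply an (m x n) matrix by an (n x p) matrix."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a: Matrix) -> Matrix:
    return [list(row) for row in zip(*a)]


def tanh_m(a: Matrix) -> Matrix:
    return [[math.tanh(x) for x in row] for row in a]


def add_m(a: Matrix, b: Matrix) -> Matrix:
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def parallel_coattention(Q, V, W_b, W_q, W_v, w_hq, w_hv):
    """Q: d x T question features (one column per word).
    V: d x N image features (one column per region).
    Returns (a_q over the T words, a_v over the N regions)."""
    # Affinity matrix C (T x N): how strongly word t relates to region n.
    C = tanh_m(matmul(matmul(transpose(Q), W_b), V))
    WqQ = matmul(W_q, Q)  # k x T
    WvV = matmul(W_v, V)  # k x N
    # Image side: region features plus question features routed through C.
    H_v = tanh_m(add_m(WvV, matmul(WqQ, C)))
    # Question side: word features plus image features routed through C^T.
    H_q = tanh_m(add_m(WqQ, matmul(WvV, transpose(C))))
    # Score every column with a vector, then normalize with softmax.
    a_v = softmax(matmul([w_hv], H_v)[0])
    a_q = softmax(matmul([w_hq], H_q)[0])
    return a_q, a_v


def attend(features: Matrix, weights: List[float]) -> List[float]:
    """Attention-weighted sum of the columns of a d x M feature matrix."""
    return [sum(w * x for w, x in zip(weights, row)) for row in features]


def rand_m(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


# Toy sizes: d-dim features, k-dim hidden space, T words, N image regions.
rng = random.Random(0)
d, k, T, N = 4, 3, 5, 6
Q, V = rand_m(d, T, rng), rand_m(d, N, rng)
W_b, W_q, W_v = rand_m(d, d, rng), rand_m(k, d, rng), rand_m(k, d, rng)
w_hq, w_hv = rand_m(1, k, rng)[0], rand_m(1, k, rng)[0]

a_q, a_v = parallel_coattention(Q, V, W_b, W_q, W_v, w_hq, w_hv)
q_hat, v_hat = attend(Q, a_q), attend(V, a_v)  # attended question and image vectors
print(len(a_q), len(a_v))
```

The affinity matrix is what lets each modality guide the other: the image attention depends on the question features through `C`, and the question attention depends on the image features through its transpose.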

Image features are extracted with VGG-19: `$ th prepro_img_vgg.lua -input_json data/vqa_data_prepro.json -image_root /home/jiasenlu/data/ -cnn_proto image_model/VGG_ILSVRC_19_layers_deploy.prototxt -cnn_model image_model/VGG_ILSVRC_19_layers.caffemodel`

Hi, I am currently a staff research scientist at Apple. Previously, I was a senior research scientist at PRIOR at the Allen Institute for AI, Seattle, where I led multimodal LLM initiatives, including Unified-IO, Unified-IO 2, and Molmo. My research interests span computer vision and vision & language.
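
The `prepro_img_vgg.lua` feature-extraction step above takes four path arguments (input JSON, image root, Caffe prototxt, Caffe model). As a hypothetical convenience, the command line can be assembled in Python and printed or passed to `subprocess.run`. The helper name `vgg_feature_cmd` is invented for illustration, and the paths are reconstructed from a garbled copy of the command, so treat them as placeholders for your own checkout.

```python
import shlex
from typing import List


def vgg_feature_cmd(input_json: str, image_root: str,
                    cnn_proto: str, cnn_model: str) -> List[str]:
    """Hypothetical helper: build the argv for the VGG-19 feature-extraction step."""
    return [
        "th", "prepro_img_vgg.lua",
        "-input_json", input_json,   # preprocessed VQA questions/answers
        "-image_root", image_root,   # directory containing the raw images
        "-cnn_proto", cnn_proto,     # Caffe network definition
        "-cnn_model", cnn_model,     # Caffe pretrained weights
    ]


cmd = vgg_feature_cmd(
    input_json="data/vqa_data_prepro.json",
    image_root="/home/jiasenlu/data/",
    cnn_proto="image_model/VGG_ILSVRC_19_layers_deploy.prototxt",
    cnn_model="image_model/VGG_ILSVRC_19_layers.caffemodel",
)
print(shlex.join(cmd))  # shell-safe string, e.g. for copying into a terminal
# To run it (requires Torch and the repository): import subprocess; subprocess.run(cmd, check=True)
```

Keeping the arguments as a list rather than one string avoids quoting problems when an image path contains spaces.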

Welcome to My Homepage: Jiasen Lu's Homepage

Code: github.com/jiasenlu/HieCoAttenVQA. Related blog post: “[AI Frontier] Machine Reading Comprehension and Question Answering: Dynamic Co-attention Networks”.

Introduction: This paper proposes a new notion of co-attention over joint image and text features, so that the features of the two modalities can guide each other. In addition, the authors weight the input question text from multiple perspectives, building image-question co-attention maps at several levels: the word level, the phrase level, and the question level.

We design and test multiple game-inspired attention annotation interfaces that require the subject to sharpen regions of a blurred image to answer a question. Thus, we introduce the VQA-HAT (Human ATtention) dataset.
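
The word-, phrase-, and question-level hierarchy mentioned above can be sketched as follows. This is a minimal, hypothetical illustration rather than the repository's code. It assumes that phrase features come from 1-D convolutions over windows of one, two, and three words, followed by an element-wise max across the window sizes at each position, and it uses a plain tanh RNN as a stand-in for the question-level recurrent encoder. All names (`conv1d_window`, `phrase_features`, `question_features`) and the random toy weights are assumptions.

```python
import math
import random
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def conv1d_window(words: List[Vector], t: int, size: int, weights: List[Matrix]) -> Vector:
    """Response at position t of a 1-D convolution covering words t .. t+size-1.
    weights[j] is a (d_out x d_in) matrix applied to the j-th word in the window;
    positions past the end of the question count as zero vectors (zero padding)."""
    d_out = len(weights[0])
    out = [0.0] * d_out
    for j in range(size):
        pos = t + j
        if pos >= len(words):
            continue  # zero padding
        for o in range(d_out):
            out[o] += sum(w * x for w, x in zip(weights[j][o], words[pos]))
    return [math.tanh(v) for v in out]


def phrase_features(words: List[Vector], filters: Dict[int, List[Matrix]]) -> List[Vector]:
    """At every word position, take the element-wise max over the n-gram responses."""
    phrases = []
    for t in range(len(words)):
        responses = [conv1d_window(words, t, n, filters[n]) for n in sorted(filters)]
        phrases.append([max(vals) for vals in zip(*responses)])
    return phrases


def question_features(phrases: List[Vector], W_x: Matrix, W_h: Matrix) -> List[Vector]:
    """Plain tanh RNN over the phrase features; returns the hidden state at each step."""
    h = [0.0] * len(W_h)
    states = []
    for p in phrases:
        # The comprehension reads the previous h before it is rebound.
        h = [math.tanh(sum(a * x for a, x in zip(W_x[i], p)) +
                       sum(b * y for b, y in zip(W_h[i], h)))
             for i in range(len(h))]
        states.append(h)
    return states


rng = random.Random(1)
d, T = 4, 5  # embedding size, number of words in the toy question


def rand_matrix(rows: int, cols: int) -> Matrix:
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


words = rand_matrix(T, d)                                           # word level
filters = {n: [rand_matrix(d, d) for _ in range(n)] for n in (1, 2, 3)}
phrases = phrase_features(words, filters)                           # phrase level
question = question_features(phrases, rand_matrix(d, d), rand_matrix(d, d))  # question level
print(len(words), len(phrases), len(question))
```

Each level yields one feature vector per word position, so a co-attention map can be computed separately at every level of the hierarchy.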

The accuracy is weird · Issue #3 · jiasenlu/HieCoAttenVQA · GitHub