GitHub j2kun/exp3: Python Code for the Post "Adversarial Bandits and the Exp3 Algorithm"

GitHub Tienenkuo Python Multiple-Arm Bandits Algorithm Implement

Python code for the post "Adversarial Bandits and the Exp3 Algorithm" is available in the j2kun/exp3 repository; see README.md on its main branch.
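The repository code is not reproduced here. Below is a minimal sketch of the classic Exp3 update (Auer et al., 2002), assuming rewards in [0, 1] and a fixed exploration rate `gamma`. The names `exp3`, `exp3_probabilities`, and the `reward_fn(t, arm)` callback are hypothetical and are not taken from j2kun/exp3. Weights are kept in log space so that long runs cannot overflow `math.exp`.

```python
import math
import random

# Hypothetical names throughout: exp3, exp3_probabilities, reward_fn are illustrative only.


def exp3_probabilities(log_weights, gamma):
    """Exp3 sampling distribution: normalized weights mixed with the uniform distribution."""
    k = len(log_weights)
    top = max(log_weights)
    # Shift by the max log-weight so exp() stays in range on long runs.
    weights = [math.exp(lw - top) for lw in log_weights]
    total = sum(weights)
    return [(1.0 - gamma) * w / total + gamma / k for w in weights]


def exp3(num_arms, reward_fn, rounds, gamma, rng=None):
    """Run Exp3 for `rounds` steps and return the (arm, reward) pairs played.

    `reward_fn(t, arm)` is a hypothetical callback that must return a reward in [0, 1].
    """
    rng = rng or random.Random()
    log_weights = [0.0] * num_arms  # every weight starts at 1
    history = []
    for t in range(rounds):
        probs = exp3_probabilities(log_weights, gamma)
        arm = rng.choices(range(num_arms), weights=probs)[0]
        reward = reward_fn(t, arm)
        # Importance-weighted estimate x_hat = x / p is unbiased for the chosen arm;
        # unplayed arms get an estimate of 0, so their weights do not change.
        estimate = reward / probs[arm]
        # Multiplicative update w <- w * exp(gamma * x_hat / K), done in log space.
        log_weights[arm] += gamma * estimate / num_arms
        history.append((arm, reward))
    return history
```

For example, `exp3(3, lambda t, arm: 1.0 if arm == 2 else 0.0, 2000, 0.1, random.Random(0))` should play arm 2 in most rounds. Because of the `gamma / k` mixing term, every arm still keeps a probability of at least `gamma / k`.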

GitHub bgalbraith/bandits: Python Library for Multi-Armed Bandits

The j2kun/exp3 repository, which holds the Python code for the post "Adversarial Bandits and the Exp3 Algorithm", also includes a `first example.txt` file on its main branch. This post explores four algorithms for solving the multi-armed bandit problem: epsilon-greedy, Exp3, Bayesian UCB, and UCB1. It gives implementations in Python and discusses experimental results on the MovieLens 25M dataset. One way to achieve a regret bound of $O(\sqrt{Tn})$ is to use the Tsallis entropy as the regularizer in mirror descent. This matches the $\Omega(\sqrt{Tn})$ regret lower bound for adversarial multi-armed bandits. Exp3A is an average-based implementation that builds on the classic Exp3 algorithm (Auer et al., 2002). In our experiments, we use the Exp3-IX variant (Neu, 2015), which achieves better empirical performance through implicit exploration rather than forced exploration.
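To illustrate "implicit rather than forced exploration", here is a hedged sketch of Exp3-IX as described by Neu (2015). This is not the code used in the experiments above. It assumes losses in [0, 1]. Arms are sampled directly from exponential weights with no uniform mixing. The importance-weighted loss estimate uses `p + gamma` in its denominator, which bounds each estimate by `1 / gamma`. The function name `exp3_ix`, the `loss_fn(t, arm)` callback, and the default `gamma = eta / 2` are illustrative choices.

```python
import math
import random

# Hypothetical names: exp3_ix and loss_fn are illustrative, not from any library.


def exp3_ix(num_arms, loss_fn, rounds, eta, gamma=None, rng=None):
    """Exp3-IX sketch for losses in [0, 1]; returns the (arm, loss) pairs played."""
    if gamma is None:
        gamma = eta / 2.0  # gamma = eta / 2 follows the choice in Neu (2015)
    rng = rng or random.Random()
    cum_loss_est = [0.0] * num_arms  # cumulative loss estimates per arm
    history = []
    for t in range(rounds):
        # p_i is proportional to exp(-eta * est_i); subtracting the min keeps exp() stable.
        low = min(cum_loss_est)
        weights = [math.exp(-eta * (est - low)) for est in cum_loss_est]
        total = sum(weights)
        probs = [w / total for w in weights]  # no uniform mixing, unlike Exp3
        arm = rng.choices(range(num_arms), weights=probs)[0]
        loss = loss_fn(t, arm)
        # Implicit exploration: gamma in the denominator slightly underestimates
        # the loss but keeps every estimate at most 1 / gamma.
        cum_loss_est[arm] += loss / (probs[arm] + gamma)
        history.append((arm, loss))
    return history
```

The design trade-off is a small downward bias in the loss estimates in exchange for much lower variance. According to Neu (2015), this is what yields the improved high-probability guarantees.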

GitHub PlaytikaOSS/pybandits: Python Library for Multi-Armed Bandits

In this section, we define the adversarial bandit setting addressed in this paper and describe the baseline algorithm, Exp3, together with its regret upper bound. We first sketch one proof of the Exp3 bound, which reduces to the proof for the Hedge algorithm from the last lecture. We then follow the discussion in Lattimore (p. 155) (1) to give a second proof with an improved bound.

Except as otherwise noted, the content of this page is licensed under the Creative Commons Attribution 4.0 License, and code samples are licensed under the Apache 2.0 License.

For the moment, we have implemented two naive bandit strategies: the greedy strategy (also called Follow the Leader, FTL) and a strategy that explores arms uniformly at random (UniformExploration).
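The two naive strategies can be sketched as follows. The class names mirror the text, but the `select_arm` / `update` interface is a hypothetical choice for illustration and is not the API of any library mentioned here. FTL plays each arm once and then always picks the best empirical mean. UniformExploration ignores feedback entirely.

```python
import random

# Sketch only: the class names mirror the text; the select_arm/update interface is hypothetical.


class FollowTheLeader:
    """Greedy strategy: play each arm once, then always the arm with the best empirical mean."""

    def __init__(self, num_arms):
        self.counts = [0] * num_arms
        self.sums = [0.0] * num_arms

    def select_arm(self):
        for arm, count in enumerate(self.counts):
            if count == 0:
                return arm  # try every arm once before acting greedily
        means = [s / c for s, c in zip(self.sums, self.counts)]
        return max(range(len(means)), key=means.__getitem__)  # ties go to the lowest index

    def update(self, arm, reward):
        self.counts[arm] += 1
        self.sums[arm] += reward


class UniformExploration:
    """Ignore all feedback and pick an arm uniformly at random every round."""

    def __init__(self, num_arms, rng=None):
        self.num_arms = num_arms
        self.rng = rng or random.Random()

    def select_arm(self):
        return self.rng.randrange(self.num_arms)

    def update(self, arm, reward):
        pass  # this strategy never learns
```

With fixed rewards `[0.2, 0.9, 0.5]`, FTL plays arms 0, 1, 2 once and then sticks with arm 1. Neither strategy balances exploration and exploitation: FTL never revisits an arm that looked bad early, and uniform exploration never exploits. This is why they serve only as baselines.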

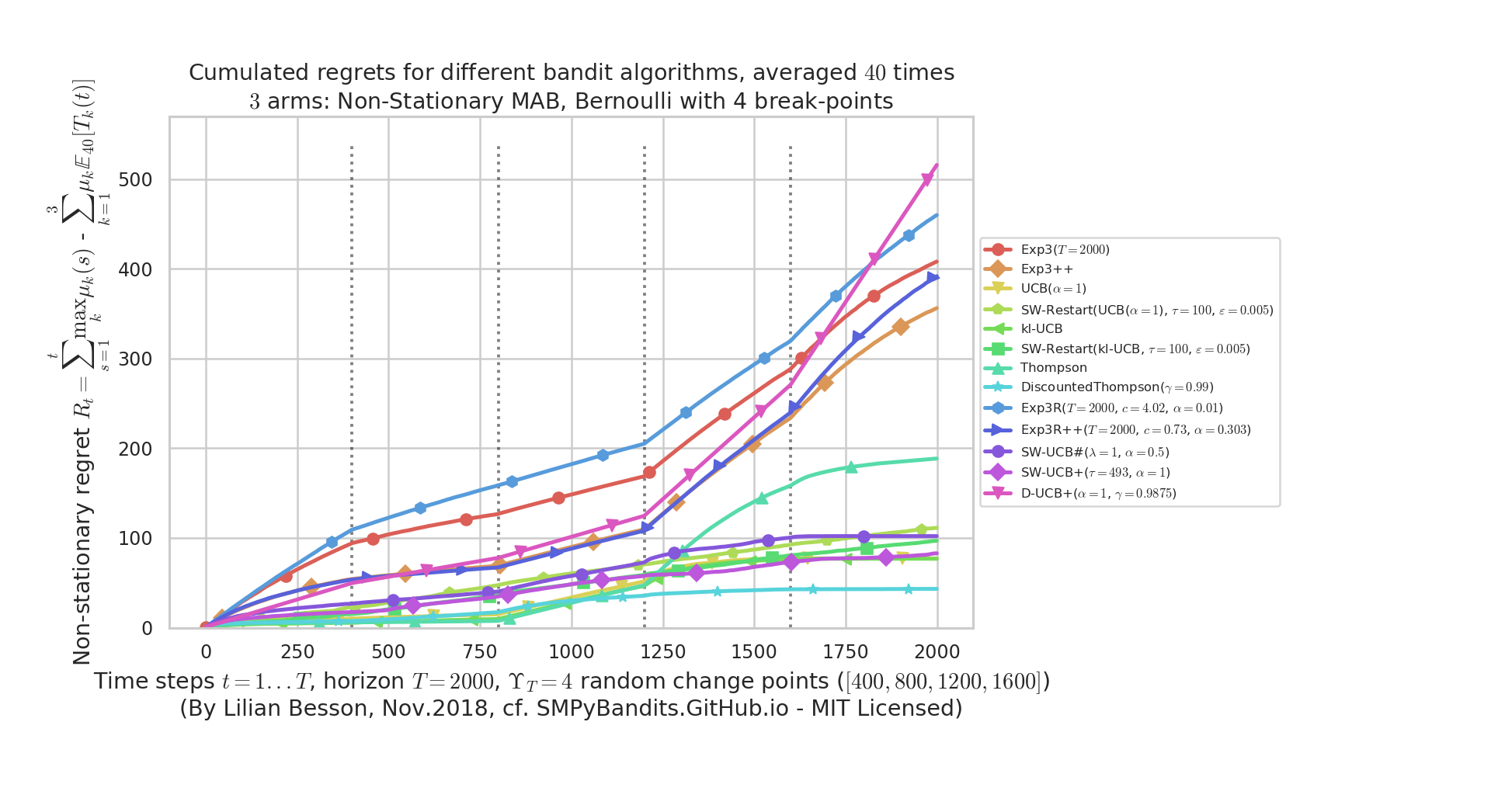

Implement Exp3 R To Tackle Switching Bandits Issue 100

Bandit Simulations Python Contextual Bandits Notebooks Linucb Hybrid
