Github Iamaaditya Pixel Deflection Deflecting Adversarial Attacks

Deflecting adversarial attacks with pixel deflection (iamaaditya/pixel-deflection). Use a class-activation-type map (R-CAM) to select the pixel to deflect. The less important a pixel is for classification, the higher the chance that it will be deflected.
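The map-guided selection described above can be sketched roughly as follows. This is a minimal illustration, not the repository's code: the function name `pixel_deflection`, the parameters `attempts` and `window`, the grayscale nested-list image format, and the clamping at image borders are all assumptions made for the example. The `importance` argument stands in for an R-CAM-style map scaled to [0, 1].

```python
import random

def pixel_deflection(img, importance, attempts=100, window=2, rng=None):
    """Return a copy of img with some pixels replaced by a nearby pixel.

    img        -- 2D list of pixel values (grayscale, assumed for simplicity)
    importance -- 2D list of values in [0, 1], same shape as img
                  (hypothetical stand-in for an R-CAM-style map)
    attempts   -- number of candidate pixels to sample
    window     -- maximum offset, in pixels, of the replacement neighbour
    """
    rng = rng or random.Random()
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]  # work on a copy; the input is not mutated
    for _ in range(attempts):
        y, x = rng.randrange(h), rng.randrange(w)
        # The more important the pixel, the more likely it is left alone.
        if rng.random() < importance[y][x]:
            continue
        # Pick a random neighbour inside the window, clamped to the image.
        ny = min(max(y + rng.randint(-window, window), 0), h - 1)
        nx = min(max(x + rng.randint(-window, window), 0), w - 1)
        out[y][x] = out[ny][nx]
    return out
```

With an importance map of all ones every candidate is skipped and the image comes back unchanged; with all zeros every sampled pixel is overwritten by a neighbour, so no new pixel values are introduced.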

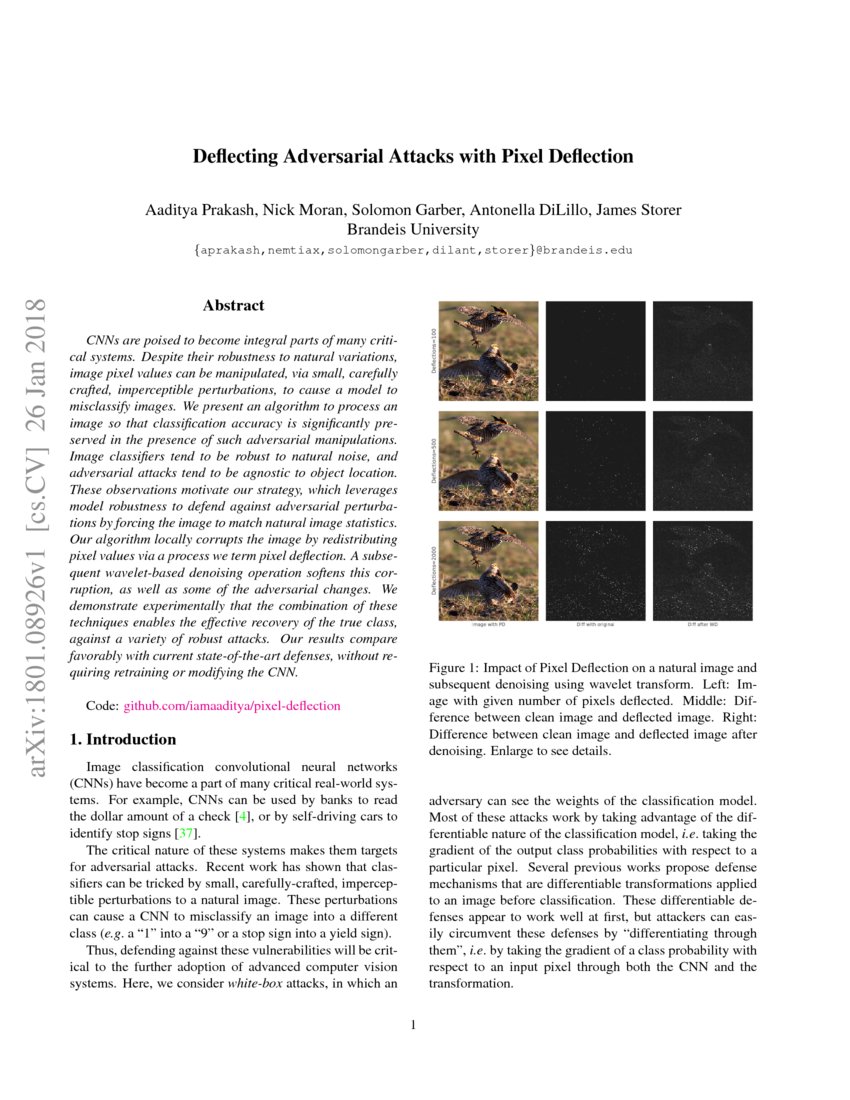

Motivated by the robustness of CNNs and the fragility of adversarial attacks, we have presented a technique which combines a computationally efficient image transform, pixel deflection, with soft wavelet denoising. We present an algorithm to process an image so that classification accuracy is significantly preserved in the presence of such adversarial manipulations. Image classifiers tend to be robust to natural noise, and adversarial attacks tend to be agnostic to object location.
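To make "soft" wavelet denoising concrete, here is a small sketch of soft thresholding applied to the detail coefficients of a one-level Haar transform on a single row of pixels. The choice of the Haar wavelet, the 1D setting, the fixed threshold `t`, and the function names are assumptions for illustration only; the text above does not say which wavelet or threshold rule the authors use.

```python
import math

def soft_threshold(value, t):
    """Shrink value toward zero by t; anything within [-t, t] becomes 0."""
    return math.copysign(max(abs(value) - t, 0.0), value)

def haar_denoise_row(row, t):
    """One-level Haar transform of an even-length row, soft-threshold the
    detail coefficients, then invert the transform."""
    if len(row) % 2 != 0:
        raise ValueError("row length must be even for this sketch")
    s = math.sqrt(2.0)
    # Forward transform: pairwise averages (approx) and differences (detail).
    approx = [(row[i] + row[i + 1]) / s for i in range(0, len(row), 2)]
    detail = [(row[i] - row[i + 1]) / s for i in range(0, len(row), 2)]
    # Small differences are treated as noise and shrunk toward zero.
    detail = [soft_threshold(d, t) for d in detail]
    # Inverse transform.
    out = []
    for a, d in zip(approx, detail):
        out.extend([(a + d) / s, (a - d) / s])
    return out
```

With `t = 0` the row is reconstructed (up to floating-point error); with a very large `t` every pair of neighbouring pixels is replaced by its mean, which shows how thresholding smooths local corruption.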

Our algorithm locally corrupts the image by redistributing pixel values via a process we term pixel deflection. A subsequent wavelet-based denoising operation softens this corruption, as well as some of the adversarial changes.

Paper: arxiv.org/pdf/1801.08926.pdf

Demo: iamaaditya.github.io 2018 02 demo for pixel defle.


