GitHub: echen/unsupervised-language-identification, an unsupervised language identification algorithm in Ruby

Given a set of strings from different languages, build a detector for the majority language (often, but not necessarily, English). This is an unsupervised language identification algorithm in Ruby, originally built for detecting English-language tweets.
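The text above does not spell out the algorithm, so the following is only a minimal sketch of one way to build such a detector, not code taken from the repository. It assumes a two-cluster mixture of character-trigram models fit with expectation-maximization (EM), and it treats the cluster that absorbs the most training strings as the majority language. The class name `MajorityLanguageSketch`, its methods, and its parameters (`n_clusters`, `iterations`, `seed`, `threshold`) are all hypothetical.

```ruby
# Hypothetical sketch: mixture of character-trigram models trained with EM.
# Illustrative only; this is not the repository's implementation.
class MajorityLanguageSketch
  SMOOTHING = 0.1 # additive smoothing so unseen trigrams keep non-zero probability

  def initialize(n_clusters: 2, iterations: 20, seed: 42)
    @k = n_clusters
    @iterations = iterations
    @rng = Random.new(seed) # fixed seed keeps runs repeatable
  end

  # Split a string into overlapping, lowercased character trigrams.
  def trigrams(text)
    padded = "  #{text.downcase} "
    (0..padded.length - 3).map { |i| padded[i, 3] }
  end

  # Fit the mixture on unlabeled strings and pick the majority cluster.
  def train(strings)
    docs = strings.map { |s| trigrams(s) }
    @vocab_size = docs.flatten.uniq.size
    # Start from random soft assignments, normalized per string.
    resp = docs.map do
      raw = Array.new(@k) { @rng.rand + 0.01 }
      total = raw.sum
      raw.map { |r| r / total }
    end
    @iterations.times do
      m_step(docs, resp)                     # re-estimate trigram counts and priors
      resp = docs.map { |d| posterior(d) }   # E-step: recompute soft assignments
    end
    totals = (0...@k).map { |c| resp.sum { |r| r[c] } }
    @majority = totals.index(totals.max)     # cluster with the most total weight
    self
  end

  # Posterior probability that the text belongs to the majority cluster.
  def majority_probability(text)
    posterior(trigrams(text))[@majority]
  end

  def majority?(text, threshold: 0.5)
    majority_probability(text) >= threshold
  end

  private

  # M-step: responsibility-weighted trigram counts and cluster priors.
  def m_step(docs, resp)
    @counts = Array.new(@k) { Hash.new(0.0) }
    @totals = Array.new(@k, 0.0)
    @priors = Array.new(@k, 0.0)
    docs.each_with_index do |grams, i|
      @k.times do |c|
        weight = resp[i][c]
        @priors[c] += weight
        @totals[c] += weight * grams.size
        grams.each { |g| @counts[c][g] += weight }
      end
    end
    n = docs.size.to_f
    @priors.map! { |p| p / n }
  end

  # Normalized cluster posteriors for one string, computed in log space.
  def posterior(grams)
    logs = (0...@k).map do |c|
      denom = @totals[c] + SMOOTHING * (@vocab_size + 1)
      prior = [@priors[c], 1e-12].max # floor avoids log(0) if a cluster empties
      Math.log(prior) + grams.sum { |g| Math.log((@counts[c][g] + SMOOTHING) / denom) }
    end
    max = logs.max
    exps = logs.map { |l| Math.exp(l - max) }
    z = exps.sum
    exps.map { |e| e / z }
  end
end

tweets = [
  "what a lovely day in the park",
  "going to the store with my friends",
  "this is the best coffee in town",
  "hoy hace mucho calor en la ciudad",
  "i love watching the game on sunday"
]
model = MajorityLanguageSketch.new.train(tweets)
puts model.majority_probability("the weather is nice today")
```

With so few training strings the clusters are not guaranteed to line up with languages; a sketch like this needs a large, mixed corpus before its output means much. The fixed `seed` only makes the random initialization repeatable.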

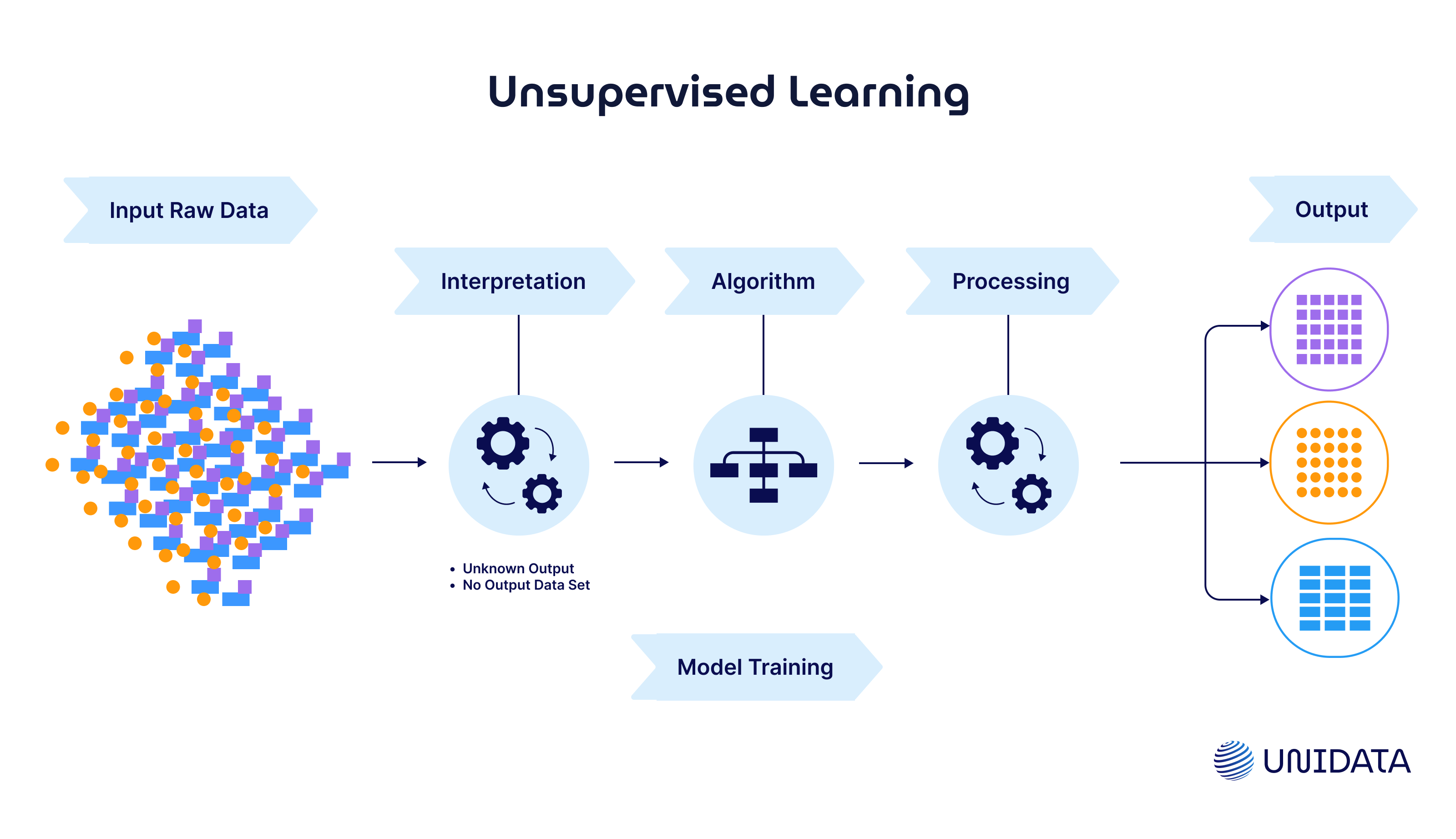

Hodoscope, a tool that operationalizes unsupervised monitoring by drawing an analogy to unsupervised learning, is introduced, and the behavior descriptions discovered through Hodoscope could improve the detection accuracy of LLM-based judges, demonstrating a path from unsupervised to supervised monitoring. Existing approaches to monitoring AI agents rely on supervised evaluation: human written.

The International Conference on Learning Representations (ICLR) is one of the top machine learning conferences in the world. The 2026 event will be held in Rio de Janeiro, Brazil, starting on April 22nd. To facilitate rapid community engagement with the presented research, we have compiled an extensive index of accepted papers that have associated public code or data repositories. We list all.

To address this challenge, we introduce a new unsupervised algorithm, Internal Coherence Maximization (ICM), to fine-tune pretrained language models on their own generated labels, without external supervision.

Week 10 update: unsupervised ML techniques. This week focused on uncovering hidden patterns in unlabeled data using unsupervised learning techniques like clustering and association rule mining.

For an unsupervised machine learning model to identify patterns or structures within unlabeled data, it applies algorithms that discover inherent groupings, correlations, or low dimensional.

Protein language models (PLMs) provide powerful sequence representations, yet their effectiveness for unsupervised viral clade assignment remains uncertain. In this study, we evaluated embeddings from ProtT5, ProtBert, CARP, and several ESM-2 variants on influenza A H3N2 hemagglutinin sequences. Using dimensionality reduction (t-SNE, UMAP, PCA, MDS) and clustering with HDBSCAN, we compared PLM.

Abstract: Cross-lingual speech emotion recognition (SER) is frequently hindered by speaker-specific prosodic variations that obscure universal emotional cues. Standard models often fail to generalize across languages due to the domain shift caused by differing acoustic standards. To address this, we present a novel SER approach that integrates unsupervised speaker adaptation directly at.
