Github Deepai Comm Skills Regularized Task Decomposition For Multi

We present a novel multi-task offline RL model that decomposes tasks into achievable subtasks through quality-aware joint learning of skills and tasks. The model keeps the learned policies robust when the training data mixes datasets of different quality. The code is hosted on GitHub in the deepai comm repository for skills regularized task decomposition for multi task offline reinforcement learning.

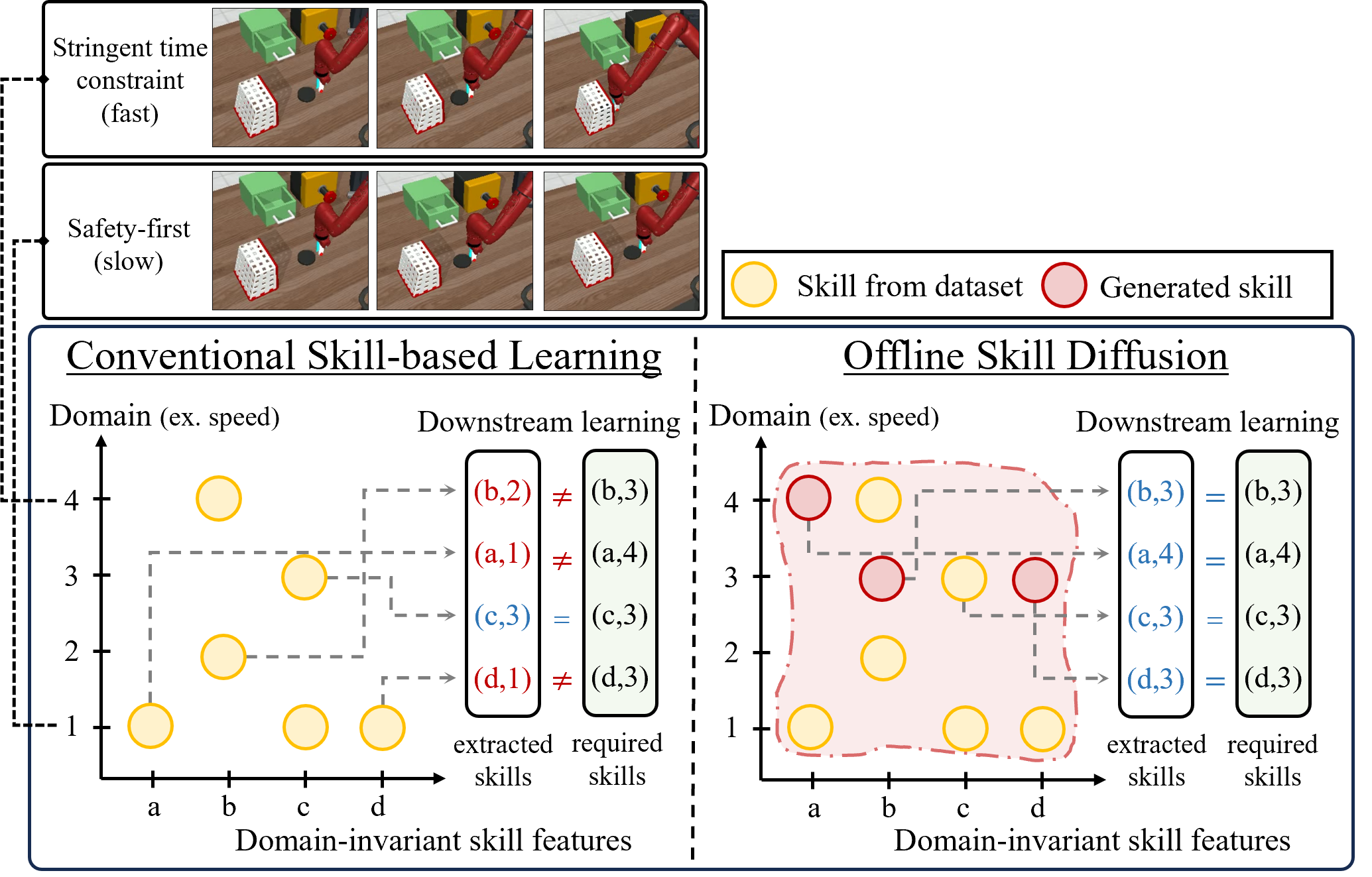

To learn the shareable knowledge across those datasets effectively, we employ a task decomposition method in which common skills are jointly learned and used as guidance to reformulate a task into shared and achievable subtasks. When the baseline term V_q(s) is omitted, and up to a constant, the weighted skill-regularized loss in (4) pushes subtask embeddings to match high-quality skills for a given task, which facilitates the decomposition of the task into shareable and achievable subtasks.

A related work develops a simple technique for data sharing in multi-task offline RL that routes data based on the improvement over the task-specific data. It achieves the best or comparable performance relative to prior offline multi-task RL methods and previous data-sharing approaches.
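The idea of matching subtask embeddings to high-quality skills can be illustrated with a small sketch. This is not the paper's loss (4): the softmax-over-returns weighting, the squared-distance penalty, and the names `quality_weights`, `weighted_skill_regularizer`, and `temperature` are all assumptions chosen only to show how a quality weight can scale a skill-matching term.

```python
import math


def quality_weights(returns, temperature=1.0):
    """Hypothetical quality weighting: softmax over episode returns,
    so samples from higher-return episodes receive larger weights."""
    max_return = max(returns)  # subtract the max for numerical stability
    exps = [math.exp((r - max_return) / temperature) for r in returns]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_skill_regularizer(subtask_embs, skill_embs, returns, temperature=1.0):
    """Quality-weighted sum of squared distances between each subtask
    embedding and its paired skill embedding (an illustrative stand-in
    for a weighted skill-regularized loss)."""
    weights = quality_weights(returns, temperature)
    loss = 0.0
    for w, z_sub, z_skill in zip(weights, subtask_embs, skill_embs):
        sq_dist = sum((a - b) ** 2 for a, b in zip(z_sub, z_skill))
        loss += w * sq_dist
    return loss


if __name__ == "__main__":
    subtasks = [[0.0, 0.0], [1.0, 1.0]]
    skills = [[0.0, 0.0], [0.0, 0.0]]
    # Equal returns give equal weights; a higher return on the second
    # sample makes its mismatch dominate the penalty.
    print(weighted_skill_regularizer(subtasks, skills, returns=[0.0, 0.0]))
    print(weighted_skill_regularizer(subtasks, skills, returns=[0.0, 10.0]))
```

Under this sketch, a mismatch between a subtask embedding and a skill learned from high-return data costs more than the same mismatch against a skill from low-return data, so minimizing the term pulls subtasks toward the higher-quality skills.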

The paper presents a novel method for multi-task offline reinforcement learning (RL) called skills regularized task decomposition (SRTD). SRTD learns a set of reusable skills that can be efficiently combined to solve multiple tasks, improving sample efficiency.
