Getting Empty Buffers When Calling createTFLiteSIMDModule (Issue #717)

Providing the solution here for the benefit of the community: the issue was resolved by updating the files (ref link).
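
The resolution above does not say which files were updated. One thing that can be checked independently is whether the WebAssembly binary that ships next to the vendored loader actually reaches the browser. The sketch below is only a diagnostic under that assumption: `checkWasmBytes` is a hypothetical helper, not part of the Jitsi code, and it relies solely on the fact that every WebAssembly binary starts with the 4-byte magic `\0asm` followed by a 4-byte version.

```typescript
// WebAssembly binaries begin with the magic bytes 0x00 'a' 's' 'm',
// followed by a 4-byte version field.
const WASM_MAGIC: readonly number[] = [0x00, 0x61, 0x73, 0x6d];

type WasmCheck = "ok" | "empty" | "not-wasm";

// Hypothetical helper: classify a downloaded buffer before handing it
// to a module factory. It does not validate the module, only its header.
function checkWasmBytes(bytes: Uint8Array): WasmCheck {
  if (bytes.length === 0) {
    return "empty"; // nothing was downloaded at all
  }
  if (bytes.length < 8) {
    return "not-wasm"; // too short to hold magic + version
  }
  const magicMatches = WASM_MAGIC.every((b, i) => bytes[i] === b);
  // A dev server that falls back to index.html typically lands here,
  // because the payload starts with "<!DOCTYPE" or "<html".
  return magicMatches ? "ok" : "not-wasm";
}
```

In a browser, the bytes could come from `new Uint8Array(await (await fetch(url)).arrayBuffer())`, where `url` is wherever your project serves the `.wasm` file. An `"empty"` or `"not-wasm"` result suggests a bundling or serving problem rather than a problem in the model itself.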

For custom ops, a configuration buffer is provided, containing a FlexBuffer that maps parameter names to their values. The buffer is empty for builtin ops because the interpreter has already parsed the op parameters.

If you are still facing issues after checking these points, it may help to isolate the problem: reduce the code to just model loading, resizing, and a single inference step, then gradually add complexity back to identify where the issue lies.

This page explains how TensorFlow Lite models are loaded and converted from FlatBuffer format for inference on ESP32 devices. It covers the process of transforming serialized model data into runtime structures that the TFLite Micro framework can use for execution.

As with any complex software, users often encounter errors that disrupt their workflow and require troubleshooting. This guide provides an overview of common TensorFlow errors and offers tips for resolving them.
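
When isolating a loading problem as suggested above, a cheap first step is to confirm that the bytes being loaded are a TFLite FlatBuffer at all. The TFLite schema declares the file identifier `TFL3`, and FlatBuffers stores a file identifier at byte offsets 4 to 7. The helper name `hasTfliteIdentifier` is hypothetical, and the check is only a sanity test on the header, not a full model verification.

```typescript
// ASCII codes for "TFL3", the file identifier declared in the TFLite schema.
const TFLITE_IDENTIFIER: readonly number[] = [0x54, 0x46, 0x4c, 0x33];

// Hypothetical helper: returns true when the buffer is long enough to hold
// a FlatBuffer header and carries the "TFL3" identifier at offsets 4..7.
function hasTfliteIdentifier(model: Uint8Array): boolean {
  if (model.length < 8) {
    return false; // empty or truncated buffer
  }
  for (let i = 0; i < TFLITE_IDENTIFIER.length; i++) {
    if (model[4 + i] !== TFLITE_IDENTIFIER[i]) {
      return false;
    }
  }
  return true;
}
```

If this returns `false` for a buffer you believed to be a model, the problem lies in how the file was fetched or read, before the interpreter is involved.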

Troubleshooting ModuleNotFoundError for tflite_runtime on Raspberry Pi

That memory planner looks at the entire graph of a model and tries to reuse as many buffers as possible to create the smallest length for the head. The tensor buffers for this section can be accessed via a TfLiteEvalTensor or TfLiteTensor instance on the tflite::MicroInterpreter.

With the TFLite runtime, the use of external RAM is not managed automatically. That said, you can generate the code and modify the linker script to place the activation buffer in external SDRAM (with, of course, an impact on performance).

Is there any chance we can fix this issue at the root cause? We found the same problem when saving a Keras model with tf.saved_model.save(model) and then converting the SavedModel to TFLite.

After downloading the Jitsi open source React project, I ran it like this. I analyzed the source code, and it implements the virtual background using the file below: import createTFLiteSIMDModule from './vendor/tflite/tflite-simd'; However, when I moved it to another React project and ran it, the following error occurred.
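
To make the buffer reuse performed by the memory planner mentioned above more concrete, here is a simplified sketch of greedy, lifetime-based planning. It is an illustration only, not the TFLite Micro implementation: the `BufferRequest` shape, the `planArena` function, and the first-fit strategy are all assumptions chosen for clarity. Buffers whose lifetimes never overlap may share the same offset.

```typescript
interface BufferRequest {
  name: string;
  size: number;     // bytes needed
  firstUse: number; // index of the first op that touches the buffer
  lastUse: number;  // index of the last op that touches it (inclusive)
}

interface Placement extends BufferRequest {
  offset: number;   // byte offset inside the shared arena
}

// Two buffers conflict only if they are alive during a common op.
function lifetimesOverlap(a: BufferRequest, b: BufferRequest): boolean {
  return a.firstUse <= b.lastUse && b.firstUse <= a.lastUse;
}

// Place larger buffers first; each goes at the lowest offset that does not
// collide with an already placed buffer that is alive at the same time.
function planArena(requests: BufferRequest[]): { placements: Placement[]; arenaSize: number } {
  const bySize = requests.slice().sort((a, b) => b.size - a.size);
  const placements: Placement[] = [];
  for (const req of bySize) {
    const conflicts = placements
      .filter((p) => lifetimesOverlap(p, req))
      .sort((a, b) => a.offset - b.offset);
    let offset = 0;
    for (const c of conflicts) {
      if (offset + req.size <= c.offset) {
        break; // the gap before this conflicting buffer is big enough
      }
      offset = Math.max(offset, c.offset + c.size);
    }
    placements.push({ ...req, offset });
  }
  const arenaSize = placements.reduce((max, p) => Math.max(max, p.offset + p.size), 0);
  return { placements, arenaSize };
}

// Three tensors in a chain: "a" and "c" are never alive together.
const plan = planArena([
  { name: "a", size: 100, firstUse: 0, lastUse: 1 },
  { name: "b", size: 50, firstUse: 1, lastUse: 2 },
  { name: "c", size: 100, firstUse: 2, lastUse: 3 },
]);
```

In this example the three tensors would need 250 bytes if each had its own region, but because "a" and "c" share offset 0 the planned arena is 150 bytes.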

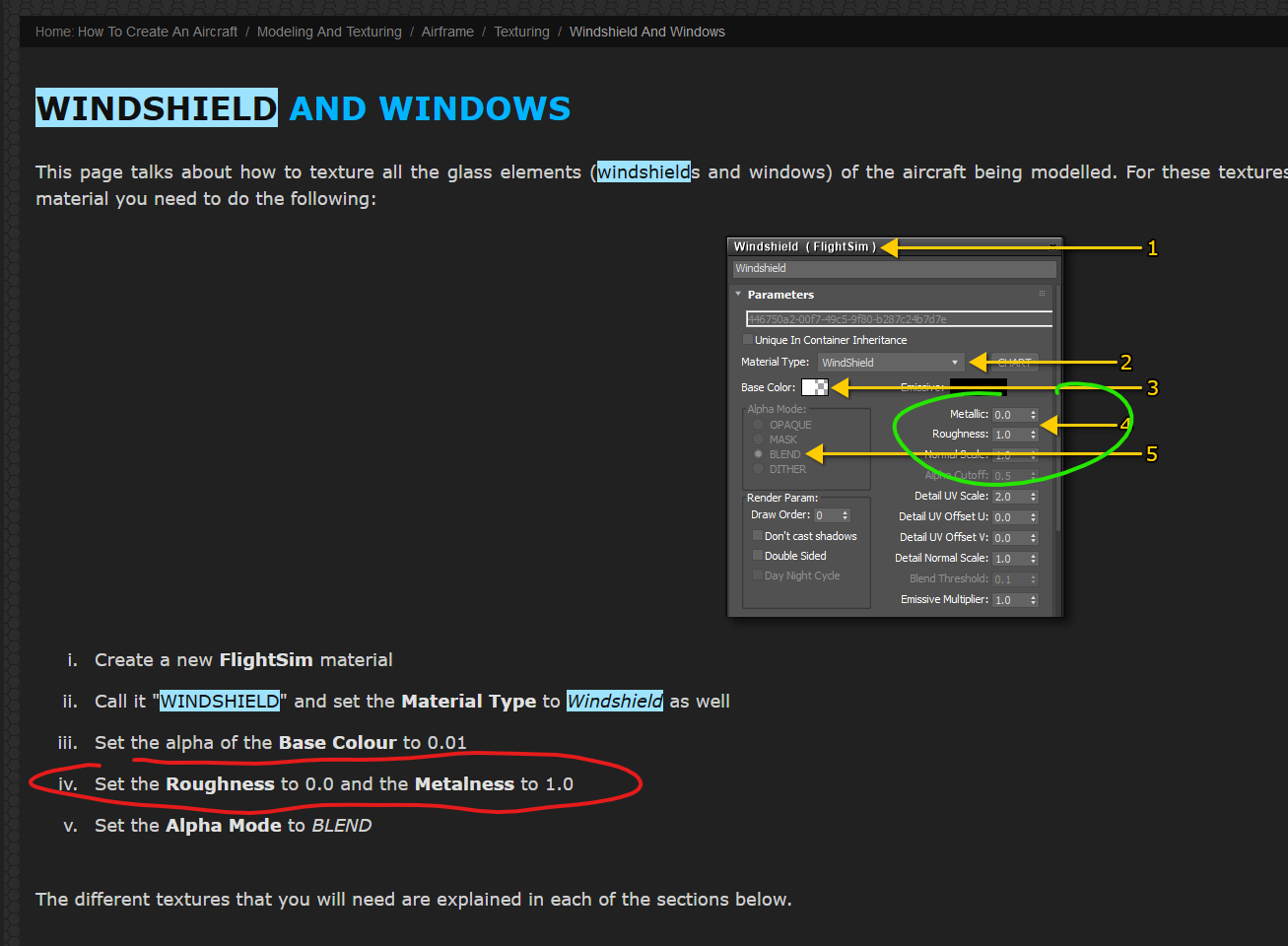
