GeoEval Benchmark for Evaluating LLMs and Multi-Modal Models on Geometry Problem Solving

To address this gap, we introduce the GeoEval benchmark, a comprehensive collection that includes a main subset of 2,000 problems, a 750-problem subset focusing on backward reasoning, an augmented subset of 2,000 problems, and a hard subset of 300 problems.
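The subset sizes listed above can be tallied with a minimal sketch. The dictionary keys and function names here are hypothetical labels chosen for illustration, not identifiers from the benchmark itself, and the sum treats the four subsets as counted separately.

```python
# Subset sizes as listed in the description above.
# The key names are hypothetical labels, not official identifiers.
GEOEVAL_SUBSET_SIZES = {
    "main": 2000,
    "backward": 750,
    "augmented": 2000,
    "hard": 300,
}


def total_entries(subset_sizes):
    """Sum the per-subset problem counts."""
    return sum(subset_sizes.values())


def subset_shares(subset_sizes):
    """Return each subset's fraction of the summed count."""
    total = total_entries(subset_sizes)
    return {name: size / total for name, size in subset_sizes.items()}


if __name__ == "__main__":
    print(total_entries(GEOEVAL_SUBSET_SIZES))
    for name, share in subset_shares(GEOEVAL_SUBSET_SIZES).items():
        print(f"{name}: {share:.1%}")
```

Counted this way, the four subsets add up to 5,050 entries. Whether any problems are shared between subsets is not stated in the description, so the figure should be read as a sum of subset sizes rather than a count of unique problems.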

The GeoEval benchmark is specifically designed for assessing the ability of models to solve geometric math problems. It features five characteristics: comprehensive variety, varied problems, dual inputs, diverse challenges, and complexity ratings.

A separate work constructs a large-scale benchmark, Geometry3K, consisting of 3,002 geometry problems with dense annotation in formal language, and proposes a geometry solving approach based on formal language and symbolic reasoning, called Interpretable Geometry Problem Solver (Inter-GPS). Another work converts diagrams into basic textual clauses to describe diagram features effectively, and proposes a neural solver called PGPSNet to fuse multi-modal information efficiently.

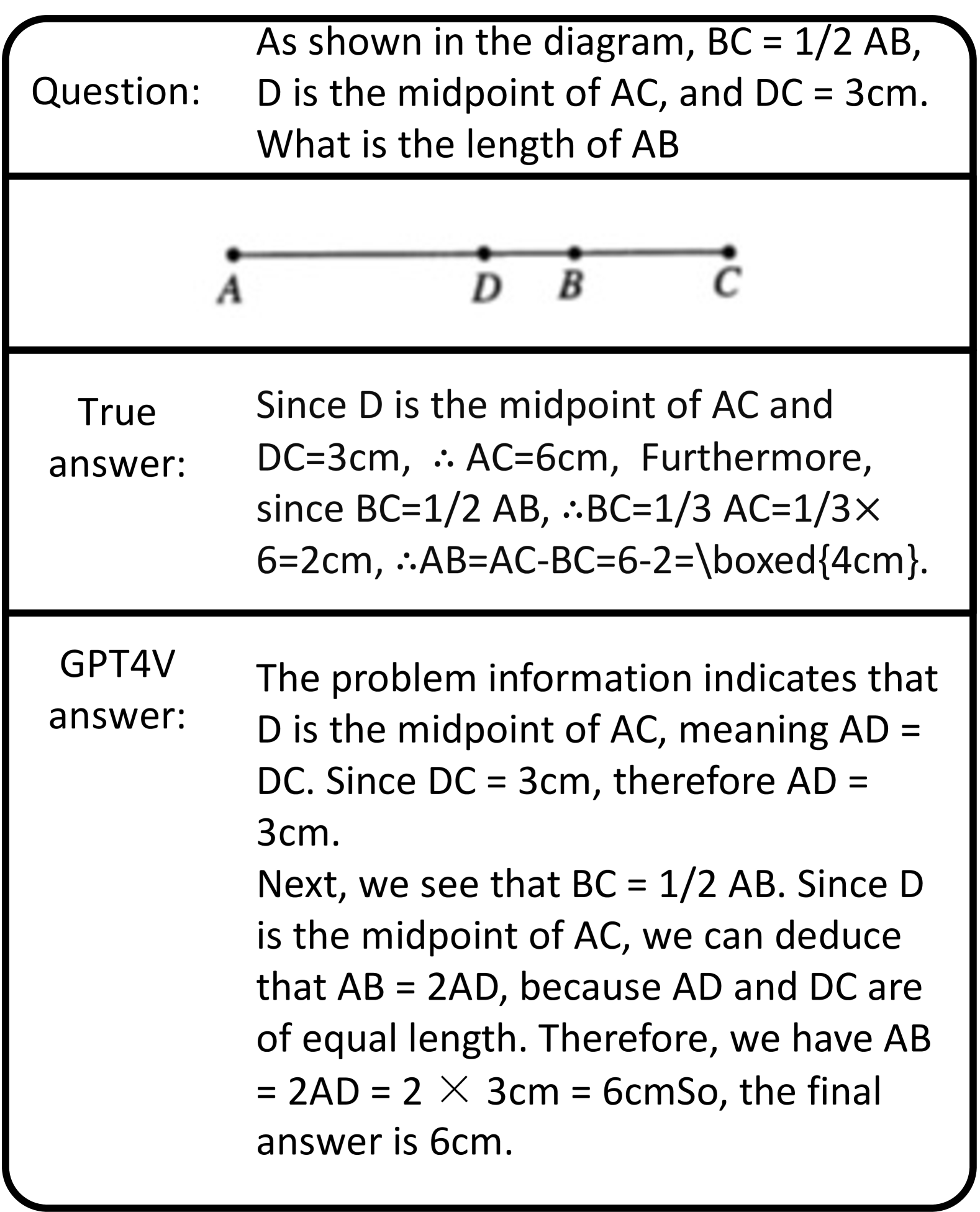

This study, while providing significant insights into the capabilities of large language models (LLMs) and multi-modal models (MMs) in solving geometry problems, has several limitations.

A related abstract presents NoReGeo, a benchmark designed to evaluate the intrinsic geometric understanding of LLMs without relying on reasoning or algebraic computation. Another notes that the remarkable progress of multi-modal large language models (MLLMs) has garnered unparalleled attention due to their superior performance in visual contexts, while their capabilities in visual math problem solving remain insufficiently evaluated and understood.
