Gaze Mapping For Mobile Video Based Eye Tracking Lincoln Centre For

In this project, you are going to develop a computer-vision-based system that maps the gaze location along a sequence of images extracted from eye tracking footage recorded with a mobile device (glasses) in a dynamic scene. To help you preprocess your data, this repository includes tools that preprocess raw data from a select number of mobile eye tracking devices. You can find them in the preprocessing directory.
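Mobile eye trackers commonly sample gaze faster than the scene camera records frames. A first step in mapping gaze along a sequence of images is therefore usually to assign each gaze sample to a video frame. The minimal sketch below assumes a hypothetical preprocessed format of `(timestamp in seconds, x, y)` tuples, with coordinates normalised to [0, 1], and a constant frame rate. The actual output of the preprocessing tools may differ, and the function names are illustrative only.

```python
from bisect import bisect_left
from typing import Dict, List, Tuple

# Hypothetical sample format: (timestamp in seconds, x, y) with x, y normalised to [0, 1].
GazeSample = Tuple[float, float, float]


def frame_timestamps(n_frames: int, fps: float, t0: float = 0.0) -> List[float]:
    """Timestamp of every video frame, assuming a constant frame rate."""
    return [t0 + i / fps for i in range(n_frames)]


def nearest_frame(t: float, frame_times: List[float]) -> int:
    """Index of the frame whose timestamp is closest to t (frame_times must be sorted)."""
    i = bisect_left(frame_times, t)
    if i == 0:
        return 0
    if i == len(frame_times):
        return len(frame_times) - 1
    # Choose whichever neighbouring frame is closer in time.
    return i if frame_times[i] - t < t - frame_times[i - 1] else i - 1


def assign_gaze_to_frames(samples: List[GazeSample], frame_times: List[float],
                          frame_w: int, frame_h: int) -> Dict[int, List[Tuple[float, float]]]:
    """Group gaze samples by nearest frame and convert them to pixel coordinates."""
    per_frame: Dict[int, List[Tuple[float, float]]] = {}
    for t, x, y in samples:
        idx = nearest_frame(t, frame_times)
        per_frame.setdefault(idx, []).append((x * frame_w, y * frame_h))
    return per_frame
```

Nearest-timestamp assignment is the simplest choice. If gaze and video clocks are offset, the offset has to be corrected (for example via `t0`) before grouping.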

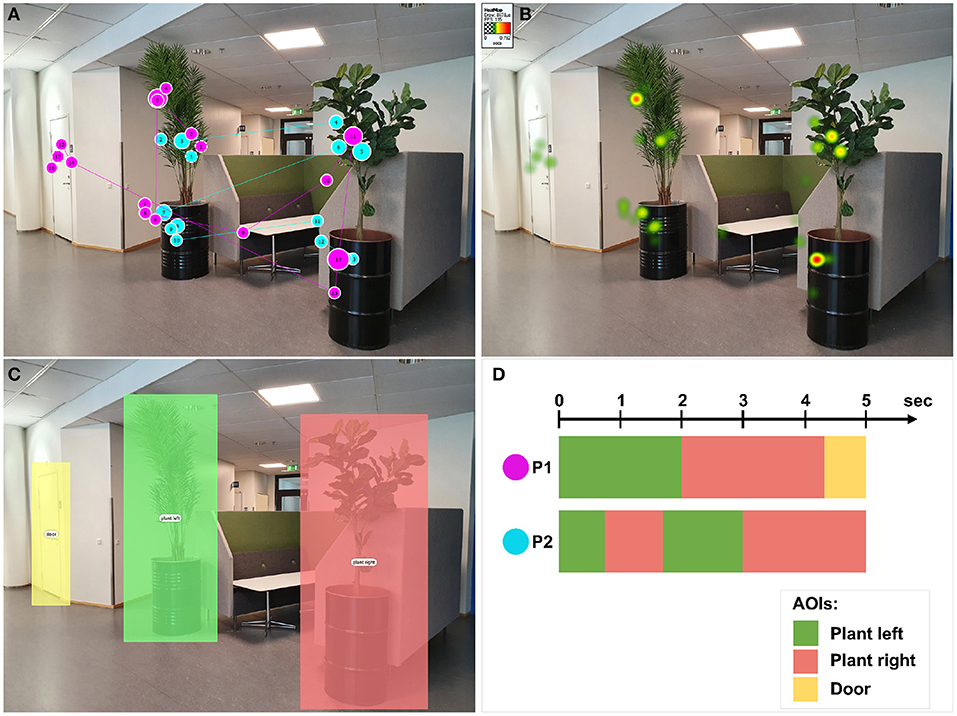

Figure: The eye tracking video gaze point.

Automated methods that map eye tracking data to a world-centered reference frame (e.g., screens and tabletops) are available. These methods usually rely on fiducial markers. However, such mapping methods can be difficult to implement, expensive, and specific to one eye tracker.

By importing the data from all respondents into iMotions, the gaze data can be mapped onto a defined image frame from the recording. In the resulting display, the respondent's view is shown on the right, and the gaze is superimposed onto the image on the left.

There are two major approaches to video-camera-based eye tracking: model-based and appearance-based. Model-based approaches calculate the point of gaze using a 3D model of the eye and the reflected infrared patterns on the cornea. Appearance-based approaches instead learn a mapping directly from images of the eye to gaze.

Eye tracking, a fundamental process in gaze analysis, involves measuring the point of gaze or eye motion. It is crucial in numerous applications, including human–computer interaction (HCI), education, health care, and virtual reality.
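The following heavily simplified sketch illustrates the geometry behind the model-based approach. It assumes an eye model has already produced the 3D eyeball centre and pupil centre in some camera coordinate system. The gaze ray is then approximated by the optical axis through those two points, and the point of gaze is where that ray meets a plane such as a screen. Real model-based trackers estimate these quantities from corneal reflections of infrared illuminators and calibrate for the offset between the optical and visual axes. Both steps are omitted here, and the function and parameter names are hypothetical.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def gaze_point_on_plane(eye_center: Vec3, pupil_center: Vec3,
                        plane_point: Vec3, plane_normal: Vec3) -> Optional[Vec3]:
    """Intersect the ray from the eyeball centre through the pupil centre
    (a simplified optical axis) with a plane; None if there is no hit in front."""
    direction = _sub(pupil_center, eye_center)
    denom = _dot(plane_normal, direction)
    if abs(denom) < 1e-12:
        return None  # ray is parallel to the plane
    t = _dot(plane_normal, _sub(plane_point, eye_center)) / denom
    if t < 0:
        return None  # plane lies behind the eye
    return (eye_center[0] + t * direction[0],
            eye_center[1] + t * direction[1],
            eye_center[2] + t * direction[2])
```

For example, with the eyeball centre at the origin, the pupil centre at (0.1, 0.05, 1.0) and a screen plane at z = 50 (all in cm), the ray hits the screen at approximately (5.0, 2.5, 50.0).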

Figure S5: Gaze decoding evaluated using camera-based eye tracking for …

Eye tracking is the process of measuring where one is looking (point of gaze) or the motion of an eye relative to the head. Researchers have developed many algorithms and techniques to track gaze position and direction automatically, which are useful in different applications.

We describe (and make freely available) an automated analysis pipeline for mapping gaze data from an egocentric coordinate system (i.e., the wearable eye tracker) to a fixed reference coordinate system (i.e., a target stimulus in the environment). This toolkit addresses the cost and complexity of marker-based mapping by automatically identifying the target stimulus in every frame of the recording and mapping the gaze positions to a fixed representation of the stimulus. It does this by finding matching keypoints between the reference stimulus and each frame of the video.

The pervasiveness of handheld devices with powerful cameras has now made it possible to have high-quality eye tracking right in our pockets. The paper implemented here reports an error of 0.6–1° at a viewing distance of 25–40 cm on a smartphone.
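The keypoint-based mapping from video frame to reference stimulus can be sketched for the common case of a planar stimulus (a poster, screen, or page). In that case, frame and reference are related by a 3×3 homography. This is a minimal pure-Python sketch under that assumption, not the toolkit's own code. It solves for the homography exactly from four point correspondences (fixing the bottom-right entry to 1) and then maps a gaze point from frame pixels into reference-image pixels.

In practice, the correspondences would come from many matched keypoints that are noisy and include outliers. A robust estimator such as RANSAC (for example OpenCV's `cv2.findHomography` with the `cv2.RANSAC` flag) would then replace the exact four-point solve. The function names here are illustrative.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix3 = List[List[float]]


def solve_linear(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[pivot][col]) < 1e-12:
            raise ValueError("degenerate correspondences (e.g. three collinear points)")
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = s / M[r][r]
    return x


def homography_from_4_points(src: Sequence[Point], dst: Sequence[Point]) -> Matrix3:
    """Direct linear transform for exactly four correspondences src[i] -> dst[i]."""
    A: List[List[float]] = []
    b: List[float] = []
    for (x, y), (u, v) in zip(src, dst):
        # u * (h31 x + h32 y + 1) = h11 x + h12 y + h13, and likewise for v.
        A.append([x, y, 1.0, 0.0, 0.0, 0.0, -u * x, -u * y])
        b.append(u)
        A.append([0.0, 0.0, 0.0, x, y, 1.0, -v * x, -v * y])
        b.append(v)
    h = solve_linear(A, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def map_point(H: Matrix3, x: float, y: float) -> Point:
    """Apply a homography to one point (e.g. a gaze sample) in homogeneous coordinates."""
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)
```

Estimating the homography in the frame-to-reference direction places every gaze sample in one fixed coordinate system. Gaze from the whole recording, or from many participants, can then be aggregated on the reference image. With many correspondences, normalising the point coordinates first (Hartley normalisation) improves numerical stability.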

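The reported smartphone accuracy of 0.6–1° at 25–40 cm can be expressed as a distance on the screen. An angular error θ at viewing distance d corresponds to roughly e = d·tan θ, assuming the line of sight is close to perpendicular to the screen. The helper below is a small sketch of that conversion (the function name is hypothetical).

```python
import math


def angular_error_to_distance(theta_deg: float, viewing_distance: float) -> float:
    """Approximate on-screen gaze error for an angular error theta_deg,
    assuming the line of sight is roughly perpendicular to the display.
    The result is in the same unit as viewing_distance."""
    return viewing_distance * math.tan(math.radians(theta_deg))


if __name__ == "__main__":
    for theta in (0.6, 1.0):
        for distance_cm in (25.0, 40.0):
            err_mm = angular_error_to_distance(theta, distance_cm) * 10.0
            print(f"{theta:.1f} deg at {distance_cm:.0f} cm -> {err_mm:.1f} mm")
```

For the reported range, this gives about 2.6 mm on the screen for 0.6° at 25 cm, up to about 7.0 mm for 1° at 40 cm.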
