Flexible Latent Variable Models For Multi Task Learning

Our framework not only generalizes standard single-task learning methods but also supports a set of flexible latent variable models. Experiments on both simulated datasets and real-world classification datasets show the effectiveness of the proposed models in two evaluation settings: a standard multi-task learning setting and a transfer learning setting.
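One way to see how a latent variable model can contain single-task learning as a special case is a hierarchical prior in which every task's weight vector is drawn around a shared latent mean w0. The sketch below is illustrative only and is not the framework described here. It assumes linear regression tasks, a squared-error loss, and a penalty lam * ||w_t - w0||^2 that stands in for a Gaussian prior; the helper name `fit_hierarchical_mtl` is hypothetical.

```python
import numpy as np


def fit_hierarchical_mtl(Xs, ys, lam, n_iter=50):
    """MAP fit of per-task linear weights that share a latent prior mean w0.

    Hypothetical helper. Minimizes
        sum_t ||y_t - X_t w_t||^2 + lam * sum_t ||w_t - w0||^2
    by alternating closed-form updates of each w_t and of w0.
    lam = 0 decouples the tasks (independent least squares per task).
    """
    d = Xs[0].shape[1]
    w0 = np.zeros(d)
    W = np.zeros((len(Xs), d))
    for _ in range(n_iter):
        # Update each task's weights given the current shared mean.
        for t, (X, y) in enumerate(zip(Xs, ys)):
            A = X.T @ X + lam * np.eye(d)
            b = X.T @ y + lam * w0
            W[t] = np.linalg.solve(A, b)
        # Update the shared mean given all task weights (its exact minimizer).
        w0 = W.mean(axis=0)
    return W, w0


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d, n_tasks = 3, 4
    base = rng.normal(size=d)
    Xs = [rng.normal(size=(20, d)) for _ in range(n_tasks)]
    # Related tasks: small per-task perturbations of one common weight vector.
    ys = [X @ (base + 0.1 * rng.normal(size=d)) + 0.01 * rng.normal(size=20) for X in Xs]
    W_single, _ = fit_hierarchical_mtl(Xs, ys, lam=0.0)
    W_shared, w0 = fit_hierarchical_mtl(Xs, ys, lam=10.0)
    print("between-task spread, lam=0: ", np.var(W_single, axis=0).sum())
    print("between-task spread, lam=10:", np.var(W_shared, axis=0).sum())
```

With `lam = 0` each row of the result matches an ordinary per-task least-squares fit, which is the single-task baseline; as `lam` grows, the task weights are pulled toward their shared mean and the between-task spread shrinks.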

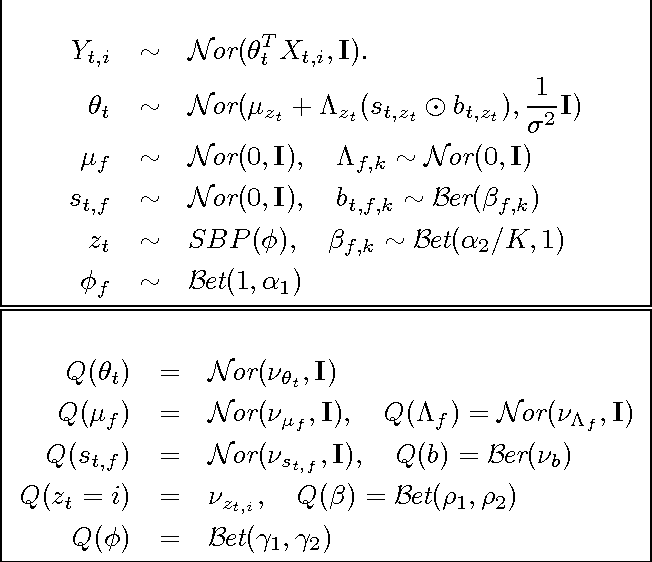

Flexible Modeling Of Latent Task Structures In Multitask Learning

In this paper we propose a probabilistic framework that can support a set of latent variable models for different multi-task learning scenarios.

Issue title: Special Issue on Inductive Transfer Learning. Editors: Daniel L. Silver (Jodrey School of Computer Science, Acadia University, Wolfville, NS B4P 5R6, Canada) and Kristin P. Bennett (Department of Mathematical Sciences, Rensselaer Polytechnic Institute, Troy, NY 12180-3590, United States).

Abstract: Multitask learning algorithms are typically designed assuming some fixed, a priori known latent structure shared by all the tasks. However, it is usually unclear what type of latent task structure is the most appropriate for a given multitask learning problem.

Leveraging the generalization power of diffusion models, we extend the partial learning setup to a zero-shot setting, training a multi-task model on multiple synthetic datasets, each labeled for only a subset of tasks. Our method, StableMTL, repurposes image generators for latent regression.
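Training on datasets that are each labeled for only a subset of tasks is commonly handled with a masked loss: each task's error is averaged only over the examples that actually carry a label for that task. The following is a generic sketch, not StableMTL's training objective; the function name `masked_multitask_loss`, the squared-error loss, and the equal weighting of tasks are assumptions made for illustration.

```python
import numpy as np


def masked_multitask_loss(preds, targets, mask):
    """Squared error averaged over labeled entries only, then across tasks.

    Hypothetical helper.
    preds, targets: arrays of shape (n_examples, n_tasks).
    mask: boolean array of the same shape, True where a label exists.
    Unlabeled targets may hold any placeholder (e.g. NaN); they are ignored.
    """
    preds = np.asarray(preds, dtype=float)
    targets = np.asarray(targets, dtype=float)
    mask = np.asarray(mask, dtype=bool)
    # Zero the error wherever the label is missing, so NaN placeholders never propagate.
    sq_err = np.where(mask, (preds - targets) ** 2, 0.0)
    counts = mask.sum(axis=0)  # labeled examples per task
    labeled = counts > 0       # tasks with at least one label in this batch
    if not labeled.any():
        return 0.0
    per_task = sq_err.sum(axis=0)[labeled] / counts[labeled]
    # Macro-average: every labeled task gets equal weight regardless of label count.
    return float(per_task.mean())


if __name__ == "__main__":
    nan = float("nan")
    loss = masked_multitask_loss(
        preds=[[1.0, 2.0], [3.0, 4.0]],
        targets=[[1.0, nan], [1.0, 5.0]],  # task 1 is unlabeled for the first example
        mask=[[True, False], [True, True]],
    )
    print(loss)  # 1.5
```

Averaging per task before averaging across tasks keeps a task with few labels from being drowned out by a densely labeled one; a per-entry average is an equally reasonable alternative.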

Time Contrastive Learning For Latent Variable Models

Machine Learning is an international forum focusing on computational approaches to learning. It reports substantive results on a wide range of learning methods.

Nonparametric Estimation Of Multi View Latent Variable Models
