Fine-Tuning Large Language Models: Deploying and Serving

Fine-Tuning and Deploying Large Language Models over Edges: Issues and ...

This work provides a comprehensive overview of prevalent memory-efficient fine-tuning methods for deployment at the network edge. It also reviews state-of-the-art literature on model compression, offering insights into deploying LLMs at network edges.

This blog post explains how to deploy and serve a fine-tuned large language model using different methods, such as cloud platforms, containers, and APIs. It also covers the pros and cons of each method, along with best practices and tips for optimizing model performance and efficiency.
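As a rough illustration of the API route mentioned above, the sketch below exposes a model behind a JSON endpoint using only the Python standard library. Everything in it is an assumption made for illustration: the `/generate` path, the `prompt` field, and the `generate` stub, which stands in for a real fine-tuned model. It is not a production server.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate(prompt: str) -> str:
    """Hypothetical stand-in for the fine-tuned model's inference call."""
    return f"[stub completion for: {prompt[:40]}]"


def handle_generate(body: bytes):
    """Validate a JSON request body and return (HTTP status, response dict)."""
    try:
        payload = json.loads(body)
    except ValueError:  # covers invalid JSON and undecodable bytes
        return 400, {"error": "body must be valid JSON"}
    if not isinstance(payload, dict):
        return 400, {"error": "body must be a JSON object"}
    prompt = payload.get("prompt")
    if not isinstance(prompt, str) or not prompt:
        return 400, {"error": "'prompt' must be a non-empty string"}
    return 200, {"completion": generate(prompt)}


class GenerateHandler(BaseHTTPRequestHandler):
    """Routes POST /generate to handle_generate (path name is an assumption)."""

    def do_POST(self):
        if self.path != "/generate":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, response = handle_generate(self.rfile.read(length))
        data = json.dumps(response).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(host: str = "127.0.0.1", port: int = 8000) -> None:
    """Start the blocking HTTP server; call this explicitly to run the service."""
    HTTPServer((host, port), GenerateHandler).serve_forever()
```

Keeping the request logic in `handle_generate`, separate from the HTTP handler, makes it easy to test without opening a socket. The same function could sit behind a container entry point or a managed cloud endpoint.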

Fine-Tuning Large Language Models (LLMs) in 2024

Since the release of GPT-2 (1.5B parameters) in 2019, large language models (LLMs) have transitioned from specialized models to versatile foundation models. LLMs exhibit impressive zero-shot ability; however, they require fine-tuning on local datasets and significant resources for deployment.

Deploying and fine-tuning these models can be complex but rewarding. This blog post walks through the deployment process and the fine-tuning techniques used to optimize LLM performance.

Fine-tuning LLMs has become increasingly practical within enterprise settings. Recent advances in both training procedures and serving infrastructure have dramatically lowered the barriers to creating domain-specific AI solutions.
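To make the "significant resources" point concrete, here is a back-of-the-envelope memory estimate for a 1.5B-parameter model. The byte counts are common rules of thumb rather than measurements: the 16 bytes per parameter for full fine-tuning assumes mixed-precision training with Adam, and activation memory is ignored entirely.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def full_finetune_memory_gb(num_params: int) -> float:
    """Rough training-state memory, excluding activations.

    Assumption: mixed-precision Adam, i.e. per parameter
    fp16 weights (2) + fp16 gradients (2) + fp32 master weights (4)
    + two fp32 Adam moments (8) = 16 bytes.
    """
    return num_params * 16 / 1e9


num_params = 1_500_000_000  # the 1.5B-parameter model size cited above
for dtype in ("fp32", "fp16", "int8"):
    print(f"{dtype} weights: {weight_memory_gb(num_params, dtype):.1f} GB")
print(f"full fine-tuning state: {full_finetune_memory_gb(num_params):.1f} GB")
```

Under these assumptions, serving the weights in fp16 takes about 3 GB, while full fine-tuning needs roughly 24 GB before activations, which is the gap that memory-efficient fine-tuning and compression aim to close.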

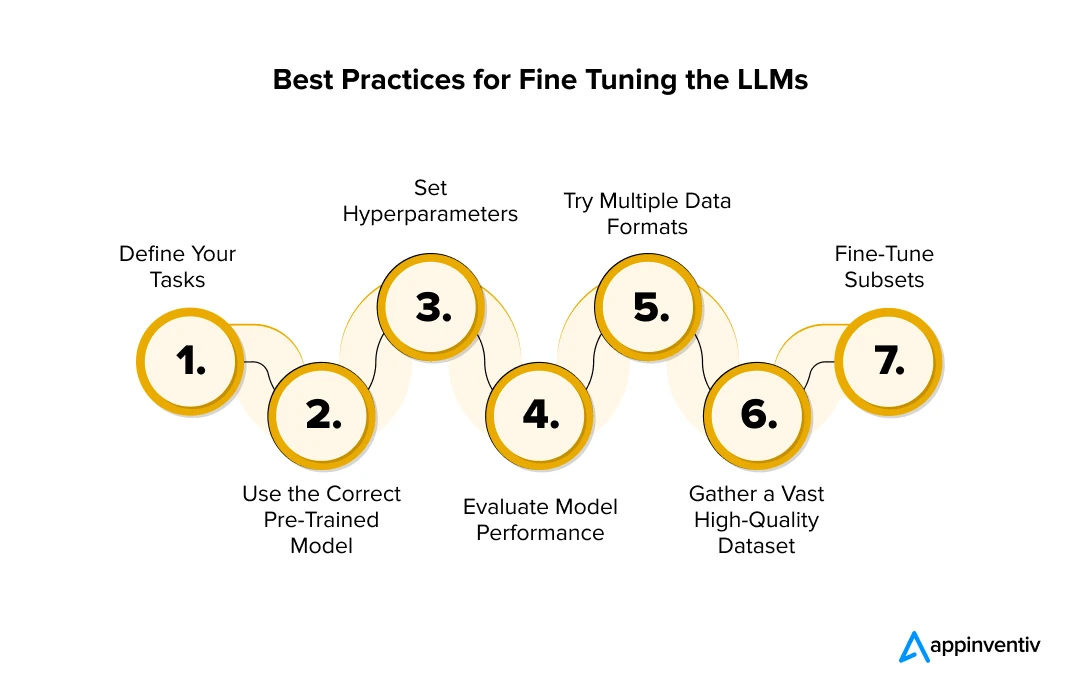

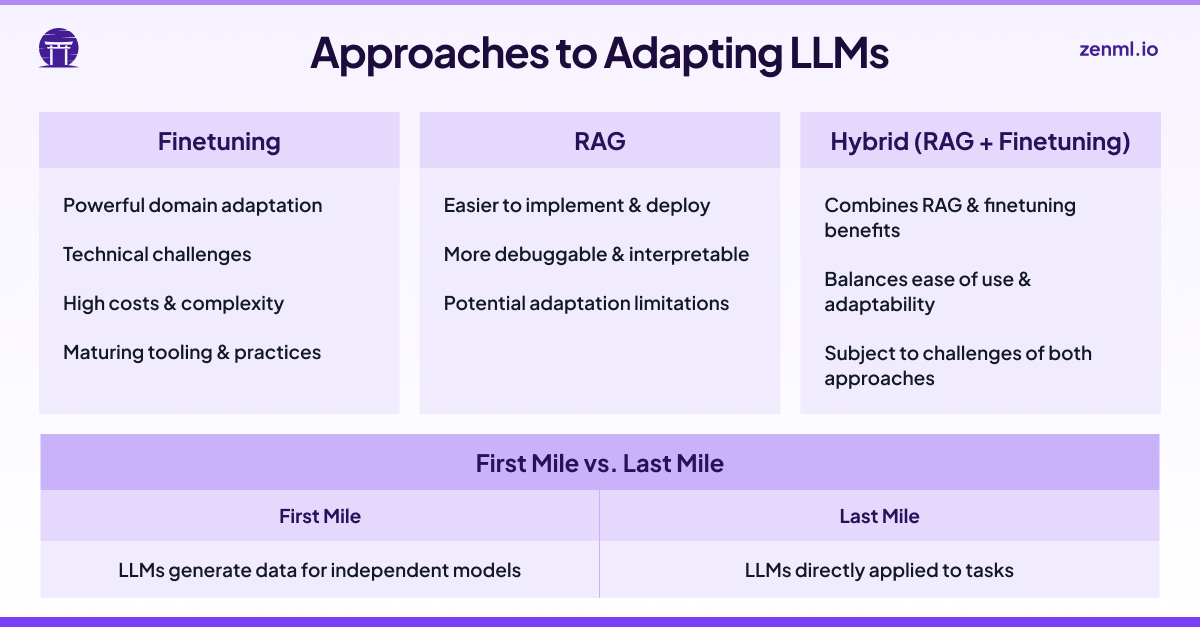

Fine-Tuning Large Language Models: Deploying and Serving

Fine-tuning is a pivotal phase in the development of large language models (LLMs). After a model undergoes the pre-training stage, where it learns a wide range of language patterns and ...

We systematically review parameter-efficient fine-tuning techniques that lower training and deployment costs, domain and cross-lingual adaptation methods for both encoder and decoder models, and model specialization strategies.

Enterprise LLM deployment now achieves viable ROI through parameter-efficient fine-tuning and hardware-aware optimizations, yet fundamental challenges around context limitations and temporal drift remain.
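The text above does not name a specific parameter-efficient technique, so the sketch below uses low-rank adaptation (LoRA) as one well-known example. It freezes a weight matrix `W` and trains only two small matrices `A` and `B`, so the effective weight becomes `W + (alpha / r) * B @ A`. The layer sizes, rank, and helper names are hypothetical, and pure-Python lists are used only to keep the example dependency-free.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha):
    """Compute W x + (alpha / r) * B (A x), where r is the LoRA rank.

    W: d_out x d_in frozen weights; A: r x d_in; B: d_out x r (trainable).
    """
    rank = len(A)
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


def full_params(d_in: int, d_out: int) -> int:
    """Parameters updated when fine-tuning the whole matrix."""
    return d_in * d_out


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters updated when training only A and B."""
    return rank * (d_in + d_out)


# Hypothetical 1024 x 1024 layer adapted at rank 8.
full = full_params(1024, 1024)
lora = lora_trainable_params(1024, 1024, 8)
print(f"full: {full}, LoRA: {lora}, ratio: {lora / full:.4%}")
```

With `B` initialized to zeros, as is usual for LoRA, the adapted layer starts out identical to the frozen one. In this hypothetical layer, LoRA trains 16,384 parameters instead of 1,048,576, which is where the lower training and deployment costs come from.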

