Figure 10 from DDP: Diffusion Model for Dense Visual Prediction

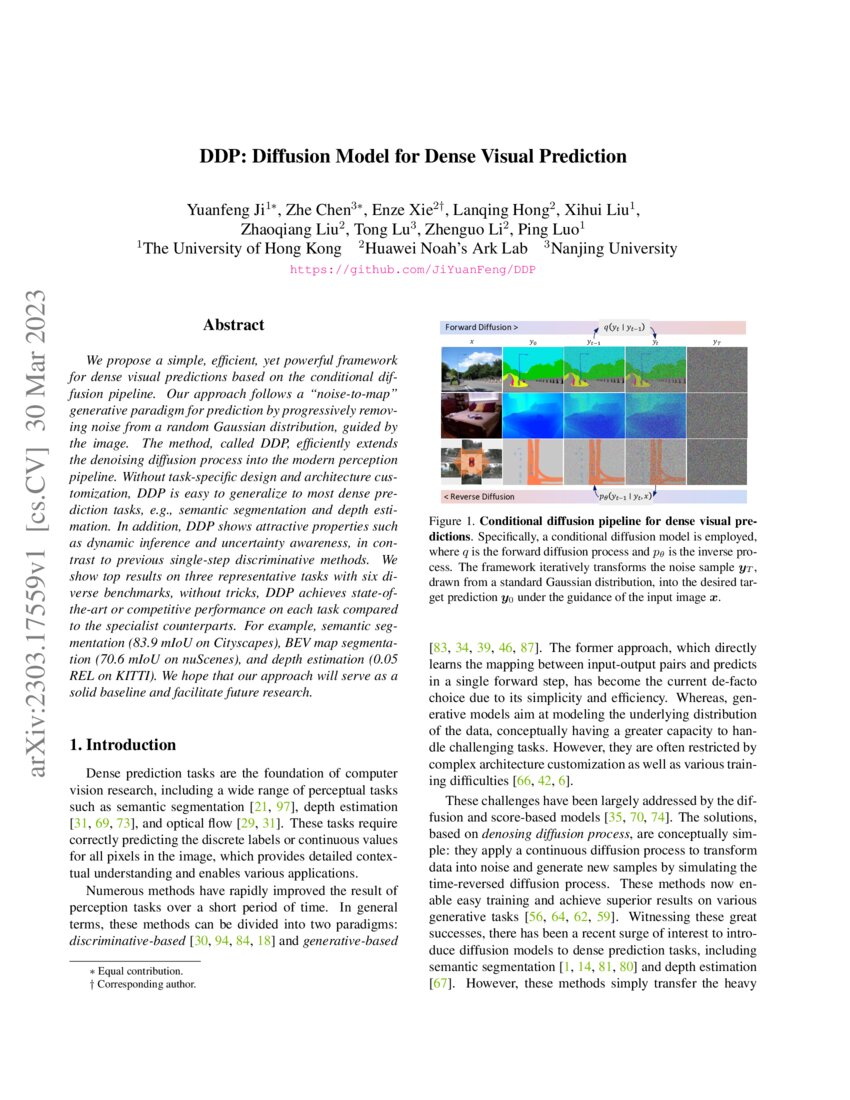

DDP: Diffusion Model for Dense Visual Prediction (DeepAI)

We propose a simple, efficient, yet powerful framework for dense visual prediction based on the conditional diffusion pipeline. Our approach follows a "noise-to-map" generative paradigm: starting from a random Gaussian distribution, it produces the prediction map by progressively removing noise, guided by the input image.
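As a rough illustration of the "noise-to-map" idea, here is a minimal sketch of a generic deterministic DDIM-style sampler (eta = 0) that turns Gaussian noise into a map over several denoising steps. Several details are assumptions and do not come from the text above: the cosine noise schedule, the choice of a denoiser that predicts the clean map x0 directly, and `toy_denoiser`, a hypothetical stand-in for the image-conditioned decoder that simply squashes precomputed image features. This is not DDP's actual implementation.

```python
import numpy as np


def cosine_alpha_bar(t: float, s: float = 0.008) -> float:
    """Cumulative signal level alpha_bar(t) of a cosine schedule, t in [0, 1]."""
    f = np.cos((t + s) / (1 + s) * np.pi / 2) ** 2
    f0 = np.cos(s / (1 + s) * np.pi / 2) ** 2
    return float(f / f0)


def toy_denoiser(x_t: np.ndarray, image_feat: np.ndarray, t: float) -> np.ndarray:
    """HYPOTHETICAL stand-in for an image-conditioned map decoder.

    A real decoder would use x_t and t; this toy ignores them and returns
    squashed image features as its estimate of the clean map x0.
    """
    return np.tanh(image_feat)


def sample_map(image_feat, denoiser, steps=10, seed=0):
    """Deterministic DDIM-style sampling (eta = 0) from noise to a map."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal(image_feat.shape)   # x_T: pure Gaussian noise
    times = np.linspace(1.0, 0.0, steps + 1)     # t = 1 (noise) ... 0 (clean)
    for t_now, t_next in zip(times[:-1], times[1:]):
        a_now, a_next = cosine_alpha_bar(t_now), cosine_alpha_bar(t_next)
        x0_hat = denoiser(x, image_feat, t_now)  # predicted clean map
        # noise implied by the current sample and the x0 estimate
        eps_hat = (x - np.sqrt(a_now) * x0_hat) / np.sqrt(1.0 - a_now)
        # step to the less noisy level t_next, reusing the same noise estimate
        x = np.sqrt(a_next) * x0_hat + np.sqrt(1.0 - a_next) * eps_hat
    return x


# Usage: per-class scores of shape (num_classes, H, W) -> label map (H, W)
feat = np.random.default_rng(1).standard_normal((3, 4, 4))
pred = sample_map(feat, toy_denoiser, steps=10)
labels = pred.argmax(axis=0)
```

Because the schedule reaches alpha_bar = 1 at t = 0, the last step returns the decoder's final x0 estimate; with this toy denoiser that is exactly `tanh(feat)`. A trained, image-guided decoder would instead refine its estimate at every step as the noise level drops.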

Figure 10: Controlling Stable Diffusion with a semantic map. The UniFormer-UperNet and DDP segmentation models are used to predict segmentation maps as the condition input.
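The caption describes a two-stage pipeline: a segmentation model predicts a map, and that map conditions Stable Diffusion. A minimal sketch of the glue step follows, assuming a ControlNet-style model that takes a colour-coded segmentation image as its condition. The function name `logits_to_condition_image` and the random palette are hypothetical; the diffusion model itself is not shown.

```python
import numpy as np


def logits_to_condition_image(logits: np.ndarray, palette: np.ndarray) -> np.ndarray:
    """Convert per-pixel class scores (C, H, W) into an RGB condition image (H, W, 3)."""
    labels = logits.argmax(axis=0)   # (H, W) integer label map from the segmentation model
    return palette[labels]           # look up one RGB colour per pixel -> (H, W, 3)


# HYPOTHETICAL palette: one fixed uint8 RGB colour per class. A real pipeline must
# use the colour coding its conditioned diffusion model was trained on.
num_classes = 4
palette = np.random.default_rng(0).integers(0, 256, size=(num_classes, 3)).astype(np.uint8)

scores = np.random.default_rng(1).standard_normal((num_classes, 8, 8))
condition = logits_to_condition_image(scores, palette)  # shape (8, 8, 3), dtype uint8
```

Swapping the segmentation model (UniFormer-UperNet or DDP) only changes `scores`; the conversion step and the downstream diffusion model stay the same.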

GitHub: JiYuanFeng/DDP
