Feature Request Add Blip2 Model To Preprocess Images Issue

Blip2 Qformer Is Not In The Pipelines Registry Group Image Text

This Python code defines a class, blip2, that generates captions for images using a pre-trained model. The code uses the PyTorch library and relies on a separate module, lavis.models. BLIP-2 can be used for conditional text generation given an image and an optional text prompt. At inference time, the generate method is recommended. Blip2Processor prepares images for the model and decodes the predicted token IDs back to text.
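The processor, generate, decode flow described above could look roughly like the following sketch. It assumes the Hugging Face Transformers classes Blip2Processor and Blip2ForConditionalGeneration, and the Salesforce/blip2-opt-2.7b checkpoint. The helper names build_prompt and generate_text and the "Question: ... Answer:" prompt layout are illustrative choices, not something the page specifies.

```python
from typing import Optional


def build_prompt(question: Optional[str] = None) -> Optional[str]:
    """Return a question-style text prompt, or None for plain captioning.

    The "Question: ... Answer:" layout is an assumption for illustration.
    """
    if question is None or not question.strip():
        return None
    return f"Question: {question.strip()} Answer:"


def generate_text(
    image_path: str,
    question: Optional[str] = None,
    checkpoint: str = "Salesforce/blip2-opt-2.7b",  # assumed checkpoint name
    max_new_tokens: int = 30,
) -> str:
    """Caption an image, or answer a question about it, with BLIP-2."""
    # Heavy imports are deferred so that build_prompt works without them.
    import torch
    from PIL import Image
    from transformers import Blip2ForConditionalGeneration, Blip2Processor

    # Use half precision on GPU, full precision on CPU.
    device = "cuda" if torch.cuda.is_available() else "cpu"
    dtype = torch.float16 if device == "cuda" else torch.float32

    processor = Blip2Processor.from_pretrained(checkpoint)
    model = Blip2ForConditionalGeneration.from_pretrained(
        checkpoint, torch_dtype=dtype
    )
    model.to(device)
    model.eval()

    # The processor prepares the image and the optional text prompt.
    image = Image.open(image_path).convert("RGB")
    inputs = processor(
        images=image, text=build_prompt(question), return_tensors="pt"
    ).to(device, dtype)

    # generate is the recommended entry point at inference time.
    with torch.no_grad():
        generated_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # The processor also decodes the predicted token IDs back to text.
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0].strip()
```

Calling generate_text("photo.jpg") would request a plain caption, while generate_text("photo.jpg", "how many cats are there?") passes a text prompt alongside the image. The first call downloads the checkpoint, which is several gigabytes.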

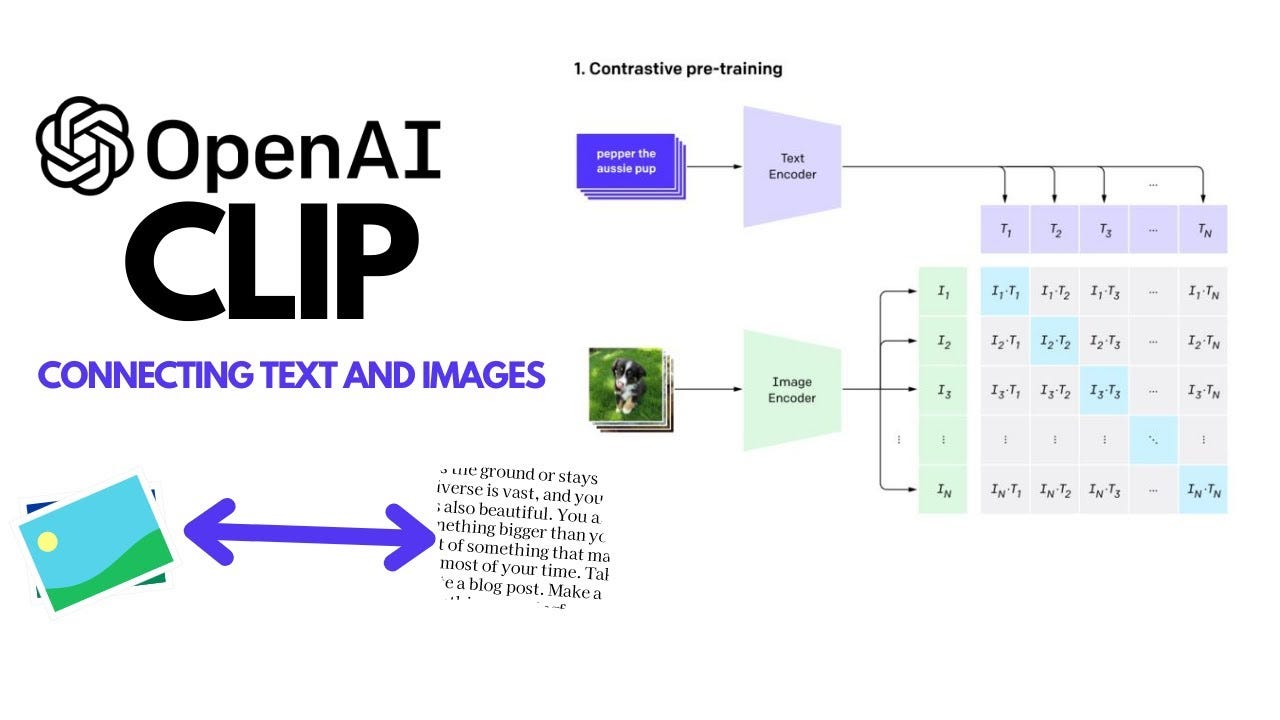

Newih Blip2 Finetuning Model Example Hugging Face

This paper proposes BLIP-2, a generic and efficient pre-training strategy that bootstraps vision-language pre-training from off-the-shelf frozen pre-trained image encoders and frozen large language models. By means of LLMs and ViT, BLIP and BLIP-2 obtain strong results on vision-language tasks such as image captioning, visual question answering, and image-text retrieval. This document covers the implementation of image captioning using Salesforce's BLIP-2 (Bootstrapping Language-Image Pre-training) model through Hugging Face Transformers. BLIP (Bootstrapping Language-Image Pre-training) is a multimodal model from Salesforce, available through Hugging Face, designed to merge natural language processing (NLP) and computer vision (CV).

Sezenkarakus Image Blip2 Description Model V1 Hugging Face

Large RAM is required to load the larger models. Running on a GPU can speed up inference.

print('running in colab.')  # we associate a model with its preprocessors to make it easier for

Requests with the same image prompt table input tokens will reuse the KV cache, which helps reduce latency. The specific performance improvement depends on the length of the reused portion. You can set max num images to the maximum number of images per request.

This study aims to bridge this gap by analyzing and reconstructing images generated by Midjourney using advanced AI models, specifically BLIP-2 and CLIP, to capture and reproduce their key features.
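On the note above that larger models need a lot of RAM, a rough back-of-the-envelope estimate is parameter count times bytes per parameter. The sketch below only accounts for the weights, ignoring activations and framework overhead. The 3-billion parameter count is a round illustrative figure, not a measured size for any particular BLIP-2 checkpoint, and the function name is hypothetical.

```python
def estimate_weight_memory_gb(num_params: int, bytes_per_param: int) -> float:
    """Approximate memory needed to hold model weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Illustrative parameter count (an assumption, not a published figure).
ILLUSTRATIVE_PARAMS = 3_000_000_000

for label, nbytes in (("float32", 4), ("float16", 2), ("int8", 1)):
    gb = estimate_weight_memory_gb(ILLUSTRATIVE_PARAMS, nbytes)
    print(f"{label}: about {gb:.1f} GB for weights alone")
```

Halving the bytes per parameter halves the weight footprint, which is why half precision is a common choice when running on a GPU.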
