Computer Vision Study Group Session on BLIP-2

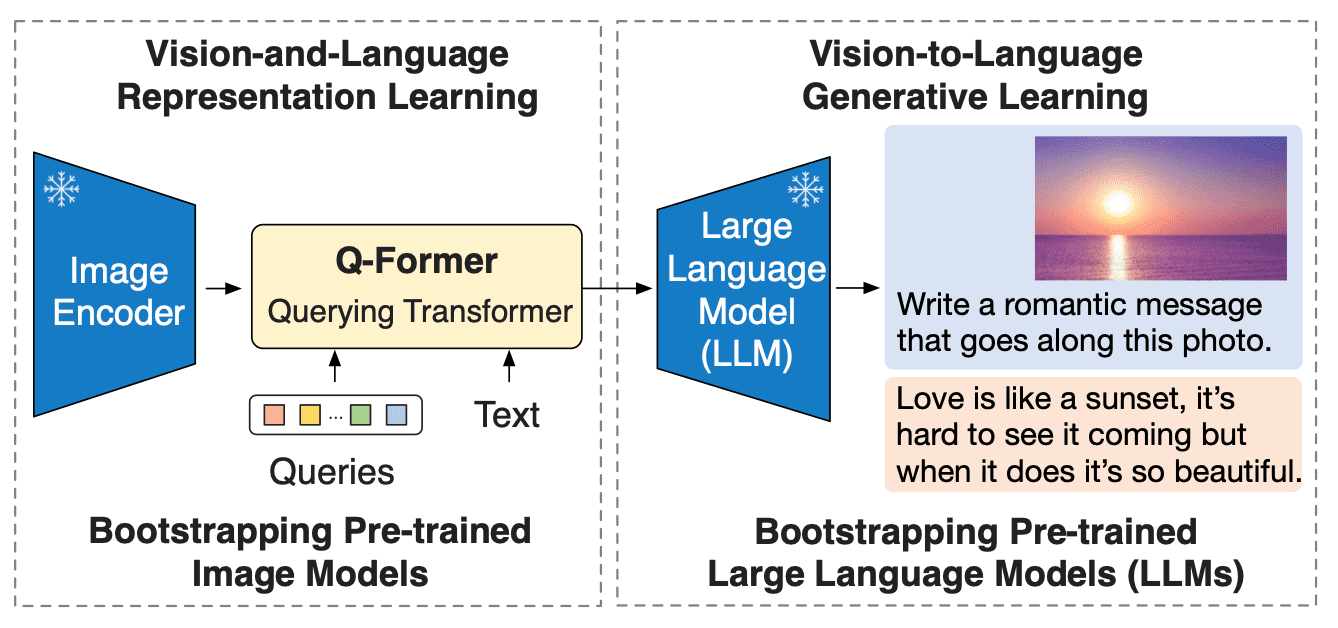

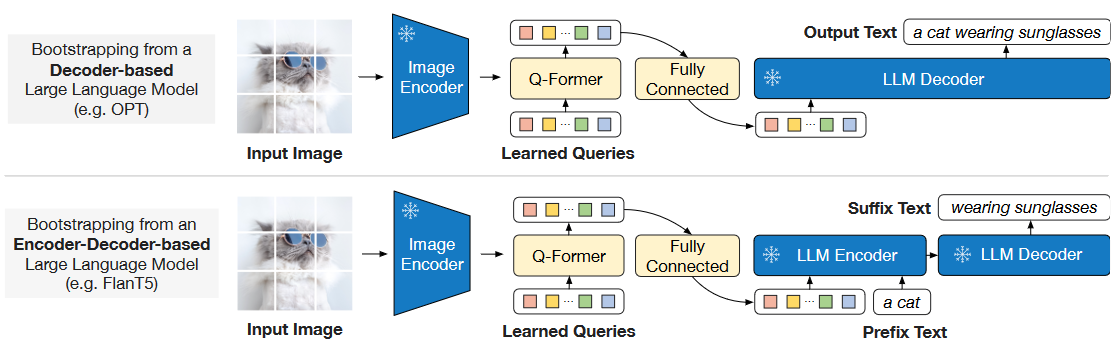

In this session of the Computer Vision Study Group, Johannes walks us through the paper "BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models". The paper proposes BLIP-2, a generic and efficient pre-training strategy that bootstraps vision-language pre-training from off-the-shelf frozen pre-trained image encoders and frozen large language models.

A follow-up paper proposes a method for monocular depth estimation using BLIP-2. Its authors note that applying BLIP-2 to more complex quantized target tasks, such as monocular depth estimation, presents challenges. Their approach draws inspiration from DepthCLIP's use of language-guided models to comprehend depth information and leverages the Q-Former module for modality fusion. Separately, a notebook demonstrates how to create a labeled dataset using BLIP-2 and push it to the Hugging Face Hub.
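The dataset-labeling workflow mentioned above can be sketched roughly as follows. This is a minimal illustration, not the notebook's actual code: `build_caption_records`, `push_records`, and the `caption_fn` callback are hypothetical names introduced here, and the column names `image` and `text` are an assumption. Only `Dataset.from_dict`, `cast_column`, `Image`, and `push_to_hub` are taken from the `datasets` library.

```python
from typing import Callable, Dict, Iterable, List


def build_caption_records(
    image_paths: Iterable[str],
    caption_fn: Callable[[str], str],
) -> Dict[str, List[str]]:
    """Pair each image path with the caption returned by `caption_fn`.

    `caption_fn` is a hypothetical callback; in practice it would wrap a
    BLIP-2 captioning call that opens the image and generates text.
    """
    records: Dict[str, List[str]] = {"image": [], "text": []}
    for path in image_paths:
        records["image"].append(str(path))
        records["text"].append(caption_fn(path).strip())
    return records


def push_records(records: Dict[str, List[str]], repo_id: str) -> None:
    """Upload the records to the Hugging Face Hub as an image/caption dataset.

    Assumes the `datasets` package is installed and that you are logged in
    to the Hub. `repo_id` is whatever repository name you choose.
    """
    # Imported lazily so build_caption_records works without `datasets`.
    from datasets import Dataset, Image

    # Casting the path column to Image() makes the Hub store real images.
    dataset = Dataset.from_dict(records).cast_column("image", Image())
    dataset.push_to_hub(repo_id)
```

Keeping the captioner behind a callback means the pairing logic can be checked with a stub before any model is downloaded.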

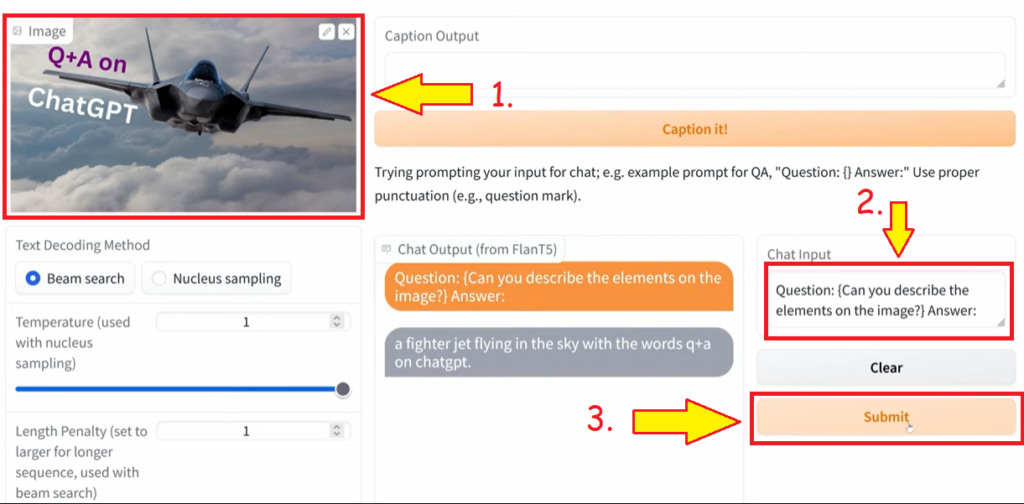

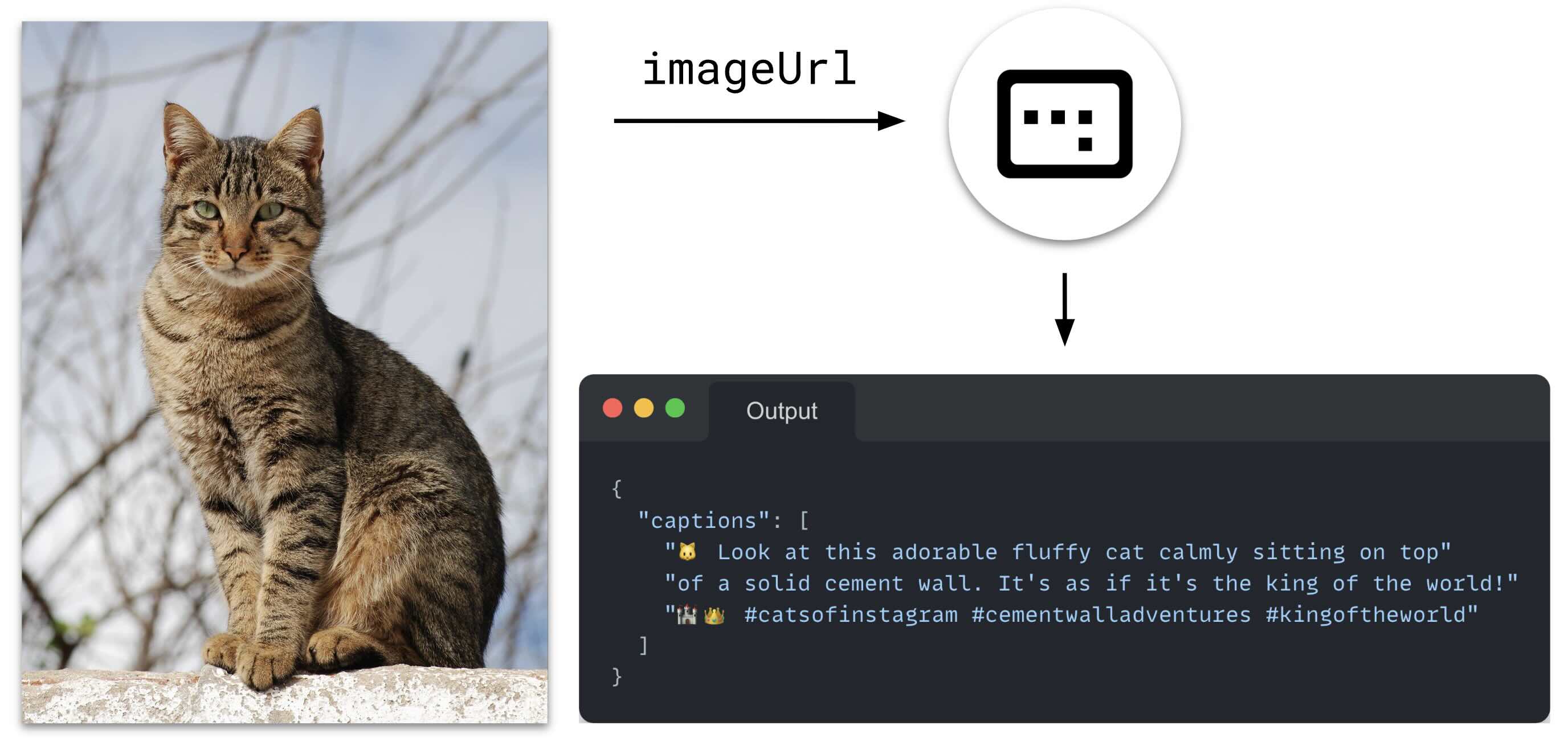

BLIP-2 can also be applied to visual question answering, and more complex queries can be explored interactively in a Gradio app. Demo notebooks for BLIP-2 covering image captioning, visual question answering (VQA), and chat-like conversations are also available. Architecturally, BLIP-2 leverages frozen pre-trained image encoders and large language models (LLMs) by training a lightweight, 12-layer Transformer encoder in between them, achieving state-of-the-art performance on various vision-language tasks.
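As a rough sketch of visual question answering and chat-like prompting with BLIP-2, the snippet below uses the Hugging Face `transformers` classes `Blip2Processor` and `Blip2ForConditionalGeneration`. The helper names (`build_vqa_prompt`, `load_blip2`, `answer_question`) are hypothetical, the `Salesforce/blip2-opt-2.7b` checkpoint is just one possible choice, and the "Question: ... Answer:" template is a commonly used prompt format rather than a requirement of the model.

```python
from typing import List, Sequence, Tuple


def build_vqa_prompt(question: str, history: Sequence[Tuple[str, str]] = ()) -> str:
    """Format a question, plus optional earlier (question, answer) turns."""
    turns: List[str] = [f"Question: {q} Answer: {a}." for q, a in history]
    turns.append(f"Question: {question} Answer:")
    return " ".join(turns)


def load_blip2(checkpoint: str = "Salesforce/blip2-opt-2.7b"):
    """Load a processor and model; the checkpoint name is an assumption."""
    # Imported lazily so the prompt helper works without these packages.
    import torch
    from transformers import Blip2ForConditionalGeneration, Blip2Processor

    use_cuda = torch.cuda.is_available()
    processor = Blip2Processor.from_pretrained(checkpoint)
    model = Blip2ForConditionalGeneration.from_pretrained(
        checkpoint,
        torch_dtype=torch.float16 if use_cuda else torch.float32,
    )
    model.to("cuda" if use_cuda else "cpu")
    return processor, model


def answer_question(image, question, processor, model, history=(), max_new_tokens=20):
    """Generate an answer about `image` (a PIL image) for `question`."""
    prompt = build_vqa_prompt(question, history)
    inputs = processor(images=image, text=prompt, return_tensors="pt")
    # Match the model's device and floating-point precision.
    inputs = inputs.to(model.device, model.dtype)
    generated_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0].strip()
```

A call would look like `processor, model = load_blip2()` followed by `answer_question(image, "how many cats are there?", processor, model)`. Passing earlier turns through `history` gives the chat-like behaviour, since the model sees the previous questions and answers as part of the prompt.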

Zero-Shot Image-to-Text Generation with BLIP-2

Using the BLIP-2 Model for Image Captioning
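For image captioning, a minimal sketch might look like the following. It assumes `processor` and `model` are a `Blip2Processor` and a `Blip2ForConditionalGeneration` from Hugging Face `transformers`, already loaded from a BLIP-2 checkpoint. The function name `caption_images` and the default of 30 new tokens are illustrative choices, not values taken from the source.

```python
from typing import List, Sequence


def caption_images(images: Sequence, processor, model, max_new_tokens: int = 30) -> List[str]:
    """Generate one caption per image in a single batched call.

    No text prompt is passed, so the language model describes each
    image freely instead of answering a question.
    """
    inputs = processor(images=list(images), return_tensors="pt")
    # Match the model's device and floating-point precision.
    inputs = inputs.to(model.device, model.dtype)
    generated_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    captions = processor.batch_decode(generated_ids, skip_special_tokens=True)
    return [caption.strip() for caption in captions]
```

Batching several images per call usually makes better use of a GPU than captioning them one at a time, at the cost of more memory per call.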

BLIP-2: MMPretrain 1.2.0 Documentation
