Fairness In Natural Language Processing

Bridging Fairness And Environmental Sustainability In Natural Language

We argue that achieving fairness in NLP requires not only technical interventions but also socio-technical approaches that integrate community participation, transparency, and governance. In this survey, we analyze the origins of biases, the definitions of fairness, and how different subfields of NLP mitigate bias. We finally discuss how future studies can work towards eradicating pernicious biases from NLP algorithms.

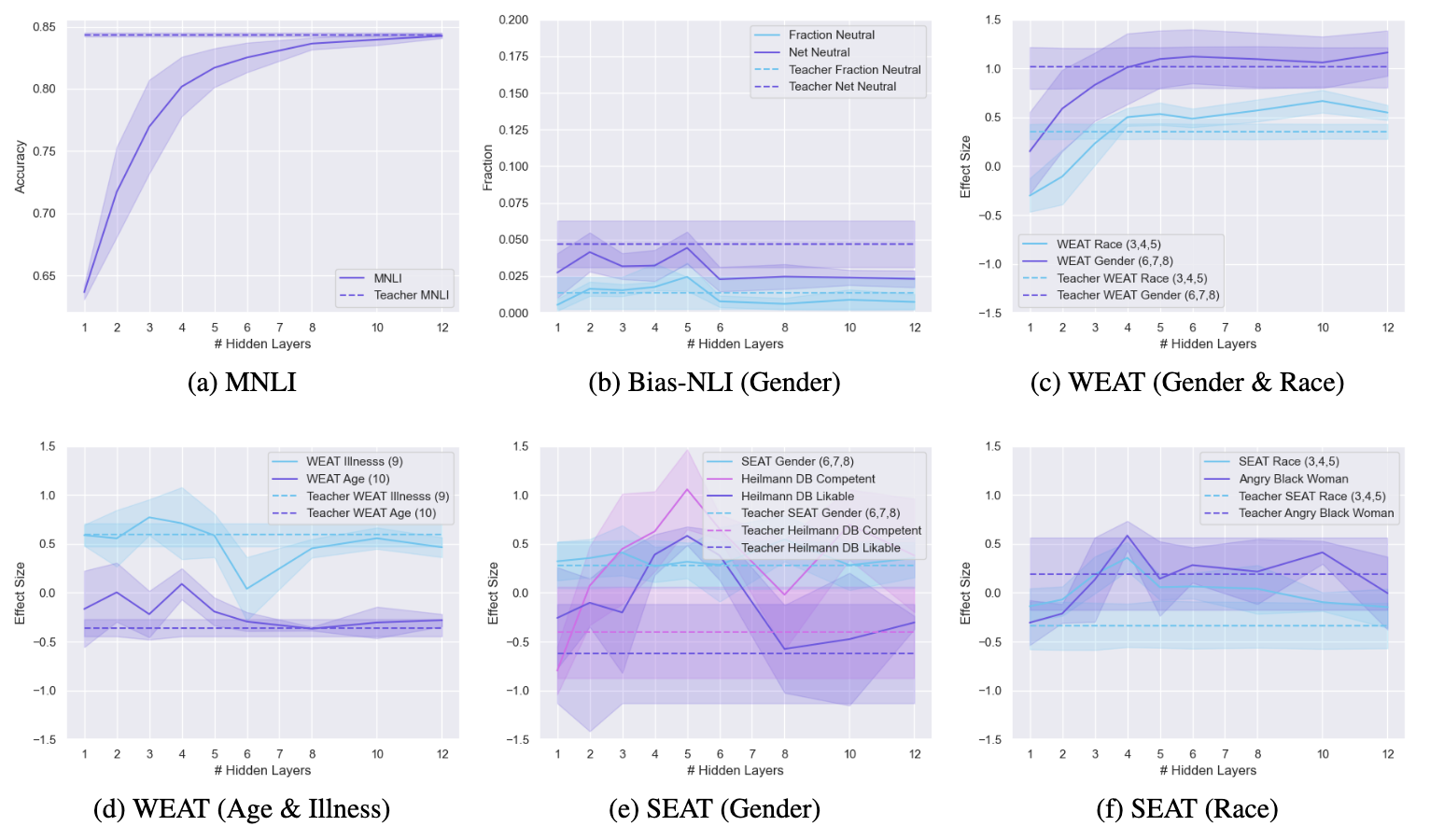

Fairlangproc Package Consolidates Fairness Tools For Natural Language

Fairness in natural language processing (NLP) pertains to the just and equal treatment of all individuals and groups without discrimination. This means that an NLP model should not amplify or perpetuate existing biases, stereotypes, or assumptions about certain groups. In what follows, I first provide a brief background on bias and fairness in NLP applications and explain the root causes and potential consequences of biases for models' predictions. We first consolidate, formalize, and expand notions of social bias and fairness in natural language processing, defining distinct facets of harm and introducing several desiderata to operationalize fairness for LLMs. This section presents the results of applying fairness libraries to computer vision (CV) and natural language processing (NLP) models. The primary objective of this analysis is to improve fairness metrics while maintaining or enhancing performance metrics.
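
The goal of improving fairness metrics while preserving performance can be made concrete by computing both kinds of metric on the same predictions. The sketch below is a minimal illustration in plain Python, assuming binary predictions and a single group attribute, and it uses two widely used group-fairness definitions (demographic parity difference and equal opportunity difference). The function names and toy data are hypothetical and are not the API of Fairlangproc or any other fairness library.

```python
from collections import defaultdict


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the gold labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def rate_by_group(values, groups):
    """Mean of binary `values` within each group, e.g. the positive-prediction rate."""
    totals = defaultdict(lambda: [0, 0])  # group -> [sum of values, count]
    for v, g in zip(values, groups):
        totals[g][0] += v
        totals[g][1] += 1
    return {g: s / n for g, (s, n) in totals.items()}


def demographic_parity_difference(y_pred, groups):
    """Largest gap in positive-prediction rate between groups (0 means parity)."""
    rates = rate_by_group(y_pred, groups)
    return max(rates.values()) - min(rates.values())


def equal_opportunity_difference(y_true, y_pred, groups):
    """Largest gap in true-positive rate (recall on gold positives) between groups."""
    pos = [(p, g) for t, p, g in zip(y_true, y_pred, groups) if t == 1]
    rates = rate_by_group([p for p, _ in pos], [g for _, g in pos])
    return max(rates.values()) - min(rates.values())


# Toy binary predictions for two hypothetical groups "a" and "b".
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

print("accuracy:", accuracy(y_true, y_pred))
print("demographic parity diff:", demographic_parity_difference(y_pred, groups))
print("equal opportunity diff:", round(equal_opportunity_difference(y_true, y_pred, groups), 3))
```

On this toy data the accuracy is 0.75, group "a" receives positive predictions at a rate of 0.75 versus 0.25 for group "b" (a demographic parity difference of 0.5), and the equal opportunity difference is about 0.333. A mitigation step would aim to shrink the two gaps without lowering accuracy; which gap matters more depends on which definition of fairness fits the application.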

Challenges Of Natural Language Processing

In this paper, we provide a comprehensive review of bias in NLP, from its sources and societal impacts to the current approaches to mitigating it. We look at recent studies of data and algorithmic biases that persist and have a disproportionate impact on marginalized communities. This survey also organizes commonly used NLP fairness metrics into categories, presenting their advantages, disadvantages, and correlations with the general fairness metrics common in machine learning. Without such scrutiny, machine learning algorithms risk encouraging unfair and discriminatory decision making, and they also raise serious privacy concerns.
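
One family of NLP-specific fairness metrics is counterfactual or template-based: sentences that differ only in an identity term should receive similar model outputs. The following is a minimal sketch in Python, assuming the model is exposed as a function from a sentence to a scalar score. The lexicon-based scorer, the templates, and the identity terms are hypothetical stand-ins chosen only to produce a visible gap; they are not a real model or benchmark.

```python
from statistics import mean
from typing import Callable, Dict, List, Tuple

# Hypothetical lexicon standing in for a trained sentiment model. It assigns a
# negative weight to the identity term "old" so that a bias becomes visible.
TOY_LEXICON = {"great": 1.0, "awful": -1.0, "old": -0.5}


def toy_sentiment(sentence: str) -> float:
    """Sum the lexicon weights of the words in a sentence (unknown words score 0)."""
    words = sentence.lower().replace(".", "").split()
    return sum(TOY_LEXICON.get(w, 0.0) for w in words)


def counterfactual_gap(
    score: Callable[[str], float], templates: List[str], terms: List[str]
) -> Tuple[float, Dict[str, float]]:
    """Fill every template with every identity term, average the score per term,
    and return (max mean - min mean, per-term means). A gap of 0 means the
    counterfactual sentences are scored identically on average."""
    means = {
        term: mean(score(tpl.format(term=term)) for tpl in templates)
        for term in terms
    }
    return max(means.values()) - min(means.values()), means


templates = ["The {term} person had a great day.", "My {term} neighbour is awful."]
gap, per_term = counterfactual_gap(toy_sentiment, templates, ["young", "old"])
print(per_term)
print(gap)
```

Because the toy lexicon penalizes "old", sentences mentioning "old" average -0.5 versus 0.0 for "young", giving a gap of 0.5. With a real classifier, `score` would wrap its inference call, and the choice of templates and identity terms largely determines which biases such a metric can detect.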
