EZ-MMLA Toolkit Tools

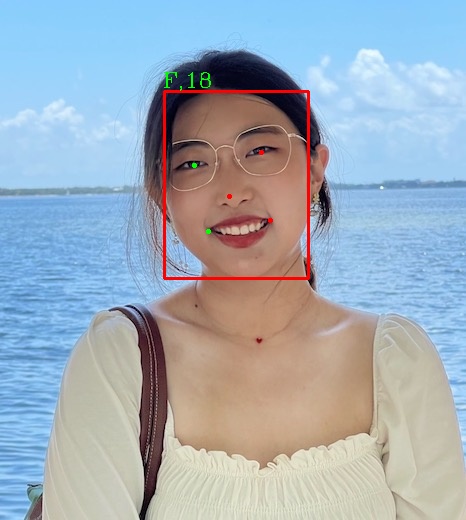

The EZ-MMLA toolkit uses machine learning models written in JavaScript to detect data from video and audio streams, and these models run entirely within the web browser. A significant advantage of such models is that the computation required to train and run them is offloaded to the user's device.
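To make the idea of in-browser data collection concrete, here is a minimal JavaScript sketch of how per-frame model outputs might be gathered into timestamped rows and exported as CSV. Everything in it is an assumption for illustration: `collectSamples`, `toCsv`, the frame shape, and the stand-in `meanBrightness` detector are hypothetical names and do not come from the EZ-MMLA toolkit, whose actual models and export format are not described here.

```javascript
// Hypothetical sketch: none of these names come from the EZ-MMLA toolkit itself.

// Run a detector over a sequence of frames and collect timestamped rows.
// `detect` stands in for an in-browser model (for example a pose or emotion estimator).
function collectSamples(frames, detect) {
  const rows = [];
  for (const frame of frames) {
    const result = detect(frame.pixels);
    rows.push({ timestampMs: frame.timestampMs, ...result });
  }
  return rows;
}

// Serialize rows to CSV, using the keys of the first row as the header.
function toCsv(rows) {
  if (rows.length === 0) return "";
  const header = Object.keys(rows[0]);
  // Quote any field containing a comma, a double quote, or a newline.
  const escape = (value) => {
    const text = String(value);
    return /[",\n]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
  };
  const lines = rows.map((row) => header.map((key) => escape(row[key])).join(","));
  return [header.join(","), ...lines].join("\n");
}

// Usage with a stand-in detector that reports the mean pixel brightness.
const frames = [
  { timestampMs: 0, pixels: [10, 20, 30] },
  { timestampMs: 33, pixels: [40, 50, 60] },
];
const meanBrightness = (pixels) => ({
  brightness: pixels.reduce((sum, p) => sum + p, 0) / pixels.length,
});
const csv = toCsv(collectSamples(frames, meanBrightness));
console.log(csv);
```

In a real browser setting the frames would come from a webcam stream and the detector would be a trained model; both are injected as plain arguments here so the sketch runs anywhere JavaScript does, with all computation staying on the user's device.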

This toolkit can run from any browser and does not require dedicated hardware or programming experience. We compare this toolkit with traditional methods and describe a case study where the EZ-MMLA toolkit was used by aspiring educational researchers in a classroom context.

This paper presents the EZ-MMLA toolkit, a website that provides easy access to the latest machine learning algorithms for collecting a variety of data streams from webcams: attention, physiological states, body posture, hand gestures, emotions, and lower-level computer vision algorithms. Its tagline is "Multimodal data collection made easy": it is a data collection website that provides educators and researchers with easy access to multimodal data streams. The Ezmmla account on GitHub has 3 repositories available.

