Exploring Transformer Backbones For Image Diffusion Models | DeepAI

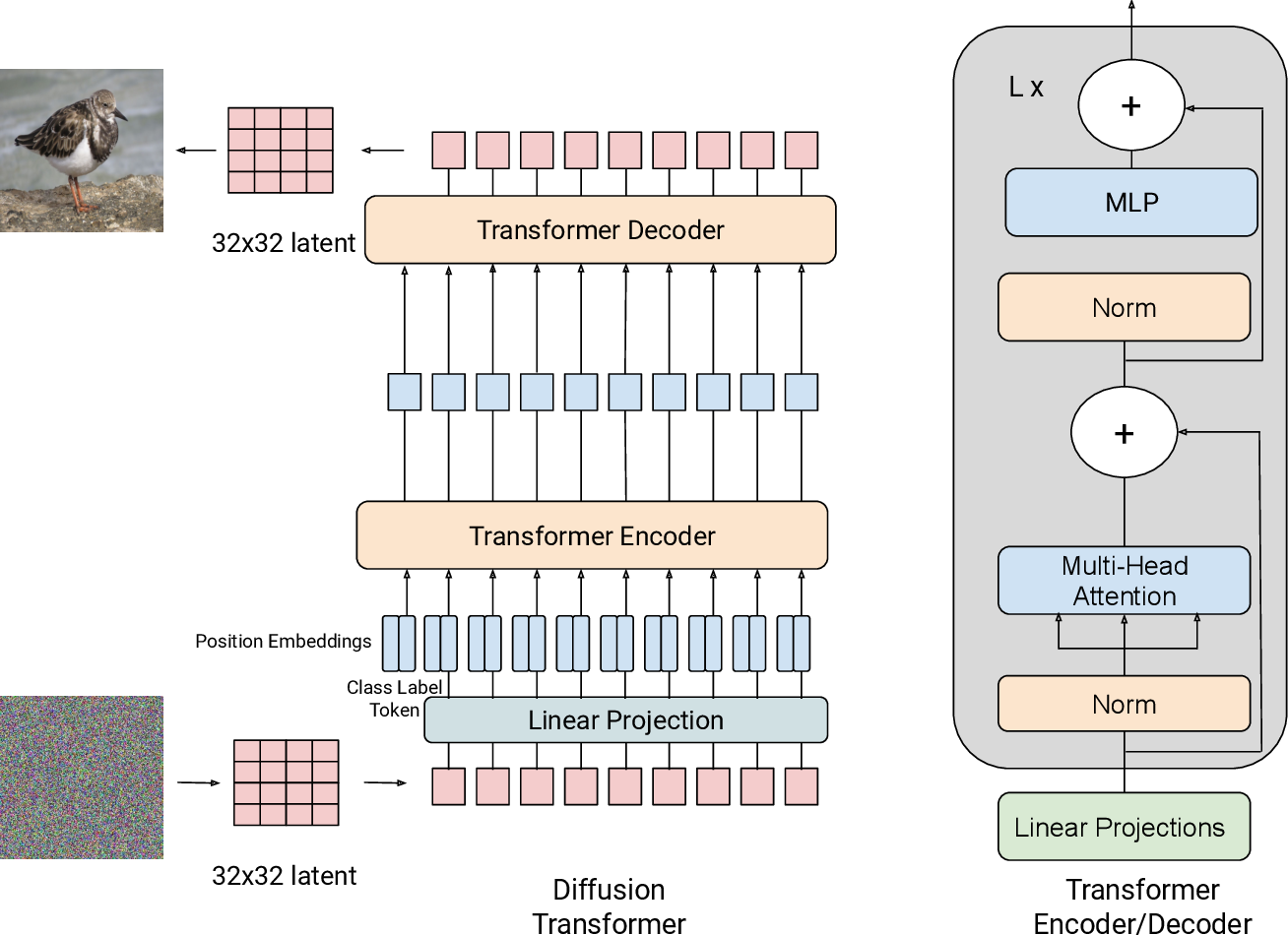

We present an end-to-end transformer-based latent diffusion model for image synthesis. On the ImageNet class-conditioned generation task, we show that a transformer-based latent diffusion model achieves an FID of 14.1, which is comparable to the FID of 13.1 achieved by a U-Net-based architecture.
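For readers unfamiliar with the metric, FID (Fréchet Inception Distance) compares Gaussian fits to Inception-network features of real and generated images, and lower is better. The standard definition is given here for context only; the notation ($\mu_r, \Sigma_r$ for the real-image feature mean and covariance, $\mu_g, \Sigma_g$ for the generated ones) is chosen for this note and does not come from the source.

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
$$

On this scale, the two scores quoted above differ by 1.0.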

Scalable Diffusion Models With Transformers | DeepAI

We train latent diffusion models of images, replacing the commonly used U-Net backbone with a transformer that operates on latent patches. We analyze the scalability of our Diffusion Transformers (DiTs) through the lens of forward-pass complexity as measured by GFLOPs.

A separate work presents Imagen, a text-to-image diffusion model with an unprecedented degree of photorealism and a deep level of language understanding, and finds that human raters prefer Imagen over other models in side-by-side comparisons, both in terms of sample quality and image-text alignment.
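To make "a transformer that operates on latent patches" concrete, here is a minimal, dependency-free sketch of the patchify step. It is an illustration under stated assumptions, not the authors' implementation: the names `patchify` and `num_tokens`, the `[channel][row][col]` layout, and the 4-channel 32x32 latent are all hypothetical. A real model would use a tensor library and follow this step with a learned linear projection.

```python
from typing import List

Latent = List[List[List[float]]]  # assumed layout: [channel][row][col]


def patchify(latent: Latent, patch_size: int) -> List[List[float]]:
    """Split a C x H x W latent into non-overlapping p x p patches.

    Returns one flat token of length p * p * C per patch, in row-major
    patch order. The learned projection to the transformer width is
    deliberately left out of this sketch.
    """
    channels = len(latent)
    height, width = len(latent[0]), len(latent[0][0])
    if height % patch_size or width % patch_size:
        raise ValueError("latent height and width must be divisible by patch_size")

    tokens = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            token = [
                latent[c][top + dy][left + dx]
                for dy in range(patch_size)
                for dx in range(patch_size)
                for c in range(channels)
            ]
            tokens.append(token)
    return tokens


def num_tokens(latent_size: int, patch_size: int) -> int:
    """Sequence length for a square latent: (latent_size / patch_size) ** 2."""
    return (latent_size // patch_size) ** 2


if __name__ == "__main__":
    # Hypothetical 4-channel 32x32 latent filled with a running index.
    latent = [
        [[float(c * 1024 + y * 32 + x) for x in range(32)] for y in range(32)]
        for c in range(4)
    ]
    tokens = patchify(latent, patch_size=2)
    print(len(tokens), len(tokens[0]))
    for p in (8, 4, 2):
        print(p, num_tokens(32, p))
```

Halving the patch size quadruples the number of tokens, so the sequence the transformer must process grows quickly as patches shrink. That is one plausible reason to track forward-pass GFLOPs when comparing model configurations.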

DreamTeacher: Pretraining Image Backbones With Deep Generative Models

We explore a new class of diffusion models based on the transformer architecture.

DiT-3D: Exploring Plain Diffusion Transformers For 3D Shape Generation

3D DiT is a neural generative architecture that combines transformer-based backbones with diffusion probabilistic models to synthesize diverse 3D data formats such as voxel grids, triplanes, and point clouds. It employs a 3D-specific tokenization scheme (volumetric patch embeddings, triplane representations, and point tokens) to capture long-range dependencies using non-local self…
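The volumetric patch idea can be illustrated with a short sketch that cuts a cubic voxel grid into non-overlapping cubes and flattens each into a token. This is an assumption-laden illustration, not the method of any specific paper: the function name `voxel_patchify`, the `[x][y][z]` layout, and the single value per voxel are all hypothetical, and the learned embedding that would follow is omitted.

```python
from typing import List

VoxelGrid = List[List[List[float]]]  # assumed layout: [x][y][z], one value per voxel


def voxel_patchify(grid: VoxelGrid, patch_size: int) -> List[List[float]]:
    """Split a cubic V x V x V grid into (V / p) ** 3 tokens of length p ** 3."""
    side = len(grid)
    if any(len(plane) != side or any(len(row) != side for row in plane) for plane in grid):
        raise ValueError("grid must be cubic")
    if side % patch_size:
        raise ValueError("grid side must be divisible by patch_size")

    starts = range(0, side, patch_size)  # corner coordinate of each patch
    offsets = range(patch_size)          # position inside a patch
    return [
        [grid[x0 + dx][y0 + dy][z0 + dz] for dx in offsets for dy in offsets for dz in offsets]
        for x0 in starts
        for y0 in starts
        for z0 in starts
    ]


if __name__ == "__main__":
    # Hypothetical 4x4x4 grid whose values encode their own coordinates.
    grid = [[[float(x * 16 + y * 4 + z) for z in range(4)] for y in range(4)] for x in range(4)]
    tokens = voxel_patchify(grid, patch_size=2)
    print(len(tokens), len(tokens[0]))
```

Because the token count scales with the cube of the grid side divided by the patch size, sequence length grows faster in 3D than in the 2D image case for the same reduction in patch size.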

Figure 3 From Exploring Transformer Backbones For Image Diffusion

J-GLOBAL, an information service managed by the Japan Science and Technology Agency (hereinafter referred to as "JST"), lists detailed information for the article "Exploring Transformer Backbones for Image Diffusion Models".
