Explainable Automated Debugging Via Large Language Model Driven

In this paper, we seek to emulate the scientific debugging process via large language models (LLMs). We believe LLMs are capable of emulating scientific debugging. By aligning the reasoning of automated debugging more closely with that of human developers, we aim to produce intelligible explanations of how a specific patch has been generated, with the hope that the explanation will lead to more efficient and accurate developer decisions.

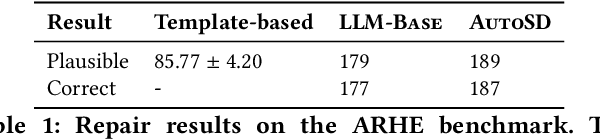

Our empirical analysis on three program repair benchmarks shows that AutoSD performs competitively with other program repair baselines, and that it can indicate when it is confident in its results. Furthermore, we perform a human study with 20 participants to evaluate AutoSD-generated explanations.

The overall goal of this research is to investigate how developers use and benefit from automated debugging tools through a set of human studies, providing initial evidence that several assumptions made by automated debugging techniques do not hold in practice.

Large language models (LLMs) have shown impressive effectiveness in various software engineering tasks, including automated program repair (APR). In this study, we take a deep dive into automated bug fixing utilizing LLMs.

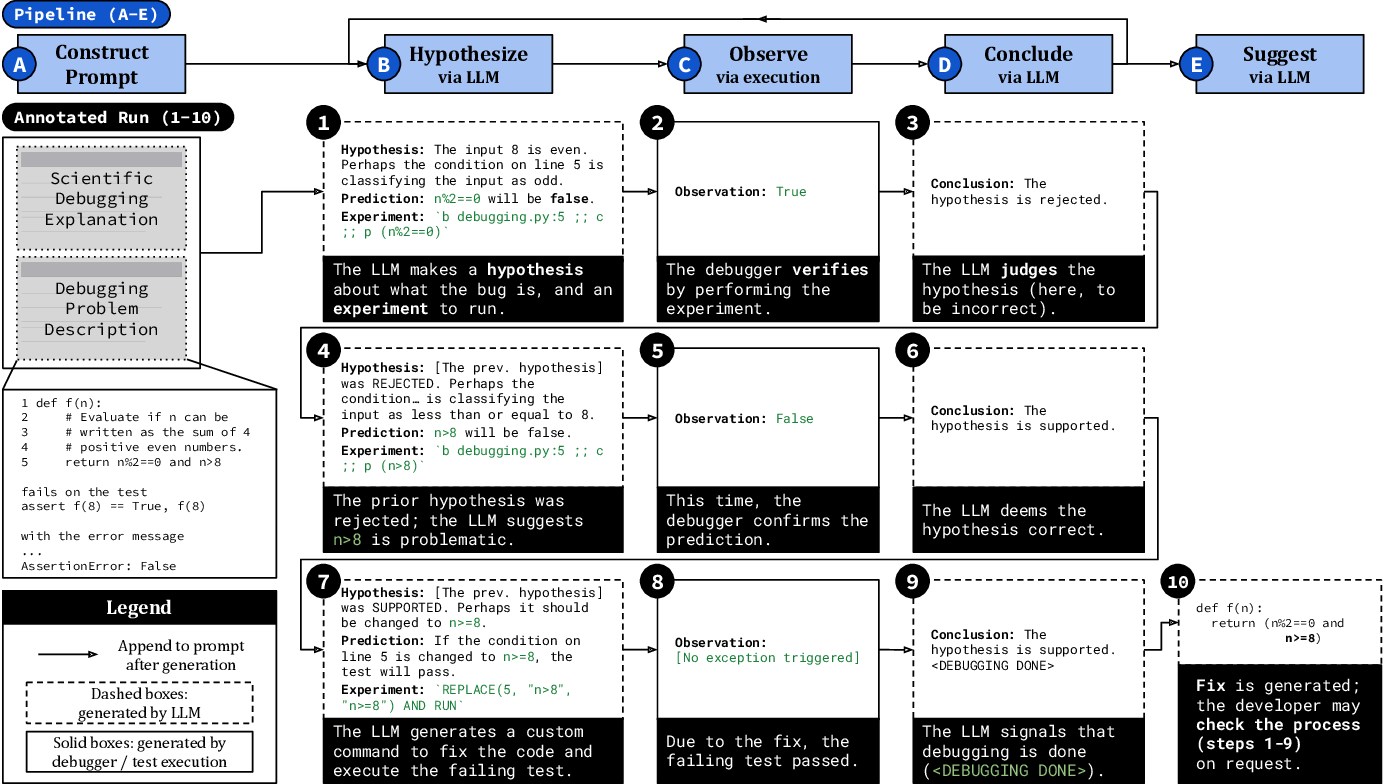

Figure 1 from Explainable Automated Debugging Via Large Language Model

TL;DR: A survey of large language models for NL2Code finds that large size, premium data, and expert tuning are key factors for success. The survey includes a comprehensive overview of existing models, benchmarks, and metrics.

Inspired by the way developers interact with code when debugging, we propose Automated Scientific Debugging (AutoSD), a technique that prompts large language models to automatically generate hypotheses, uses debuggers to interact with buggy code, and thus automatically reaches conclusions prior to patch generation.
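The hypothesize, observe, conclude cycle described above can be illustrated with a small sketch. This is not the AutoSD implementation: the names `Step`, `scientific_debugging_loop`, `scripted_propose`, `run_experiment`, and `judge` are hypothetical, the language model is replaced by a fixed script, and the debugger is replaced by evaluating a Python expression against a toy buggy function. It only shows the shape of a loop in which each hypothesis is checked by an experiment before any patch is written.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Step:
    hypothesis: str   # what is suspected to be wrong
    experiment: str   # expression used to check the hypothesis
    observation: str  # what the experiment reported
    conclusion: str   # "supported" or "rejected"


def scientific_debugging_loop(
    propose: Callable[[List[Step]], Tuple[str, str]],
    run_experiment: Callable[[str], str],
    judge: Callable[[str, str], str],
    max_steps: int = 3,
) -> List[Step]:
    """Iterate hypothesis -> experiment -> observation -> conclusion."""
    history: List[Step] = []
    for _ in range(max_steps):
        hypothesis, experiment = propose(history)
        observation = run_experiment(experiment)
        conclusion = judge(hypothesis, observation)
        history.append(Step(hypothesis, experiment, observation, conclusion))
        if conclusion == "supported":
            break  # a confirmed hypothesis is enough to move on to patching
    return history


def buggy_max(a, b):
    return a if a < b else b  # bug: the comparison is inverted


def scripted_propose(history: List[Step]) -> Tuple[str, str]:
    # Assumption: stands in for prompting a language model with the history.
    script = [
        ("The function fails when both arguments are equal.",
         "buggy_max(2, 2) != 2"),
        ("The comparison is inverted, so the smaller value is returned.",
         "buggy_max(1, 5) == 1"),
    ]
    return script[len(history)]


def run_experiment(expression: str) -> str:
    # Assumption: stands in for a debugger session on the buggy program.
    return str(eval(expression, {"__builtins__": {}}, {"buggy_max": buggy_max}))


def judge(hypothesis: str, observation: str) -> str:
    # Assumption: the experiment evaluates to True when the hypothesis holds.
    return "supported" if observation == "True" else "rejected"


history = scientific_debugging_loop(scripted_propose, run_experiment, judge)
for step in history:
    print(f"{step.conclusion}: {step.hypothesis} [{step.experiment} -> {step.observation}]")
```

The recorded `history` is what makes the process explainable in this sketch: each entry pairs a claim with the evidence that supported or rejected it, so a reader can see why a later patch targets the comparison.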


Table 1 From Explainable Automated Debugging Via Large Language Model

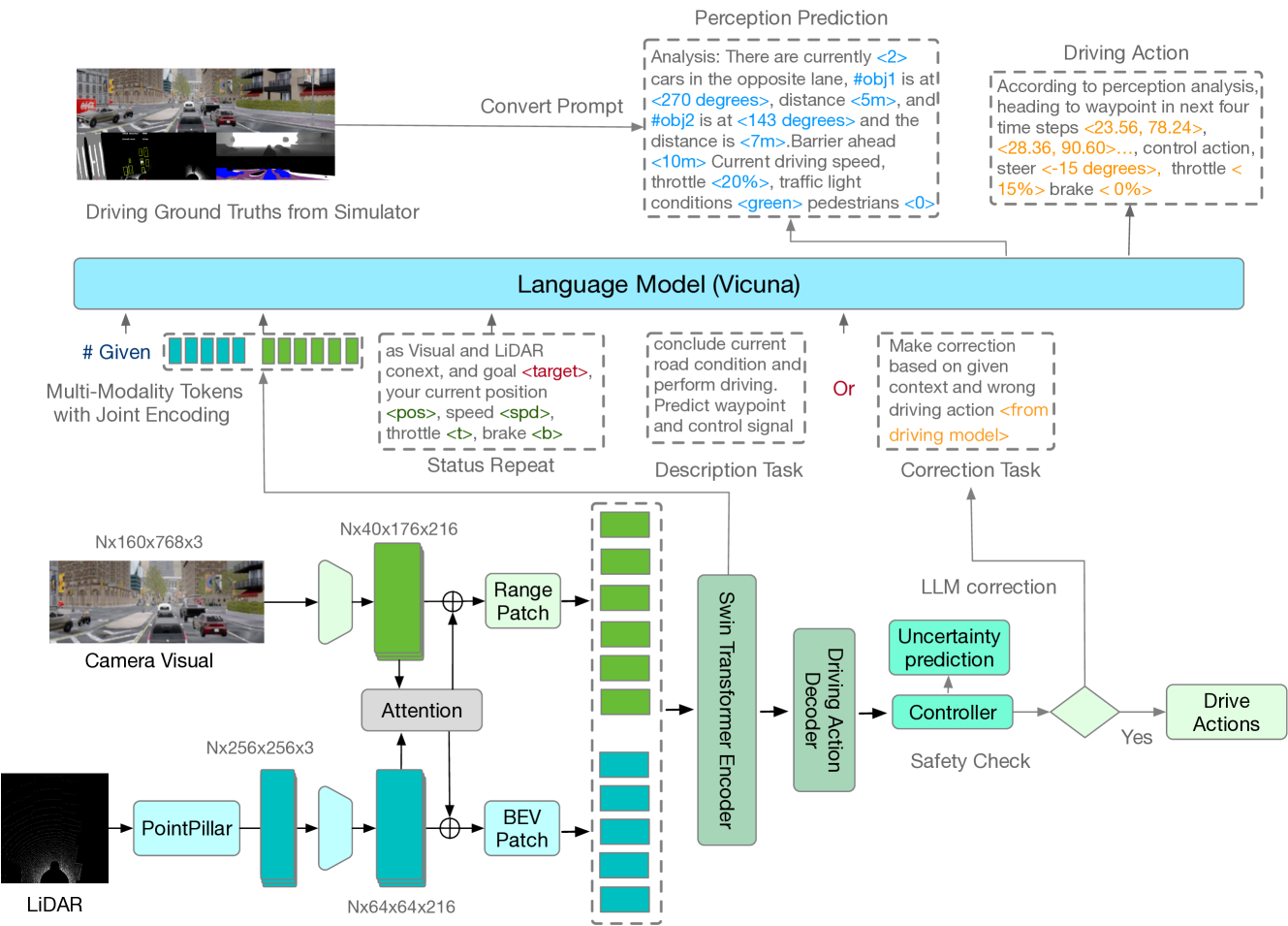

