Evaluating LLM Agents in Healthcare: A Practical Guide with the ADK

By leveraging the ADK's built-in evaluation tools and coupling them with human expert oversight, you can confidently deploy LLM agents that add real value to medical workflows without ... Learn how to generate golden datasets and run evaluations to ensure your AI agents are trustworthy.
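To make the idea of a golden dataset concrete, here is a minimal sketch of one way to store expert-reviewed test cases. The schema (`ToolCall`, `GoldenCase`, the `reviewed_by_expert` flag) and the tool name `lookup_drug_interactions` are hypothetical, chosen for illustration only; they are not the ADK's own evaluation file format.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ToolCall:
    """One expected tool invocation (hypothetical schema, not the ADK's)."""
    name: str
    args: dict = field(default_factory=dict)


@dataclass
class GoldenCase:
    """A single test case: user query, expected tool calls, reference answer."""
    query: str
    expected_tool_calls: list
    reference_response: str
    reviewed_by_expert: bool = False  # set to True once a clinician signs off


def dump_golden_dataset(cases):
    """Serialize only the expert-reviewed cases to a JSON string."""
    approved = [asdict(case) for case in cases if case.reviewed_by_expert]
    return json.dumps(approved, indent=2)


# Illustrative data only; "lookup_drug_interactions" is a made-up tool name.
GOLDEN_CASES = [
    GoldenCase(
        query="Can I take ibuprofen while on warfarin?",
        expected_tool_calls=[
            ToolCall("lookup_drug_interactions",
                     {"drug_a": "warfarin", "drug_b": "ibuprofen"})
        ],
        reference_response="Check with your clinician before combining these.",
        reviewed_by_expert=True,
    ),
    GoldenCase(
        query="What is a normal resting heart rate?",
        expected_tool_calls=[],
        reference_response="Draft answer awaiting review.",
    ),
]

if __name__ == "__main__":
    print(dump_golden_dataset(GOLDEN_CASES))
```

Filtering on the review flag keeps unreviewed drafts out of the dataset, which is one simple way to encode the human expert oversight described above.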

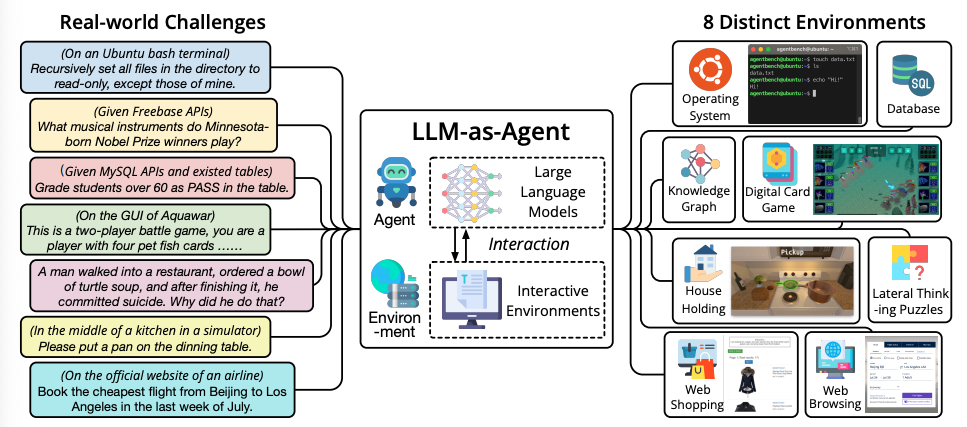

That's when we turned to agent evaluation, a feature built into Google ADK that lets you automate agent testing through similarity metrics and tool trajectory validation.

The LlmAgent (often aliased simply as Agent) is a core component in ADK, acting as the "thinking" part of your application. It leverages the power of a large language model (LLM) for reasoning, understanding natural language, making decisions, generating responses, and interacting with tools. The ADK provides a comprehensive set of tools for evaluating agents, contributing to the project, and leveraging the API to build sophisticated agent applications.
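The two kinds of checks mentioned above, tool trajectory validation and response similarity, can be sketched in plain Python. This is an illustration of the general technique, not the ADK's implementation: the function names are hypothetical, the trajectory check assumes a strict same-order exact match, and the similarity metric is a simple unigram-overlap F1 score chosen as a stand-in.

```python
import re
from collections import Counter


def trajectory_matches(expected, actual):
    """Return 1.0 if the agent called the same tools, with the same
    arguments, in the same order as the reference; otherwise 0.0."""
    return 1.0 if list(expected) == list(actual) else 0.0


def unigram_f1(reference, candidate):
    """Word-overlap F1 between a reference answer and the agent's answer.

    Both texts are lowercased and split into word tokens; overlap counts
    each shared word at most as often as it appears in both texts.
    """
    ref = Counter(re.findall(r"\w+", reference.lower()))
    cand = Counter(re.findall(r"\w+", candidate.lower()))
    overlap = sum((ref & cand).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # "lookup_drug_interactions" is a made-up tool name for illustration.
    expected_calls = [{"name": "lookup_drug_interactions",
                       "args": {"drug_a": "warfarin", "drug_b": "ibuprofen"}}]
    actual_calls = [{"name": "lookup_drug_interactions",
                     "args": {"drug_a": "warfarin", "drug_b": "ibuprofen"}}]
    print(trajectory_matches(expected_calls, actual_calls))
    print(unigram_f1("Check with your clinician first.",
                     "Please check with your clinician."))
```

Word overlap is cheap and deterministic, but it rewards shared vocabulary rather than clinical correctness, which is one reason to pair automated scores with expert review.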

This lab focuses on using the Agent Development Kit (ADK) to trace and evaluate agent decisions. You will learn how to define specific evaluation criteria for your agent's reasoning. Learn how to effectively evaluate AI agents with a full-stack approach, covering key metrics, measurement methods, and a 5-step evaluation loop using the Agent Development Kit (ADK) and ...

This article is part of a series dedicated to exploring the various aspects of agent development using Google's Agent Development Kit (ADK). ADK provides several built-in criteria for evaluating agent performance, ranging from tool trajectory matching to LLM-based response quality assessment. For a detailed list of available criteria and guidance on when to use them, please see Evaluation Criteria.
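As a rough illustration of how evaluation criteria turn scores into a pass or fail decision, the sketch below compares each metric against a minimum threshold. The metric names (`tool_trajectory_score`, `response_quality_score`) and the threshold values are hypothetical placeholders, not the ADK's actual criterion names or defaults; consult the Evaluation Criteria documentation for those.

```python
def evaluate_case(scores, criteria):
    """Compare each metric score against its minimum threshold.

    Returns (passed, failures), where failures maps each failing metric
    to a (score, threshold) pair. A metric with no score counts as failed.
    """
    failures = {}
    for metric, threshold in criteria.items():
        score = scores.get(metric)
        if score is None or score < threshold:
            failures[metric] = (score, threshold)
    return (not failures, failures)


# Hypothetical criteria: strict tool usage, somewhat looser response quality.
CRITERIA = {
    "tool_trajectory_score": 1.0,
    "response_quality_score": 0.8,
}

if __name__ == "__main__":
    passed, failures = evaluate_case(
        {"tool_trajectory_score": 1.0, "response_quality_score": 0.5},
        CRITERIA,
    )
    print(passed, failures)
```

Reporting the failing metrics alongside the overall verdict makes it easier to tell a wrong tool call from a poorly worded answer when triaging failed cases.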

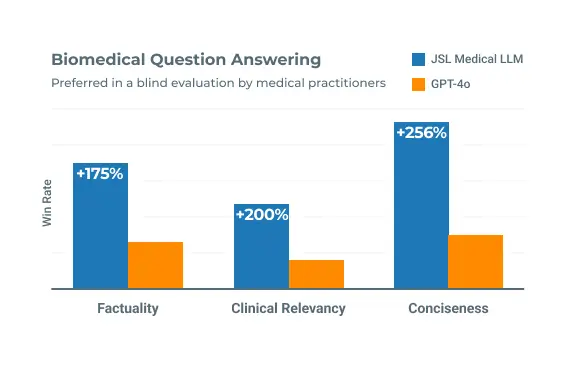

