Evaluating Large Language Models Nextbigfuture

There are four major aspects of LLMs: pre-training, adaptation tuning, utilization, and capacity evaluation. Here is one of the new summaries of the available resources for developing LLMs and of the open issues for future directions. To capitalize on LLM capabilities and to ensure their safe and beneficial development, it is critical to conduct a rigorous and comprehensive evaluation of LLMs. This survey endeavors to offer a panoramic perspective on the evaluation of LLMs.

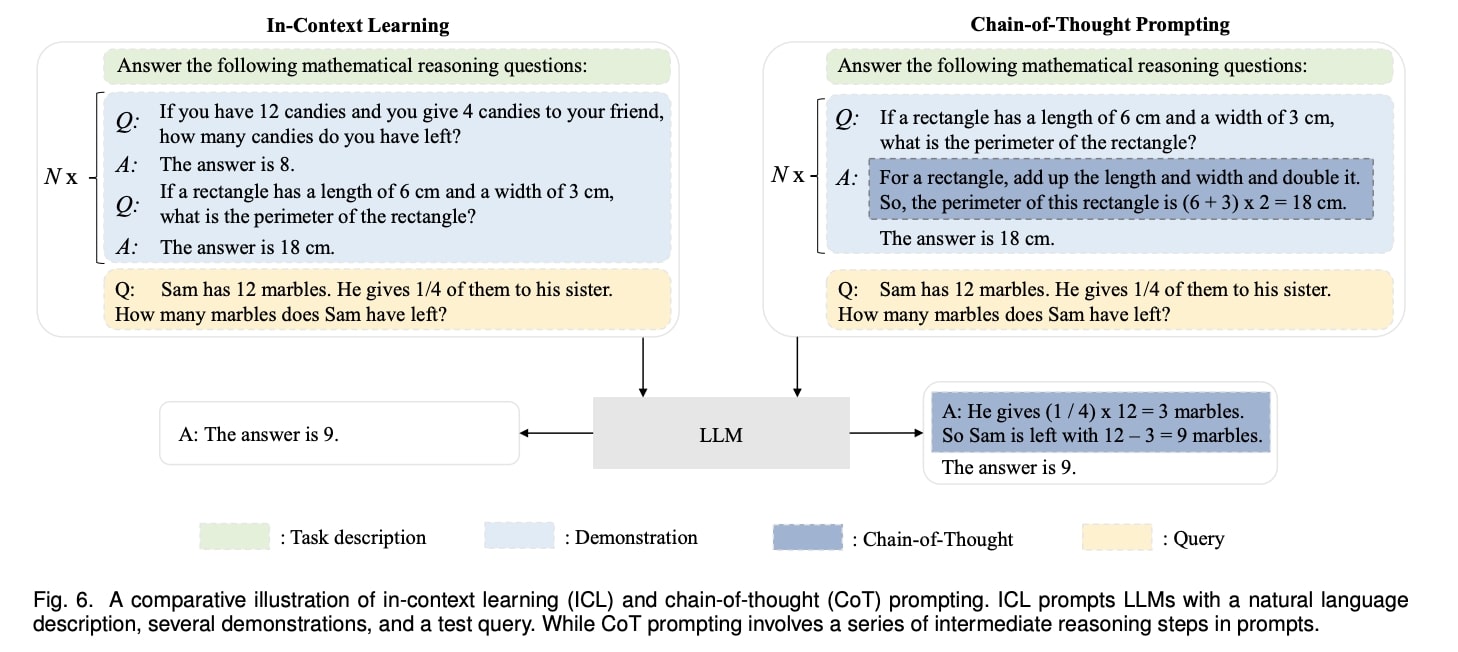

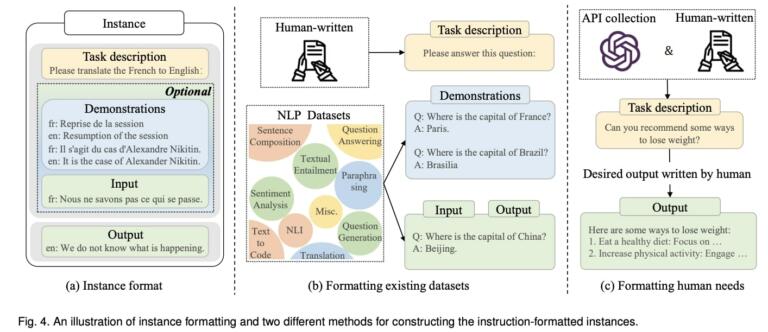

In this systematic literature review, we explore each of these aspects in depth. Finally, we conclude with insights and future directions for advancing the efficiency and applicability of large language models. Large language models (LLMs) have revolutionized natural language processing tasks across many domains; however, how to effectively evaluate them and adapt them to specific application contexts remains an open challenge. LLMs have transformed natural language processing (NLP) by providing previously unheard-of capabilities in text production, translation, … Over the past years, significant efforts have been made to examine LLMs from various perspectives. This paper presents a comprehensive review of these evaluation methods for LLMs, focusing on three key dimensions: what to evaluate, where to evaluate, and how to evaluate.
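To make the three dimensions concrete, here is a minimal Python sketch, not taken from the survey, that maps them onto code: *what* is the capability label, *where* is a benchmark of (prompt, reference) pairs, and *how* is a per-example metric. The `EvalSpec` and `run_eval` names, the toy arithmetic benchmark, and the stub model are all hypothetical; a real setup would call an actual LLM and use an established benchmark.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical types for illustration: a "model" is any function from prompt to text,
# and a metric scores one (prediction, reference) pair in [0, 1].
Model = Callable[[str], str]
Metric = Callable[[str, str], float]


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the stripped, lowercased strings are identical, else 0.0."""
    return float(prediction.strip().lower() == reference.strip().lower())


@dataclass
class EvalSpec:
    what: str                      # capability under test, e.g. "arithmetic"
    where: List[Tuple[str, str]]   # benchmark: (prompt, reference) pairs
    how: Metric                    # scoring rule applied to each example


def run_eval(model: Model, spec: EvalSpec) -> float:
    """Average the metric over every example in the benchmark."""
    if not spec.where:
        raise ValueError("benchmark is empty")
    scores = [spec.how(model(prompt), ref) for prompt, ref in spec.where]
    return sum(scores) / len(scores)


if __name__ == "__main__":
    # Toy benchmark and stub model, both invented for this example.
    toy_benchmark = [("2 + 2 =", "4"), ("3 + 5 =", "8"), ("10 - 7 =", "3")]
    answers = {"2 + 2 =": "4", "3 + 5 =": "8", "10 - 7 =": "2"}
    stub_model: Model = lambda prompt: answers[prompt]
    spec = EvalSpec(what="arithmetic", where=toy_benchmark, how=exact_match)
    print(f"{spec.what}: {run_eval(stub_model, spec):.3f}")
```

Keeping the three dimensions as separate fields makes it easy to swap the benchmark or the metric without touching the evaluation loop.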

Large language models (LLMs) have recently gained significant attention due to their remarkable capabilities in performing diverse tasks across various domains. By identifying the gaps in current methodologies, the paper proposes a hybrid, multi-layered evaluation framework designed to address the limitations of isolated metrics and offer a more …

Automatic evaluation is the holy grail, but it is still a work in progress. Without it, engineers are left eyeballing results, testing on a limited set of examples, and waiting a day to see metrics. Model evaluation was the key to successfully putting an LLM into production.

Abstract: The rapid advancement of large language models (LLMs) has revolutionized various fields, yet their deployment presents unique evaluation challenges. This whitepaper details the …
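As a rough illustration of automatic evaluation that does not rely on a single isolated metric, the sketch below scores predictions with two simple metrics, exact match and token-overlap F1, reports them side by side, and flags examples where they disagree (partial overlap but no exact match) for human review. This is not the hybrid framework the paper proposes; the function names, the whitespace tokenizer, and the `threshold` value are assumptions chosen for illustration.

```python
from collections import Counter
from statistics import mean
from typing import Dict, List


def normalize(text: str) -> List[str]:
    """Lowercase and split on whitespace (a deliberately simple tokenizer)."""
    return text.lower().split()


def exact_match(pred: str, ref: str) -> float:
    """1.0 if the normalized token sequences are identical, else 0.0."""
    return float(normalize(pred) == normalize(ref))


def token_f1(pred: str, ref: str) -> float:
    """Harmonic mean of token precision and recall (bag-of-tokens overlap)."""
    p, r = normalize(pred), normalize(ref)
    if not p or not r:
        return float(p == r)  # both empty counts as a match; one empty does not
    overlap = sum((Counter(p) & Counter(r)).values())
    if overlap == 0:
        return 0.0
    precision, recall = overlap / len(p), overlap / len(r)
    return 2 * precision * recall / (precision + recall)


def evaluate(preds: List[str], refs: List[str]) -> Dict[str, float]:
    """Report each metric's average separately instead of collapsing to one number."""
    if len(preds) != len(refs) or not preds:
        raise ValueError("need equal, non-empty lists of predictions and references")
    metrics = {"exact_match": exact_match, "token_f1": token_f1}
    return {name: mean([fn(p, r) for p, r in zip(preds, refs)])
            for name, fn in metrics.items()}


def flag_for_review(preds: List[str], refs: List[str], threshold: float = 0.3) -> List[int]:
    """Indices with partial token overlap (F1 >= threshold) but no exact match."""
    return [i for i, (p, r) in enumerate(zip(preds, refs))
            if exact_match(p, r) == 0.0 and token_f1(p, r) >= threshold]


if __name__ == "__main__":
    # Made-up predictions and references, for illustration only.
    preds = ["Paris", "the capital is Rome", "Berlin"]
    refs = ["paris", "Rome", "Madrid"]
    print(evaluate(preds, refs))
    print("review:", flag_for_review(preds, refs))
```

Flagging only the disagreements keeps manual review focused on ambiguous cases instead of eyeballing every output.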

