Evaluating Language Identification Performance

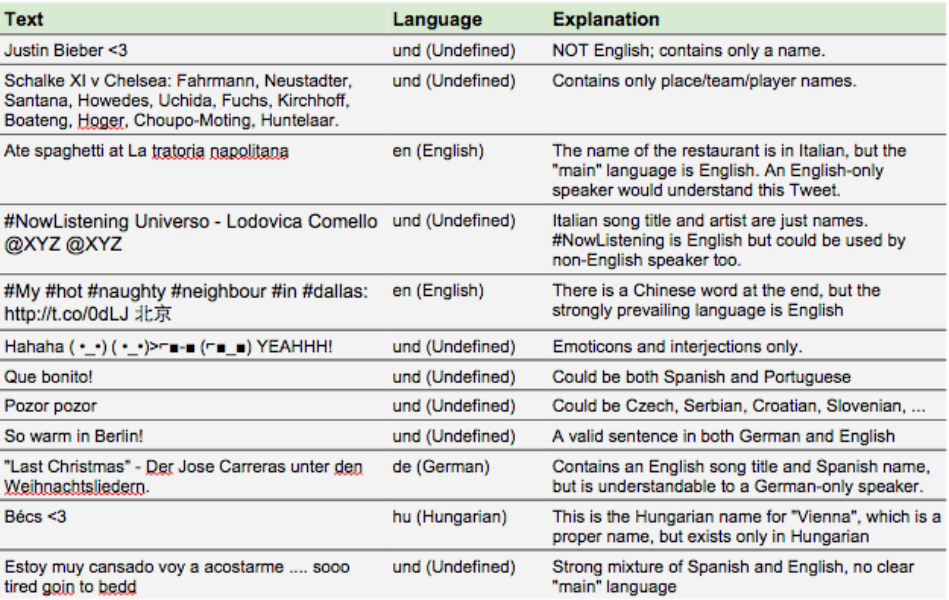

In this paper, we introduce CommonLID, a community-driven, human-annotated LID benchmark for the web domain, covering 109 languages. Many of the included languages have been previously under-served, making CommonLID a key resource for developing more representative, high-quality text corpora.

There are many steps involved in a typical natural language processing pipeline, but one of the first and most fundamental is language identification: determining the language in which a piece of text is written. This is generally not a hard problem.

This study presents an in-depth analysis of cross-domain language identification methods, evaluating their performance, capabilities, and inherent limitations.

Automatic speech recognition, speaker verification, and language identification are compared. What distinguishes different spoken languages is discussed. The utility of methods is noted in terms of performance, using accuracy, complexity, and cost as measures.

We present a LID model which achieves a macro-average F1 score of 0.93 and a false positive rate of 0.033% across 201 languages, outperforming previous work. We achieve this by training on a curated dataset of monolingual data, the reliability of which we ensure by manually auditing a sample from each source and each language.

This study compares the performance of five algorithms (naïve Bayes, k-nearest neighbors (kNN), least squares, Kullback-Leibler divergence, and the Kolmogorov-Smirnov test) to determine the most accurate and efficient method for language identification.
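To make the first of the five algorithms concrete, here is a minimal sketch of a naïve Bayes language identifier over character trigrams. It is not the implementation from any of the studies quoted above: the `NgramNaiveBayes` class, the trigram features, the add-one smoothing, and the four-sentence toy training set are all assumptions chosen for illustration.

```python
import math
from collections import Counter, defaultdict


def char_ngrams(text, n=3):
    """Return overlapping character n-grams of a lowercased, space-padded text."""
    padded = f" {text.lower()} "
    return [padded[i:i + n] for i in range(len(padded) - n + 1)]


class NgramNaiveBayes:
    """Hypothetical multinomial naive Bayes over character n-grams."""

    def __init__(self, n=3, alpha=1.0):
        self.n = n                          # n-gram length (assumed: trigrams)
        self.alpha = alpha                  # additive smoothing constant
        self.counts = defaultdict(Counter)  # language -> n-gram counts
        self.totals = Counter()             # language -> total n-grams seen
        self.docs = Counter()               # language -> number of training texts
        self.vocab = set()                  # all n-grams seen in training

    def fit(self, samples):
        for text, lang in samples:
            grams = char_ngrams(text, self.n)
            self.counts[lang].update(grams)
            self.totals[lang] += len(grams)
            self.docs[lang] += 1
            self.vocab.update(grams)
        return self

    def predict(self, text):
        grams = char_ngrams(text, self.n)
        n_docs = sum(self.docs.values())
        vocab_size = len(self.vocab)
        best_lang, best_score = None, -math.inf
        for lang in self.docs:
            # log prior + sum of smoothed log likelihoods
            score = math.log(self.docs[lang] / n_docs)
            denom = self.totals[lang] + self.alpha * vocab_size
            for gram in grams:
                score += math.log((self.counts[lang][gram] + self.alpha) / denom)
            if score > best_score:
                best_lang, best_score = lang, score
        return best_lang


# Toy training data, invented for this example only.
TRAIN = [
    ("the quick brown fox jumps over the lazy dog", "eng"),
    ("this is a short sentence written in english", "eng"),
    ("el rápido zorro marrón salta sobre el perro perezoso", "spa"),
    ("esta es una frase corta escrita en español", "spa"),
]

model = NgramNaiveBayes().fit(TRAIN)
print(model.predict("the dog is in the house"))
print(model.predict("el perro está en la casa"))
```

A realistic system would train on far more text per language and would likely need a confidence threshold for inputs in languages it has never seen; this sketch always returns one of the training languages.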

In this section, we introduce some of the evaluation metrics and the evaluation setups for language identification. Unsurprisingly, these metrics and setups have strong overlap with other text classification tasks.

In this paper, we present the evaluation of seven language identification methods, carried out in tests covering 285 languages with an out-of-domain test set. The evaluated methods are, furthermore, described using unified notation.

As part of this, one of our long-term research goals has been to improve the language coverage of our crawls. We have been exploring several approaches towards this aim, but the most important for this blog post are our efforts to improve automatic language identification.
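The macro-average F1 score and false positive rate quoted earlier are standard classification metrics, and the sketch below shows one way to compute them from gold and predicted language labels. The function names and the six-item example are hypothetical. The quoted text does not say how the false positive rate is aggregated, so averaging it per language, as done here, is an assumption.

```python
from collections import Counter


def per_language_counts(gold, pred):
    """Count true positives, false positives and false negatives per language."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        if g == p:
            tp[g] += 1
        else:
            fp[p] += 1  # p was predicted but is wrong
            fn[g] += 1  # g was the right answer but was missed
    return tp, fp, fn


def macro_f1(gold, pred):
    """Unweighted mean of per-language F1 over the languages in the gold labels."""
    tp, fp, fn = per_language_counts(gold, pred)
    scores = []
    for lang in sorted(set(gold)):
        denom = 2 * tp[lang] + fp[lang] + fn[lang]
        scores.append(2 * tp[lang] / denom if denom else 0.0)
    return sum(scores) / len(scores)


def macro_fpr(gold, pred):
    """Unweighted mean of per-language false positive rate (assumed aggregation)."""
    tp, fp, fn = per_language_counts(gold, pred)
    total = len(gold)
    rates = []
    for lang in sorted(set(gold)):
        negatives = total - (tp[lang] + fn[lang])  # items whose gold label is not lang
        rates.append(fp[lang] / negatives if negatives else 0.0)
    return sum(rates) / len(rates)


# Invented labels for illustration only.
gold = ["eng", "eng", "spa", "spa", "fra", "fra"]
pred = ["eng", "spa", "spa", "spa", "fra", "eng"]

print(f"macro F1:  {macro_f1(gold, pred):.4f}")
print(f"macro FPR: {macro_fpr(gold, pred):.4f}")
```

Macro averaging gives every language equal weight regardless of how many test items it has, which is why it is a common choice when many low-resource languages are evaluated together.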

