Encoder-Decoder Architecture for Seq2seq Models: LSTM-Based Seq2seq Explained

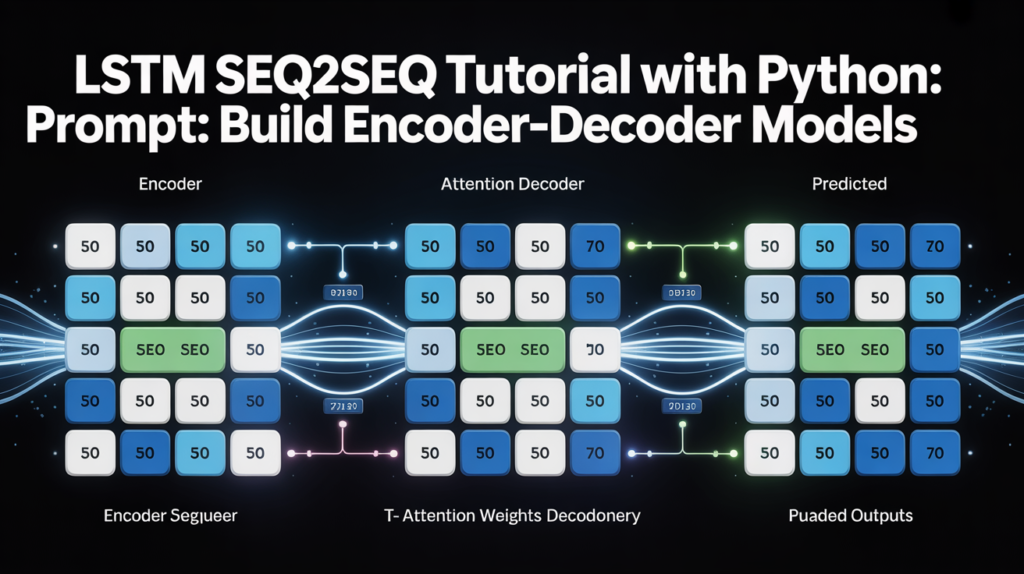

Sequence-to-sequence (seq2seq) models are neural networks designed to transform one sequence into another, even when the input and output lengths differ. They are built on an encoder-decoder architecture: an LSTM-based encoder reads the input sequence and captures its essence, and an LSTM-based decoder generates the corresponding output sequence from that summary. This split overcomes a limitation of a standard LSTM used on its own, which emits one output per input step and so ties the output length to the input length.
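
The split between encoder and decoder can be sketched in PyTorch as follows. This is a minimal illustration rather than the implementation from any particular tutorial: the class names `Encoder` and `Decoder`, the vocabulary sizes, and the layer dimensions are all assumptions chosen for the example. It shows a source batch of length 7 producing logits for a target of length 5, so the two lengths are independent.

```python
import torch
import torch.nn as nn


class Encoder(nn.Module):
    """Reads the source tokens and returns the final LSTM state."""

    def __init__(self, vocab_size, emb_dim, hidden_dim):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, emb_dim)
        self.lstm = nn.LSTM(emb_dim, hidden_dim, batch_first=True)

    def forward(self, src):
        # src: (batch, src_len) tensor of token ids
        _, (h, c) = self.lstm(self.embedding(src))
        return h, c  # each has shape (1, batch, hidden_dim)


class Decoder(nn.Module):
    """Generates target-side logits, starting from the encoder state."""

    def __init__(self, vocab_size, emb_dim, hidden_dim):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, emb_dim)
        self.lstm = nn.LSTM(emb_dim, hidden_dim, batch_first=True)
        self.out = nn.Linear(hidden_dim, vocab_size)

    def forward(self, tgt_in, state):
        # tgt_in: (batch, tgt_len); state: (h, c) from the encoder
        output, state = self.lstm(self.embedding(tgt_in), state)
        return self.out(output), state  # logits: (batch, tgt_len, vocab_size)


torch.manual_seed(0)
# Hypothetical sizes, chosen only for the demonstration.
encoder = Encoder(vocab_size=50, emb_dim=16, hidden_dim=32)
decoder = Decoder(vocab_size=60, emb_dim=16, hidden_dim=32)

src = torch.randint(0, 50, (4, 7))     # 4 source sequences of length 7
tgt_in = torch.randint(0, 60, (4, 5))  # 4 target prefixes of length 5

context = encoder(src)                 # (h, c) summary of the source
logits, _ = decoder(tgt_in, context)
print(context[0].shape, logits.shape)
```

The only thing passed from encoder to decoder is the `(h, c)` pair, which is why both networks must share the same hidden size in this sketch.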

This post gives a clear and practical explanation of the encoder-decoder (seq2seq) architecture, covering training, backpropagation, prediction, teacher forcing, and LSTM improvements. It covers the intuition behind encoder-decoder models, why they are essential for solving sequence-to-sequence problems, and their architecture. In this post, you will reuse the same dataset and build a seq2seq model for the same task. The seq2seq model consists of two main components: an encoder and a decoder. The encoder processes the input sequence (French sentences) and generates a fixed-size representation, known as the context vector.
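
Teacher forcing during training can be sketched as below. In this setup the decoder receives the ground-truth target shifted right by one position as its input, and is trained to predict the next token at each step. Everything here is an assumption made for illustration: the vocabulary sizes, the random token batch standing in for a real dataset, the convention that column 0 of `tgt` plays the role of a start token, and the helper name `train_step`.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
SRC_VOCAB, TGT_VOCAB, EMB, HID = 20, 25, 8, 16  # hypothetical sizes

src_emb = nn.Embedding(SRC_VOCAB, EMB)
tgt_emb = nn.Embedding(TGT_VOCAB, EMB)
enc_lstm = nn.LSTM(EMB, HID, batch_first=True)
dec_lstm = nn.LSTM(EMB, HID, batch_first=True)
proj = nn.Linear(HID, TGT_VOCAB)

modules = nn.ModuleList([src_emb, tgt_emb, enc_lstm, dec_lstm, proj])
optimizer = torch.optim.Adam(modules.parameters(), lr=1e-2)
loss_fn = nn.CrossEntropyLoss()

# Random stand-in data; a real run would use tokenized sentence pairs.
src = torch.randint(0, SRC_VOCAB, (3, 6))
tgt = torch.randint(0, TGT_VOCAB, (3, 5))  # column 0 acts as the start token


def train_step(src, tgt):
    """One gradient step with teacher forcing on a single batch."""
    optimizer.zero_grad()
    _, state = enc_lstm(src_emb(src))          # context vector (h, c)
    dec_in, dec_target = tgt[:, :-1], tgt[:, 1:]  # shift target by one
    out, _ = dec_lstm(tgt_emb(dec_in), state)  # decoder sees true tokens
    logits = proj(out)                         # (batch, tgt_len - 1, TGT_VOCAB)
    loss = loss_fn(logits.reshape(-1, TGT_VOCAB), dec_target.reshape(-1))
    loss.backward()                            # backpropagate through both LSTMs
    optimizer.step()
    return loss.item()


losses = [train_step(src, tgt) for _ in range(20)]
print(f"first loss {losses[0]:.3f}, last loss {losses[-1]:.3f}")
```

Because the loss is backpropagated through the decoder and then through the context vector into the encoder, both networks are trained jointly from the same objective.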

The seq2seq model in this post uses an encoder-decoder architecture built on the LSTM (long short-term memory), a type of RNN: the encoder network encodes the input-language sequence into a single vector, also called the context vector. The material covers context vectors, teacher forcing, BLEU evaluation, and a complete PyTorch implementation. We explore the encoder-decoder architecture using LSTMs, tracing exactly how data flows from an input sequence, through a bottleneck context vector, and into a generated output. To map an input sequence to an output sequence of a different length, the sequence-to-sequence (seq2seq) framework was developed, primarily using recurrent architectures like LSTMs or GRUs. The core idea is to use two separate RNNs: one to process the input sequence (the encoder) and another to generate the output sequence (the decoder).
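
The data flow at prediction time, from input sequence through the context vector to a generated output, can be sketched with a greedy decoding loop. This is one simple decoding strategy among several, not necessarily the one used in the source material. The token ids `SOS` and `EOS`, the `MAX_LEN` cap, the layer sizes, and the function name `greedy_decode` are hypothetical, and the weights are untrained, so the generated ids are meaningless beyond showing the mechanics.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
SRC_VOCAB, TGT_VOCAB, EMB, HID = 20, 25, 8, 16  # hypothetical sizes
SOS, EOS, MAX_LEN = 1, 2, 10                    # hypothetical special ids and cap

src_emb = nn.Embedding(SRC_VOCAB, EMB)
tgt_emb = nn.Embedding(TGT_VOCAB, EMB)
enc_lstm = nn.LSTM(EMB, HID, batch_first=True)
dec_lstm = nn.LSTM(EMB, HID, batch_first=True)
proj = nn.Linear(HID, TGT_VOCAB)


@torch.no_grad()
def greedy_decode(src):
    """Generate target ids for one source sequence of shape (1, src_len)."""
    # 1. Encoder: compress the whole input into the context vector (h, c).
    _, state = enc_lstm(src_emb(src))
    # 2. Decoder: start from SOS and feed back its own prediction each step.
    token = torch.tensor([[SOS]])
    generated = []
    for _ in range(MAX_LEN):
        out, state = dec_lstm(tgt_emb(token), state)
        token = proj(out[:, -1]).argmax(dim=-1, keepdim=True)  # shape (1, 1)
        if token.item() == EOS:
            break
        generated.append(token.item())
    return generated


output_ids = greedy_decode(torch.randint(0, SRC_VOCAB, (1, 7)))
print(output_ids)
```

Unlike training with teacher forcing, the decoder here never sees ground-truth tokens: each step consumes the token it predicted at the previous step, and the output length is decided by the model (or the `MAX_LEN` cap) rather than by the input length.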
