Embedding Models (English): A CompendiumLabs Collection

Embedding Models AI Glossary by Posium. CompendiumLabs's collections: CompendiumLabs/bge-small-en-v1.5-gguf, CompendiumLabs/bge-base-en-v1.5-gguf, CompendiumLabs/bge-large-en-v1.5-gguf.

Embedding Models Comparison: a practical guide to the best embedding models in 2026. Compare features, performance, and use cases for building scalable AI systems. Explore Embed models for text classification and embedding generation in English and multiple languages, with details on dimensions and endpoints. The Amazon Titan Embedding Text v2 model is optimized for English, with multilingual support for the following languages. Cross-language queries (such as providing a knowledge base in Korean and querying it in German) will return suboptimal results. Compare the top code embedding models for semantic code search, code completion, and repository analysis.

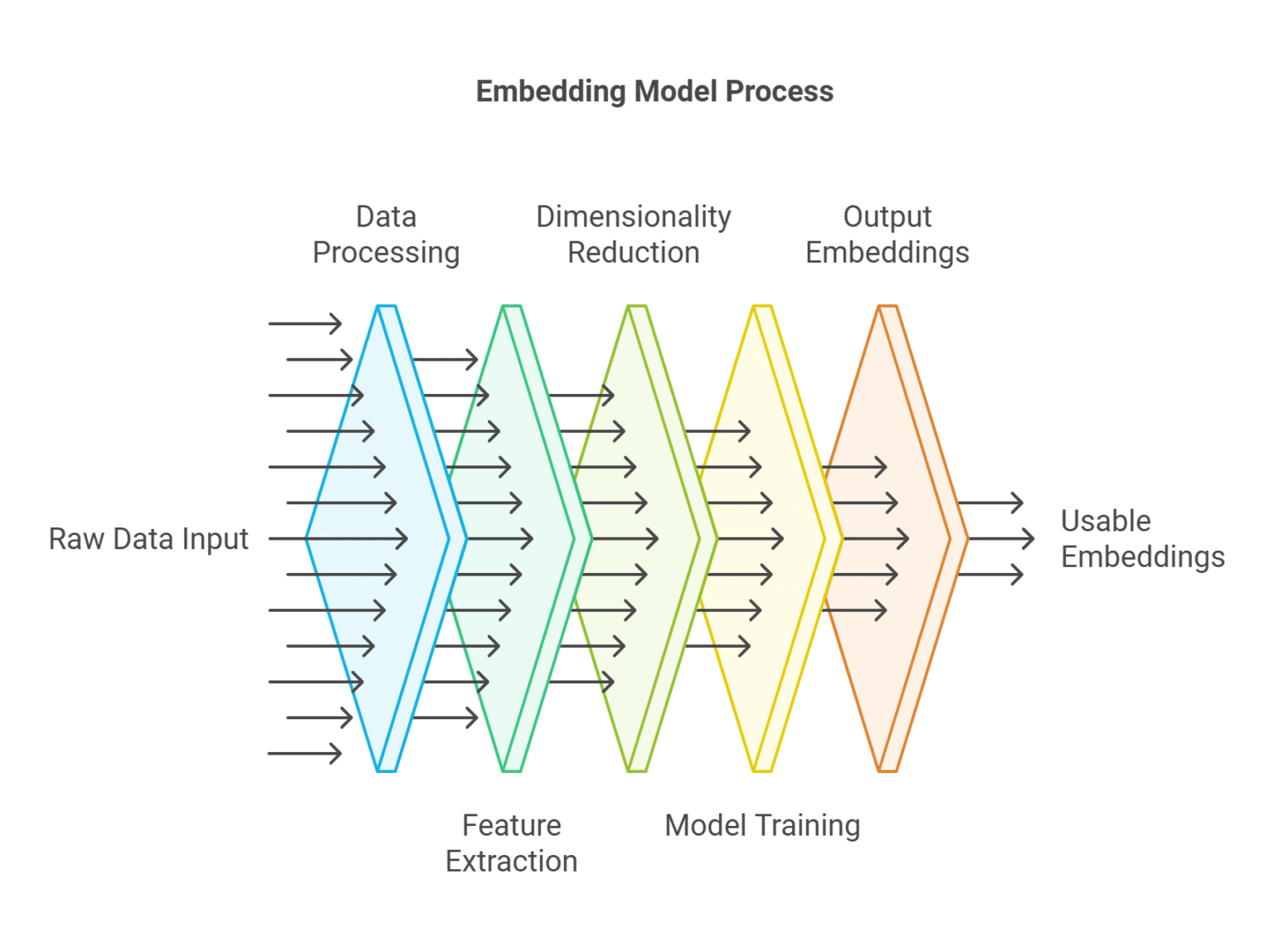

What Are Embedding Models? An Overview (The Couchbase Blog). The IBM Granite Embedding 30M and 278M models are text-only dense bi-encoder embedding models, with 30M available in English only and 278M serving multilingual use cases. CompendiumLabs ziggy: embedding, quantization, and vector indexing, designed for speed. This is a multilingual sentence embedding model that maps sentences and paragraphs to a 768-dimensional vector space, suitable for tasks such as clustering and semantic search. We compared 11 open-source embedding models by benchmarking their performance for RAG.
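To illustrate how a vector space supports semantic search, here is a minimal sketch that ranks documents by cosine similarity to a query. It makes several assumptions: the vectors are tiny hand-written 4-dimensional stand-ins for real 768-dimensional model output, and the function names `cosine_similarity` and `rank_by_similarity` are hypothetical helpers, not part of any library mentioned above.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, doc_vecs):
    """Return (doc_index, score) pairs, most similar document first."""
    scores = [(i, cosine_similarity(query_vec, v)) for i, v in enumerate(doc_vecs)]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for embeddings produced by a real model (assumption).
docs = [
    [1.0, 0.0, 0.0, 0.0],
    [0.9, 0.1, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
]
query = [1.0, 0.05, 0.0, 0.0]

ranking = rank_by_similarity(query, docs)
for index, score in ranking:
    print(f"doc {index}: {score:.4f}")
```

In practice the vectors would come from an embedding model, and a vector index would likely replace the linear scan once the collection grows large.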
