Eigenvectors, Eigenvalues, and PCA: Overview

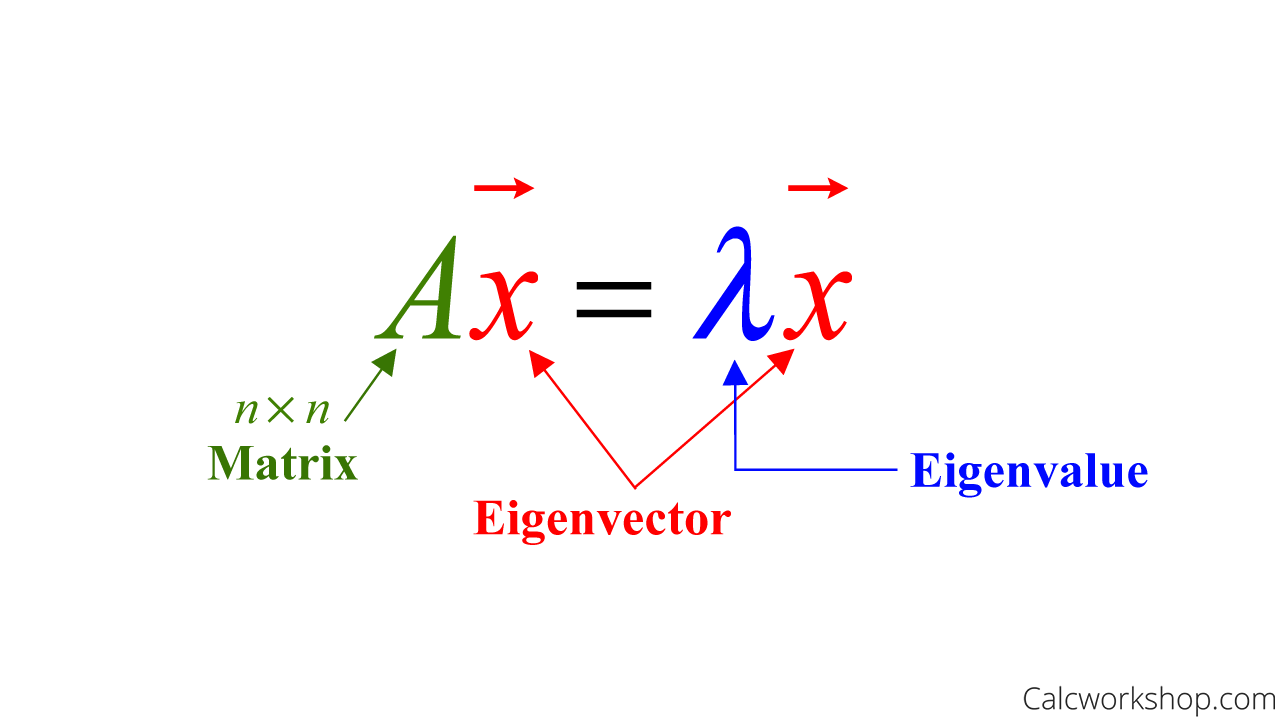

In PCA, the eigenvectors of the data's covariance matrix indicate the directions of the axes along which the data varies the most. Each eigenvector has a corresponding eigenvalue that quantifies the amount of variance captured along its direction. PCA selects the eigenvectors with the largest eigenvalues.

More generally, eigenvalues and eigenvectors are fundamental concepts in linear algebra, used in applications such as matrix diagonalization, stability analysis, and data analysis (e.g., principal component analysis). They are associated with a square matrix and provide insight into its properties.
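The selection step described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function name `pca_eig`, the synthetic two-feature data set, and the choice of `k=1` are all assumptions made for the example.

```python
import numpy as np


def pca_eig(X, k):
    """Project X (n_samples x n_features) onto its top-k principal components.

    Hypothetical helper for illustration only.
    """
    # Center each feature so the covariance matrix describes spread around the mean.
    Xc = X - X.mean(axis=0)
    # Covariance matrix of the features (columns are variables).
    cov = np.cov(Xc, rowvar=False)
    # eigh is meant for symmetric matrices; it returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(cov)
    # Reorder so the largest eigenvalue (most variance) comes first.
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # Keep the k eigenvectors with the largest eigenvalues and project onto them.
    components = eigvecs[:, :k]
    return Xc @ components, eigvals, components


# Synthetic data (an assumption): two strongly correlated features.
rng = np.random.default_rng(0)
t = rng.normal(size=500)
X = np.column_stack([t, 2 * t + 0.1 * rng.normal(size=500)])

projected, eigvals, components = pca_eig(X, k=1)
# Fraction of the total variance captured along each eigenvector.
explained = eigvals / eigvals.sum()
```

Because the two features are nearly collinear, almost all of the variance falls along the first eigenvector, so a single component retains most of the information in this toy data set.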

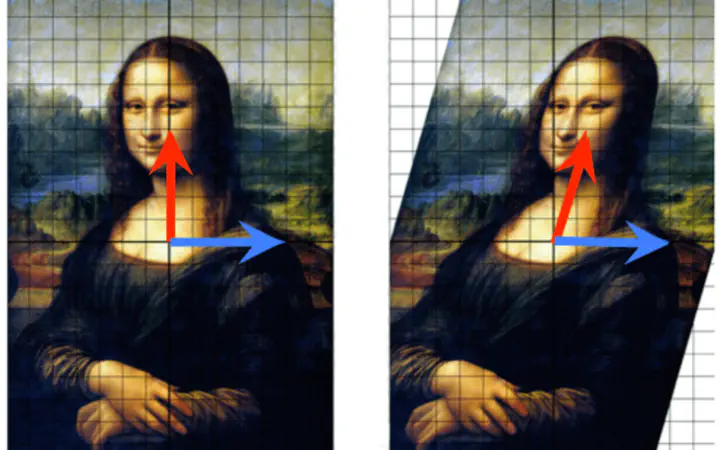

Principal component analysis (PCA) uses eigenvectors and eigenvalues to reduce the number of features in the data while keeping most of the variance (and therefore most of the information).

An eigenvector is a direction, not just a vector. If you multiply an eigenvector by any nonzero scalar, you get another eigenvector for the same eigenvalue: if $A\vec{v} = \lambda\vec{v}$, then it is also true that $A(c\vec{v}) = \lambda(c\vec{v})$ for any scalar $c \neq 0$. Notice that only square matrices can have eigenvectors.

In this article, we will cover one of the most fundamental concepts in linear algebra, eigenvectors and eigenvalues, and we will see how this concept was applied in the construction of the …

Principal component analysis (PCA) stands as one of the most fundamental techniques in machine learning for dimensionality reduction, data visualization, and feature extraction. At its mathematical core lies a powerful concept from linear algebra: eigenvalues and eigenvectors.
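The statement that an eigenvector is a direction rather than one particular vector can be checked numerically. The sketch below assumes a small symmetric example matrix and a hypothetical helper `is_eigenpair`; neither comes from the original text.

```python
import numpy as np


def is_eigenpair(A, v, lam):
    """Return True if A @ v equals lam * v up to floating-point tolerance.

    Hypothetical helper for illustration only.
    """
    return bool(np.allclose(A @ v, lam * v))


# Example matrix (an assumption); its eigenvalues are 1 and 3.
A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

eigvals, eigvecs = np.linalg.eigh(A)
lam = eigvals[-1]      # largest eigenvalue
v = eigvecs[:, -1]     # matching unit-length eigenvector

# Rescaling v by any nonzero scalar keeps it an eigenvector for the same eigenvalue.
scaled_ok = [is_eigenpair(A, c * v, lam) for c in (1.0, -3.0, 0.5)]
```

This is also why libraries are free to return eigenvectors with any sign or length. NumPy normalizes them to unit length, but the sign is arbitrary.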

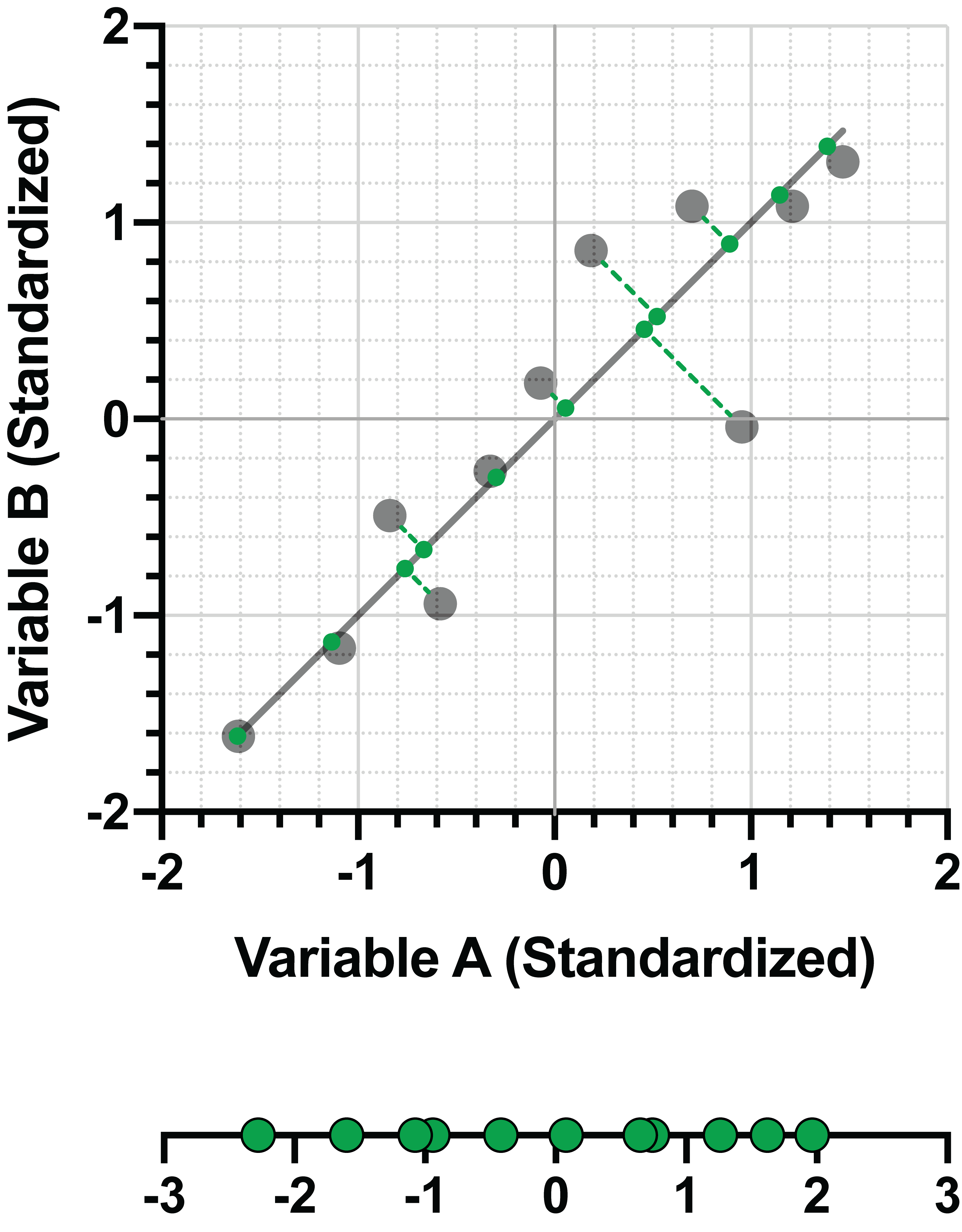

To get to PCA, we're going to quickly define some basic statistical ideas (mean, standard deviation, variance, and covariance) so we can weave them together later.

In today's pattern recognition class my professor talked about PCA, eigenvectors and eigenvalues. I understood the mathematics of it. If I'm asked to find eigenvalues etc., I'll do it correctly li…

The lesson provides an insightful exploration into eigenvectors, eigenvalues, and the covariance matrix, the key concepts underpinning the principal component analysis (PCA) technique for dimensionality reduction.

When we separate the input into eigenvectors, each eigenvector just goes its own way. The eigenvalues are the growth factors in $A^n x = \lambda^n x$. If all $|\lambda_i| < 1$ then $A^n$ will eventually approach zero. If any $|\lambda_i| > 1$ then $A^n$ eventually grows. If $\lambda = 1$ then $A^n x$ never changes (a steady state).
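The growth-factor behaviour of matrix powers can be illustrated with a small numerical experiment. The 2×2 matrix below is an assumed example with eigenvalues 1 and 0.5, and the scaled copies `shrink` and `grow` are introduced only to show the other two cases.

```python
import numpy as np

# Example matrix (an assumption): columns sum to 1, eigenvalues are 1 and 0.5.
A = np.array([[0.8, 0.3],
              [0.2, 0.7]])

eigvals, eigvecs = np.linalg.eig(A)

# For every eigenpair, applying A n times just multiplies the vector by lam**n.
n = 10
A_n = np.linalg.matrix_power(A, n)
pairs_ok = all(
    np.allclose(A_n @ v, lam**n * v)
    for lam, v in zip(eigvals, eigvecs.T)
)

# lam = 1: the eigenvector (0.6, 0.4) is a steady state, and other inputs
# converge to it because their lam = 0.5 part decays like 0.5**n.
steady = np.array([0.6, 0.4])
x_n = np.linalg.matrix_power(A, 50) @ np.array([1.0, 0.0])

# All |lam| < 1: powers approach the zero matrix.
shrink = np.linalg.matrix_power(0.5 * A, 50)
# Some |lam| > 1: powers grow without bound.
grow = np.linalg.matrix_power(2.0 * A, 50)
```

Here `x_n` ends up at the steady state, `shrink` is numerically zero, and the entries of `grow` are on the order of 2 to the 50th power.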

