Dreamfusionlab GitHub

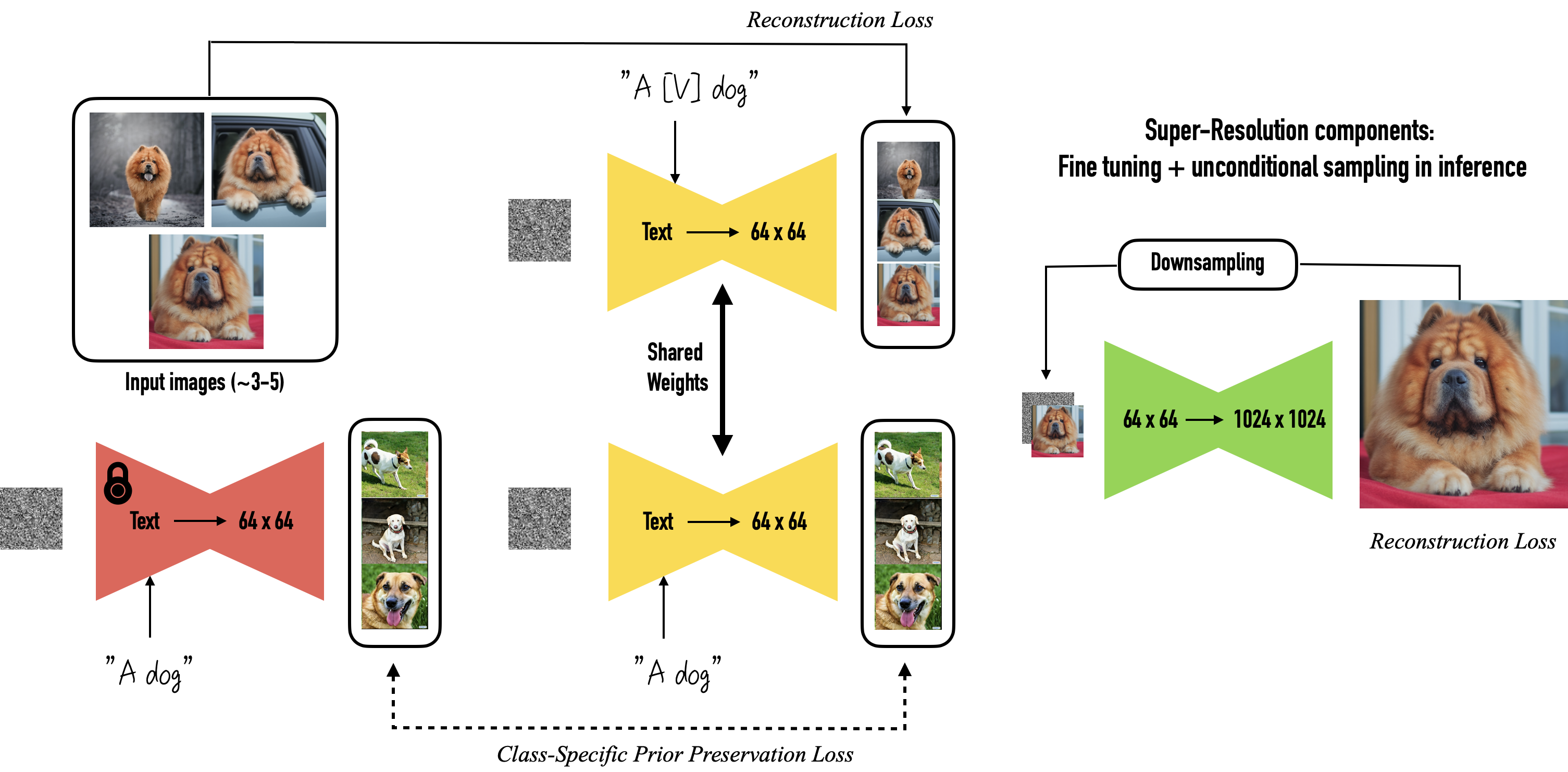

GitHub is where dreamfusionlab builds software. The DreamFusion paper introduces a loss based on probability density distillation (Score Distillation Sampling, SDS) that enables the use of a 2D diffusion model as a prior for optimization of a parametric image generator.
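To make the idea of using a diffusion model as a prior more concrete, here is a minimal sketch of a score-distillation style update on a toy 1D problem. It is not the code of any repository mentioned on this page. Everything in it is an assumption made for illustration: the "pretrained" prior is a Gaussian with a closed-form noise prediction, the generator is the identity `x = theta`, the weighting `w(t)` is constant, and the names `predict_noise`, `sds_grad`, and `optimize` are hypothetical.

```python
import math
import random

# Toy stand-in for a pretrained diffusion prior: the "data" distribution is a
# 1D Gaussian N(MU, S^2), for which the ideal noise prediction has a closed form.
MU, S = 1.5, 0.5  # hypothetical values chosen for illustration


def predict_noise(x_t, alpha, sigma):
    """Ideal noise prediction for x_t = alpha * x + sigma * eps, x ~ N(MU, S^2)."""
    var_t = alpha ** 2 * S ** 2 + sigma ** 2   # variance of the noised marginal
    score = -(x_t - alpha * MU) / var_t        # d/dx_t of log p_t(x_t)
    return -sigma * score                      # eps_hat = -sigma * score


def sds_grad(theta, rng):
    """One stochastic score-distillation gradient for the generator x = theta."""
    t = rng.uniform(0.02, 0.98)                # random noise level
    sigma, alpha = t, math.sqrt(1.0 - t * t)   # variance-preserving schedule (assumed)
    eps = rng.gauss(0.0, 1.0)
    x = theta                                  # "render" the current parameters
    x_t = alpha * x + sigma * eps              # forward-diffuse the rendering
    weight = 1.0                               # constant w(t), an assumption
    dx_dtheta = 1.0                            # Jacobian of the identity generator
    # The prior's own Jacobian is not backpropagated: only the residual
    # (eps_hat - eps) is pushed through the generator.
    return weight * (predict_noise(x_t, alpha, sigma) - eps) * dx_dtheta


def optimize(theta=-2.0, steps=3000, lr=0.01, seed=0):
    """Plain gradient descent on theta using the stochastic SDS gradient."""
    rng = random.Random(seed)
    for _ in range(steps):
        theta -= lr * sds_grad(theta, rng)
    return theta


theta_final = optimize()
```

In this toy setting `theta_final` ends up close to `MU`, the mode of the prior. In a text-to-3D system the identity generator would be replaced by a differentiable renderer and the closed-form `predict_noise` by a text-conditioned diffusion network.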

ashawkey/stable-dreamfusion on GitHub is a PyTorch implementation of the text-to-3D model DreamFusion, powered by the Stable Diffusion text-to-2D model. It covers text-to-3D, image-to-3D, and mesh exportation with NeRF + diffusion.

The NeRF backbone is implemented with the multi-resolution grid encoder (implementation from torch-ngp), which enables much faster rendering (~10 FPS at 800x800). The vanilla NeRF backbone is also supported, but the Mip-NeRF backbone used in the paper is still not implemented. The snippets collected here disagree on the optimizer: one says the Adam optimizer is used with a larger initial learning rate, another says the Adan optimizer is the default. Currently, 10,000 training steps take about 3 hours on a V100. The multi-face Janus problem is listed as an issue the project tries to alleviate.
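As a rough illustration of what a multi-resolution grid encoder does, the sketch below stores small feature vectors on several grids of increasing resolution, interpolates each grid at the query point, and concatenates the results. It is a deliberately simplified assumption, not the torch-ngp implementation: it is 1D, dense (no hashing), and pure Python, whereas torch-ngp's encoder works on 3D points. The class name `MultiResGrid1D` and all parameter values are hypothetical.

```python
import random


class MultiResGrid1D:
    """Dense multi-resolution feature grids over [0, 1] with linear interpolation."""

    def __init__(self, base_res=4, num_levels=3, growth=2, feat_dim=2, seed=0):
        rng = random.Random(seed)
        self.levels = []
        for level in range(num_levels):
            res = base_res * growth ** level   # number of cells at this level
            # res + 1 grid vertices, each holding a small feature vector that
            # would be a trainable parameter in a real model.
            table = [[rng.uniform(-1e-2, 1e-2) for _ in range(feat_dim)]
                     for _ in range(res + 1)]
            self.levels.append((res, table))
        self.out_dim = num_levels * feat_dim

    def encode(self, x):
        """Return the concatenated, interpolated features for a point x in [0, 1]."""
        x = min(max(x, 0.0), 1.0)              # clamp to the grid domain
        features = []
        for res, table in self.levels:
            pos = x * res                      # continuous grid coordinate
            i0 = min(int(pos), res - 1)        # left vertex of the enclosing cell
            frac = pos - i0                    # position inside the cell, in [0, 1]
            lo, hi = table[i0], table[i0 + 1]
            features.extend((1.0 - frac) * a + frac * b for a, b in zip(lo, hi))
        return features


encoder = MultiResGrid1D()
code = encoder.encode(0.37)   # 3 levels * 2 features = 6 values
```

The encoded vector would then be fed to a small MLP that predicts density and color. Coarse levels capture smooth structure and fine levels capture detail, which is why a small network on top can be enough.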

A separate project is a minimal PyTorch implementation of DreamFusion and its variant Magic3D, which uses Instant-NGP as the neural renderer and Stable Diffusion or DeepFloyd IF as the guidance. Training takes ~30 min with Stable Diffusion and ~40 min with DeepFloyd IF on a single 3090.

If you are interested in contributing to the development of DreamFusion, you can visit the project's GitHub repository and explore the contribution guidelines. A related repository is smvorwerk/stable-dreamfusion-3d.
