Distributed Deep Neural Network Training Using MPI in Python

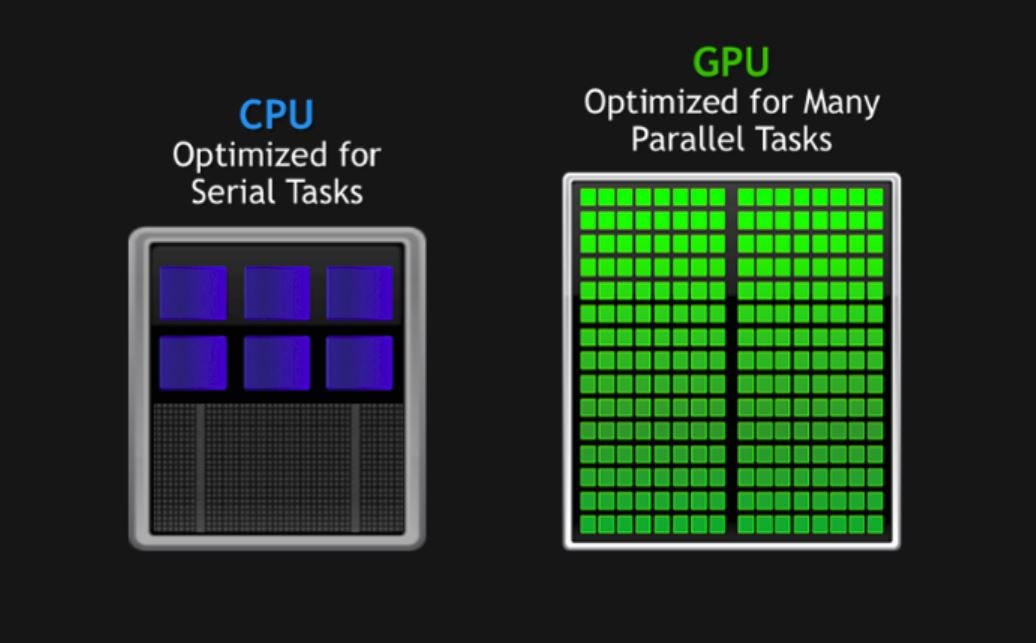

Training deep learning (DL) models remains a challenge because it requires a large amount of time and computational resources. Here we discuss distributed training of deep neural networks using MPI (Message Passing Interface) across multiple GPUs or CPUs. One example is a lightweight Python framework for distributed training of neural networks on multiple GPUs or CPUs, built on the popular Keras machine learning library.
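The core loop behind MPI-based data-parallel training can be sketched in plain Python. The example below is a minimal single-process simulation, assuming synchronous data parallelism with gradient averaging (a common scheme, not necessarily what any framework named here does). Each simulated worker holds a shard of the data and a full model replica, and the averaging step stands in for an MPI allreduce; in mpi4py that would be a call such as `comm.allreduce(value, op=MPI.SUM)` followed by division by the number of ranks. The helper names (`local_gradient`, `allreduce_mean`, `train`) and the toy linear-regression task are hypothetical.

```python
"""Single-process simulation of synchronous data-parallel SGD.

Each simulated worker holds a shard of the data and a replica of the model.
In a real MPI job every worker would be a separate rank, and the averaging
step would be a single collective call (e.g. mpi4py's comm.allreduce).
"""
import random


def local_gradient(w, b, shard):
    """Gradient of mean squared error for the model y = w * x + b on one shard."""
    gw = gb = 0.0
    for x, y in shard:
        err = (w * x + b) - y
        gw += 2 * err * x
        gb += 2 * err
    n = len(shard)
    return gw / n, gb / n


def allreduce_mean(values):
    """Stand-in for an MPI allreduce (sum) followed by division by the world size."""
    return sum(values) / len(values)


def train(num_workers=4, steps=500, lr=0.05, seed=0):
    rng = random.Random(seed)
    # Synthetic data from y = 3x + 1 (no noise), split round-robin across workers.
    data = [(x, 3 * x + 1) for x in (rng.uniform(-1, 1) for _ in range(400))]
    shards = [data[r::num_workers] for r in range(num_workers)]

    # Every replica starts from the same parameters (like a broadcast from rank 0).
    w, b = 0.0, 0.0
    for _ in range(steps):
        # Each worker computes a gradient on its own shard only.
        grads = [local_gradient(w, b, shard) for shard in shards]
        # Average gradients across workers so all replicas apply the same update.
        gw = allreduce_mean([g[0] for g in grads])
        gb = allreduce_mean([g[1] for g in grads])
        w -= lr * gw
        b -= lr * gb
    return w, b


if __name__ == "__main__":
    print(train())  # should be close to w = 3, b = 1
```

Because every shard has the same size here, averaging the per-worker gradients gives exactly the full-batch gradient, so the replicas stay identical and the result matches single-worker training up to floating-point rounding.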

Nodes in a distributed system can exchange data using MPI's send and receive functions, which lets several processes share the work of training and optimizing a neural network and thereby speeds it up. Theano-MPI is a Python framework for distributed training of deep learning models built in Theano. It implements data parallelism in several ways, e.g., bulk synchronous parallel (BSP), elastic averaging SGD (EASGD), and gossip SGD. With PyTorch's distributed package (torch.distributed) and its MPI backend, you can spread the training workload across multiple GPUs or nodes, significantly reducing training time. To use it, you need PyTorch together with an MPI implementation such as Open MPI or MPICH; note that the MPI backend is only available when PyTorch is built from source on a system where MPI is installed.
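Collectives such as allreduce can themselves be built from point-to-point send and receive operations. A minimal sketch, assuming the ring allreduce algorithm (a widely used technique that the text does not name; Theano-MPI and PyTorch may use other algorithms): each rank passes one chunk of its gradient vector to its right-hand neighbour per round, first accumulating partial sums (reduce-scatter) and then circulating the finished chunks (allgather). Everything runs in one process here; in a real mpi4py program each round would be a combined send/receive such as `comm.sendrecv`, which avoids deadlock when every rank sends and receives at once. The function names are hypothetical.

```python
"""Single-process simulation of a ring allreduce built from send/receive.

Each of the N simulated ranks owns a vector, which is split into N chunks.
Phase 1 (reduce-scatter): N-1 rounds in which every rank sends one chunk to
its right neighbour, which adds it to its own copy.
Phase 2 (allgather): N-1 rounds in which every rank forwards a fully reduced
chunk, which the receiver copies over its own.
"""


def chunk_bounds(length, n):
    """Split range(length) into n contiguous, nearly equal [start, stop) ranges."""
    base, extra = divmod(length, n)
    bounds, start = [], 0
    for i in range(n):
        stop = start + base + (1 if i < extra else 0)
        bounds.append((start, stop))
        start = stop
    return bounds


def ring_allreduce(vectors):
    n = len(vectors)
    bufs = [list(v) for v in vectors]  # each rank's local buffer (inputs untouched)
    if n == 1:
        return bufs
    bounds = chunk_bounds(len(bufs[0]), n)

    # Phase 1: reduce-scatter. In round s, rank i sends chunk (i - s) mod n.
    for s in range(n - 1):
        # Build every message first: in MPI the sends of one round happen concurrently.
        msgs = []
        for i in range(n):
            c = (i - s) % n
            lo, hi = bounds[c]
            msgs.append(((i + 1) % n, c, bufs[i][lo:hi]))
        for dst, c, payload in msgs:  # "receive" and accumulate
            lo, hi = bounds[c]
            for k, val in zip(range(lo, hi), payload):
                bufs[dst][k] += val
    # Now rank i holds the complete sum of chunk (i + 1) mod n.

    # Phase 2: allgather. In round s, rank i forwards chunk (i + 1 - s) mod n.
    for s in range(n - 1):
        msgs = []
        for i in range(n):
            c = (i + 1 - s) % n
            lo, hi = bounds[c]
            msgs.append(((i + 1) % n, c, bufs[i][lo:hi]))
        for dst, c, payload in msgs:  # "receive" and overwrite
            lo, hi = bounds[c]
            bufs[dst][lo:hi] = payload
    return bufs


if __name__ == "__main__":
    ranks = [[1, 2, 3, 4, 5], [10, 20, 30, 40, 50], [100, 200, 300, 400, 500]]
    print(ring_allreduce(ranks))
```

Each rank sends 2(N - 1) messages of roughly 1/N of the vector, so the data each rank transmits stays close to twice the vector size regardless of how many ranks take part.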

To carry out training and inference, PyDTNN exploits inter-process parallelism (via MPI) and intra-process parallelism (via multithreading), leveraging multicore processors and GPUs at the node level. As an example, a training script can implement distributed training of a convolutional neural network (CNN) on the MNIST dataset using PyTorch's DistributedDataParallel (DDP) to use multiple GPUs in parallel. MPI4DL v0.6 is a distributed, accelerated, and memory-efficient training framework for very high-resolution images that integrates spatial parallelism, bidirectional parallelism, layer parallelism, and pipeline parallelism. The rapid spread of deep learning (DL) technologies in recent years has been accelerated by the development of efficient frameworks for distributed training of deep neural networks (DNNs) on clusters.
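The idea behind spatial parallelism for very high-resolution images can be illustrated with a single convolution layer. The sketch below is a single-process simulation under stated assumptions: the image is split into horizontal bands, one per simulated worker, and before convolving each worker needs one "halo" row from each neighbouring band, which in an MPI program would arrive through a send/receive with the adjacent rank. It shows only the general technique, not MPI4DL's implementation or API; all function names are hypothetical.

```python
"""Single-process sketch of spatial parallelism for a 3x3 convolution.

The image is split into horizontal bands, one per simulated worker. Each
worker receives one halo row from the band above and one from the band below
(zeros at the image border), convolves locally, and discards the halo rows.
"""


def conv3x3_same(img, kernel):
    """3x3 cross-correlation with zero padding; output has the same shape as img."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for i in range(h):
        for j in range(w):
            acc = 0.0
            for di in (-1, 0, 1):
                for dj in (-1, 0, 1):
                    ii, jj = i + di, j + dj
                    if 0 <= ii < h and 0 <= jj < w:
                        acc += img[ii][jj] * kernel[di + 1][dj + 1]
            out[i][j] = acc
    return out


def split_rows(img, n):
    """Give each of n workers a contiguous band of rows (sizes differ by at most 1)."""
    base, extra = divmod(len(img), n)
    bands, start = [], 0
    for r in range(n):
        stop = start + base + (1 if r < extra else 0)
        bands.append(img[start:stop])
        start = stop
    return bands


def spatial_parallel_conv(img, kernel, n):
    if not 1 <= n <= len(img):
        raise ValueError("need between 1 and len(img) workers")
    bands = split_rows(img, n)
    zero_row = [0.0] * len(img[0])
    outputs = []
    for r, band in enumerate(bands):
        # Halo exchange: last row of the band above, first row of the band below.
        top = bands[r - 1][-1] if r > 0 else zero_row
        bottom = bands[r + 1][0] if r < n - 1 else zero_row
        padded = [top] + band + [bottom]
        # Convolve the padded band locally, then drop the two halo output rows.
        outputs.append(conv3x3_same(padded, kernel)[1:-1])
    # Gathering the bands back in order reproduces the full output.
    return [row for out in outputs for row in out]


if __name__ == "__main__":
    image = [[float(i * 8 + j) for j in range(8)] for i in range(8)]
    edge = [[0.0, -1.0, 0.0], [-1.0, 4.0, -1.0], [0.0, -1.0, 0.0]]
    print(spatial_parallel_conv(image, edge, 3) == conv3x3_same(image, edge))  # prints True
```

Only the rows adjacent to band boundaries are communicated, so each worker holds about 1/N of the image plus two halo rows. Larger kernels need wider halos, and stacked layers need either wider halos or a fresh halo exchange before each layer.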
