Distributed Deep Learning Training Pptx

Slide 14: Distributed Deep Learning (PDF)

This document discusses distributed deep learning on a cluster of GPUs. It begins by comparing CPUs and GPUs, noting that GPUs suit deep learning better because of their higher memory bandwidth and larger number of cores. Although GPUs provide better performance, training models across multiple GPUs is challenging.

Given a distributed dataset (left), describe a data-parallel approach to imputing the missing values (null) of attr1 with its mode and of attr2 with its mean. Describe strategies for improving the performance.
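One possible answer to the imputation exercise can be sketched in plain Python. This is only an illustration under stated assumptions: the partitions are simulated as in-memory lists of dicts, and the helper names `partial_stats`, `merge_stats`, and `impute` are hypothetical, not taken from the slides. Each partition computes a small summary locally (value counts for attr1, sum and count for attr2), the summaries are merged into a global mode and mean, and every partition then fills its own nulls.

```python
from collections import Counter

def partial_stats(partition):
    """Map step: summarise one partition locally, in a single pass."""
    counts = Counter()          # value counts for attr1 (needed for the mode)
    total, n = 0.0, 0           # running sum and count for attr2 (needed for the mean)
    for row in partition:
        if row["attr1"] is not None:
            counts[row["attr1"]] += 1
        if row["attr2"] is not None:
            total += row["attr2"]
            n += 1
    return counts, total, n

def merge_stats(stats):
    """Reduce step: combine the per-partition summaries into global mode and mean."""
    counts = Counter()
    total, n = 0.0, 0
    for c, t, k in stats:
        counts.update(c)
        total += t
        n += k
    # Ties are broken by taking the smallest value, so the result is deterministic.
    mode = max(sorted(counts), key=counts.get) if counts else None
    mean = total / n if n else None
    return mode, mean

def impute(partition, mode, mean):
    """Second map step: fill nulls locally using the broadcast statistics."""
    return [
        {"attr1": mode if r["attr1"] is None else r["attr1"],
         "attr2": mean if r["attr2"] is None else r["attr2"]}
        for r in partition
    ]

# Two simulated partitions of a made-up dataset (None stands for null).
partitions = [
    [{"attr1": "a", "attr2": 1.0}, {"attr1": None, "attr2": 3.0}],
    [{"attr1": "b", "attr2": None}, {"attr1": "a", "attr2": 5.0}],
]
mode, mean = merge_stats([partial_stats(p) for p in partitions])
filled = [impute(p, mode, mean) for p in partitions]
```

In this sketch the performance strategies are that both statistics are gathered in one pass over each partition, only the compact summaries (not the rows) cross the network, and the two resulting scalars are broadcast back so the fill step needs no further communication.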

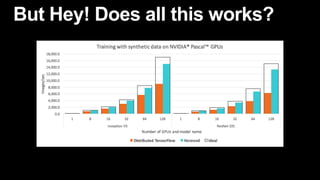

Conclusion: deep learning workloads and network technologies (RDMA) call for rethinking the RPC abstraction for network communication. We designed a “device”-like interface that, together with static analysis and dynamic tracing, enables cross-stack optimizations for deep neural network training and takes full advantage of the underlying RDMA capabilities.

The document discusses distributed deep learning, focusing on the challenges of training deep neural networks due to their computational intensity and the need for massive datasets.

Increasing the scale of deep learning can drastically improve ultimate classification accuracy: increase the number of training examples, and increase the number of model parameters. Background and motivation: the speedup is small when the model doesn't fit in GPU memory.

Today I am presenting our work, THC: Accelerating Distributed Deep Learning Using Tensor Homomorphic Compression. This work was done in collaboration with our colleagues at UCL and VMware by Broadcom.

More efficient for large-scale training on thousands of processes, where point-to-point communication is much cheaper than collective operations such as allreduce or all-gather.

Machine learning (ML): algorithms that allow computers to learn from examples without being explicitly programmed. Deep learning (DL): a subset of ML that uses deep artificial neural networks as models, inspired by the structure and function of the human brain.

Introduction to machine learning (keywords: model, training, inference, stochastic gradient descent, overfitting). How to compute the gradient (keywords: backpropagation, multi-layer perceptrons, activation function). What is machine learning (ML)? The goal of ML is to learn from data.
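To make the gradient descent and allreduce keywords concrete, here is a minimal sketch of synchronous data-parallel training, simulated in a single process. It is an illustration only, not code from any of the decks: the linear model, the toy data, and the names `local_gradient`, `allreduce_mean`, and `train` are assumptions, and `allreduce_mean` is a plain-Python stand-in for a real collective operation. Each worker treats its whole shard as one batch.

```python
def local_gradient(w, b, shard):
    """Gradient of the mean squared error of y ~ w*x + b on one worker's shard."""
    n = len(shard)
    gw = sum(2 * (w * x + b - y) * x for x, y in shard) / n
    gb = sum(2 * (w * x + b - y) for x, y in shard) / n
    return gw, gb

def allreduce_mean(grads):
    """Stand-in for an allreduce: every worker would receive this same average."""
    k = len(grads)
    return tuple(sum(g[i] for g in grads) / k for i in range(len(grads[0])))

def train(shards, lr=0.05, steps=500):
    w, b = 0.0, 0.0                      # identical initial parameters on every worker
    for _ in range(steps):
        grads = [local_gradient(w, b, s) for s in shards]   # computed in parallel in practice
        gw, gb = allreduce_mean(grads)                      # the only communication per step
        w -= lr * gw                                        # every worker applies the same update
        b -= lr * gb
    return w, b

# Toy data drawn from y = 2x + 1, split evenly across two simulated workers.
data = [(float(x), 2.0 * x + 1.0) for x in range(-4, 4)]
shards = [data[0::2], data[1::2]]
w, b = train(shards)
```

Because the shards have equal size, averaging the per-shard gradients gives the same update as a single worker computing the gradient over all the data, which is why the replicas stay in sync. The averaging step is also where communication cost concentrates as the number of workers grows.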
