Diffusion Action Segmentation (DeepAI)

We propose a novel framework for action segmentation based on denoising diffusion models, which shares the spirit of the iterative refinement used by earlier multi-stage methods. In this framework, action predictions are iteratively generated from random noise, with the input video features as conditions. Extensive experiments on three benchmark datasets (GTEA, 50Salads, and Breakfast) show that the proposed method achieves superior or comparable results to state-of-the-art methods, demonstrating the effectiveness of a generative approach for action segmentation.
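To make the idea of generating action predictions from random noise, conditioned on video features, more concrete, here is a minimal sketch of deterministic DDIM-style sampling in NumPy. It is not the authors' implementation: the cosine noise schedule, the step count, and the names `cosine_alpha_bar`, `toy_denoiser`, and `sample_actions` are all assumptions made for illustration, and the toy denoiser simply reads the labels off the features where a trained network would normally sit.

```python
import numpy as np


def cosine_alpha_bar(num_steps):
    """Cumulative signal level alpha_bar[t] for t = 0..num_steps (cosine schedule, assumed)."""
    t = np.linspace(0.0, 1.0, num_steps + 1)
    f = np.cos((t + 0.008) / 1.008 * np.pi / 2) ** 2
    return f / f[0]  # alpha_bar[0] == 1 (clean), alpha_bar[-1] ~ 0 (pure noise)


def toy_denoiser(noisy_actions, features, t):
    """Hypothetical stand-in for a trained network.

    Predicts the clean per-frame class scores in [-1, 1] from the conditioning
    features alone. Here the features are assumed to already be per-class scores.
    """
    shifted = features - features.max(axis=1, keepdims=True)
    probs = np.exp(shifted)
    probs /= probs.sum(axis=1, keepdims=True)
    return 2.0 * probs - 1.0


def sample_actions(features, denoiser, num_classes, num_steps=25, seed=0):
    """Start from Gaussian noise and iteratively refine a (frames x classes) action sequence."""
    rng = np.random.default_rng(seed)
    alpha_bar = cosine_alpha_bar(num_steps)
    num_frames = features.shape[0]
    x = rng.standard_normal((num_frames, num_classes))  # pure noise at step T

    for t in range(num_steps, 0, -1):
        # Predict the clean action sequence, conditioned on the video features.
        x0_hat = np.clip(denoiser(x, features, t), -1.0, 1.0)
        # Noise implied by the current sample and the predicted clean sequence.
        eps_hat = (x - np.sqrt(alpha_bar[t]) * x0_hat) / np.sqrt(1.0 - alpha_bar[t])
        # Move to the previous (less noisy) step; no fresh noise is injected.
        x = np.sqrt(alpha_bar[t - 1]) * x0_hat + np.sqrt(1.0 - alpha_bar[t - 1]) * eps_hat

    return x.argmax(axis=1)  # one action label per frame


# Usage: six frames, three classes, with toy features that favour one class per frame.
features = np.eye(3)[[0, 0, 1, 1, 2, 2]] * 5.0
labels = sample_actions(features, toy_denoiser, num_classes=3)
print(labels)
```

Because the toy denoiser ignores its noisy input, the sampled labels simply follow the features. With a trained denoiser, each step would instead refine the previous estimate, which is the iterative refinement the framework relies on.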

Code for Diffusion Action Segmentation (ICCV 2023) is released under the MIT license.

Understanding long-form videos requires precise temporal action segmentation. While existing studies typically employ multi-stage models that follow an iterative refinement process, we present a novel framework based on the denoising diffusion model that retains this core iterative principle. We recognize that diffusion models, given their iterative refinement properties, are especially suitable for temporal action segmentation; to the best of our knowledge, this work is the first to employ diffusion models for action analysis. The paper addresses temporal action segmentation with a joint approach that combines low-level and high-level video analysis techniques, using diffusion models to improve the quality and accuracy of the segmentation.

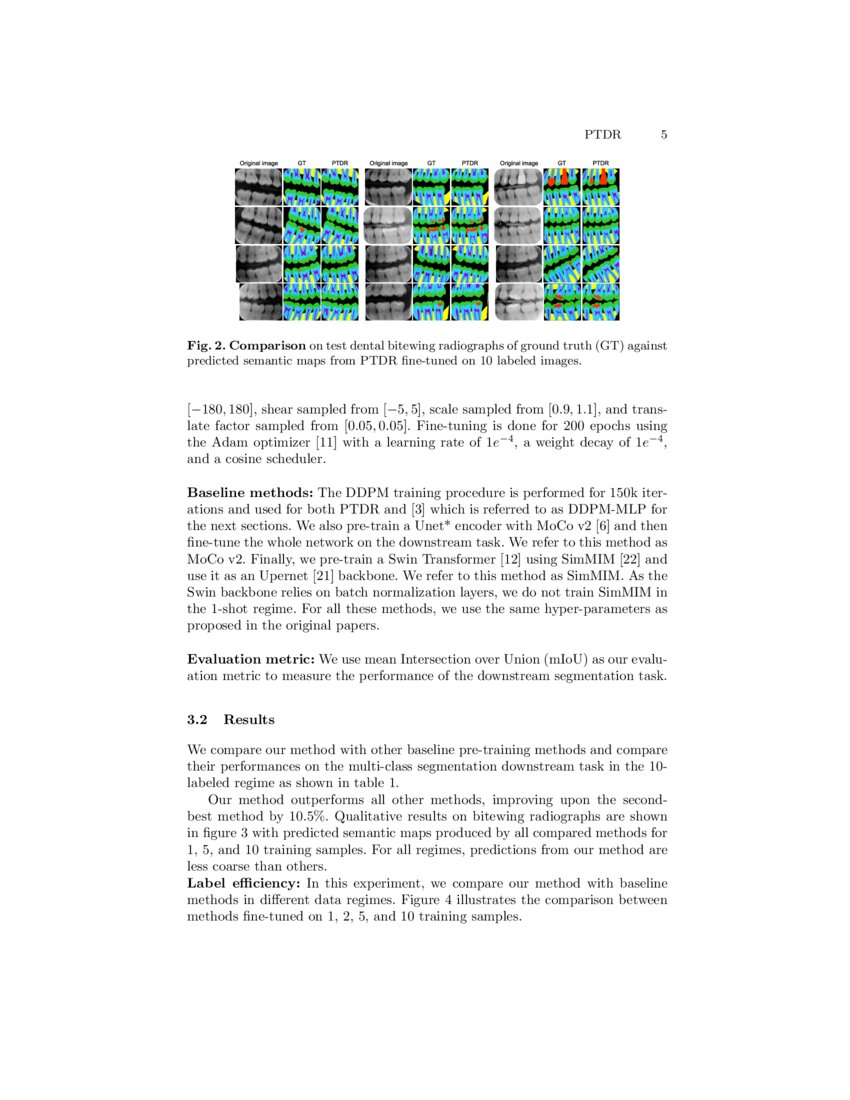
