DGX Cloud Benchmarking | NVIDIA Developer

Discover how NVIDIA DGX Cloud Benchmarking measures performance in real-world environments and identifies optimization opportunities in AI training and inference workloads. The following tables list each benchmark used to evaluate the model's performance, along with its specific configuration. Note: the "Scale (# of GPUs)" column indicates the minimum supported scale and the maximum scale tested for each workload.

Measure and Improve AI Workload Performance with NVIDIA DGX Cloud

NVIDIA Nemotron is a collection of open-source models, datasets, and techniques developed and accelerated on DGX Cloud, giving developers the ability to build, customize, and deploy agentic AI solutions with high performance and scalability. Browse the documentation to install and monitor your environment, manage resources and organizations, run and scale AI workloads, and integrate NVIDIA Run:ai into your workflows using APIs. The DGXC benchmarking repository provides tools and methodologies for evaluating AI model performance on NVIDIA DGX Cloud infrastructure.

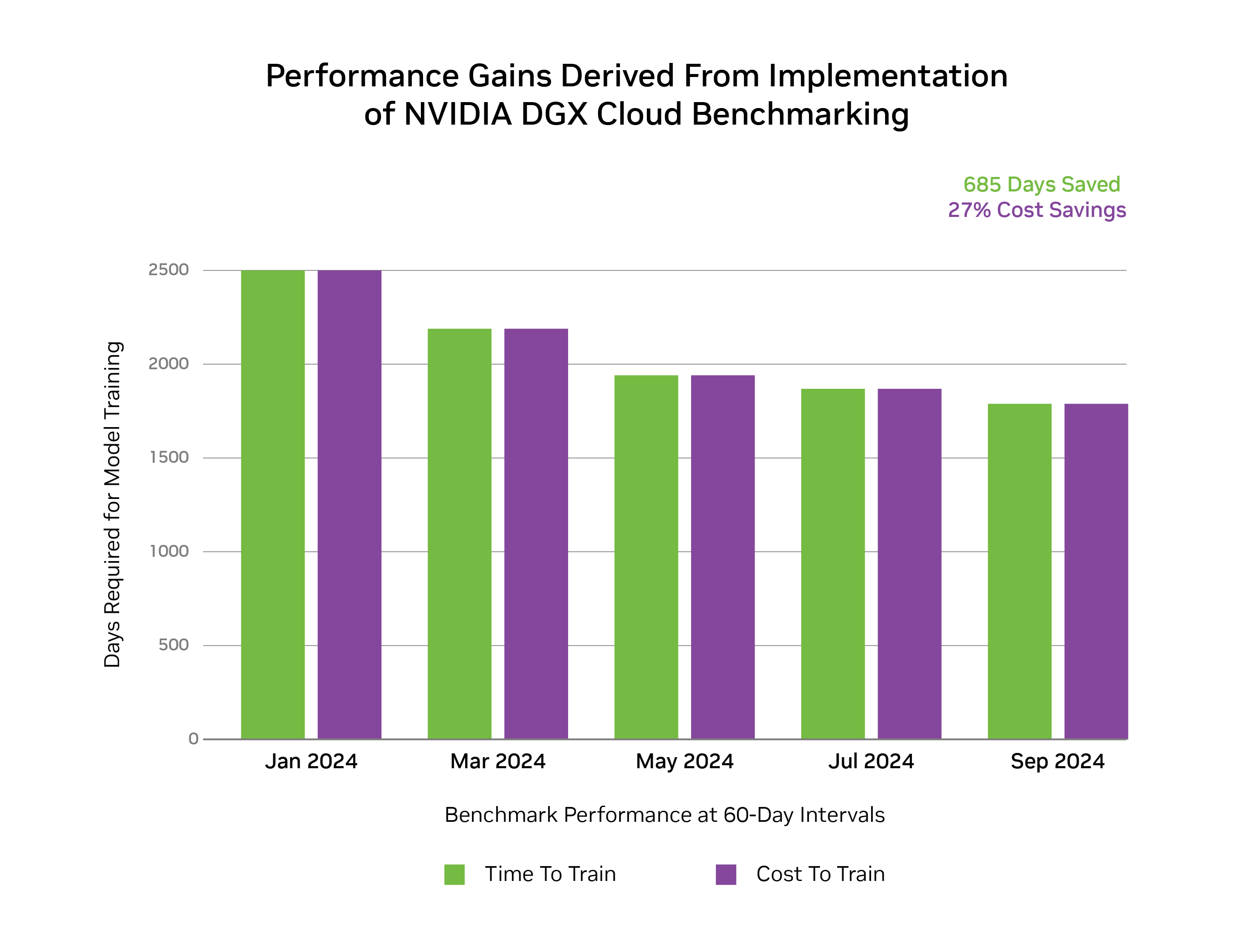

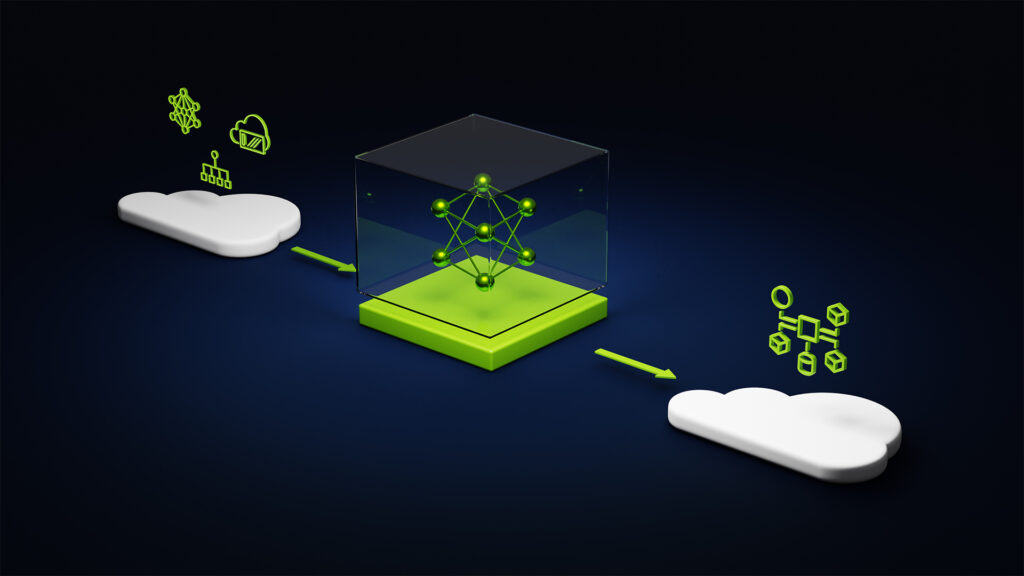

NVIDIA DGX Cloud Benchmarking provides a standardized framework for evaluating the performance of large-scale AI workloads using containerized recipes. Each recipe targets a specific workload and supports flexible configuration across cluster scales and numerical precisions. These "performance recipes" encapsulate specific AI workloads, which enables consistent comparison of performance metrics across cloud platforms and on-premises infrastructure. This document covers the overall framework architecture, workflow, and implementation details. NVIDIA has been developing these performance testing tools, called DGX Cloud Benchmark Recipes, to help organizations evaluate how their hardware and cloud infrastructure perform when running the most advanced AI models available today. DGX Cloud on AWS uses the latest NVIDIA GPU architectures, Blackwell and Hopper, to accelerate training for large language models (LLMs) and generative AI workloads, with the aim of faster model training, reduced time to solution, and higher productivity from day one.
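To make the idea of configuring one workload across cluster scales and numerical precisions concrete, here is a minimal Python sketch of how such a sweep could be enumerated. It is not the DGX Cloud Benchmarking tooling or its API: `RecipeConfig`, `gpu_scales`, `sweep`, the doubling pattern between the minimum and maximum scale, the workload name, and the precision labels `bf16` and `fp8` are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class RecipeConfig:
    """One benchmark run: a workload at a given scale and precision (hypothetical type)."""
    workload: str
    num_gpus: int
    precision: str


def gpu_scales(min_gpus: int, max_gpus: int) -> list[int]:
    """Return min_gpus, 2*min_gpus, 4*min_gpus, ... up to max_gpus.

    Doubling is an assumed sweep pattern, not something the source specifies.
    """
    if min_gpus < 1 or max_gpus < min_gpus:
        raise ValueError("expected 1 <= min_gpus <= max_gpus")
    scales = []
    n = min_gpus
    while n <= max_gpus:
        scales.append(n)
        n *= 2
    return scales


def sweep(workload: str, min_gpus: int, max_gpus: int,
          precisions: list[str]) -> list[RecipeConfig]:
    """Build every (scale, precision) combination for a single workload."""
    return [
        RecipeConfig(workload, n, p)
        for n, p in product(gpu_scales(min_gpus, max_gpus), precisions)
    ]


if __name__ == "__main__":
    # Illustrative values only: workload name, scales, and precisions are made up.
    for cfg in sweep("example-llm-pretrain", 8, 64, ["bf16", "fp8"]):
        print(cfg)
```

Keeping each run as an immutable record means results collected on different platforms can be matched on the same `(workload, num_gpus, precision)` key before metrics are compared.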

