Depth Anything

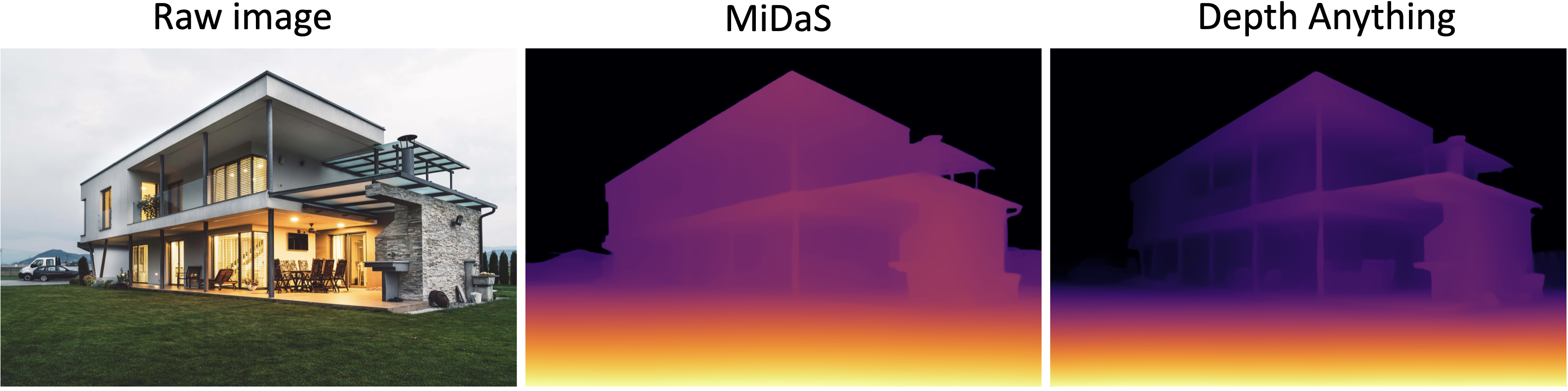

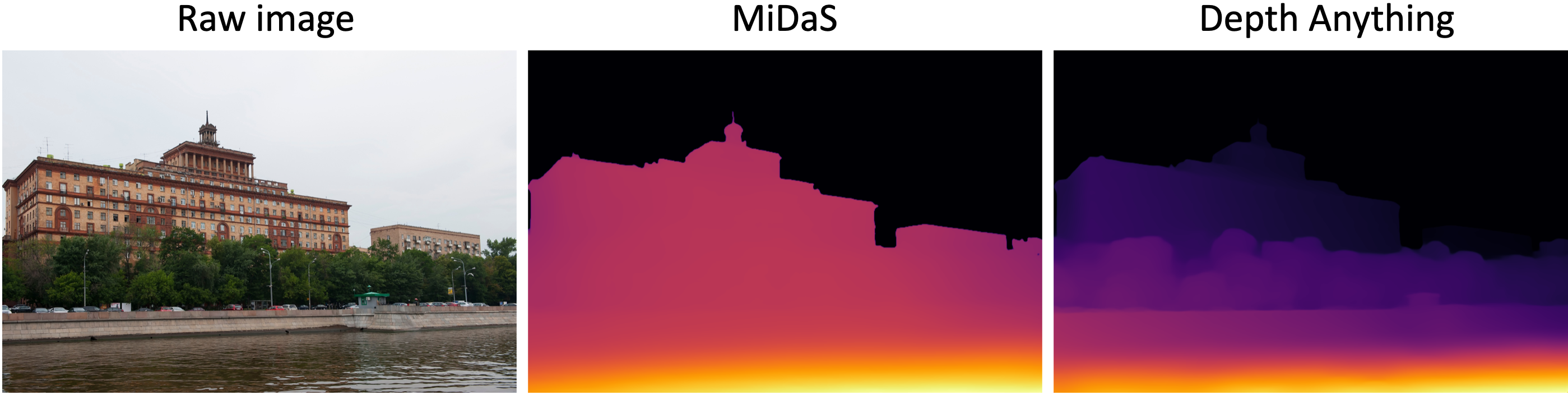

Upload an image and the app creates a detailed depth map that shows how far each part of the scene is from the camera. The result is a visual depth image (and an optional 3D view) that you can download. This work presents Depth Anything, a highly practical solution for robust monocular depth estimation, trained on a combination of 1.5M labeled images and 62M unlabeled images. Try our latest Depth Anything V2 models!

Depth Anything is a CVPR 2024 paper that presents a simple yet powerful foundation model for robust monocular depth estimation. It trains on 1.5M labeled images and 62M unlabeled images, and supports zero-shot and in-domain metric depth estimation as well as depth-conditioned video editing. The paper relies on large-scale unlabeled data: it proposes a data engine, a more challenging optimization target, and an auxiliary supervision signal to improve the model's generalization ability.

We present Depth Anything 3 (DA3), a model that predicts spatially consistent geometry from an arbitrary number of visual inputs, with or without known camera poses.

Upload a photo and the app will estimate how far each part of the scene is, creating a colorful depth map that you can explore with a slider. It also provides downloadable grayscale and 16-bit raw.
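To make the grayscale and 16-bit downloads concrete, here is a minimal sketch of how a floating-point depth map could be rescaled into those two formats. The app's actual export code is not described here, so the function name `depth_to_exports` and the min-max normalization are assumptions made for illustration only.

```python
import numpy as np


def depth_to_exports(depth: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Min-max normalize a depth map into 8-bit and 16-bit images.

    Hypothetical helper: the normalization scheme is an assumption,
    not the app's documented behavior.
    """
    depth = depth.astype(np.float64)
    lo, hi = depth.min(), depth.max()
    span = hi - lo
    if span == 0:
        # A constant depth map carries no contrast; map it to all zeros.
        norm = np.zeros_like(depth)
    else:
        norm = (depth - lo) / span
    gray8 = np.round(norm * 255).astype(np.uint8)      # 256 levels
    raw16 = np.round(norm * 65535).astype(np.uint16)   # 65,536 levels
    return gray8, raw16


# Toy 2x2 depth map standing in for a model prediction.
depth = np.array([[0.5, 1.0], [2.0, 4.5]])
gray8, raw16 = depth_to_exports(depth)
```

The 16-bit version keeps far finer depth steps than the 8-bit grayscale image, which is why a raw export is useful for downstream processing while the grayscale one is mainly for viewing.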

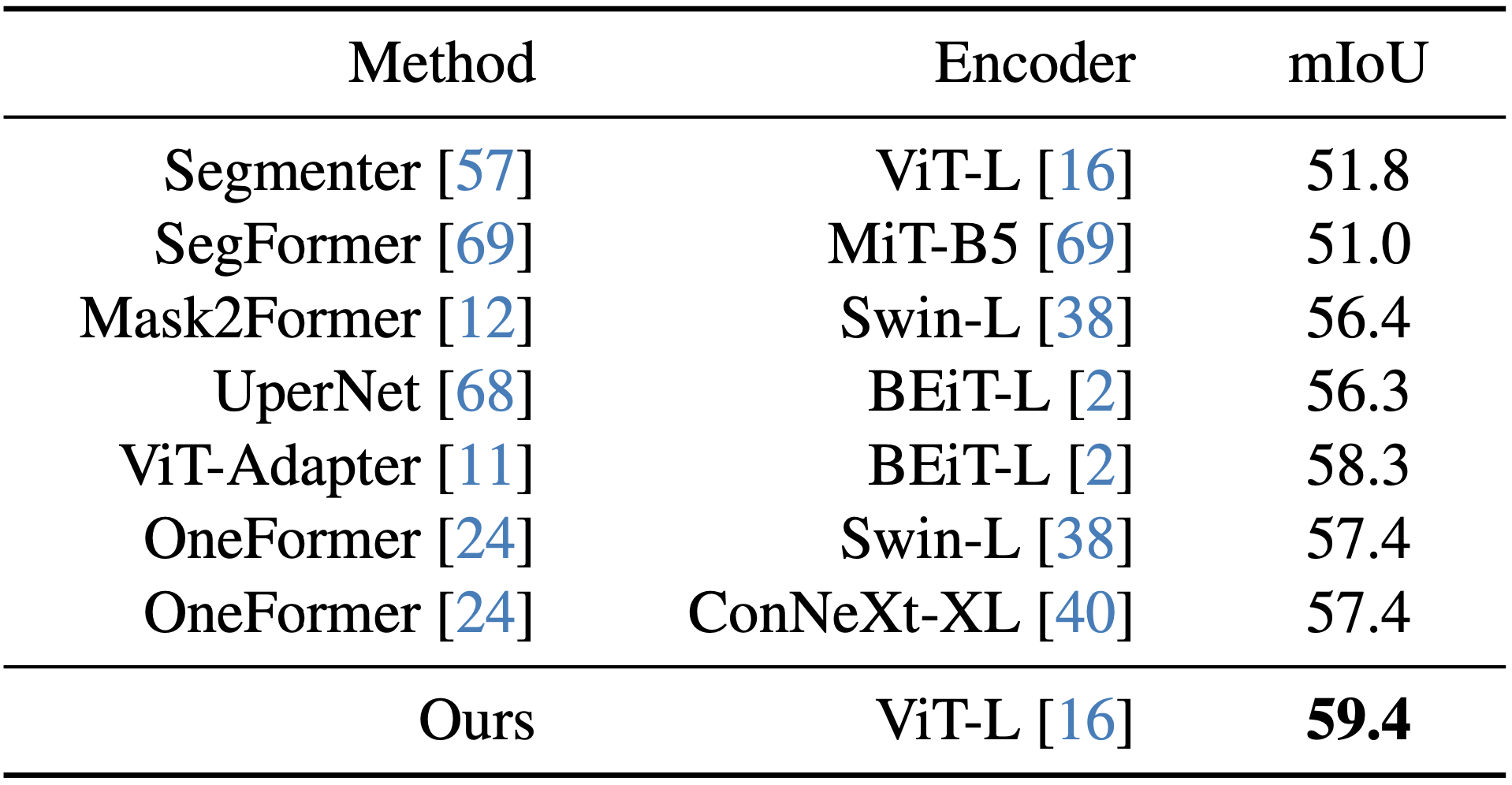

This work presents Depth Anything V2. It significantly outperforms V1 in fine-grained details and robustness. Compared with models built on Stable Diffusion (SD), it offers faster inference, fewer parameters, and higher depth accuracy. Depth Anything V2 is a neural network that estimates depth from images using synthetic and real data; it provides pre-trained models and a benchmark for evaluation.

The original Depth Anything leverages large-scale unlabeled data to build a robust and generalizable monocular depth estimation model. It uses data augmentation, self-training, and auxiliary semantic priors to improve model performance and zero-shot capabilities.
