Depth Anything V2

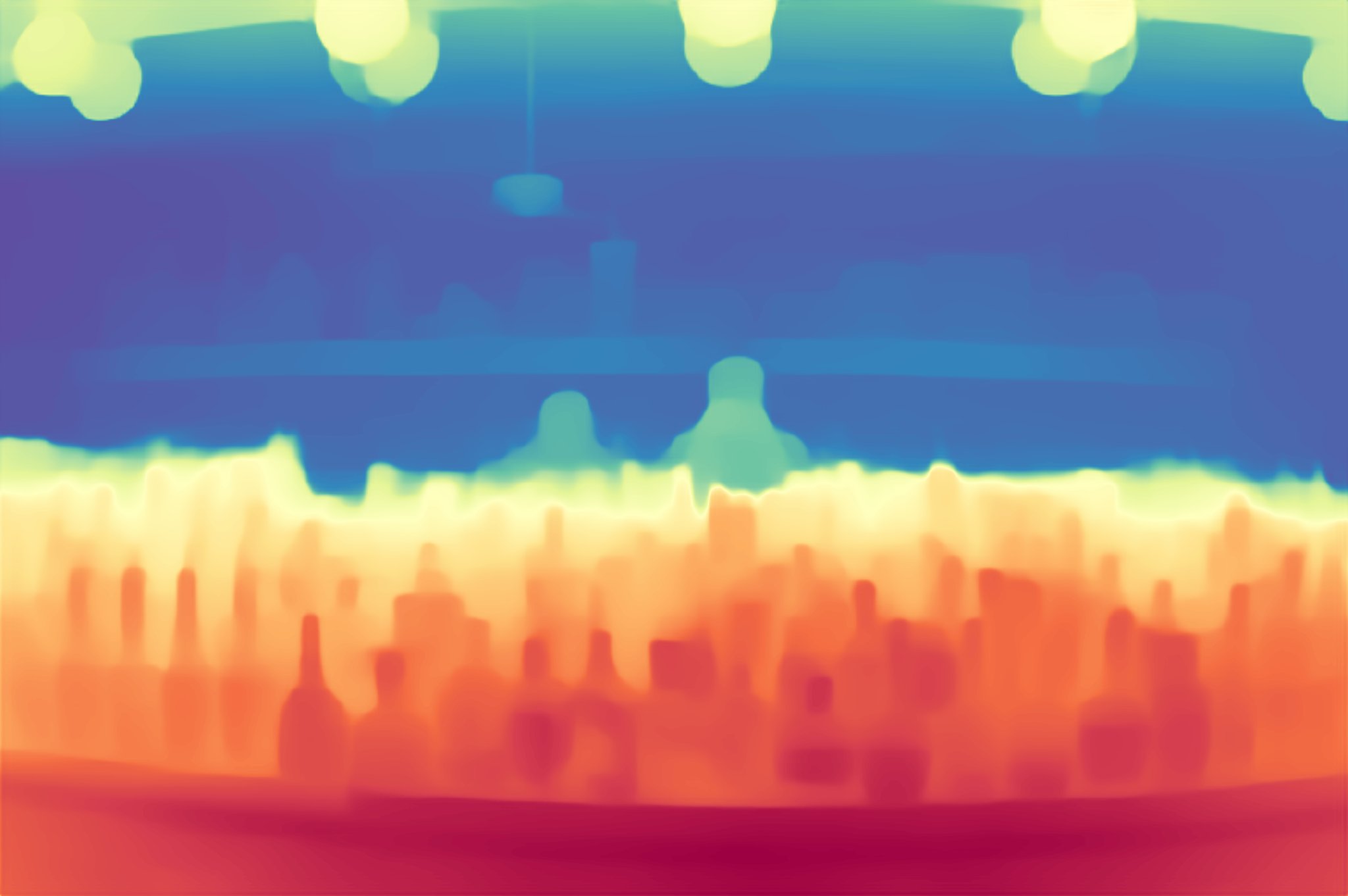

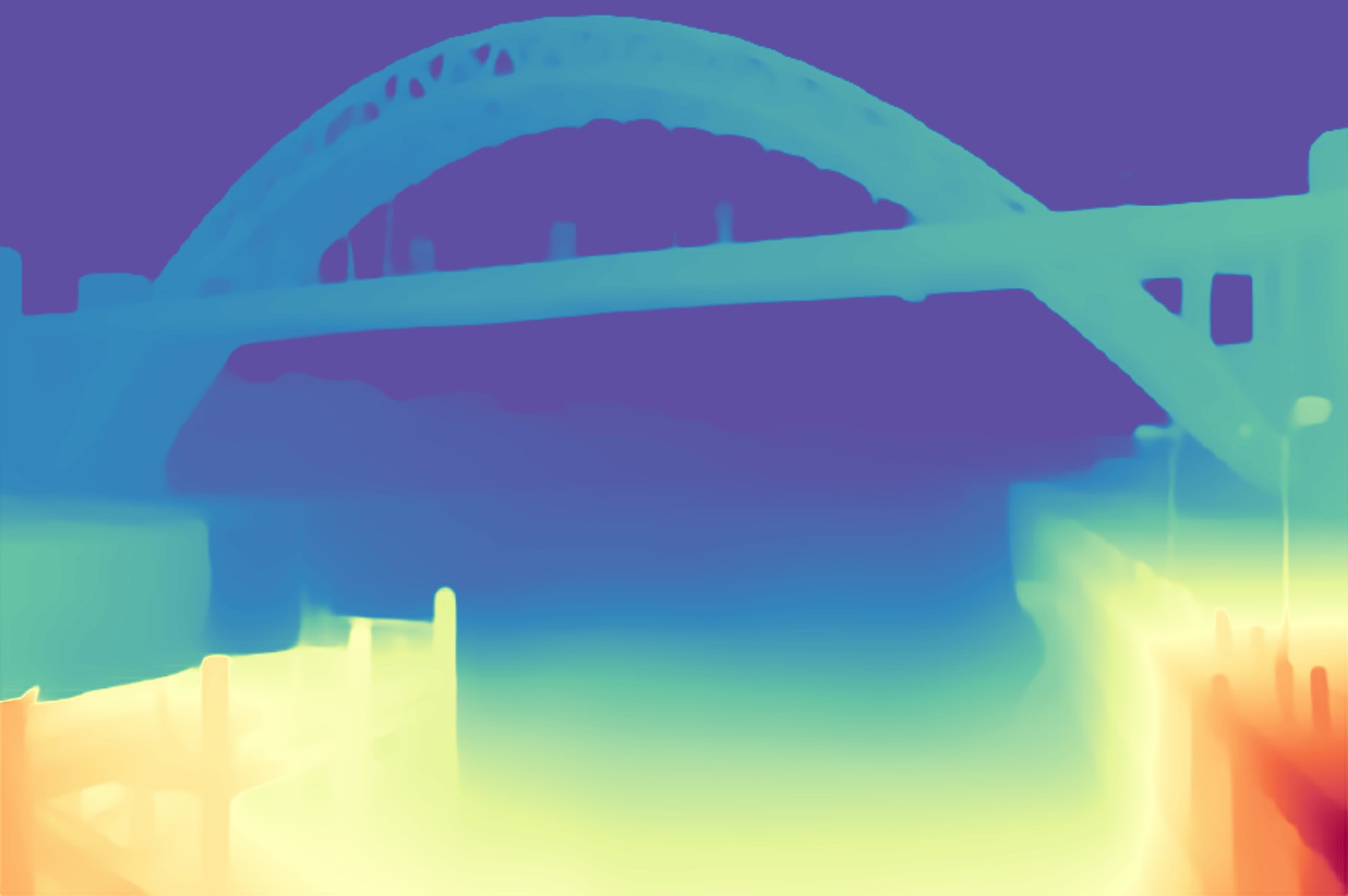

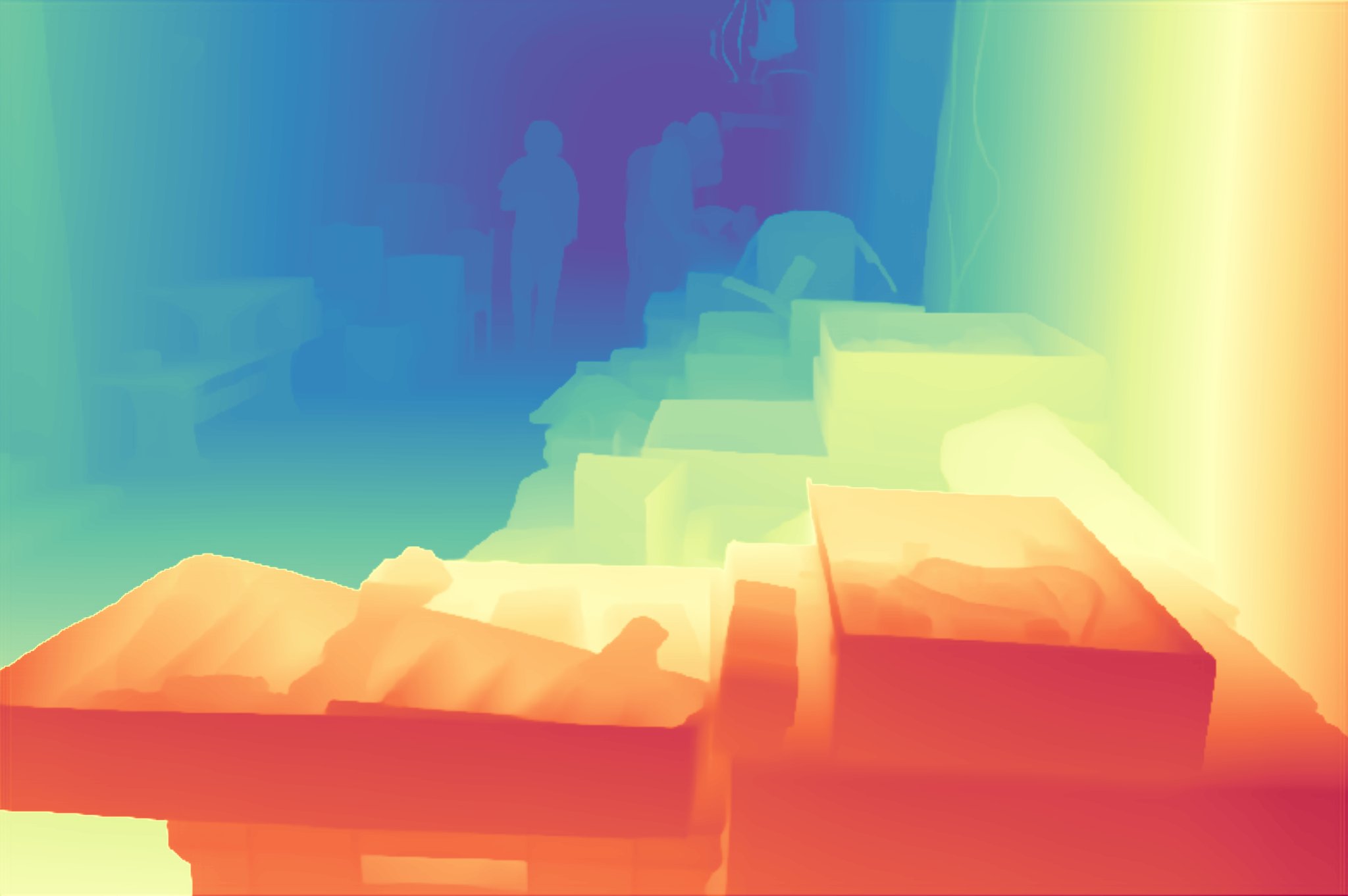

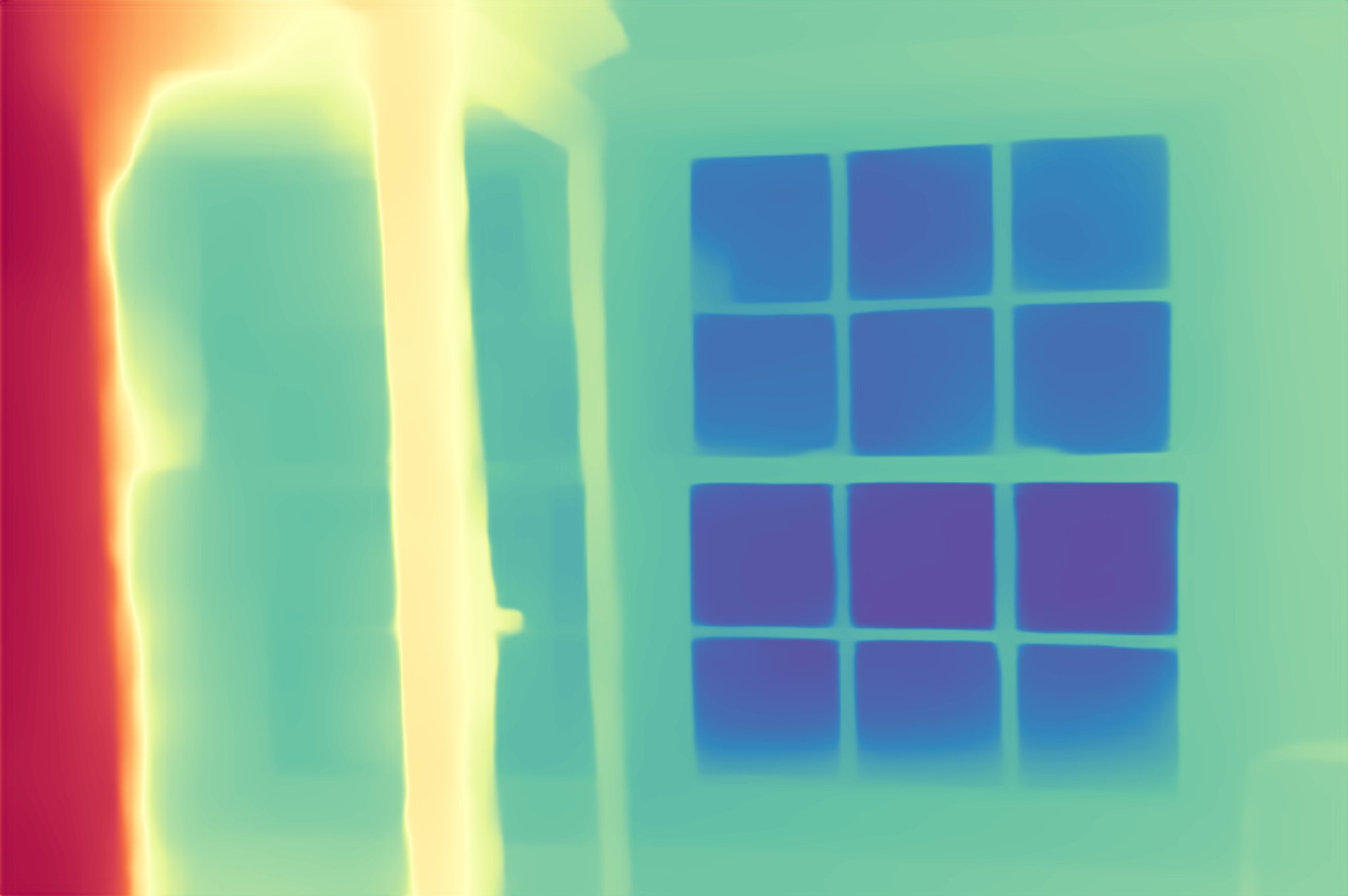

This work presents Depth Anything V2. It significantly outperforms V1 in fine-grained detail and robustness. Compared with Stable Diffusion (SD)-based models, it offers faster inference, fewer parameters, and higher depth accuracy.

The official demo estimates how far each part of the scene is from the camera. Upload a photo and it produces a colorized depth map that you can explore with a slider. It also provides downloadable grayscale and 16-bit raw depth outputs. See the paper, project page, and GitHub repository for more details.
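
The grayscale and 16-bit downloads raise a practical point. A relative depth map is a grid of floating-point values, and it must be rescaled to integers before it can be stored as an image. The sketch below assumes a simple per-image min-max rescale. The helper names (`normalize_depth`, `to_grayscale_8bit`, `to_raw_16bit`) are hypothetical, and the official demo may quantize differently. It uses plain Python lists so it runs without extra dependencies.

```python
from typing import List

DepthMap = List[List[float]]

def normalize_depth(depth: DepthMap, max_value: int) -> List[List[int]]:
    """Min-max rescale a relative depth map to integers in [0, max_value].

    Hypothetical helper: assumes a simple per-image min-max rescale,
    which is not necessarily what the official demo does.
    """
    flat = [v for row in depth for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        # A constant map has no range to stretch; map every pixel to 0.
        return [[0 for _ in row] for row in depth]
    # round() uses round-half-to-even, e.g. 127.5 -> 128.
    return [[round((v - lo) / span * max_value) for v in row] for row in depth]

def to_grayscale_8bit(depth: DepthMap) -> List[List[int]]:
    """Quantize to 256 levels, as for an ordinary grayscale PNG."""
    return normalize_depth(depth, 255)

def to_raw_16bit(depth: DepthMap) -> List[List[int]]:
    """Quantize to 65,536 levels, as for a 16-bit PNG."""
    return normalize_depth(depth, 65535)

depth = [[0.0, 0.5], [1.0, 0.25]]
print(to_grayscale_8bit(depth))  # [[0, 128], [255, 64]]
print(to_raw_16bit(depth))       # [[0, 32768], [65535, 16384]]
```

The 16-bit version keeps 65,536 levels instead of 256. As a result, a raw export preserves much finer depth gradations than the 8-bit grayscale image.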

Depth Anything V2 is a monocular depth estimation model developed by researchers from HKU and TikTok. "Monocular" means it predicts depth from a single image, without needing multiple cameras. The network generates high-quality depth maps and is trained on both synthetic and real data. According to its authors, it outperforms previous models in accuracy, efficiency, and robustness.

Its training recipe combines three ingredients:
- labeled synthetic images;
- a scaled-up teacher model;
- large-scale pseudo-labeled real images, which serve as the bridge for transferring the teacher's knowledge to student models.

The paper describes the model architecture, the training strategy, and a versatile evaluation benchmark. It also reports results for models of different scales.
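
The teacher-student recipe described above can be outlined as three stages. This is a schematic sketch, not the authors' training code. `run_pipeline`, `train_teacher`, and `train_student` are hypothetical callables standing in for real training loops, and images and depth maps can be any Python objects.

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

X = TypeVar("X")  # an input image (any representation)
Y = TypeVar("Y")  # a depth map (any representation)
M = TypeVar("M")  # the trained student model

def run_pipeline(
    train_teacher: Callable[[Sequence[Tuple[X, Y]]], Callable[[X], Y]],
    train_student: Callable[[List[Tuple[X, Y]]], M],
    synthetic_labeled: Sequence[Tuple[X, Y]],
    real_unlabeled: Sequence[X],
) -> M:
    # Stage 1: train a large-capacity teacher on labeled synthetic images.
    teacher = train_teacher(synthetic_labeled)
    # Stage 2: the teacher annotates unlabeled real images with pseudo depth labels.
    pseudo_labeled = [(image, teacher(image)) for image in real_unlabeled]
    # Stage 3: a (typically smaller) student learns from the pseudo-labeled
    # real images, which act as the bridge from teacher to student.
    return train_student(pseudo_labeled)
```

Passing the stages in as plain functions makes the data flow explicit. In this sketch the student only ever sees what the teacher predicted on real images. For example, `run_pipeline(lambda data: (lambda x: x * 2), dict, [(1, 2)], [3, 4])` returns `{3: 6, 4: 8}`.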

Architecturally, Depth Anything V2 pairs a Vision Transformer (ViT) encoder with a DPT decoder to predict relative and metric depth from single RGB images. It is trained with a synthetic-to-real teacher-student pipeline. That pipeline uses a scale- and shift-invariant loss and a gradient-matching loss to keep predictions accurate and robust across diverse domains. The model offers …

Depth Anything V2 was introduced in the paper of the same name by Lihe Yang et al. It keeps the architecture of the original Depth Anything release. However, it relies on synthetic data and a larger-capacity teacher model to produce much finer and more robust depth predictions. As the paper's abstract puts it: "Without pursuing fancy techniques, we aim to reveal crucial findings to pave the way towards building a powerful monocular depth estimation model."
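
The scale- and shift-invariant and gradient-matching losses mentioned above can be written down concretely. The sketch below assumes a MiDaS-style formulation; the paper's exact normalization, masking, and multi-scale weighting may differ. It works as follows:
- Each map is shifted by its median and scaled by its mean absolute deviation.
- The scale- and shift-invariant (SSI) loss is the mean absolute difference of the normalized maps.
- The gradient-matching loss penalizes horizontal and vertical differences of their residual, at a single scale only.

All function names are hypothetical.

```python
from statistics import median
from typing import List

Grid = List[List[float]]

def _normalize(values: List[float]) -> List[float]:
    """Shift by the median and scale by the mean absolute deviation."""
    t = median(values)
    s = sum(abs(v - t) for v in values) / len(values)
    if s == 0:
        return [0.0] * len(values)
    return [(v - t) / s for v in values]

def _flatten(grid: Grid) -> List[float]:
    return [v for row in grid for v in row]

def ssi_loss(pred: Grid, target: Grid) -> float:
    """Scale- and shift-invariant loss: mean |normalized pred - normalized target|."""
    p, g = _normalize(_flatten(pred)), _normalize(_flatten(target))
    return sum(abs(a - b) for a, b in zip(p, g)) / len(p)

def gradient_matching_loss(pred: Grid, target: Grid) -> float:
    """Single-scale gradient matching on the residual of the normalized maps."""
    h, w = len(pred), len(pred[0])
    p, g = _normalize(_flatten(pred)), _normalize(_flatten(target))
    r = [[p[i * w + j] - g[i * w + j] for j in range(w)] for i in range(h)]
    total = 0.0
    for i in range(h):
        for j in range(w):
            if j + 1 < w:  # horizontal gradient of the residual
                total += abs(r[i][j + 1] - r[i][j])
            if i + 1 < h:  # vertical gradient of the residual
                total += abs(r[i + 1][j] - r[i][j])
    return total / (h * w)
```

Because both maps are normalized first, any prediction that is a positive affine transform of the ground truth (for example, `2 * target + 3`) scores zero on both losses. This is what lets a relative-depth model learn from data whose absolute scale and offset are unknown. With `target = [[1, 2], [3, 4]]` and the inverted prediction `[[4, 3], [2, 1]]`, the SSI loss is 2.0 and the gradient-matching loss is 3.0.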
