Delman Data Lab: Import CSV Data, Load Data, Ingestion From CSV

In Delman Data Lab, click the Add Data Source button in the top left corner. Scroll down on the New Connection (External) tab, select Upload File, and click the Continue button (Delman.io, cloud.delman.io).

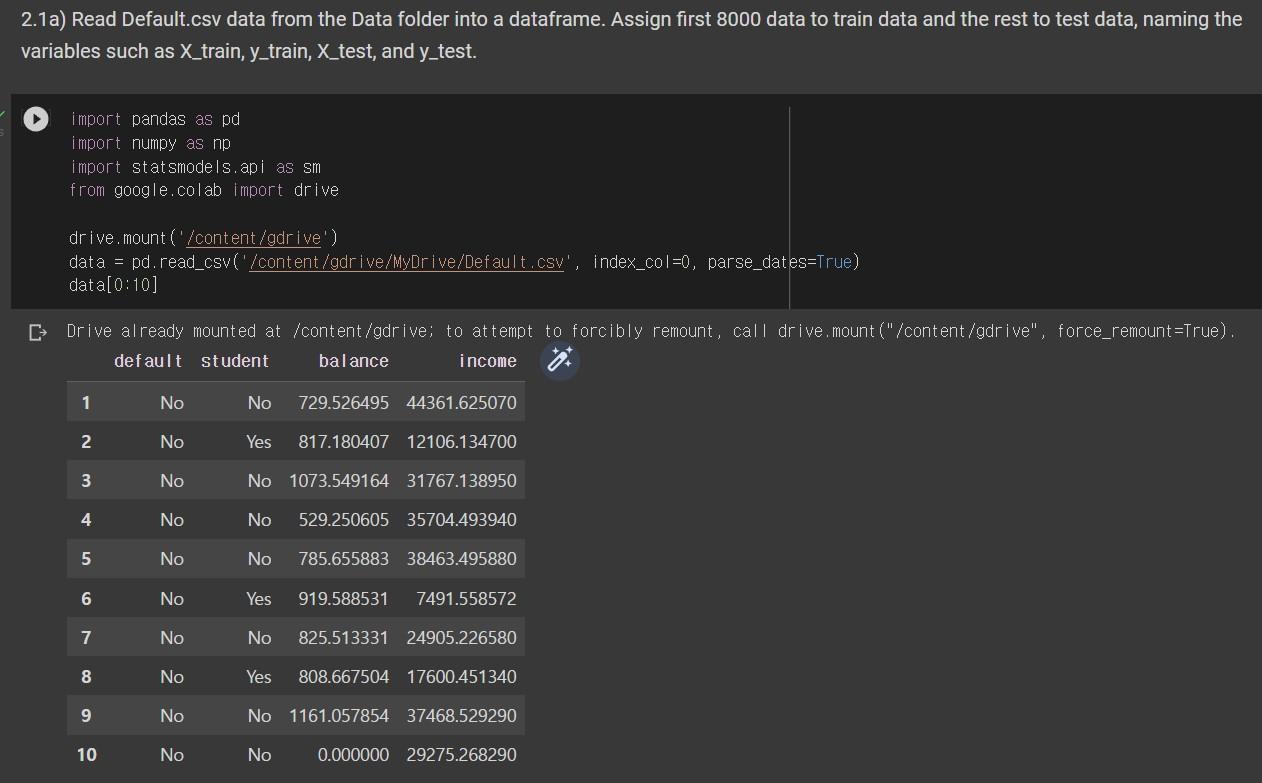

Pipeline is where you prepare the data you need by transforming data from the data sources or from a file. Click the data source node you imported earlier, then click the Import Data button at the top right. You only need to create a connection for online data sources; you do not need one to load data from an offline file (CSV, Excel, or JSON upload).

Our standard practice is to have producers write their data to an S3 bucket and to configure an AWS Glue crawler to produce a schema that we use to ingest that data (the lack of published schemas is a chronic issue at my org, and yes, it's annoying).

Projects often face hurdles due to diverse data formats and sources. This article focuses on effective data ingestion techniques, primarily using CSV files.
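When a CSV arrives without a published schema, a first step is often to infer column types from the header and a sample of rows. The following is a minimal sketch of that idea using only Python's standard library. It is not how an AWS Glue crawler works internally, and the function names (`infer_type`, `infer_schema`), the sample data, and the three type labels are assumptions made for illustration.

```python
import csv
import io


def infer_type(values):
    """Return the narrowest type label that fits every non-empty value."""
    non_empty = [v for v in values if v != ""]
    if not non_empty:
        return "string"  # nothing to go on, fall back to the widest type
    for name, cast in (("int", int), ("float", float)):
        try:
            for v in non_empty:
                cast(v)
            return name
        except ValueError:
            continue
    return "string"


def infer_schema(csv_text, sample_rows=100):
    """Infer {column: type} from the header and the first sample_rows rows."""
    reader = csv.DictReader(io.StringIO(csv_text))
    columns = {name: [] for name in reader.fieldnames}
    for i, row in enumerate(reader):
        if i >= sample_rows:
            break
        for name in columns:
            # Short rows yield None for missing fields; treat them as empty.
            columns[name].append(row[name] or "")
    return {name: infer_type(vals) for name, vals in columns.items()}


# Hypothetical sample file content, including one missing price.
SAMPLE = "id,price,city\n1,9.5,Jakarta\n2,12,Bandung\n3,,Medan\n"
schema = infer_schema(SAMPLE)
print(schema)
```

Sampling only the first rows keeps the check cheap, but a value later in the file can still contradict the inferred type, so a real ingestion job would validate every row against the schema at load time.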

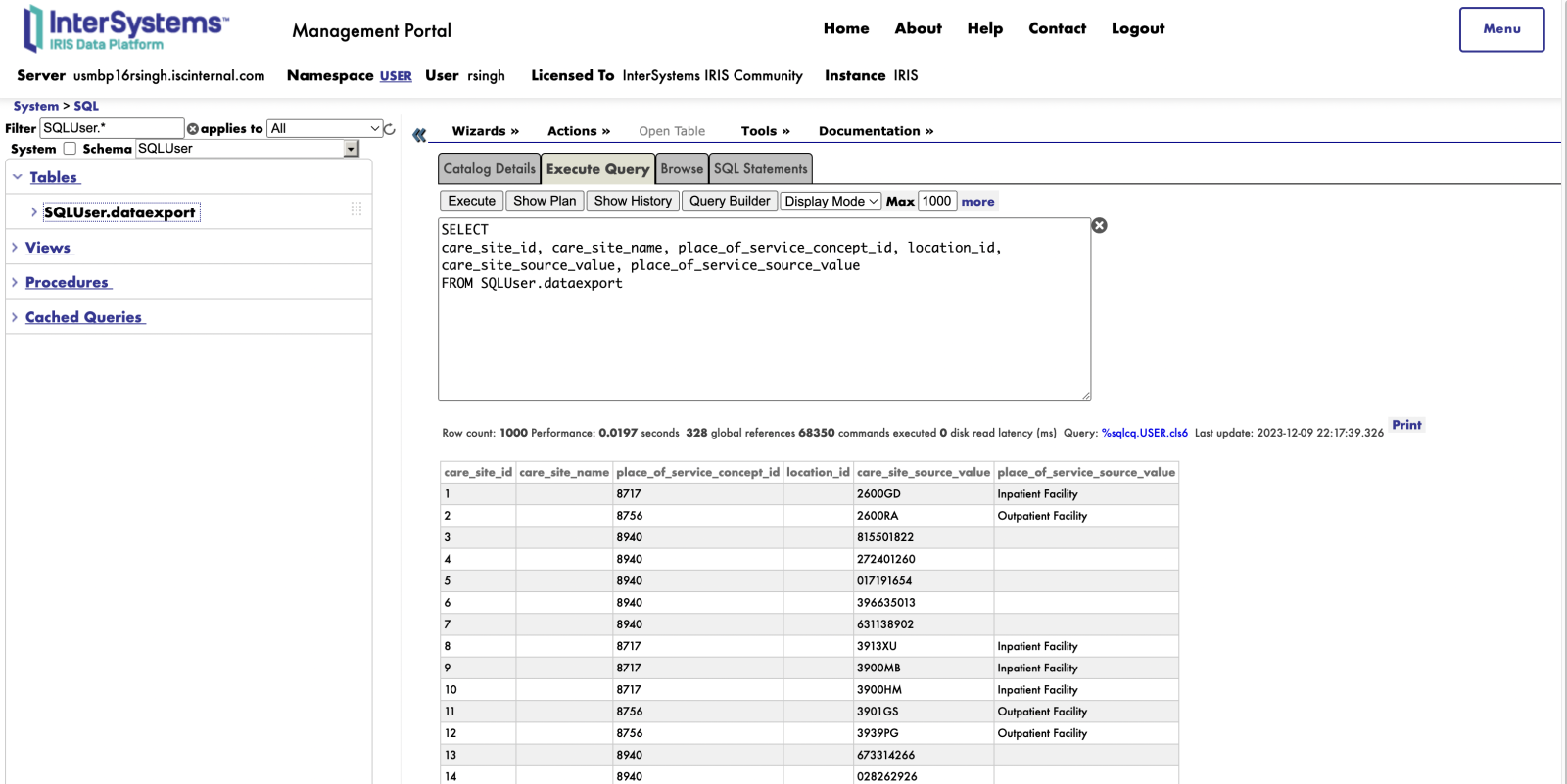

These are all perfect candidates to generate CSV data for loading into a database, data warehouse, or data lake for further analysis. One of the most convenient options for loading CSV data into a destination data lake or data warehouse is an automated ETL solution.

Using our DuckDB migration module, you can load data from any DuckDB-supported source, run analytical queries directly on it, and import the results into Memgraph.

By the end of this lab, you will be able to: create a Unity Catalog hierarchy (catalog, schema, volume) to house ingested data; load CSV data from a managed volume into a Delta table using PySpark DataFrames; use COPY INTO to incrementally load files with built-in deduplication; and create summary tables using CREATE TABLE AS SELECT.

In this blog post, we’ll explore a robust architecture designed to handle the ingestion of flat files (CSV, JSON, XML) while ensuring data quality and reliability.
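The incremental-load and summary-table ideas mentioned above can be illustrated without a Databricks workspace. The sketch below uses Python's built-in `sqlite3` and `csv` modules as a stand-in: it records each loaded file name so that re-running the load skips files already ingested, then builds a summary table with `CREATE TABLE ... AS SELECT`. This is not the `COPY INTO` command itself, and the table names (`orders`, `loaded_files`, `region_totals`), the file name, and the sample rows are all hypothetical.

```python
import csv
import io
import sqlite3


def load_csv_once(conn, file_name, csv_text):
    """Load a CSV into `orders` unless this file name was already loaded."""
    seen = conn.execute(
        "SELECT 1 FROM loaded_files WHERE file_name = ?", (file_name,)
    ).fetchone()
    if seen:
        return 0  # skip: this file was ingested by an earlier run
    rows = [
        (r["order_id"], r["region"], float(r["amount"]))
        for r in csv.DictReader(io.StringIO(csv_text))
    ]
    with conn:  # one transaction: data rows and the bookkeeping row together
        conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
        conn.execute("INSERT INTO loaded_files VALUES (?)", (file_name,))
    return len(rows)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id TEXT, region TEXT, amount REAL)")
conn.execute("CREATE TABLE loaded_files (file_name TEXT PRIMARY KEY)")

# Hypothetical file content; in practice this would be read from disk.
batch = "order_id,region,amount\nA1,west,10.0\nA2,east,5.5\nA3,west,4.5\n"
first = load_csv_once(conn, "orders_day1.csv", batch)
second = load_csv_once(conn, "orders_day1.csv", batch)  # re-run is a no-op

# Summary table built from the loaded rows.
conn.execute(
    "CREATE TABLE region_totals AS "
    "SELECT region, SUM(amount) AS total FROM orders GROUP BY region"
)
totals = dict(conn.execute("SELECT region, total FROM region_totals"))
print(first, second, totals)
```

Writing the data rows and the bookkeeping row in the same transaction means a failed load leaves neither behind, so the file can be retried safely.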
