DeepSeek-V3 Redefines LLM Performance and Cost Efficiency

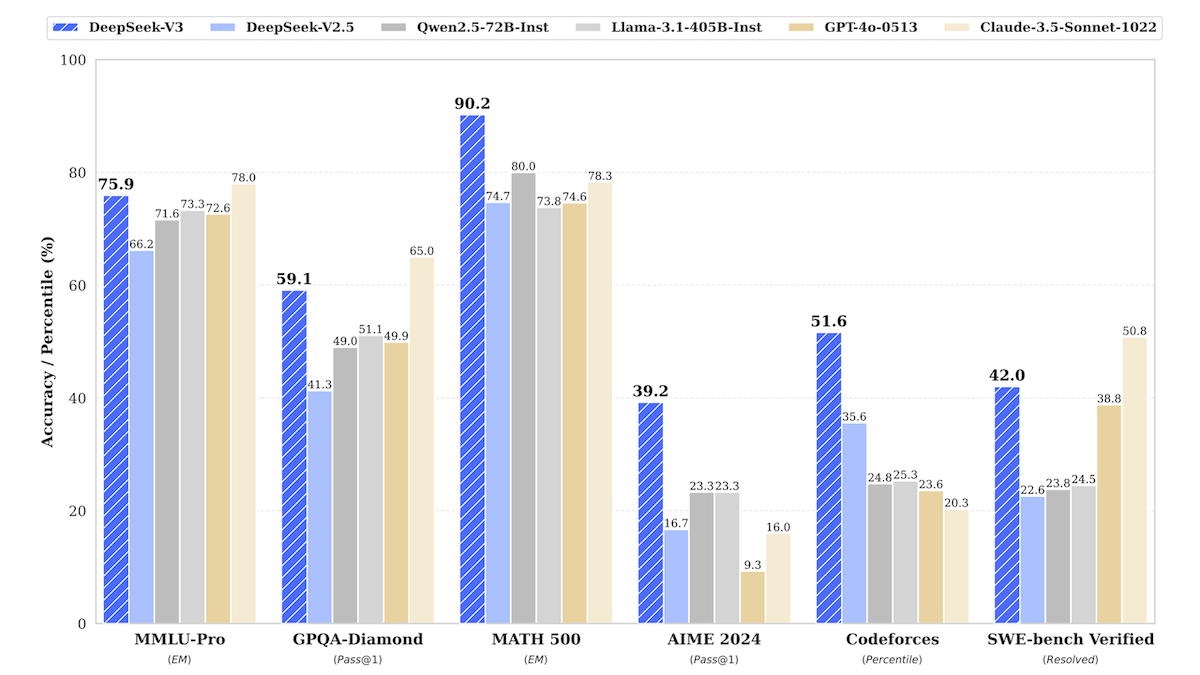

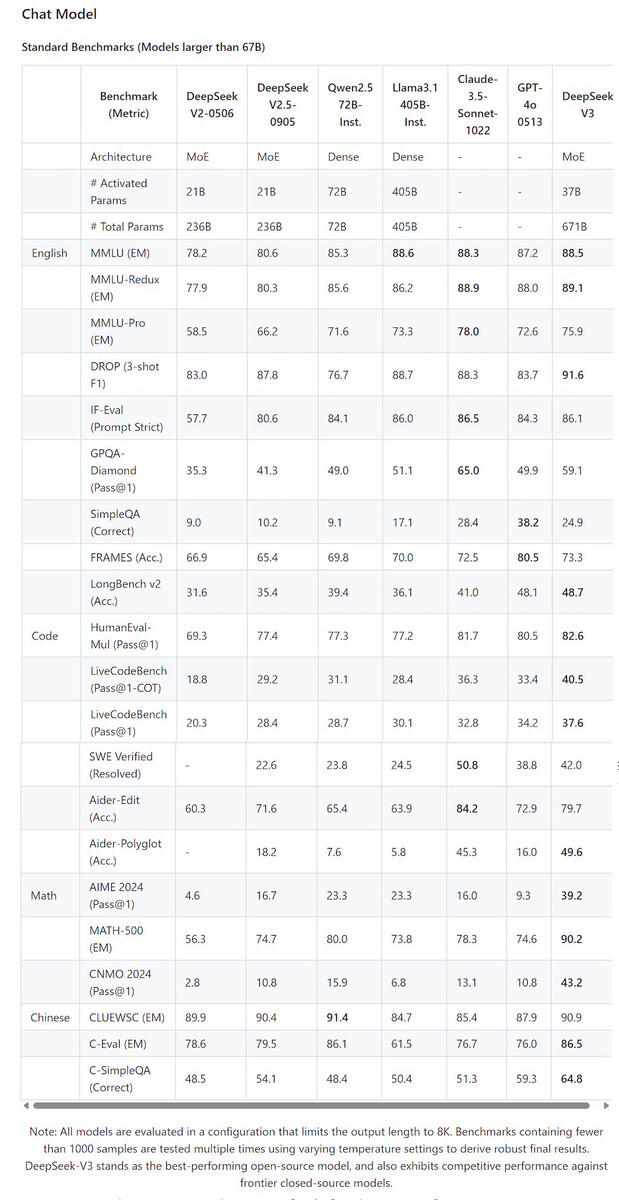

A new model from Hangzhou upstart DeepSeek delivers outstanding performance and may change the equation for training costs. What's new: DeepSeek-V3 is an open large language model that outperforms Llama 3.1 405B and GPT-4o on key benchmarks and achieves exceptional scores in coding and math. Despite its strong performance and cost-effectiveness, its developers acknowledge that DeepSeek-V3 has limitations, especially in deployment. Most notably, to ensure efficient inference, the recommended deployment unit is relatively large, which may be a burden for small teams.
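Why is the deployment unit large? In a mixture-of-experts model only a fraction of the parameters is active per token, but in a standard setup without offloading every expert's weights must still sit in GPU memory. The back-of-envelope sketch below computes a weights-only lower bound. It assumes the 671B total parameters reported for DeepSeek-V3 (37B activated per token), 1 byte per parameter for FP8 weights (2 for BF16), and 80 GB per H800; the function name is hypothetical.

```python
import math

TOTAL_PARAMS = 671e9      # total parameters reported for DeepSeek-V3 (37B activated per token)
GPU_MEMORY_BYTES = 80e9   # assumption: one 80 GB H800


def min_gpus_for_weights(params: float, bytes_per_param: float,
                         gpu_bytes: float = GPU_MEMORY_BYTES) -> int:
    """Hypothetical helper: lower bound on GPUs needed just to hold the weights.

    Ignores KV cache, activations, runtime overhead, and parallelism layout,
    so a real deployment unit is larger than this number.
    """
    return math.ceil(params * bytes_per_param / gpu_bytes)


for fmt, nbytes in [("FP8", 1), ("BF16", 2)]:
    print(f"{fmt}: at least {min_gpus_for_weights(TOTAL_PARAMS, nbytes)} GPUs for weights alone")
```

Even before serving a single request, the FP8 weights alone fill more than eight 80 GB GPUs, which is why a small team cannot run the model on one machine with a few cards.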

DeepSeek-V3 is an open-source large language model that uses a mixture-of-experts (MoE) architecture to achieve state-of-the-art computational efficiency and accuracy. It combines MoE efficiency, FP8 mixed-precision training, auxiliary-loss-free routing, and multi-token prediction to deliver closed-source-tier performance at open-source cost, redefining what is possible for open-source LLMs. It also shows that well-thought-out design, architectural, and training choices can significantly reduce training time and cost, making powerful LLMs more accessible to smaller development teams. According to DeepSeek, co-designing algorithms, frameworks, and hardware to nearly fully overlap computation and communication in cross-node MoE training significantly improves training efficiency and lowers cost, which let the team scale up the model without additional overhead. Pre-training DeepSeek-V3 on 14.8T tokens took only 2.664M H800 GPU hours and produced what the team describes as the strongest open-source base model available at the time.
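The auxiliary-loss-free routing mentioned above can be sketched in a few lines. As described in the DeepSeek-V3 technical report, each expert carries a bias term that is added to its sigmoid affinity score only when choosing the top-k experts; the gate weights themselves come from the unbiased scores. After each training step, the bias of an overloaded expert is lowered by a fixed "bias update speed" γ and that of an underloaded expert is raised by γ, so load is steered toward balance without adding an auxiliary loss to the gradient. This is a minimal pure-Python sketch, not DeepSeek's implementation: it assumes "overloaded" means above the mean load, omits shared experts and node-limited routing, and the function names, the skewed toy batch, and the values of γ, k, and the expert count are all hypothetical.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def route_token(logits: list[float], bias: list[float], k: int) -> dict[int, float]:
    """Select top-k experts for one token; return {expert: gate weight}."""
    scores = [sigmoid(x) for x in logits]  # token-to-expert affinities
    # The bias only shifts which experts are *selected*...
    ranked = sorted(range(len(scores)), key=lambda i: scores[i] + bias[i], reverse=True)
    chosen = ranked[:k]
    # ...while gate weights use the unbiased scores, normalized over the chosen experts.
    total = sum(scores[i] for i in chosen)
    return {i: scores[i] / total for i in chosen}


def update_bias(bias: list[float], loads: list[int], gamma: float) -> list[float]:
    """Lower the bias of overloaded experts and raise it for underloaded ones.

    Assumption: an expert counts as overloaded when its load exceeds the mean load.
    """
    mean_load = sum(loads) / len(loads)
    return [
        b - gamma if load > mean_load else b + gamma if load < mean_load else b
        for b, load in zip(bias, loads)
    ]


def simulate(num_experts=8, k=2, tokens=256, steps=50, gamma=0.01, seed=0):
    """Route a fixed, deliberately skewed toy batch for several steps.

    Returns (max expert load at each step, final biases).
    """
    rng = random.Random(seed)
    # Hypothetical skew: expert 0 gets +2.0 on every logit, so it starts out overloaded.
    batch = [
        [rng.gauss(0.0, 1.0) + (2.0 if e == 0 else 0.0) for e in range(num_experts)]
        for _ in range(tokens)
    ]
    bias = [0.0] * num_experts
    max_loads = []
    for _ in range(steps):
        loads = [0] * num_experts
        for logits in batch:
            for expert in route_token(logits, bias, k):
                loads[expert] += 1
        max_loads.append(max(loads))
        bias = update_bias(bias, loads, gamma)
    return max_loads, bias


if __name__ == "__main__":
    max_loads, bias = simulate()
    print("max expert load, first step:", max_loads[0])
    print("max expert load, last step:", max_loads[-1])
    print("final bias of expert 0:", round(bias[0], 3))
```

In the toy run, the favored expert's bias drifts negative until its load falls toward the mean. Because the bias never enters the gate weights or the loss, balancing does not pull the model's outputs away from what the affinities prefer, which is the degradation an auxiliary balancing loss can cause.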

Comprehensive evaluations show that DeepSeek-V3 outperforms other open-source models and performs comparably to leading closed-source models. Its training efficiency is perhaps its most significant achievement: the full training run (pre-training, context extension, and post-training) took 2.788 million H800 GPU hours, or $5.576 million at an assumed rental price of $2 per GPU hour, yet the model performs comparably to systems reported to require ten to twenty times more computational investment. DeepSeek's latest models, V3 and R1, use architectural innovations such as multi-head latent attention (MLA) to rival GPT-4o and Claude 3.5 at a fraction of the cost. Building on the efficient architecture of DeepSeek-V2, DeepSeek-V3 pioneers an auxiliary-loss-free strategy for load balancing, which minimizes the performance degradation that arises from encouraging load balance.
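The cost figures above reduce to simple arithmetic. The sketch below reconciles the 2.664M pre-training hours with the 2.788M total and the $5.576M price tag. The per-phase breakdown (119K hours for context extension, 5K for post-training) and the $2 per GPU hour rental price are taken from the DeepSeek-V3 technical report; the variable names are illustrative.

```python
# H800 GPU hours reported in the DeepSeek-V3 technical report, in thousands.
GPU_HOURS_K = {
    "pre-training": 2664,
    "context extension": 119,
    "post-training": 5,
}
PRICE_USD_PER_GPU_HOUR = 2  # rental price assumed by the report, not an actual invoice
PRETRAIN_TOKENS_T = 14.8    # pre-training corpus size, trillions of tokens

total_k_hours = sum(GPU_HOURS_K.values())  # 2788K GPU hours
cost_by_phase_musd = {
    phase: hours * PRICE_USD_PER_GPU_HOUR / 1000  # millions of USD
    for phase, hours in GPU_HOURS_K.items()
}
total_cost_musd = total_k_hours * PRICE_USD_PER_GPU_HOUR / 1000
k_hours_per_t_tokens = GPU_HOURS_K["pre-training"] / PRETRAIN_TOKENS_T

print(f"total: {total_k_hours}K GPU hours, ${total_cost_musd:.3f}M")
for phase, cost in cost_by_phase_musd.items():
    print(f"  {phase}: ${cost:.3f}M")
print(f"pre-training: ~{k_hours_per_t_tokens:.0f}K GPU hours per trillion tokens")
```

So the 2.664M figure covers pre-training alone (about 180K GPU hours per trillion tokens), and the 2.788M total adds the two later phases. The report also notes that these figures cover only the official training run and exclude prior research and ablation experiments, so they are not a full development cost.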
