DeepSeek-R1 vs. DeepSeek-R1-Zero: DeepSeek's New Reasoning Models By

DeepSeek AI Releases DeepSeek-R1-Zero and DeepSeek-R1: First Generation

In this blog, we'll explore the key differences between the two models and why both are significant. 1. Training methods. DeepSeek-R1-Zero: this model is trained exclusively using … Compare DeepSeek-R1 and DeepSeek-R1-Zero side by side: a detailed analysis of benchmark scores, API pricing, context windows, latency, and capabilities to help you choose the right AI model.

Comparison: DeepSeek-R1 vs. DeepSeek-R1-Zero. R1-Zero is best suited for research scenarios that explore the potential of pure reinforcement learning training, but it has limited practical applications. Understand the differences between DeepSeek V3, R1, V3.1, V3.2, and the distilled models, and learn how to choose the right model and deploy it securely with BentoML.

DeepSeek's latest models, R1-Zero and R1, represent a pivotal shift in AI reasoning systems. Last week, DeepSeek published their new R1-Zero and R1 "reasoner" systems, which are competitive with OpenAI's o1 system on ARC-AGI-1. R1-Zero, R1, and o1 (low compute) all score around 15-20%, in contrast to GPT-4o's 5%, the pinnacle of years of pure LLM scaling.

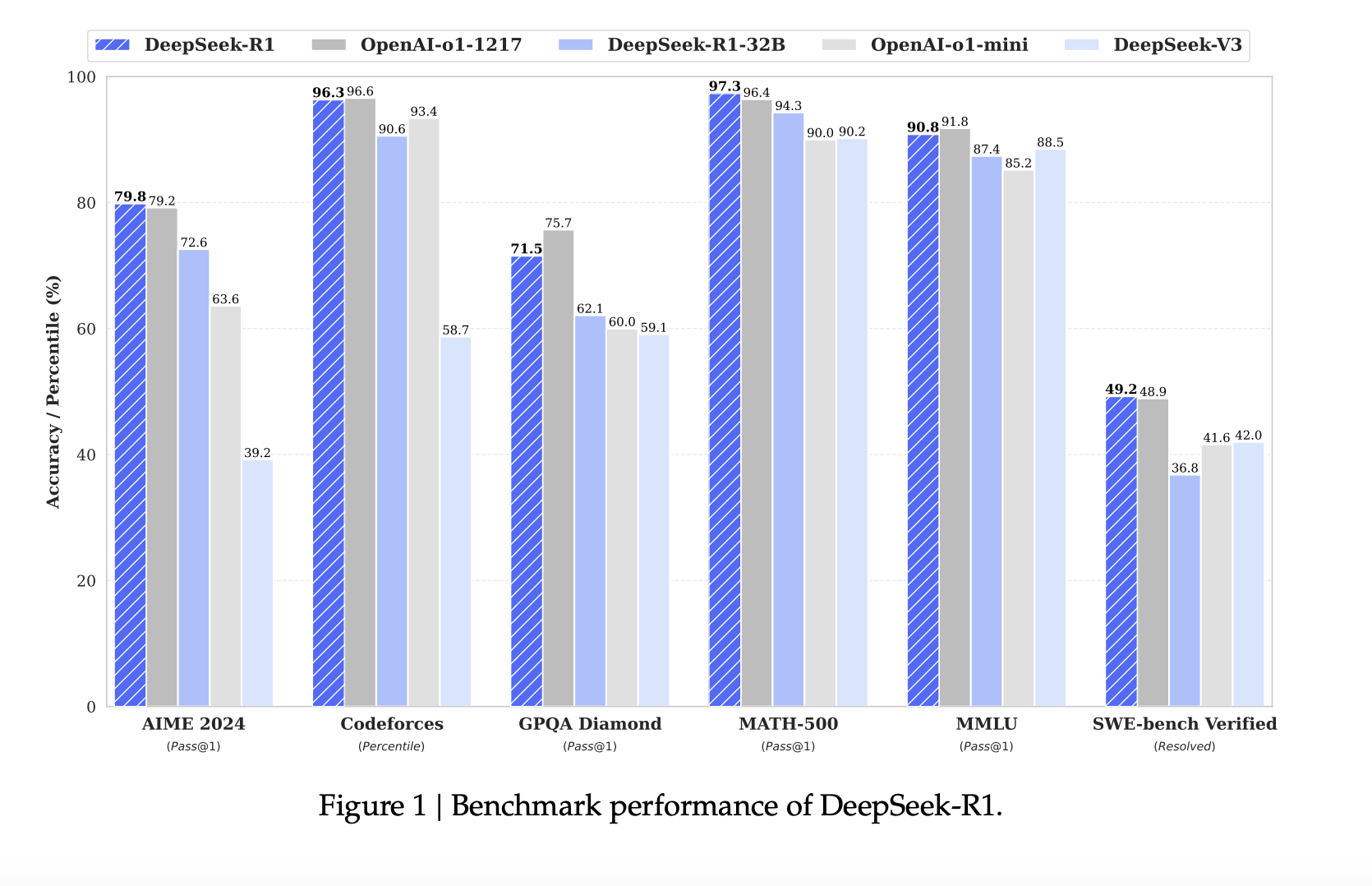

We introduce our first-generation reasoning models, DeepSeek-R1-Zero and DeepSeek-R1. DeepSeek-R1-Zero, a model trained via large-scale reinforcement learning (RL) without supervised fine-tuning (SFT) as a preliminary step, demonstrated remarkable performance on reasoning.

DeepSeek's R1 and R1-Zero highlight a pragmatic approach to RL-based language model training. By focusing on simple, verifiable reward signals and avoiding overly complex reward models, they sidestepped the high compute and instability issues often seen in RL for large LMs.

Chinese AI startup DeepSeek has released two new AI models that they say match OpenAI's o1 in performance. Along with their main models, DeepSeek-R1 and DeepSeek-R1-Zero, they've also launched six smaller open-source versions, with some performing as well as OpenAI's o1-mini.
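To make the idea of "simple, verifiable reward signals" concrete, here is a minimal sketch of a rule-based reward in Python. It is an illustration under stated assumptions, not DeepSeek's actual implementation: the `<think>`/`<answer>` tag layout, the exact-match answer check, the function names, and the `format_weight` value are all hypothetical choices made for this example.

```python
import re

# Assumed output layout (hypothetical): reasoning inside <think>...</think>,
# followed by the final answer inside <answer>...</answer>.
RESPONSE_PATTERN = re.compile(
    r"^<think>(.+?)</think>\s*<answer>(.+?)</answer>\s*$",
    re.DOTALL,
)


def format_reward(completion: str) -> float:
    """Return 1.0 if the completion follows the assumed tag layout, else 0.0."""
    return 1.0 if RESPONSE_PATTERN.match(completion.strip()) else 0.0


def accuracy_reward(completion: str, reference: str) -> float:
    """Return 1.0 if the extracted answer exactly matches the reference, else 0.0.

    Exact string match is an assumption for illustration; a real checker
    might normalize math expressions or run unit tests on generated code.
    """
    match = RESPONSE_PATTERN.match(completion.strip())
    if match is None:
        return 0.0
    return 1.0 if match.group(2).strip() == reference.strip() else 0.0


def total_reward(completion: str, reference: str, format_weight: float = 0.5) -> float:
    """Combine both signals; the weighting is a hypothetical choice."""
    return accuracy_reward(completion, reference) + format_weight * format_reward(completion)


if __name__ == "__main__":
    good = "<think>2 + 2 equals 4.</think><answer>4</answer>"
    print(total_reward(good, "4"))
```

Both checks are deterministic rules rather than a learned reward model, which is the property the paragraph above credits with avoiding extra compute and instability.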

DeepSeek-R1 and DeepSeek-R1-Zero: Redefining AI Reasoning and Developer
