DeepEval: The LLM Evaluation Framework (Aitoolnet)

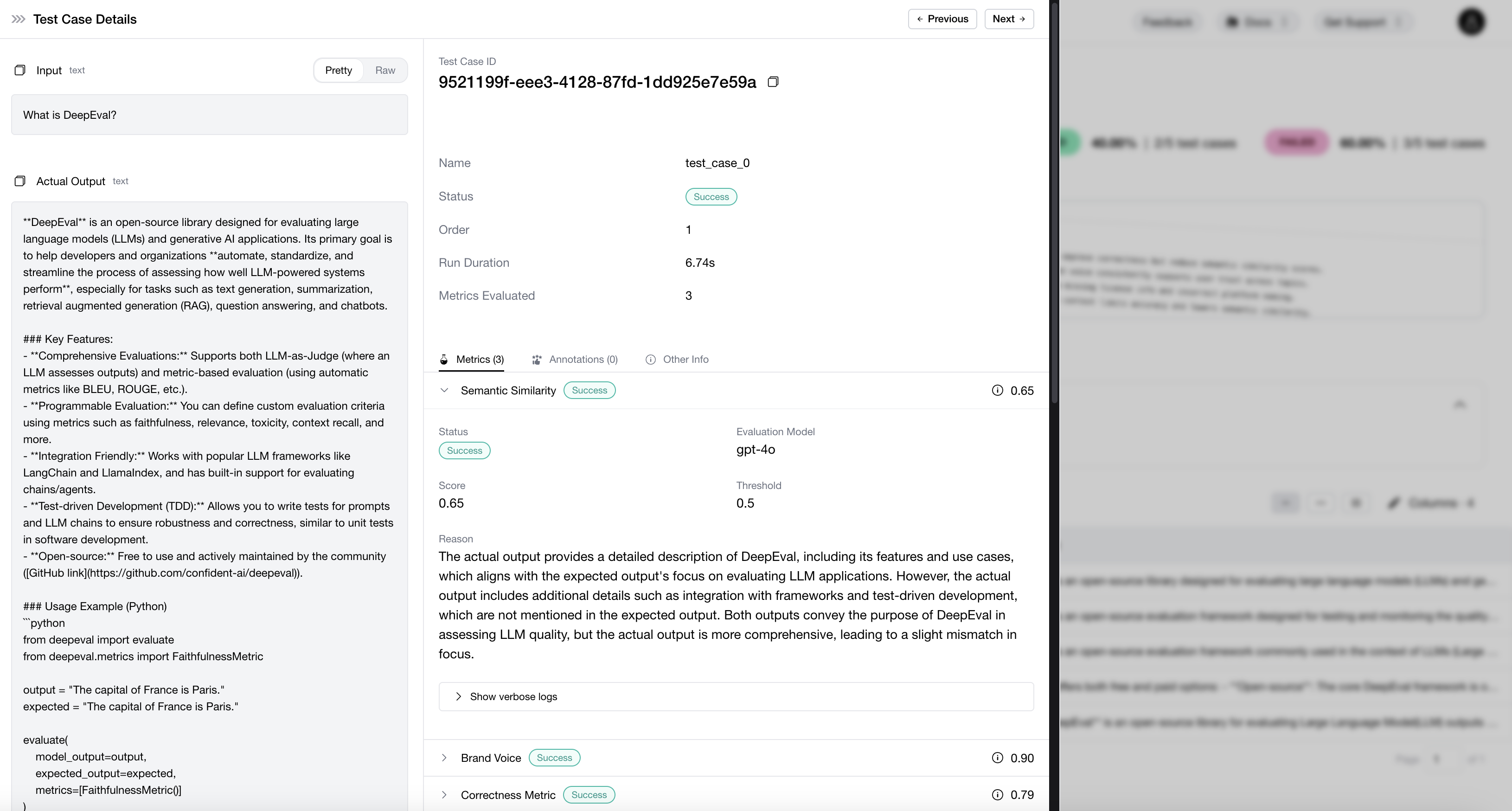

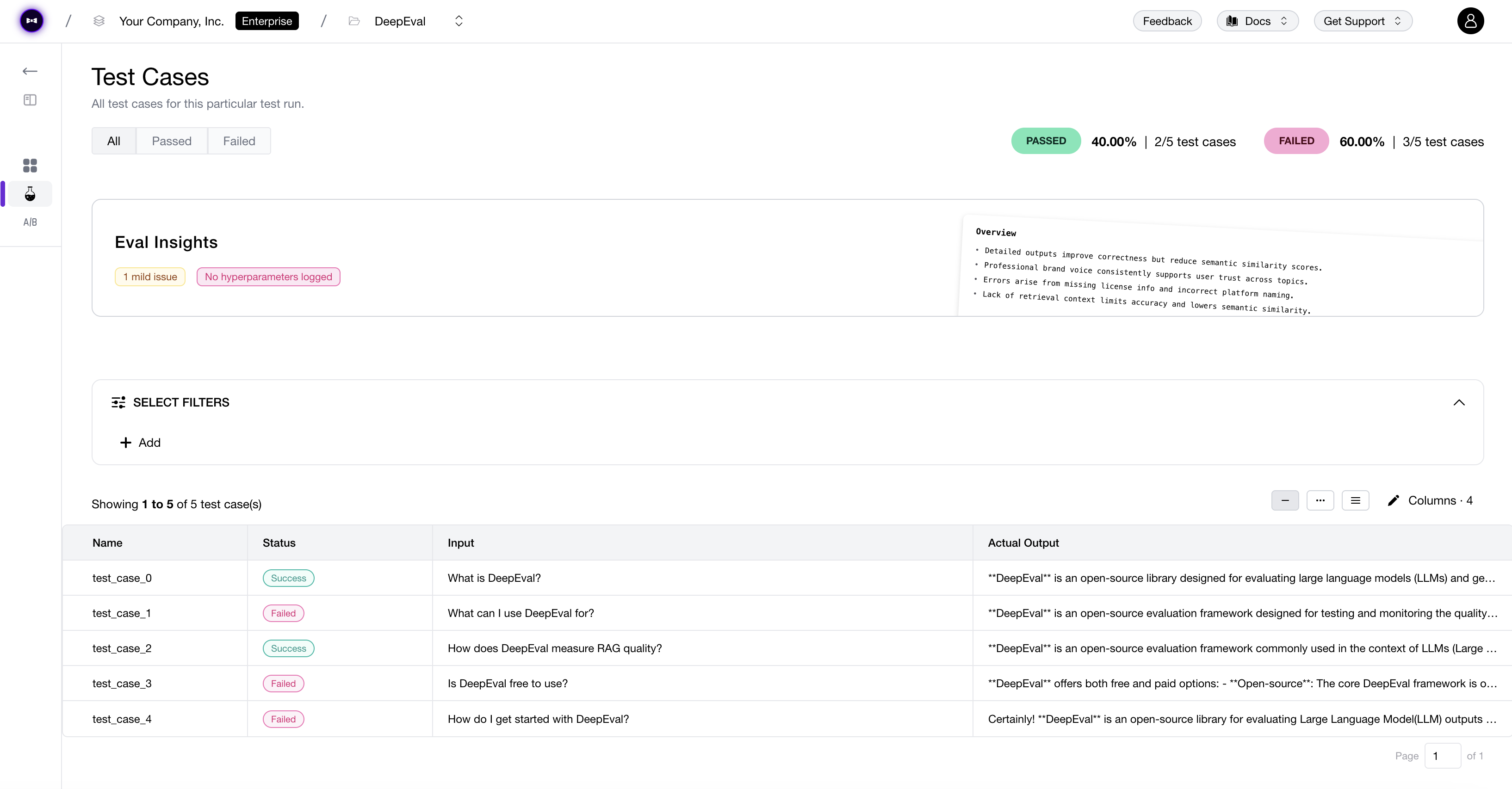

DeepEval bridges the gap between experimental prompt engineering and production-grade software engineering. It provides the tools necessary to quantify LLM performance, automate quality assurance, and maintain high standards as your AI applications scale. Confident AI, built by the authors of DeepEval, is a cloud LLM evaluation platform that lets you use DeepEval for team-wide, collaborative AI testing.

DeepEval is a simple-to-use, open-source LLM evaluation framework for evaluating large language model systems. It is similar to pytest but specialized for unit testing LLM apps. It comes with 50 research-backed metrics, mainly LLM-as-a-judge evaluators that use a language model to assess quality, tone, safety, and more. Every metric is powered by DeepEval, the open-source evaluation framework used by teams at OpenAI, Google, and Microsoft. Unlike traditional unit testing frameworks, DeepEval is tailored specifically for LLMs, making it easier to test AI responses against …
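The "pytest for LLM apps" idea can be sketched in plain Python: a test case holds an input and the model's output, a metric turns that pair into a score, and the test fails when the score falls below a threshold. The sketch below is a minimal illustration under stated assumptions, not DeepEval's API. `Case`, `ThresholdMetric`, `keyword_overlap_judge`, and `check` are hypothetical names, and the toy keyword judge stands in for a real LLM-as-a-judge call.

```python
from dataclasses import dataclass
from typing import Callable, List


# Hypothetical stand-ins for illustration; these are NOT DeepEval classes.
@dataclass
class Case:
    input: str
    actual_output: str


@dataclass
class ThresholdMetric:
    name: str
    judge: Callable[[Case], float]  # returns a score in [0, 1]
    threshold: float = 0.5


def keyword_overlap_judge(case: Case) -> float:
    """Toy judge: fraction of the question's longer words found in the answer.

    A real LLM-as-a-judge metric would call a language model here instead.
    """
    words = {w.strip("?.,!").lower() for w in case.input.split()}
    words = {w for w in words if len(w) > 4}  # ignore short filler words
    if not words:
        return 0.0
    answer = case.actual_output.lower()
    return sum(w in answer for w in words) / len(words)


def check(case: Case, metrics: List[ThresholdMetric]) -> None:
    """Raise AssertionError if any metric scores below its threshold."""
    for metric in metrics:
        score = metric.judge(case)
        assert score >= metric.threshold, (
            f"{metric.name} scored {score:.2f}, below {metric.threshold}"
        )


def test_refund_answer_is_relevant():
    # Written as a pytest-style test function; pytest would collect it by name.
    case = Case(
        input="What is your refund policy?",
        actual_output="Our refund policy allows returns within 30 days.",
    )
    check(case, [ThresholdMetric("relevance", keyword_overlap_judge, 0.7)])
```

Saved as a `test_*.py` file, this runs under plain `pytest`. Swapping the toy judge for a model-backed scorer leaves the test function unchanged, which is the main appeal of the threshold pattern.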

DeepEval is a comprehensive LLM evaluation framework used by leading AI companies including OpenAI, Google, Adobe, and Walmart. It provides a native pytest integration that fits directly into CI/CD workflows, enabling unit testing for LLMs with over 50 research-backed metrics.

This document provides a comprehensive guide to enabling, using, configuring, and extending DeepEval within the Litmus framework for evaluating LLM responses. What is DeepEval? DeepEval is a Python library specifically designed for evaluating the quality of responses generated by LLMs. Instead of manually checking every output, DeepEval lets you define metrics and run automated tests against your LLM's responses, which saves a great deal of time and effort.

In this tutorial, you will learn how to set up DeepEval and create a relevance test similar to the pytest approach. Then, you will test the LLM outputs using the G-Eval metric and run MMLU benchmarking on the Qwen 2.5 model.
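Benchmarks such as MMLU are multiple-choice: the model sees a question with lettered options, and the score is the fraction of questions where its chosen letter matches the answer key. The sketch below shows that scoring loop only and is not DeepEval's benchmark API. The three questions are made-up items in an MMLU-like shape, and `always_b` is a hypothetical stand-in for a call to a real model such as Qwen 2.5.

```python
from typing import Callable, Dict, List

LETTERS = "ABCD"

# Hypothetical items in an MMLU-like shape; not taken from the real dataset.
QUESTIONS: List[Dict] = [
    {"question": "2 + 2 = ?",
     "choices": ["3", "4", "5", "6"], "answer": "B"},
    {"question": "Which planet is known as the Red Planet?",
     "choices": ["Venus", "Mars", "Jupiter", "Saturn"], "answer": "B"},
    {"question": "What is the chemical formula for water?",
     "choices": ["H2O", "CO2", "NaCl", "O2"], "answer": "A"},
]


def format_prompt(item: Dict) -> str:
    """Render one question with lettered choices and an answer cue."""
    lines = [item["question"]]
    lines += [f"{letter}. {choice}"
              for letter, choice in zip(LETTERS, item["choices"])]
    lines.append("Answer:")
    return "\n".join(lines)


def extract_letter(raw: str) -> str:
    """Take the first character of the reply as the chosen letter.

    Returns '' when the reply does not start with A, B, C, or D.
    """
    first = raw.strip()[:1].upper()
    return first if first in LETTERS else ""


def accuracy(model: Callable[[str], str], items: List[Dict]) -> float:
    """Exact-match accuracy of the model's letter against the answer key."""
    correct = sum(
        extract_letter(model(format_prompt(item))) == item["answer"]
        for item in items
    )
    return correct / len(items)


def always_b(prompt: str) -> str:
    # Hypothetical stand-in for a real model call.
    return "B"


if __name__ == "__main__":
    print(f"accuracy: {accuracy(always_b, QUESTIONS):.2f}")
```

Taking only the first character of the reply is a deliberately strict parsing choice. A model that answers "The answer is B" would score zero under it, so real benchmark harnesses usually constrain the output format or parse more carefully.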

