Deep Autoencoder With Encoding Decoding Layers Represented In Amber

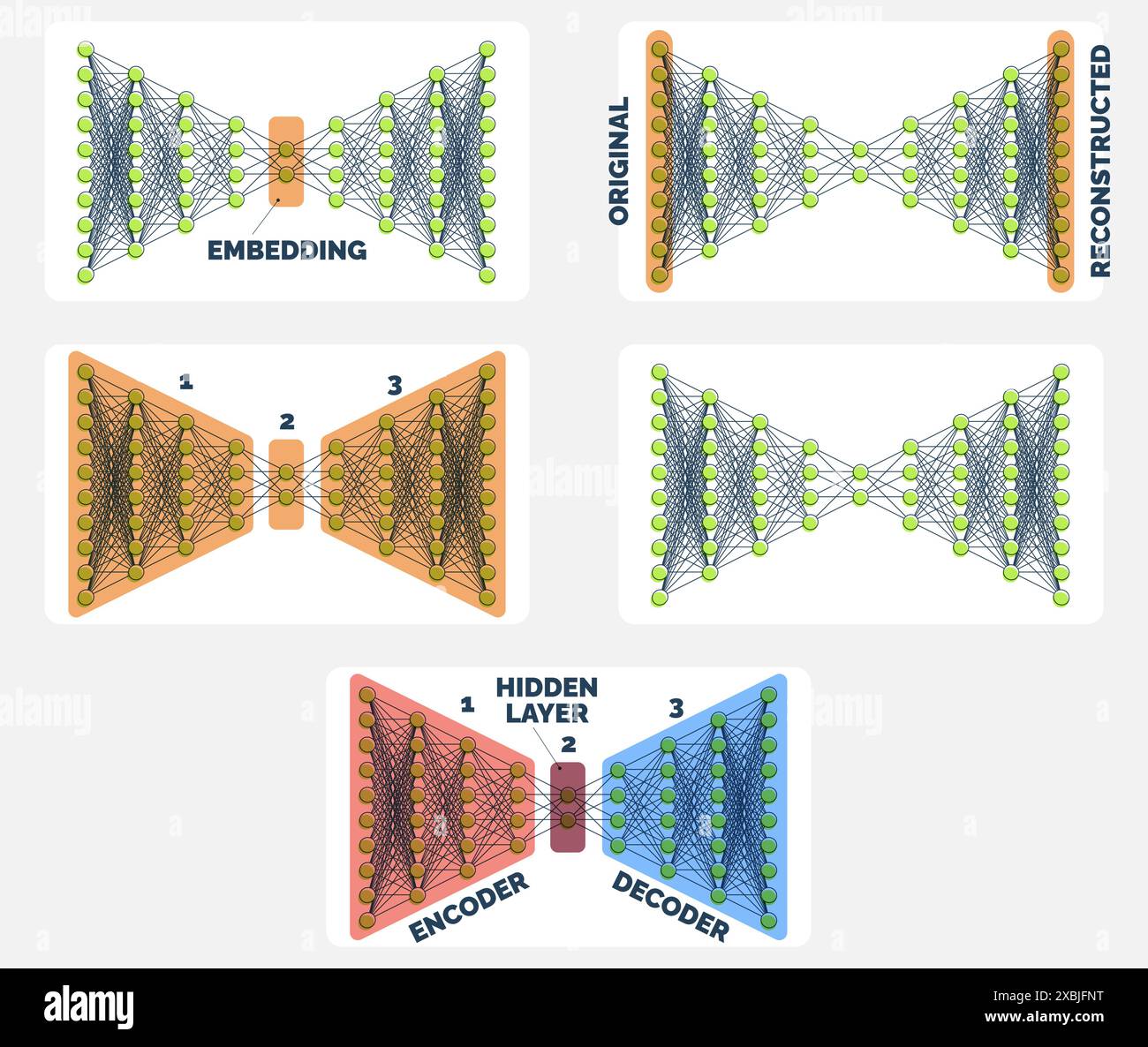

Deep autoencoders, which use multiple layers of encoding and decoding, are better able than shallow ones to capture hierarchical features of the data. Stacking layers in this way lets the models handle tasks that require detailed feature extraction. One thesis explores the idea that features extracted from deep neural networks (DNNs) through layered weight analysis are knowledge components and are transferable.

A deep autoencoder is composed of two symmetrical deep belief networks: typically four or five shallow layers form the encoding half of the net, and a second set of four or five layers makes up the decoding half. Constraining an autoencoder helps it learn meaningful, compact features from the input data, which leads to more efficient representations. After training, only the encoder part is used to encode similar data for future tasks. Various techniques can be used to impose such constraints. In one application, a deep autoencoder with numerous layers is first built to extract features from images and reduce their dimensionality; the five lightweight classifiers are then each integrated with that autoencoder model.
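The mirrored structure described above can be sketched as a small helper that derives the decoder's layer widths from the encoder's. This is only an illustration: the function name `symmetric_layer_sizes` and the specific widths (784, 1000, 500, 250, 30) are assumptions chosen for the example, not values taken from the text.

```python
def symmetric_layer_sizes(input_dim, encoder_dims):
    """Return (encoder_sizes, decoder_sizes) for a mirrored autoencoder.

    encoder_dims lists the widths of the encoding layers, ending at the
    bottleneck. The decoder uses the same widths in reverse order and
    ends back at input_dim.
    """
    if not encoder_dims:
        raise ValueError("encoder_dims must contain at least the bottleneck width")
    encoder_sizes = [input_dim] + list(encoder_dims)
    decoder_sizes = encoder_sizes[::-1]
    return encoder_sizes, decoder_sizes


# Hypothetical widths: a 784-value input squeezed through four encoding layers.
encoder_sizes, decoder_sizes = symmetric_layer_sizes(784, [1000, 500, 250, 30])
print("encoder:", " -> ".join(map(str, encoder_sizes)))
print("decoder:", " -> ".join(map(str, decoder_sizes)))
```

Once such a network is trained, only the layers listed in `encoder_sizes` would be needed to encode new data; the decoder half exists to supply the reconstruction signal during training.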

In a simple example, an autoencoder is defined with two dense layers: an encoder, which compresses the images into a 64-dimensional latent vector, and a decoder, which reconstructs the original image from the latent space. At a high level, autoencoders are a type of artificial neural network used primarily for unsupervised learning. Their main goal is to learn a compressed, or “encoded,” representation of data. Discussions of deep autoencoders focus on their architecture, including the encoding and decoding processes, and highlight their applications in image search and data compression, particularly in modeling topics across document collections. In one framework, two baseline models are included: a vanilla AE (vanillix) and a variational autoencoder (VAE, named varix), both with fully connected encoder and decoder layers and a bottleneck.
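To make the two-dense-layer design concrete, here is a minimal forward-pass sketch using only Python's standard library. It is not the implementation any of the quoted sources use, and nothing here is trained: the weights are random, the 28x28 input size, the ReLU and sigmoid activations, and all helper names (`make_dense`, `dense`, `encode`, `decode`) are assumptions made for illustration. Only the 64-dimensional latent size comes from the text.

```python
import math
import random


def make_dense(n_in, n_out, rng):
    """Create random weights and zero biases for one fully connected layer."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(x, layer, activation):
    """Apply one dense layer: activation(W @ x + b)."""
    weights, biases = layer
    return [activation(sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, biases)]


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


INPUT_DIM = 28 * 28  # assumption: a flattened 28x28 grayscale image
LATENT_DIM = 64      # latent size named in the text

rng = random.Random(0)
encoder = make_dense(INPUT_DIM, LATENT_DIM, rng)  # dense layer 1
decoder = make_dense(LATENT_DIM, INPUT_DIM, rng)  # dense layer 2


def encode(image):
    """Compress a flattened image into the latent vector."""
    return dense(image, encoder, relu)


def decode(latent):
    """Map a latent vector back to pixel space, with values in [0, 1]."""
    return dense(latent, decoder, sigmoid)


# A random stand-in for an image with pixel values in [0, 1).
image = [rng.random() for _ in range(INPUT_DIM)]
latent = encode(image)
reconstruction = decode(latent)

# Reconstruction error that training would try to minimize.
mse = sum((a - b) ** 2 for a, b in zip(image, reconstruction)) / INPUT_DIM
print(len(latent), len(reconstruction))
```

With untrained weights the reconstruction is meaningless; in practice the two layers would be fitted by minimizing a reconstruction loss such as `mse` over a dataset, usually with a deep learning library rather than hand-written loops.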


