Decoding Vision Language Models: A Comprehensive Examination | Only AI

A team of researchers from Hugging Face and Sorbonne Université has conducted in-depth studies on vision language models (VLMs), aiming to better understand the critical factors that affect their performance. A related survey offers a comprehensive synthesis of recent advancements in VLMs, examining 115 peer-reviewed studies across five core components: fine-tuning methods, prompt engineering, adapter-based tuning, pre-trained model architectures, and benchmark datasets.

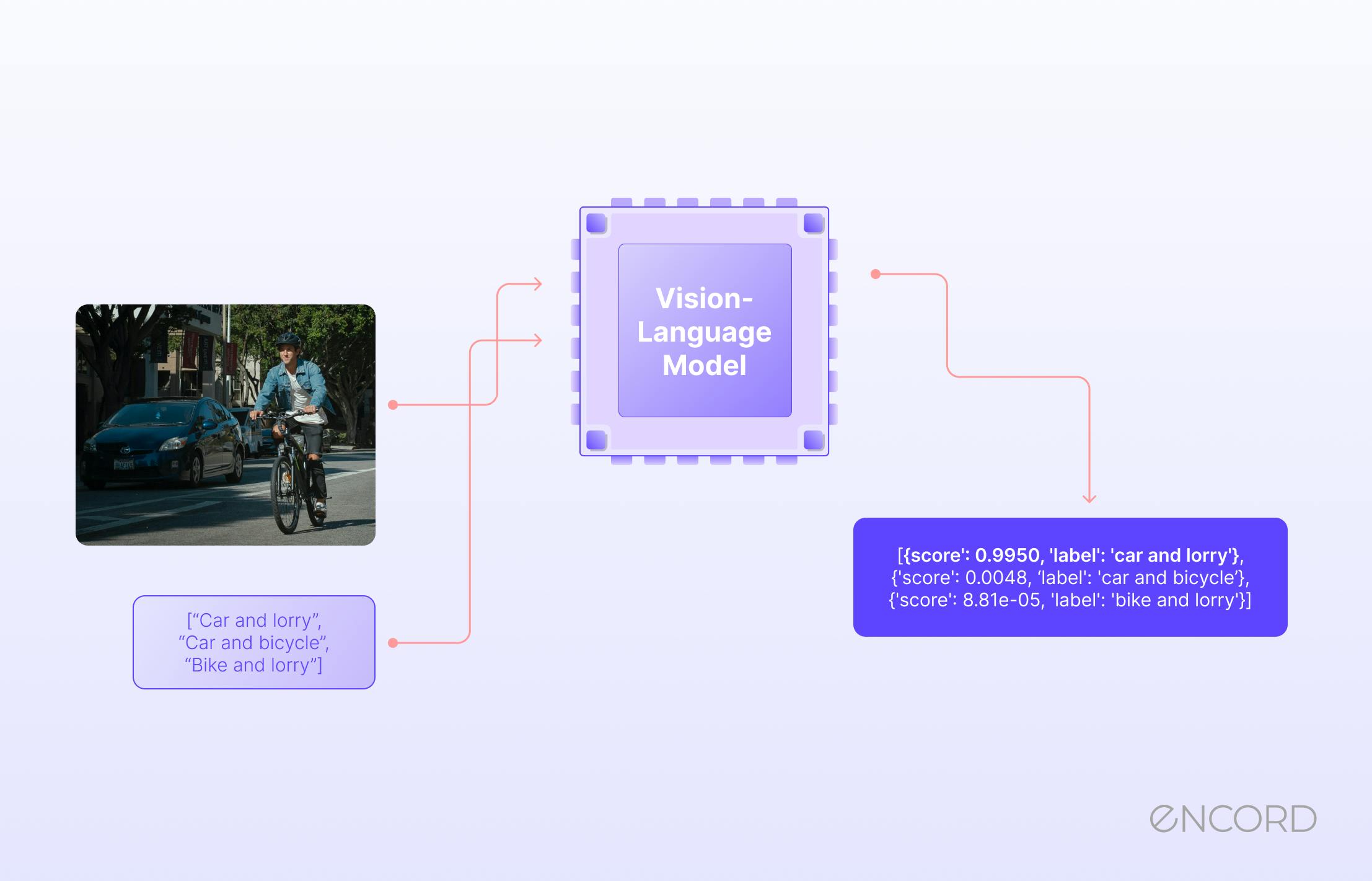

Vision Language Models: How They Work and Overcoming Key Challenges | Encord

One foundational review presents a comprehensive synthesis of recent advancements in vision language action models, systematically organized across five thematic pillars that structure the landscape of this rapidly evolving field. The developer's guide "Decoding Vision Language Models" defines a vision language model (VLM) as a multimodal AI system that jointly processes image and text inputs to generate text outputs. More broadly, VLMs are AI systems that combine computer vision and natural language processing to understand and generate language grounded in visual information. The guide highlights their impact on combining vision and language technologies, covering essential capabilities such as object detection and image segmentation, notable models such as CLIP, and various training methodologies.
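To make "jointly processes image and text inputs to generate text outputs" concrete, here is a minimal NumPy sketch of one widely used design: a vision encoder turns image patches into vectors, a projector maps them into the language model's embedding space, and a causal decoder reads image tokens and text tokens as one sequence to predict the next text token. Everything here is a hypothetical stand-in: the sizes, the random weights, the single attention layer, and the function names `patchify`, `vlm_next_token_logits`, and `generate`. Real VLMs use pretrained vision transformers and deep multi-layer decoders, so treat this as an illustration of the data flow, not an implementation of any particular model.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy sizes, chosen only for illustration.
PATCH = 4      # side length of each square image patch, in pixels
CHANNELS = 3   # RGB input
D_VIS = 32     # width of the stand-in vision encoder
D_MODEL = 64   # width of the stand-in language model
VOCAB = 100    # size of the toy text vocabulary

# Random weights stand in for pretrained components.
W_vis = rng.normal(0, 0.02, (PATCH * PATCH * CHANNELS, D_VIS))  # vision encoder
W_proj = rng.normal(0, 0.02, (D_VIS, D_MODEL))                  # vision-to-text projector
tok_emb = rng.normal(0, 0.02, (VOCAB, D_MODEL))                 # text token embeddings
W_q, W_k, W_v = (rng.normal(0, 0.02, (D_MODEL, D_MODEL)) for _ in range(3))
W_out = rng.normal(0, 0.02, (D_MODEL, VOCAB))                   # next-token head


def patchify(image: np.ndarray, patch: int = PATCH) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping patches."""
    h, w, c = image.shape
    if h % patch or w % patch:
        raise ValueError("image height and width must be divisible by patch")
    grid = image.reshape(h // patch, patch, w // patch, patch, c)
    grid = grid.transpose(0, 2, 1, 3, 4)        # (rows, cols, patch, patch, C)
    return grid.reshape(-1, patch * patch * c)  # (num_patches, patch*patch*C)


def causal_self_attention(x: np.ndarray) -> np.ndarray:
    """One single-head attention layer with a causal mask and a residual."""
    q, k, v = x @ W_q, x @ W_k, x @ W_v
    scores = q @ k.T / np.sqrt(x.shape[-1])
    n = scores.shape[0]
    future = np.triu(np.ones((n, n), dtype=bool), k=1)  # positions after each query
    scores = np.where(future, -np.inf, scores)
    scores -= scores.max(axis=-1, keepdims=True)        # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return x + weights @ v


def vlm_next_token_logits(image: np.ndarray, token_ids: np.ndarray) -> np.ndarray:
    """Score every vocabulary entry as the next token after image + text."""
    vis_tokens = np.tanh(patchify(image) @ W_vis) @ W_proj  # image -> LM space
    txt_tokens = tok_emb[token_ids]                         # text -> LM space
    seq = np.concatenate([vis_tokens, txt_tokens], axis=0)  # one joint sequence
    hidden = causal_self_attention(seq)
    return hidden[-1] @ W_out                               # logits, shape (VOCAB,)


def generate(image: np.ndarray, prompt_ids: list[int], max_new_tokens: int = 5) -> list[int]:
    """Greedy decoding: append the highest-scoring token one step at a time."""
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        logits = vlm_next_token_logits(image, np.array(ids))
        ids.append(int(np.argmax(logits)))
    return ids[len(prompt_ids):]


image = rng.random((16, 16, CHANNELS))
print(patchify(image).shape)       # (16, 48): a 4x4 grid of 4x4x3 patches
print(generate(image, [1, 2, 3]))  # five token ids; meaningless with random weights
```

The image tokens are placed before the text tokens, so under the causal mask every text position can attend to the whole image while still generating left to right. Placing the image first is one common choice; other designs inject visual features through cross-attention instead of concatenation.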

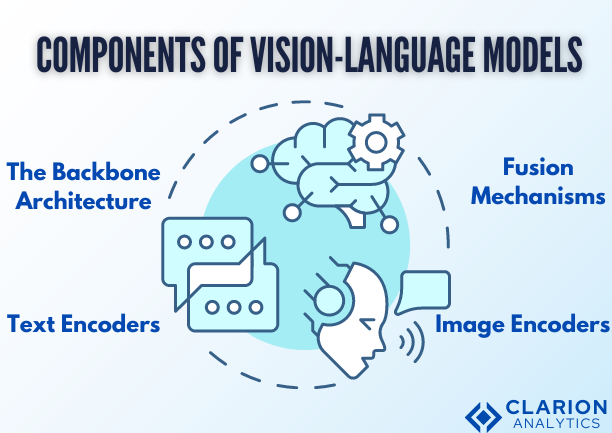

Decoding Vision Language Models: A Developer's Guide

VLMs are challenging for the field of deep learning interpretability given their billions of parameters and recurrence-based reasoning. One introductory chapter on the topic presents the foundational concepts underlying these models, emphasizing their ability to learn multimodal representations through novel neural network architectures and pre-training on large-scale data. Another paper proposes learning multi-grained vision language alignments with a unified pre-training framework that learns multi-grained alignment and multi-grained localization simultaneously. One curated collection compiles papers, models, and GitHub repositories covering state-of-the-art VLMs, listed from newest to oldest and updated as new models and benchmarks are released.
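Pre-training for aligned multimodal representations is described only abstractly above. A common concrete objective is the image-text contrastive loss popularized by CLIP: within a batch of matched pairs, each image should score its own caption higher than every other caption, and vice versa. The NumPy sketch below implements that symmetric InfoNCE loss. It covers only image-level alignment, not the multi-grained alignment and localization framework quoted above. The function names are hypothetical, and the fixed temperature of 0.07 is an assumption made for simplicity (CLIP learns this value during training).

```python
import numpy as np


def l2_normalize(x: np.ndarray, eps: float = 1e-8) -> np.ndarray:
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / (np.linalg.norm(x, axis=-1, keepdims=True) + eps)


def log_softmax(logits: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable log-softmax along one axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))


def clip_style_loss(img_emb: np.ndarray, txt_emb: np.ndarray, temperature: float = 0.07) -> float:
    """Symmetric contrastive (InfoNCE) loss over N matched image-text pairs.

    Row i of img_emb and row i of txt_emb describe the same example; every
    other row in the batch serves as a negative for it.
    """
    img, txt = l2_normalize(img_emb), l2_normalize(txt_emb)
    logits = img @ txt.T / temperature   # (N, N) scaled cosine similarities
    diag = np.arange(logits.shape[0])    # indices of the true pairs
    loss_i2t = -log_softmax(logits, axis=1)[diag, diag].mean()  # each image picks its caption
    loss_t2i = -log_softmax(logits, axis=0)[diag, diag].mean()  # each caption picks its image
    return float(0.5 * (loss_i2t + loss_t2i))


rng = np.random.default_rng(0)
txt = rng.normal(size=(8, 16))               # hypothetical text embeddings
img = txt + 0.01 * rng.normal(size=(8, 16))  # image embeddings close to their captions
# Shifting the images by one row mismatches every pair, so the loss rises.
print(clip_style_loss(img, txt) < clip_style_loss(np.roll(img, 1, axis=0), txt))  # True
```

Averaging the image-to-text and text-to-image directions keeps the objective symmetric, so neither encoder is favored; when all embeddings are identical the loss equals log N, which is a handy sanity check.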
